DASP: Self-supervised Nighttime Monocular Depth Estimation with Domain Adaptation of Spatiotemporal Priors

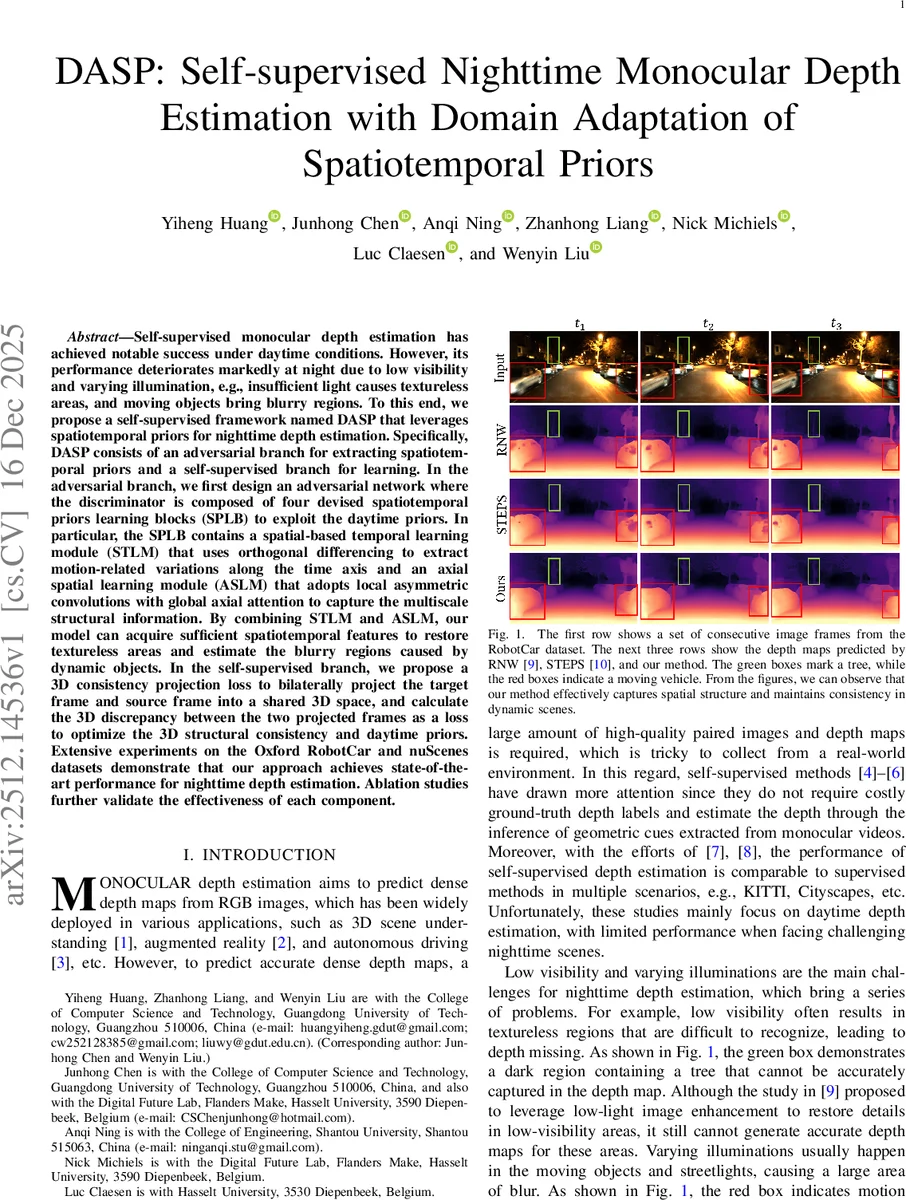

Self-supervised monocular depth estimation has achieved notable success under daytime conditions. However, its performance deteriorates markedly at night due to low visibility and varying illumination, e.g., insufficient light causes textureless areas, and moving objects bring blurry regions. To this end, we propose a self-supervised framework named DASP that leverages spatiotemporal priors for nighttime depth estimation. Specifically, DASP consists of an adversarial branch for extracting spatiotemporal priors and a self-supervised branch for learning. In the adversarial branch, we first design an adversarial network where the discriminator is composed of four devised spatiotemporal priors learning blocks (SPLB) to exploit the daytime priors. In particular, the SPLB contains a spatial-based temporal learning module (STLM) that uses orthogonal differencing to extract motion-related variations along the time axis and an axial spatial learning module (ASLM) that adopts local asymmetric convolutions with global axial attention to capture the multiscale structural information. By combining STLM and ASLM, our model can acquire sufficient spatiotemporal features to restore textureless areas and estimate the blurry regions caused by dynamic objects. In the self-supervised branch, we propose a 3D consistency projection loss to bilaterally project the target frame and source frame into a shared 3D space, and calculate the 3D discrepancy between the two projected frames as a loss to optimize the 3D structural consistency and daytime priors. Extensive experiments on the Oxford RobotCar and nuScenes datasets demonstrate that our approach achieves state-of-the-art performance for nighttime depth estimation. Ablation studies further validate the effectiveness of each component.

💡 Research Summary

The paper addresses monocular depth estimation at night, where low illumination, textureless regions, and motion-induced blur severely degrade self-supervised methods that work well in daytime. The authors propose DASP (Domain Adaptation of Spatiotemporal Priors), a two-branch framework that transfers daytime depth priors to nighttime scenes and enforces stronger geometric consistency.

In the adversarial branch, a GAN is built where the generator is a standard Monodepth2 network that predicts depth and pose for nighttime frames. A pretrained daytime Monodepth2 model provides fixed depth priors for daytime image pairs. The discriminator consists of four Spatiotemporal Priors Learning Blocks (SPLB). Each SPLB contains two complementary modules:

- Spatial-based Temporal Learning Module (STLM): The input sequence is split along horizontal and vertical axes, compressed, and reshaped into temporal streams. Orthogonal differencing (a 3×3 convolutional difference between consecutive frames) extracts motion-related changes. These differences are refined through axis-specific asymmetric convolutions (1×3 and 3×1), global axial attention, and sigmoid gating, yielding multi-scale temporal features that capture dynamic cues.

- Axial Spatial Learning Module (ASLM): This module applies local asymmetric convolutions together with multi-head axial self-attention along height and width to capture long-range structural patterns (e.g., streetlights, building edges).

The combination of STLM and ASLM produces a rich spatiotemporal representation that helps the discriminator distinguish daytime from nighttime depth maps, thereby encouraging the generator to produce nighttime depths that mimic daytime structural statistics.
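Both modules rely on asymmetric 1×3 and 3×1 convolutions. As a rough illustration of why such kernels are attractive, the sketch below shows that chaining a 1×3 and a 3×1 filter reproduces a separable 3×3 filter while using 6 weights instead of 9. This is a toy example in plain Python, not the authors' module: the kernel values, the `conv2d_valid` helper, and the 4×4 feature map are all hypothetical, and the learned layers in DASP may combine the two directions differently (for example in parallel branches or with nonlinearities in between).

```python
def conv2d_valid(image, kernel):
    """2D cross-correlation without padding ('valid' mode) on nested lists."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + a][j + b] * kernel[a][b]
                for a in range(kh) for b in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]

# Hypothetical 4x4 feature map and hand-picked kernel weights.
feature = [
    [1.0, 2.0, 0.0, 1.0],
    [0.0, 1.0, 3.0, 1.0],
    [2.0, 1.0, 0.0, 0.0],
    [1.0, 0.0, 1.0, 2.0],
]
row_kernel = [[1.0, 0.0, -1.0]]      # 1x3: responds to horizontal changes
col_kernel = [[1.0], [2.0], [1.0]]   # 3x1: aggregates along the vertical axis

# Asymmetric path: 1x3 followed by 3x1 (3 + 3 = 6 weights).
asym = conv2d_valid(conv2d_valid(feature, row_kernel), col_kernel)

# Equivalent full 3x3 kernel (9 weights): outer product of the two 1D kernels.
full_kernel = [[c[0] * r for r in row_kernel[0]] for c in col_kernel]
full = conv2d_valid(feature, full_kernel)

# The two paths agree on every output position.
same = all(
    abs(x - y) < 1e-9
    for row_a, row_f in zip(asym, full)
    for x, y in zip(row_a, row_f)
)
print(same)
```

The equivalence only holds for separable kernels and purely linear stacking; it is meant to convey the parameter saving, not to describe what the trained SPLB filters compute.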

The self-supervised branch augments the classic photometric reprojection loss with three additional terms: a disparity smoothness loss, a geometric consistency loss, and a novel 3D consistency projection loss (L_proj). L_proj projects each pixel from both the target and source frames into a shared 3D coordinate system using the predicted depth and relative pose, then penalizes the Euclidean distance between the two 3D points. This bidirectional 3D alignment directly enforces depth-pose consistency and is especially effective in occluded or blurred regions where traditional 2D reprojection is unstable. The overall self-supervised loss is a weighted sum:

L_self = λ₁·L_p⊙M_s + λ₂·L_ds + λ₃·L_geom + λ₄·L_proj

with λ₁‑λ₄ set to 0.7, 0.1, 0.5, and 0.5 respectively. M_s is a self‑discovered mask derived from geometric inconsistency, which down‑weights dynamic pixels.
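The 3D projection term and the weighted sum can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: it assumes a pinhole camera, takes the source camera frame as the shared 3D space, assumes pixel correspondences are already given, and treats the masked photometric term as a precomputed scalar. All function names, intrinsics, and numeric values are hypothetical; only the weights 0.7, 0.1, 0.5, and 0.5 come from the text above.

```python
import math

def backproject(u, v, depth, fx, fy, cx, cy):
    """Lift pixel (u, v) with a depth value to a 3D point (pinhole model)."""
    return ((u - cx) / fx * depth, (v - cy) / fy * depth, depth)

def transform(point, rotation, translation):
    """Apply a rigid transform: 3x3 rotation (row-major) plus translation."""
    return tuple(
        sum(rotation[r][c] * point[c] for c in range(3)) + translation[r]
        for r in range(3)
    )

def projection_consistency(target_pts, source_pts, rotation, translation):
    """Mean Euclidean distance between target points moved into the source
    frame and their corresponding source points."""
    dists = [
        math.dist(transform(p, rotation, translation), q)
        for p, q in zip(target_pts, source_pts)
    ]
    return sum(dists) / len(dists)

def total_self_loss(l_p_masked, l_ds, l_geom, l_proj,
                    weights=(0.7, 0.1, 0.5, 0.5)):
    """Weighted sum of the four terms; l_p_masked is assumed to be the
    photometric loss after the mask M_s has been applied and averaged."""
    w1, w2, w3, w4 = weights
    return w1 * l_p_masked + w2 * l_ds + w3 * l_geom + w4 * l_proj

# Hypothetical intrinsics and a pose where the camera moved 1 m forward.
fx = fy = 100.0
cx = cy = 50.0
identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
translation = (0.0, 0.0, -1.0)

# Two matched pixels: the first is consistent, the second has a small error.
target_pts = [backproject(50, 50, 10.0, fx, fy, cx, cy),
              backproject(60, 50, 10.0, fx, fy, cx, cy)]
source_pts = [backproject(50, 50, 9.0, fx, fy, cx, cy),
              backproject(60, 50, 9.0, fx, fy, cx, cy)]

l_proj = projection_consistency(target_pts, source_pts, identity, translation)
loss = total_self_loss(l_p_masked=0.2, l_ds=0.05, l_geom=0.1, l_proj=l_proj)
print(l_proj, loss)
```

Measuring the discrepancy in 3D rather than in image space means the penalty depends on both the predicted depth and the predicted pose at once, which is the coupling the summary attributes to L_proj.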

Training proceeds by feeding paired daytime and nighttime sequences simultaneously. Daytime depth priors are fixed, while nighttime depth/pose networks are updated through both adversarial and self‑supervised objectives. The combined optimization forces the nighttime network to inherit daytime spatiotemporal patterns while respecting the photometric and geometric constraints of the nighttime video.

Extensive experiments on the Oxford RobotCar night split and the nuScenes night subset demonstrate that DASP outperforms prior state‑of‑the‑art methods (RNW, STEPS, ADDS, PromptMono, etc.) across all standard metrics (Max Depth Error, Abs Rel, Sq Rel, RMSE, RMSE‑log, δ<1.25). Notably, the method reduces depth error by up to 30 % and improves the accuracy of dynamic objects such as moving vehicles, which are traditionally problematic. Ablation studies confirm the contribution of each component: removing SPLB blocks degrades performance, omitting STLM harms motion handling, dropping ASLM weakens structural recovery, and excluding L_proj leads to large errors in blurred regions.
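For readers unfamiliar with the error and accuracy metrics named above, the following sketch computes Abs Rel, Sq Rel, RMSE, RMSE-log, and δ<1.25 using their commonly used definitions in the depth estimation literature. The function name and the sample depth values are hypothetical, and the evaluation protocol used in the paper (depth caps, median scaling, cropping) is not reproduced here.

```python
import math

def depth_metrics(pred, gt):
    """Standard monocular depth metrics over paired positive depth values."""
    n = len(gt)
    abs_rel = sum(abs(p - g) / g for p, g in zip(pred, gt)) / n
    sq_rel = sum((p - g) ** 2 / g for p, g in zip(pred, gt)) / n
    rmse = math.sqrt(sum((p - g) ** 2 for p, g in zip(pred, gt)) / n)
    rmse_log = math.sqrt(
        sum((math.log(p) - math.log(g)) ** 2 for p, g in zip(pred, gt)) / n
    )
    # Fraction of pixels whose ratio to ground truth is within 1.25.
    delta1 = sum(1 for p, g in zip(pred, gt) if max(p / g, g / p) < 1.25) / n
    return {
        "abs_rel": abs_rel,
        "sq_rel": sq_rel,
        "rmse": rmse,
        "rmse_log": rmse_log,
        "delta_1.25": delta1,
    }

# Hypothetical ground-truth and predicted depths in meters.
gt = [10.0, 20.0, 5.0, 8.0]
pred = [11.0, 18.0, 5.0, 12.0]
metrics = depth_metrics(pred, gt)
print(metrics)
```

Lower is better for the four error metrics, while δ<1.25 is an accuracy measure where higher is better.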

The paper acknowledges limitations: SPLB’s axial attention incurs non‑trivial computational cost, and the quality of daytime priors limits transferability when daytime data lack diversity. Future work is suggested on lightweight axial mechanisms, multi‑scale or high‑resolution depth outputs, and multimodal fusion with LiDAR or radar to further strengthen nighttime depth estimation.

In summary, DASP introduces a principled way to leverage daytime spatiotemporal priors through adversarial domain adaptation and a 3D consistency loss, achieving robust and accurate monocular depth estimation under challenging nighttime conditions.
