Vector Prism: Animating Vector Graphics by Stratifying Semantic Structure

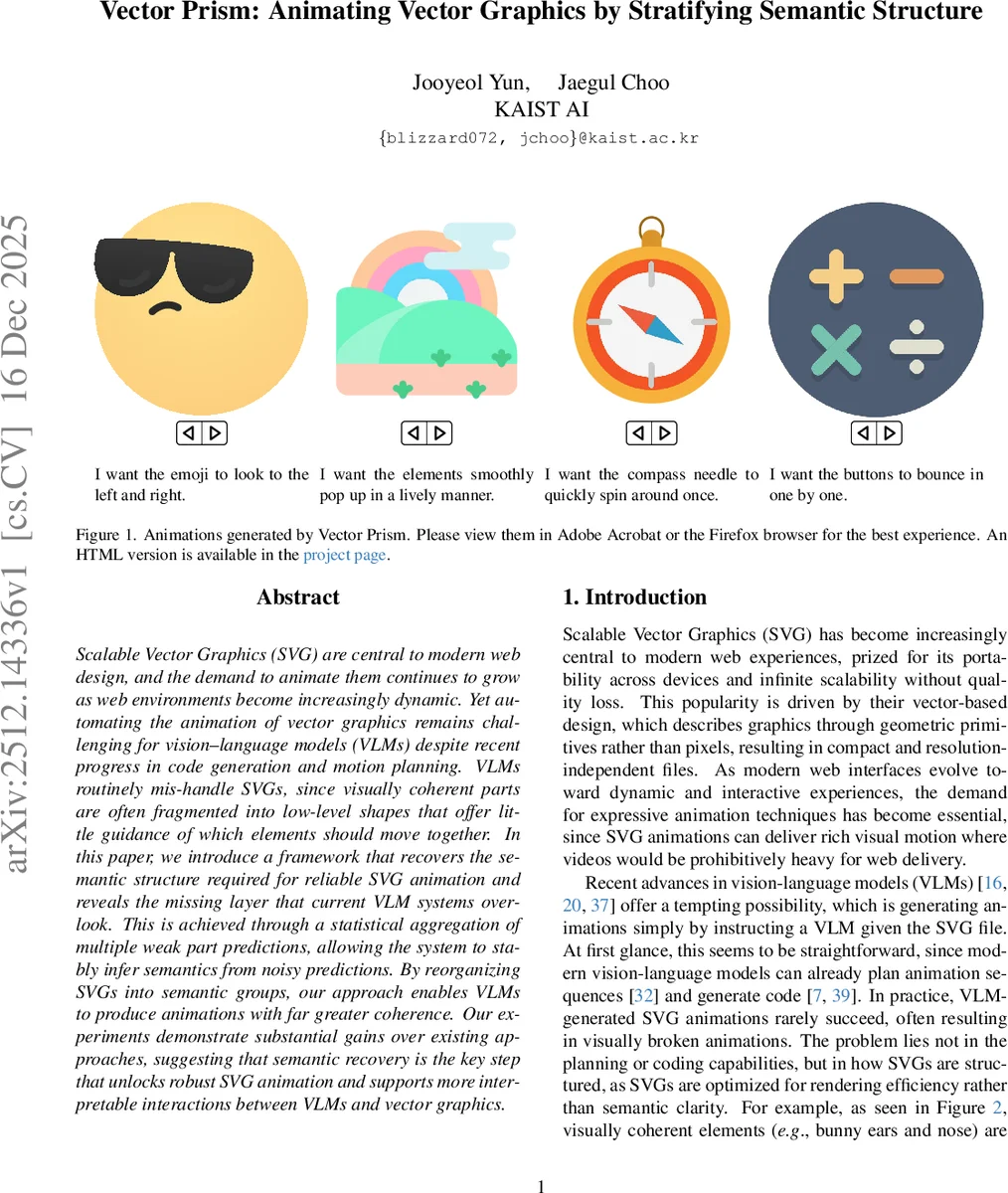

Scalable Vector Graphics (SVG) are central to modern web design, and the demand to animate them continues to grow as web environments become increasingly dynamic. Yet automating the animation of vector graphics remains challenging for vision-language models (VLMs) despite recent progress in code generation and motion planning. VLMs routinely mis-handle SVGs, since visually coherent parts are often fragmented into low-level shapes that offer little guidance of which elements should move together. In this paper, we introduce a framework that recovers the semantic structure required for reliable SVG animation and reveals the missing layer that current VLM systems overlook. This is achieved through a statistical aggregation of multiple weak part predictions, allowing the system to stably infer semantics from noisy predictions. By reorganizing SVGs into semantic groups, our approach enables VLMs to produce animations with far greater coherence. Our experiments demonstrate substantial gains over existing approaches, suggesting that semantic recovery is the key step that unlocks robust SVG animation and supports more interpretable interactions between VLMs and vector graphics.

💡 Research Summary

The paper tackles the long‑standing problem of automatically animating Scalable Vector Graphics (SVG) with modern vision‑language models (VLMs) and large language models (LLMs). While recent advances enable VLMs to generate code and plan motion, SVG files are inherently organized for rendering efficiency rather than semantic clarity. Low‑level primitives such as

Vector Prism introduces a four‑stage pipeline that inserts a missing “semantic layer” into SVGs, allowing VLMs to reason about meaningful parts. First, an animation planning stage renders the whole SVG to a raster image and asks a VLM to produce a high‑level plan that identifies which semantic components should move and how. Second, the semantic wrangling stage re‑labels each primitive. For every primitive, the system creates M different visualizations (highlight, bounding‑box overlay, zoom‑in crop, isolation on a blank canvas, etc.). The VLM is queried on each visualization, yielding a set of weak, noisy labels s_i(x). Because each visualization has a different reliability, a simple majority vote would be sub‑optimal.

To resolve this, the authors adopt a Dawid‑Skene statistical aggregation. They compute pairwise agreement probabilities A_ij between the label sets of two visualizations, which under a simple error model equals p_i p_j + (1‑p_i)(1‑p_j)/(k‑1), where p_i is the unknown accuracy of visualization i and k is the number of semantic categories. By subtracting the chance agreement (1/k) they obtain a centered agreement matrix B. The top eigenvector of B yields a scaled estimate of the reliability deviations δ_i = p_i – 1/k. From δ_i they recover p_i for each visualization. With these reliabilities, a Bayes decision rule assigns a weighted vote to each candidate label: w_i = log

Comments & Academic Discussion

Loading comments...

Leave a Comment