GLM-TTS Technical Report

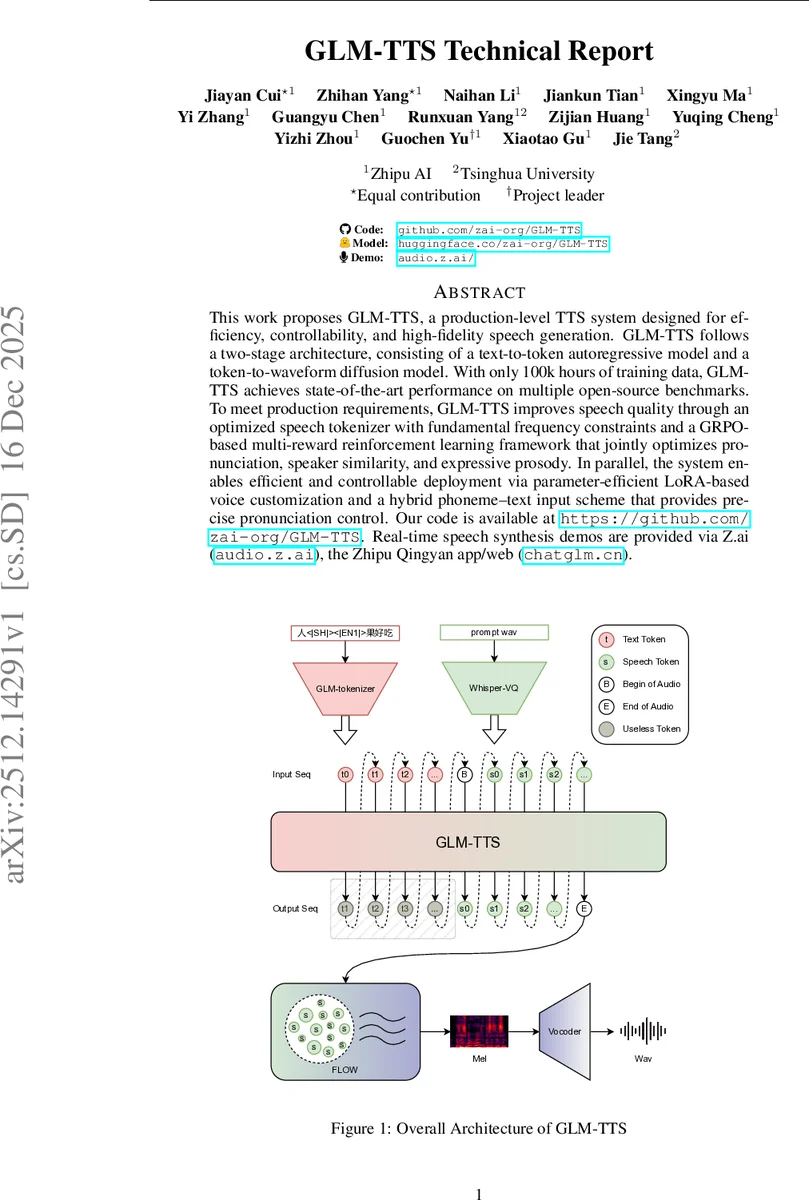

This work proposes GLM-TTS, a production-level TTS system designed for efficiency, controllability, and high-fidelity speech generation. GLM-TTS follows a two-stage architecture, consisting of a text-to-token autoregressive model and a token-to-waveform diffusion model. With only 100k hours of training data, GLM-TTS achieves state-of-the-art performance on multiple open-source benchmarks. To meet production requirements, GLM-TTS improves speech quality through an optimized speech tokenizer with fundamental frequency constraints and a GRPO-based multi-reward reinforcement learning framework that jointly optimizes pronunciation, speaker similarity, and expressive prosody. In parallel, the system enables efficient and controllable deployment via parameter-efficient LoRA-based voice customization and a hybrid phoneme-text input scheme that provides precise pronunciation control. Our code is available at https://github.com/zai-org/GLM-TTS. Real-time speech synthesis demos are provided via Z.ai (audio.z.ai), the Zhipu Qingyan app/web (chatglm.cn).

💡 Research Summary

GLM‑TTS is a production‑grade text‑to‑speech system that combines a text‑to‑token autoregressive model with a token‑to‑waveform diffusion model, forming a two‑stage generation pipeline. The authors claim state‑of‑the‑art performance on several open‑source benchmarks while using only 100 k hours of training data, far less than competing systems that often rely on millions of hours.

The data processing pipeline is extensive: raw audio is standardized to WAV, voice activity detection (VAD) extracts speech segments, a Mel‑Band RoFormer model separates background sounds, a custom denoiser further cleans the signal, and pyannote.audio performs speaker diarization. After amplitude normalization, segments from the same speaker are concatenated up to 40 seconds. High‑quality transcripts are obtained via dual ASR systems (Paraformer/SenseVoice for Chinese, Whisper/Reverb for English) and only samples with word‑error‑rate (WER) < 5 % are retained. Forced alignment and punctuation optimization refine the textual timing, and speaker embeddings plus speech tokens are extracted for downstream training. The entire pipeline runs on a gRPC‑based server‑worker architecture across multiple GPUs for scalability.

The text‑to‑token model builds on Whisper‑VQ but introduces several key upgrades: token generation rate is doubled from 12.5 Hz to 25 Hz, vocabulary size expands from 16 k to 32 k, a pitch‑estimation (PE) module is added to improve prosody alignment, and the causal constraint is removed in favor of a non‑causal convolutional backbone, eliminating sequential bottlenecks. Vocabulary pruning removes tokens composed of more than two Chinese characters, stabilizing the text‑to‑acoustic length ratio and easing training of the autoregressive decoder.

The token‑to‑waveform stage replaces the original Vocos GAN vocoder with Vocos2D, a 2‑D convolutional generator that processes frequency sub‑bands similarly to image processing. DiT‑style residual connections enhance high‑frequency detail reconstruction. Mixed training with high‑quality singing data broadens the model’s pitch range and robustness to complex acoustic conditions.

A major contribution is the multi‑reward reinforcement learning (RL) framework based on GRPO (Gradient‑Regularized Policy Optimization). Four rewards—character error rate (CER) for pronunciation, similarity (SIM) for timbre fidelity, an emotion reward for expressive naturalness, and a laughter reward for paralinguistic realism—are regularized hierarchically (individual → weighted fusion → overall). Dynamic resampling mitigates gradient vanishing by re‑sampling up to three times when batch rewards become homogeneous, while adaptive gradient clipping with high/low ε values prevents early reward hacking and later encourages exploration of low‑probability tokens. This design yields measurable gains in WER, SIM, and emotional expressivity compared with baseline models.

For voice customization, the authors adopt a LoRA‑based fine‑tuning strategy. By updating roughly 15 % of backbone parameters for about 100 epochs, they achieve performance comparable to full‑model fine‑tuning while requiring only one hour of single‑speaker audio. This reduces computational cost by ~80 % and improves stability, making premium voice cloning feasible in production without massive data collection.

Pronunciation control is addressed through “Phoneme‑In”, a hybrid phoneme‑plus‑text input scheme. Dedicated vocabularies for polyphonic characters and rare words are constructed. During training, a probabilistic replacement strategy converts a random subset of standard characters to phonemes (p ≈ 0.2, replacement ratio uniformly sampled up to 0.5), while polyphone/rare characters remain as text to preserve semantic context. At inference, a grapheme‑to‑phoneme (G2P) module generates a full phoneme list; the system then swaps only the ambiguous characters with their phoneme equivalents, delivering precise pronunciation without sacrificing natural prosody.

Experimental results show that GLM‑TTS attains a speaker similarity score of 76.1 and a character error rate of 1.03 % on the Seed‑TTS‑eval zh test set, outperforming several commercial baselines on the CV3‑eval‑emotion benchmark for happy, sad, and angry expressions. The LoRA‑based premium voice customization matches full‑parameter fine‑tuning while using far less data and compute.

In summary, GLM‑TTS integrates an optimized speech tokenizer, a robust two‑stage generation pipeline, a carefully engineered multi‑reward RL loop, efficient LoRA fine‑tuning, and a hybrid phoneme‑text interface to deliver high‑quality, low‑latency, and highly controllable speech synthesis suitable for real‑world deployment. Remaining challenges include extending multilingual and dialectal coverage, further reducing inference latency, and validating the RL reward generalization across unseen domains.

Comments & Academic Discussion

Loading comments...

Leave a Comment