When many noisy genes optimize information flow

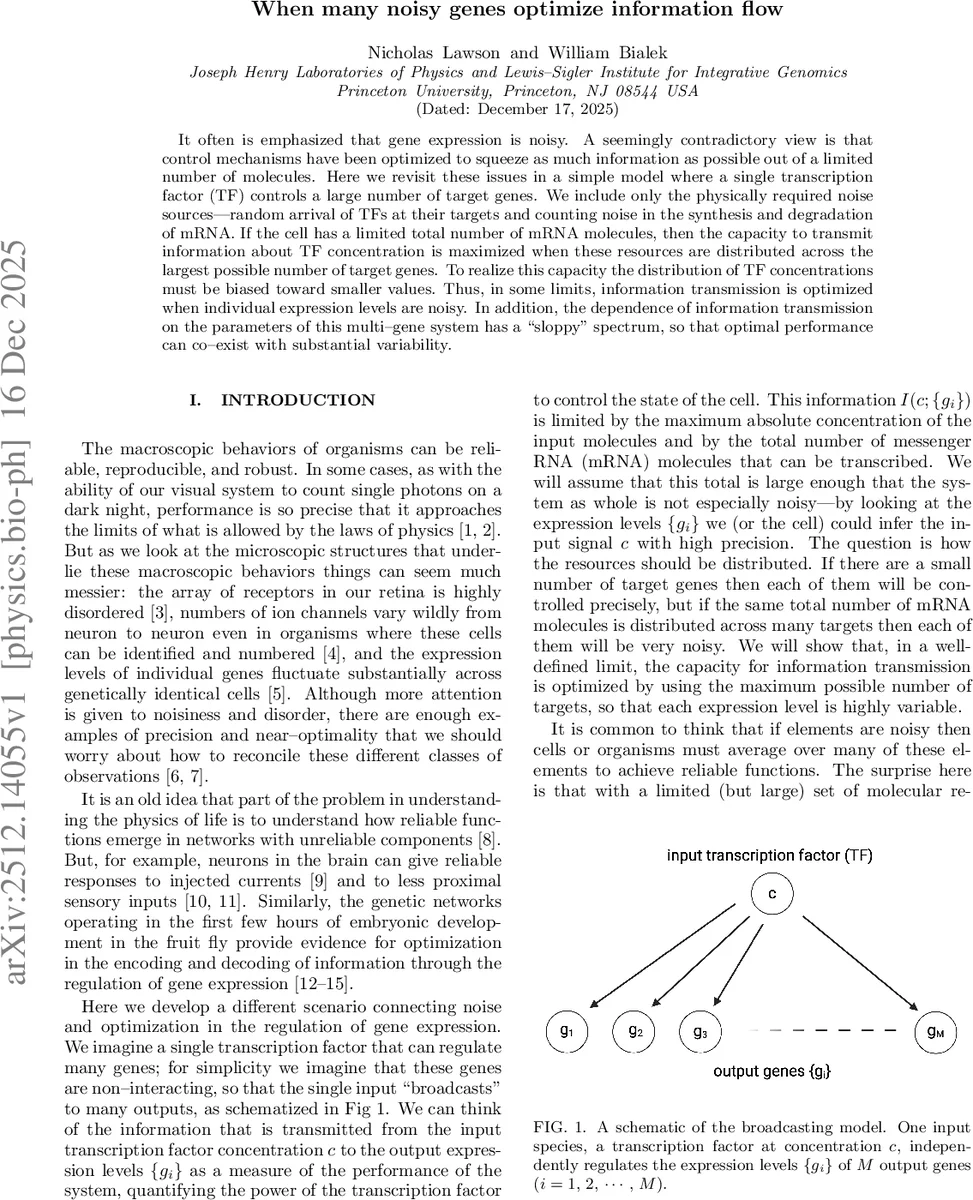

It is often emphasized that gene expression is noisy. A seemingly contradictory view is that control mechanisms have been optimized to squeeze as much information as possible out of a limited number of molecules. Here we revisit these issues in a simple model where a single transcription factor (TF) controls a large number of target genes. We include only the physically required noise sources: random arrival of TFs at their targets and counting noise in the synthesis and degradation of mRNA. If the cell has a limited total number of mRNA molecules, then the capacity to transmit information about TF concentration is maximized when these resources are distributed across the largest possible number of target genes. To realize this capacity the distribution of TF concentrations must be biased toward smaller values. Thus, in some limits, information transmission is optimized when individual expression levels are noisy. In addition, the dependence of information transmission on the parameters of this multi-gene system has a “sloppy” spectrum, so that optimal performance can co-exist with substantial variability.

💡 Research Summary

This paper, titled “When many noisy genes optimize information flow,” by Nicholas Lawson and William Bialek, presents a theoretical exploration of the interplay between noise and optimization in gene regulatory networks. It addresses an apparent paradox: while macroscopic biological functions can be precise, their underlying molecular components, such as gene expression levels, are often highly variable and noisy. The authors reconcile these observations using an information-theoretic approach applied to a minimal model.

The core model is a “broadcasting” network where a single input—the concentration of a transcription factor (TF), denoted as c—regulates the expression levels {g_i} of M independent target genes. The analysis incorporates only two physically unavoidable noise sources: the random arrival of TF molecules at their binding sites (Berg-Purcell noise) and the Poisson counting noise inherent in the synthesis and degradation of mRNA molecules. A key constraint is that the cell has a finite total budget of mRNA molecules, N_tot = M * N_max, where N_max is the maximum number of mRNA molecules per fully expressed gene.
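
A minimal bookkeeping sketch of how the fixed budget enters each gene's noise. It assumes, for illustration only, that expression is normalized as g = n/N_max for n mRNA copies, that counting noise is Poisson (variance equal to the mean copy number, as for constant synthesis with first-order degradation), and that Berg-Purcell noise can be written as var(c) ≈ c/(D·a·τ). The parameter `dat` is a hypothetical stand-in for D·a·τ, and `noise_terms` is not code from the paper.

```python
def noise_terms(g_mean, slope, c, n_tot, m, dat):
    """Return (counting, binding) variance contributions for normalized expression g.

    Illustrative assumptions, not taken from the paper:
      * g = n / n_max with n ~ Poisson, so the counting variance is g_mean / n_max;
      * Berg-Purcell input noise var(c) ~ c / dat, where dat stands in for D*a*tau,
        propagated to g through the slope dg/dc;
      * the budget is split evenly, n_max = n_tot / m.
    """
    n_max = n_tot / m                  # mRNA copies per fully expressed gene
    counting = g_mean / n_max          # Poisson counting noise
    binding = slope**2 * c / dat       # input noise seen through dg/dc
    return counting, binding


# Same gene and same total budget, spread over 10 versus 100 target genes.
few = noise_terms(g_mean=0.5, slope=0.25, c=1.0, n_tot=10_000, m=10, dat=100.0)
many = noise_terms(g_mean=0.5, slope=0.25, c=1.0, n_tot=10_000, m=100, dat=100.0)
print(few, many)  # counting term is 10x larger for m=100; binding term is unchanged
```

Spreading a fixed N_tot over more genes makes each one noisier through the counting term alone, which is the per-gene cost weighed in the resource-allocation step.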

The investigation proceeds through three layers of optimization. First, for a given network with fixed parameters and noise characteristics, the distribution of input TF concentrations, P_TF(c), is optimized to maximize the mutual information I(c; {g_i}) between input and outputs. In the small-noise limit, this optimal distribution is proportional to the inverse of the local error in estimating c from the outputs, P_TF*(c) ∝ 1/σ_c(c), so concentrations that can be inferred precisely are used more often. The resulting maximum information defines the network’s information capacity.
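
In the small-noise approximation, normalizing P_TF*(c) ∝ 1/σ_c(c) gives P_TF*(c) = 1/(Z σ_c(c)) with Z = ∫ dc/σ_c(c), and the capacity is I* = log2(Z/√(2πe)) bits. The sketch below evaluates both for any user-supplied σ_c(c) on an assumed finite range [c_lo, c_hi]. The function name, the trapezoid-rule grid, and the example error function are illustrative choices, not the authors' code or their σ_c(c).

```python
import math


def optimal_input(sigma_c, c_lo, c_hi, steps=4000):
    """Small-noise optimum for a given error function sigma_c(c).

    Returns (Z, capacity_bits, p_star) where Z = integral of dc / sigma_c(c)
    over [c_lo, c_hi] (trapezoid rule), capacity_bits = log2(Z / sqrt(2*pi*e)),
    and p_star(c) = 1 / (Z * sigma_c(c)) is the optimal input distribution.
    """
    dc = (c_hi - c_lo) / steps
    z = 0.0
    for k in range(steps + 1):
        weight = 0.5 if k in (0, steps) else 1.0   # trapezoid end-point weights
        z += weight * dc / sigma_c(c_lo + k * dc)
    capacity_bits = math.log2(z / math.sqrt(2 * math.pi * math.e))

    def p_star(c):
        return 1.0 / (z * sigma_c(c))

    return z, capacity_bits, p_star


# Illustrative error that grows with concentration (an assumption, not the paper's sigma_c).
z, bits, p_star = optimal_input(lambda c: 0.01 + 0.05 * c, c_lo=0.0, c_hi=1.0)
print(f"capacity ~ {bits:.2f} bits; P*(0.1) = {p_star(0.1):.2f}, P*(0.9) = {p_star(0.9):.2f}")
```

When σ_c(c) grows with c, P_TF*(c) automatically leans toward low concentrations, the kind of bias the abstract describes. The formula is only trustworthy while σ_c(c) is small compared with the scale over which P_TF*(c) varies.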

Second, the parameters of the network itself, specifically the input-output function of each gene, are optimized. These functions are modeled as Hill functions, ḡ_i(c) = c^{n_i}/(c^{n_i} + K_i^{n_i}), characterized by a sensitivity (Hill coefficient) n_i and a half-maximal activation constant K_i. The two noise sources combine into a total output variance, which defines a natural concentration scale c_0 at which the two contributions are comparable.
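
The sketch below combines the Hill input-output function with the two noise sources to obtain σ_c(c) for a set of genes. It assumes, as illustrative choices not confirmed by the summary, that noise is independent across genes (so the precisions 1/σ_c² add), that counting noise on normalized expression is ḡ/N_max, and that Berg-Purcell noise is c/dat, with `dat` a hypothetical stand-in for D·a·τ. The helper `noise_ratio` compares the two sources at a given c; a ratio near 1 marks the kind of crossover that the scale c_0 refers to.

```python
import math


def hill(c, K, n):
    """Mean normalized expression: g(c) = c^n / (c^n + K^n)."""
    return c**n / (c**n + K**n)


def hill_slope(c, K, n):
    """dg/dc = n c^(n-1) K^n / (c^n + K^n)^2."""
    return n * c ** (n - 1) * K**n / (c**n + K**n) ** 2


def noise_ratio(c, K, n, n_max, dat):
    """Counting noise over binding noise, both expressed as a variance in c (c > 0)."""
    counting = hill(c, K, n) / (n_max * hill_slope(c, K, n) ** 2)
    binding = c / dat
    return counting / binding


def sigma_c(c, genes, n_max, dat):
    """Error in inferring c > 0 from all genes [(K, n), ...], assuming independent noise."""
    precision = 0.0
    for K, n in genes:
        g, s = hill(c, K, n), hill_slope(c, K, n)
        var_g = g / n_max + s**2 * c / dat   # Poisson counting + Berg-Purcell
        precision += s**2 / var_g            # per-gene Fisher information adds
    return 1.0 / math.sqrt(precision)


# Hypothetical three-gene network with thresholds spread around c = 1.
print(sigma_c(1.0, [(0.5, 2), (1.0, 2), (2.0, 2)], n_max=100, dat=50.0))
```

For n = 1 the ratio reduces to dat·(c + K)³/(N_max·K²), which grows monotonically with c, so in that case counting noise becomes relatively more important at higher concentrations.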

The third and most significant optimization concerns the allocation of the fixed total resource N_tot. The authors explore the trade-off between having a small number of highly expressed, precise target genes (small M, large N_max) versus a large number of weakly expressed, noisy genes (large M, small N_max). Counterintuitively, they find that in the limit of a large but finite N_tot, the information capacity is maximized by distributing resources across the largest possible number of target genes. This means the optimal strategy is to use many noisy channels rather than a few reliable ones. To achieve this capacity, the optimal input distribution P_TF*(c) must be biased towards lower TF concentrations.

The rationale is that although each gene becomes noisier as N_max decreases, a large number M of such genes, with input-output functions spread across the concentration range, covers that range more efficiently. Because the noise in different genes is independent, the precision of the combined estimate of c is the sum of per-gene contributions (dḡ_i/dc)²/σ_{g_i}², and this aggregate gain outweighs the increased noise in each channel.
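
A deliberately simplified way to see how many weak channels can beat a few strong ones: take M identical genes sharing N_tot, evaluate everything at a single concentration, and assume binding noise at different genes' sites is independent. The summed precision is then M·s²/(M·ḡ/N_tot + s²·c/dat) = s²/(ḡ/N_tot + s²·c/(M·dat)), so the counting term depends only on N_tot while the binding term averages down as 1/M. This toy (`total_precision` and `dat` are hypothetical) ignores how different K_i tile the concentration range, so it illustrates only the aggregation step, not the paper's full optimization.

```python
def total_precision(m, n_tot, g_mean, slope, c, dat):
    """Summed Fisher information about c from m identical genes sharing n_tot mRNAs.

    Each gene: var(g) = g_mean / n_max + slope^2 * c / dat, with n_max = n_tot / m.
    Independent noise across genes is assumed, so per-gene precisions add.
    """
    n_max = n_tot / m
    per_gene = slope**2 / (g_mean / n_max + slope**2 * c / dat)
    return m * per_gene


for m in (1, 10, 100, 1000):
    prec = total_precision(m, n_tot=10_000, g_mean=0.5, slope=0.25, c=1.0, dat=100.0)
    print(m, round(prec, 1))
```

In this limit the gain saturates at s²·N_tot/ḡ, a ceiling set entirely by the total mRNA budget.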

An additional crucial finding is the “sloppiness” of the system near this optimum. When M is large and information is near its maximum, the capacity becomes remarkably insensitive to the precise values of individual gene parameters (K_i, n_i). There exist broad, soft modes in parameter space where combinations of parameters can vary significantly without substantially degrading performance. This implies that optimal information transmission can coexist with substantial variability in the underlying regulatory mechanisms across cells or species.
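
Sloppiness is usually diagnosed from the eigenvalues of the Hessian of the objective (here, information) with respect to parameters such as log K_i and n_i: a few large eigenvalues mark stiff directions, and many small ones mark soft modes. The sketch below computes a finite-difference Hessian and its eigenvalues with plain cyclic Jacobi rotations. It is demonstrated on a hypothetical quadratic toy objective with one stiff and one soft direction, not on the paper's information function.

```python
import math


def hessian(f, theta, h=1e-3):
    """Central finite-difference Hessian of a scalar function f at theta."""
    n = len(theta)

    def shifted(i, di, j, dj):
        x = list(theta)
        x[i] += di
        x[j] += dj
        return f(x)

    H = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            H[i][j] = (shifted(i, h, j, h) - shifted(i, h, j, -h)
                       - shifted(i, -h, j, h) + shifted(i, -h, j, -h)) / (4 * h * h)
    return H


def symmetric_eigenvalues(a, sweeps=50, tol=1e-20):
    """Eigenvalues (ascending) of a symmetric matrix via cyclic Jacobi rotations."""
    a = [row[:] for row in a]
    n = len(a)
    for _ in range(sweeps):
        off = sum(a[i][j] ** 2 for i in range(n) for j in range(n) if i != j)
        if off < tol:
            break
        for p in range(n - 1):
            for q in range(p + 1, n):
                if a[p][q] == 0.0:
                    continue
                theta = (a[q][q] - a[p][p]) / (2 * a[p][q])
                t = math.copysign(1.0, theta) / (abs(theta) + math.sqrt(theta * theta + 1))
                c = 1 / math.sqrt(t * t + 1)
                s = t * c
                for k in range(n):   # A <- A J (columns p, q)
                    akp, akq = a[k][p], a[k][q]
                    a[k][p], a[k][q] = c * akp - s * akq, s * akp + c * akq
                for k in range(n):   # A <- J^T A (rows p, q)
                    apk, aqk = a[p][k], a[q][k]
                    a[p][k], a[q][k] = c * apk - s * aqk, s * apk + c * aqk
    return sorted(a[i][i] for i in range(n))


# Hypothetical toy objective: stiff along (1, 1), soft along (1, -1).
def toy_information(x, eps=1e-4):
    return -(x[0] + x[1]) ** 2 - eps * (x[0] - x[1]) ** 2


eigs = symmetric_eigenvalues(hessian(toy_information, [0.0, 0.0]))
print(eigs)  # approximately [-4.0, -0.0004]
```

The four-decade gap between the two eigenvalues is what a sloppy spectrum looks like: moving along the soft direction barely changes the objective.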

In conclusion, the paper provides a proof of principle that noise in gene expression is not merely a nuisance to be overcome but can emerge as a direct consequence of optimizing information flow under resource constraints. The simple broadcasting model demonstrates how biological systems might leverage numerous noisy components to maximize their informational capabilities, offering a novel perspective on the evolutionary design principles of genetic networks and the observed coexistence of robustness and variability in biology.
