SELECT: Detecting Label Errors in Real-world Scene Text Data

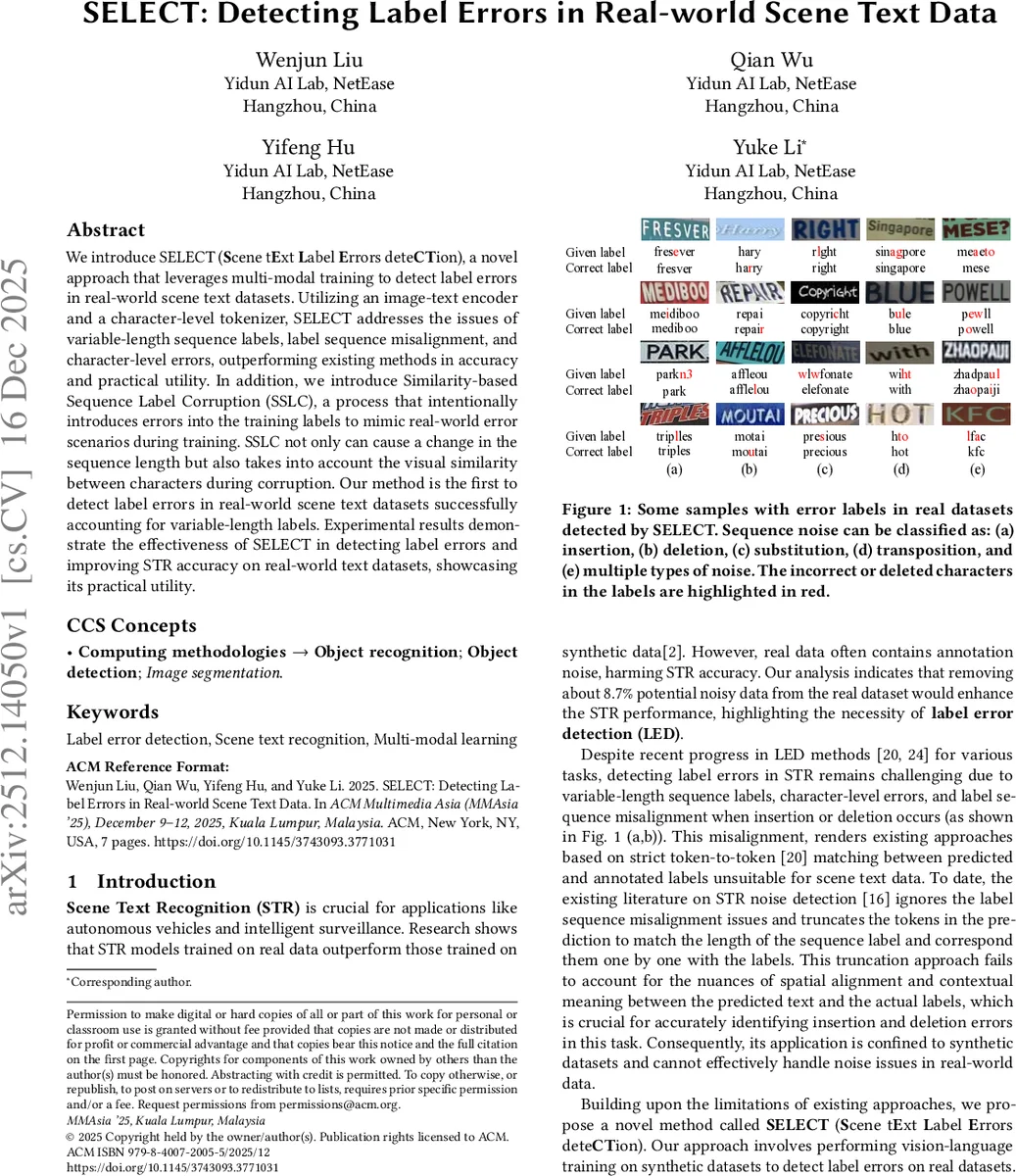

We introduce SELECT (Scene tExt Label Errors deteCTion), a novel approach that leverages multi-modal training to detect label errors in real-world scene text datasets. Utilizing an image-text encoder and a character-level tokenizer, SELECT addresses the issues of variable-length sequence labels, label sequence misalignment, and character-level errors, outperforming existing methods in accuracy and practical utility. In addition, we introduce Similarity-based Sequence Label Corruption (SSLC), a process that intentionally introduces errors into the training labels to mimic real-world error scenarios during training. SSLC not only can cause a change in the sequence length but also takes into account the visual similarity between characters during corruption. Our method is the first to detect label errors in real-world scene text datasets successfully accounting for variable-length labels. Experimental results demonstrate the effectiveness of SELECT in detecting label errors and improving STR accuracy on real-world text datasets, showcasing its practical utility.

💡 Research Summary

This paper introduces SELECT (Scene tExt Label Errors deteCTion), a novel framework designed to detect label errors in real-world scene text datasets. The core challenge addressed is that real-world STR datasets often contain noisy annotations (e.g., insertions, deletions, substitutions, transpositions), which degrade model performance. Existing label error detection methods are ill-suited for sequence text data as they rely on token-to-token alignment between predictions and labels, failing to handle variable-length sequences and misalignments caused by insertions/deletions.

SELECT innovatively reframes the problem as a multi-modal matching task. The model architecture consists of a visual encoder (Vision Transformer) and an image-text encoder inspired by BLIP. Instead of first recognizing text and then comparing it to the label, SELECT takes the raw image and its corresponding text label (tokenized at the character level) as direct input. The image-text encoder processes these multimodal inputs and outputs a binary prediction: whether the image and the text label are correctly matched (Matched) or not (Unmatched). This approach bypasses the need for explicit sequence alignment, inherently accommodating variable-length labels. The use of a character-level tokenizer is crucial for detecting fine-grained character errors (e.g., ‘i’ vs ’l’). An auxiliary STR decoder head is added for auxiliary learning, enhancing the model’s text localization and understanding capabilities.

A key component for training SELECT is the proposed SSLC (Similarity-based Sequence Label Corruption) technique. Since SELECT is trained on synthetic data (which has clean labels) to work on real data, SSLC generates realistic negative samples (Unmatched pairs) by corrupting the clean labels during training. SSLC employs four corruption operations: insertion, deletion, substitution, and transposition. Its most significant contribution is the “similarity-based noise transition matrix” used for the substitution operation. This matrix is built by calculating the visual similarity between characters based on their image features (extracted by a ResNet50 from rendered character images in various fonts and angles). It prioritizes substituting a character with a visually similar one (e.g., ‘o’->‘0’, ‘c’->‘e’), effectively simulating the confusion a human annotator might experience. This makes the model robust to the complex noise patterns found in real-world data.

Experiments were conducted on both synthetic and real-world datasets. On synthetic data with controlled noise, SELECT achieved a high error detection accuracy of 98.45%, significantly outperforming a baseline method adapted from Confident Learning. More importantly, on the real-world Real-L dataset, the practical utility of SELECT was demonstrated. By removing the top 8.7% of samples flagged as most likely erroneous by SELECT, and then retraining STR models (ABINet, PARSeq) on this “cleaned” dataset, the character accuracy improved by approximately 1.5 percentage points compared to training on the original noisy dataset. Ablation studies confirmed the importance of both the diverse corruption operations and the visual similarity matrix within SSLC.

In summary, this work presents a paradigm shift for label error detection in scene text by leveraging multi-modal matching. SELECT effectively solves the challenges of variable-length sequences and misalignment, while SSLC provides a sophisticated method for simulating real-world annotation noise during training. The experimental results validate both the accuracy of the detection method and its practical value in improving STR model performance through dataset cleaning.

Comments & Academic Discussion

Loading comments...

Leave a Comment