Robust Single-shot Structured Light 3D Imaging via Neural Feature Decoding

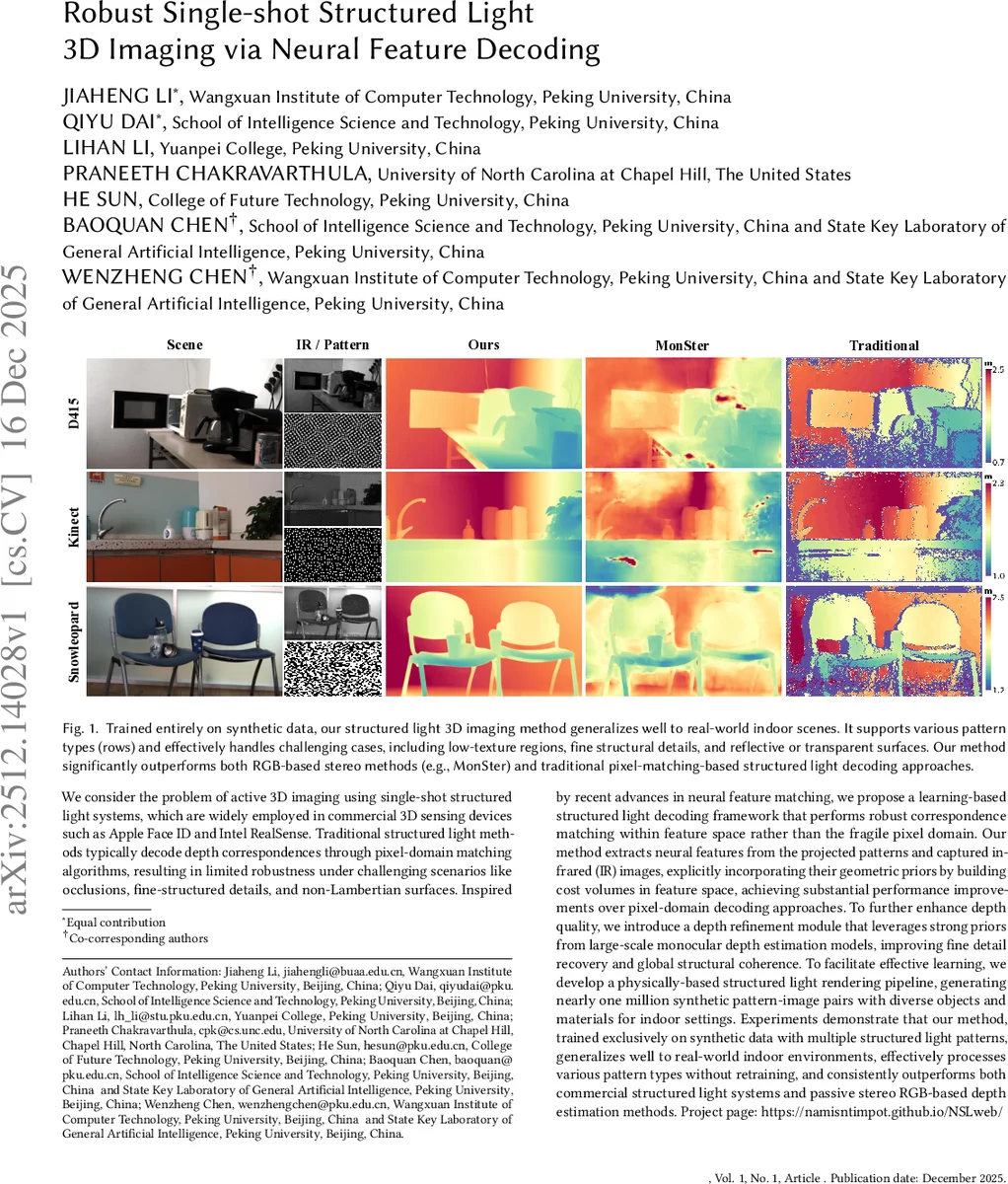

We consider the problem of active 3D imaging using single-shot structured light systems, which are widely employed in commercial 3D sensing devices such as Apple Face ID and Intel RealSense. Traditional structured light methods typically decode depth correspondences through pixel-domain matching algorithms, resulting in limited robustness under challenging scenarios like occlusions, fine-structured details, and non-Lambertian surfaces. Inspired by recent advances in neural feature matching, we propose a learning-based structured light decoding framework that performs robust correspondence matching within feature space rather than the fragile pixel domain. Our method extracts neural features from the projected patterns and captured infrared (IR) images, explicitly incorporating their geometric priors by building cost volumes in feature space, achieving substantial performance improvements over pixel-domain decoding approaches. To further enhance depth quality, we introduce a depth refinement module that leverages strong priors from large-scale monocular depth estimation models, improving fine detail recovery and global structural coherence. To facilitate effective learning, we develop a physically-based structured light rendering pipeline, generating nearly one million synthetic pattern-image pairs with diverse objects and materials for indoor settings. Experiments demonstrate that our method, trained exclusively on synthetic data with multiple structured light patterns, generalizes well to real-world indoor environments, effectively processes various pattern types without retraining, and consistently outperforms both commercial structured light systems and passive stereo RGB-based depth estimation methods. Project page: https://namisntimpot.github.io/NSLweb/.

💡 Research Summary

This paper introduces “NSL” (Neural Structured Light), a novel learning-based framework designed to overcome the robustness limitations of traditional single-shot structured light 3D imaging systems, such as those used in Apple Face ID and Intel RealSense. Conventional methods decode depth by performing pixel-intensity matching between a known projected pattern and a captured infrared (IR) image, which often fails under challenging conditions like occlusions, fine details, and non-Lambertian surfaces.
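The sketch below makes the contrast concrete with a minimal conventional pixel-domain decoder of the kind described above. It uses zero-mean normalized cross-correlation (ZNCC) block matching between the IR image and the reference pattern, then triangulates depth as Z = f * B / d. It assumes a rectified projector-camera pair with purely horizontal disparity. It also assumes that IR pixel (y, x) observes pattern column x + d. The function names and parameters are hypothetical and are not taken from any commercial device.

```python
import numpy as np


def zncc(a: np.ndarray, b: np.ndarray, eps: float = 1e-8) -> float:
    """Zero-mean normalized cross-correlation of two equal-size patches."""
    a = a - a.mean()
    b = b - b.mean()
    return float((a * b).sum() / (np.sqrt((a * a).sum() * (b * b).sum()) + eps))


def block_match(ir: np.ndarray, pattern: np.ndarray, max_disp: int, half: int = 3) -> np.ndarray:
    """Winner-take-all integer disparity per pixel via pixel-domain ZNCC matching.

    Assumed convention: IR pixel (y, x) observes pattern pixel (y, x + d).
    Border pixels (within `half` of the edge) are left at disparity 0.
    """
    h, w = ir.shape
    disp = np.zeros((h, w), dtype=np.int64)
    for y in range(half, h - half):
        for x in range(half, w - half):
            ref = ir[y - half:y + half + 1, x - half:x + half + 1]
            best_score, best_d = -np.inf, 0
            for d in range(max_disp + 1):
                xs = x + d
                if xs + half >= w:  # candidate window would leave the pattern
                    break
                cand = pattern[y - half:y + half + 1, xs - half:xs + half + 1]
                score = zncc(ref, cand)
                if score > best_score:
                    best_score, best_d = score, d
            disp[y, x] = best_d
    return disp


def disparity_to_depth(disp: np.ndarray, focal_px: float, baseline_m: float) -> np.ndarray:
    """Triangulate depth Z = f * B / d; pixels with d <= 0 are marked invalid (depth 0)."""
    disp = np.asarray(disp, dtype=np.float64)
    depth = np.zeros_like(disp)
    valid = disp > 0
    depth[valid] = focal_px * baseline_m / disp[valid]
    return depth
```

Each pixel's match depends only on raw intensities inside a small window. This is why such decoders degrade when occlusions, fine structures, or non-Lambertian reflections corrupt those intensities.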

The core idea of NSL is to move correspondence matching from the fragile pixel domain into a more robust neural feature space. The pipeline has two main stages. First, the Neural Feature Matching module uses CNN encoders to extract deep feature maps from both the IR image(s) and the projected pattern. It then builds a cost volume pyramid by correlating the IR features with the pattern features, explicitly exploiting the spatial prior encoded in the known projection. A GRU-based update module iteratively refines a depth estimate by sampling this cost volume, producing an initial depth map.
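The matching step can be illustrated with a minimal NumPy sketch, assuming rectified geometry so that correspondences lie along image rows. It has three parts:

- a dot-product correlation volume between IR and pattern features over a range of horizontal disparities;
- a pyramid built by average-pooling adjacent disparity bins, a design common in iterative stereo networks such as RAFT-Stereo;
- a winner-take-all readout.

Random unit vectors stand in for the CNN encoder outputs, and the GRU-based iterative update is deliberately omitted. The function names are hypothetical, and this is not NSL's implementation.

```python
import numpy as np


def correlation_volume(f_ir: np.ndarray, f_pat: np.ndarray, max_disp: int) -> np.ndarray:
    """Feature-space cost volume C[d, y, x] = <f_ir[:, y, x], f_pat[:, y, x + d]> / sqrt(channels).

    f_ir, f_pat: (channels, H, W) feature maps, e.g. encoder outputs.
    Entries whose pattern column x + d falls outside the map are set to -inf.
    """
    c, h, w = f_ir.shape
    if max_disp >= w:
        raise ValueError("max_disp must be smaller than the feature width")
    vol = np.full((max_disp + 1, h, w), -np.inf)
    for d in range(max_disp + 1):
        vol[d, :, : w - d] = (f_ir[:, :, : w - d] * f_pat[:, :, d:]).sum(axis=0) / np.sqrt(c)
    return vol


def disparity_pyramid(vol: np.ndarray, levels: int) -> list:
    """Coarser levels average adjacent disparity bins (factor 2 per level)."""
    pyramid = [vol]
    for _ in range(levels - 1):
        v = pyramid[-1]
        n = (v.shape[0] // 2) * 2  # drop a trailing odd bin
        pyramid.append(0.5 * (v[0:n:2] + v[1:n:2]))
    return pyramid


def winner_take_all(vol: np.ndarray) -> np.ndarray:
    """Initial integer disparity: the best-scoring bin at each pixel."""
    return vol.argmax(axis=0)
```

Here similarity is measured between learned descriptors rather than raw intensities. The coarse pyramid levels let an iterative updater look up matching evidence over a wide disparity range cheaply.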

Second, the Monocular Depth Refinement module enhances this initial depth by integrating strong visual and structural priors from a large-scale pretrained monocular depth estimation model (DPT). The initial depth acts as a geometric prompt, guiding the refinement network to recover fine details, sharpen boundaries, and correct mismatches, resulting in a final high-quality depth map with improved global coherence.
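NSL's refinement module is a learned network and cannot be reproduced in a few lines. The sketch below is a simpler, classical stand-in that shows why a metric "prompt" helps. It fits a least-squares scale s and shift t that map a relative monocular prediction onto the initial structured-light depth at reliable pixels. It then uses the aligned prediction to fill pixels where the initial depth is invalid. The sketch assumes the monocular output is affinely related to metric depth. Many monocular models instead predict relative inverse depth, in which case the fit would be done in inverse-depth space. The function names are hypothetical.

```python
import numpy as np


def align_scale_shift(mono: np.ndarray, init_depth: np.ndarray, valid: np.ndarray):
    """Least-squares (s, t) minimizing sum over valid pixels of (s * mono + t - init_depth)^2."""
    x = mono[valid]
    y = init_depth[valid]
    a = np.stack([x, np.ones_like(x)], axis=1)  # design matrix [mono, 1]
    (s, t), *_ = np.linalg.lstsq(a, y, rcond=None)
    return s * mono + t, float(s), float(t)


def fuse(init_depth: np.ndarray, aligned_mono: np.ndarray, valid: np.ndarray) -> np.ndarray:
    """Keep the structured-light depth where valid; fall back to the aligned prior elsewhere."""
    return np.where(valid, init_depth, aligned_mono)
```

A single global (s, t) cannot correct spatially varying errors in either input. A learned refinement module can in principle do so, for example by sharpening boundaries or fixing local mismatches.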

A key supporting contribution is a large-scale, physically based synthetic dataset. Using a Blender simulation pipeline, the authors generated approximately 953,000 high-fidelity indoor scene samples covering diverse objects, materials, lighting conditions, and multiple pattern types, each with ground-truth depth. NSL is trained exclusively on this synthetic data.
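The relationship between a pattern, a rendered IR image, and its ground-truth depth can be illustrated with a deliberately simplified forward model. The sketch below is a toy, not the authors' Blender pipeline. It assumes:

- a fronto-parallel Lambertian plane at a single depth;
- a binary random-dot pattern;
- an integer disparity d = round(f * B / Z);
- uniform albedo and ambient light;
- Gaussian sensor noise.

Physically based rendering replaces each of these assumptions with full light transport over real geometry and materials. All names and parameter values are hypothetical.

```python
import numpy as np


def random_dot_pattern(h: int, w: int, density: float = 0.1, seed: int = 0) -> np.ndarray:
    """Binary pseudo-random dot pattern (1 = projected dot)."""
    rng = np.random.default_rng(seed)
    return (rng.random((h, w)) < density).astype(np.float64)


def render_plane_sample(pattern, depth_m, focal_px, baseline_m,
                        albedo=0.8, ambient=0.05, noise_std=0.01, seed=0):
    """Toy IR image of a fronto-parallel plane at constant depth.

    Returns (ir_image, gt_depth, disparity_px). Assumed convention:
    IR pixel (y, x) observes pattern pixel (y, x + d).
    """
    rng = np.random.default_rng(seed)
    h, w = pattern.shape
    d = int(round(focal_px * baseline_m / depth_m))  # integer pixel shift
    if not 0 <= d < w:
        raise ValueError("disparity outside the image")
    shifted = np.zeros_like(pattern)
    shifted[:, : w - d] = pattern[:, d:]  # columns past the pattern edge get no dots
    ir = albedo * shifted + ambient + rng.normal(0.0, noise_std, size=(h, w))
    gt_depth = np.full((h, w), float(depth_m))
    return np.clip(ir, 0.0, 1.0), gt_depth, d
```

Swapping the pattern generator while keeping the same scene is one simple way to obtain training pairs for several pattern types from identical geometry.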

Extensive experiments show that NSL generalizes to real-world indoor scenes without any fine-tuning and handles various pattern types at inference time. It consistently outperforms both commercial structured light systems (e.g., Intel RealSense D415) and state-of-the-art passive stereo RGB-based depth estimation methods. The gains are largest in challenging areas such as textureless regions, specular/transparent surfaces, and complex occlusions. Taken together, these results indicate that learned feature-space matching for structured light, trained on high-quality synthetic data, is an effective route toward robust, high-performance 3D sensing.
