VIBE: Can a VLM Read the Room?

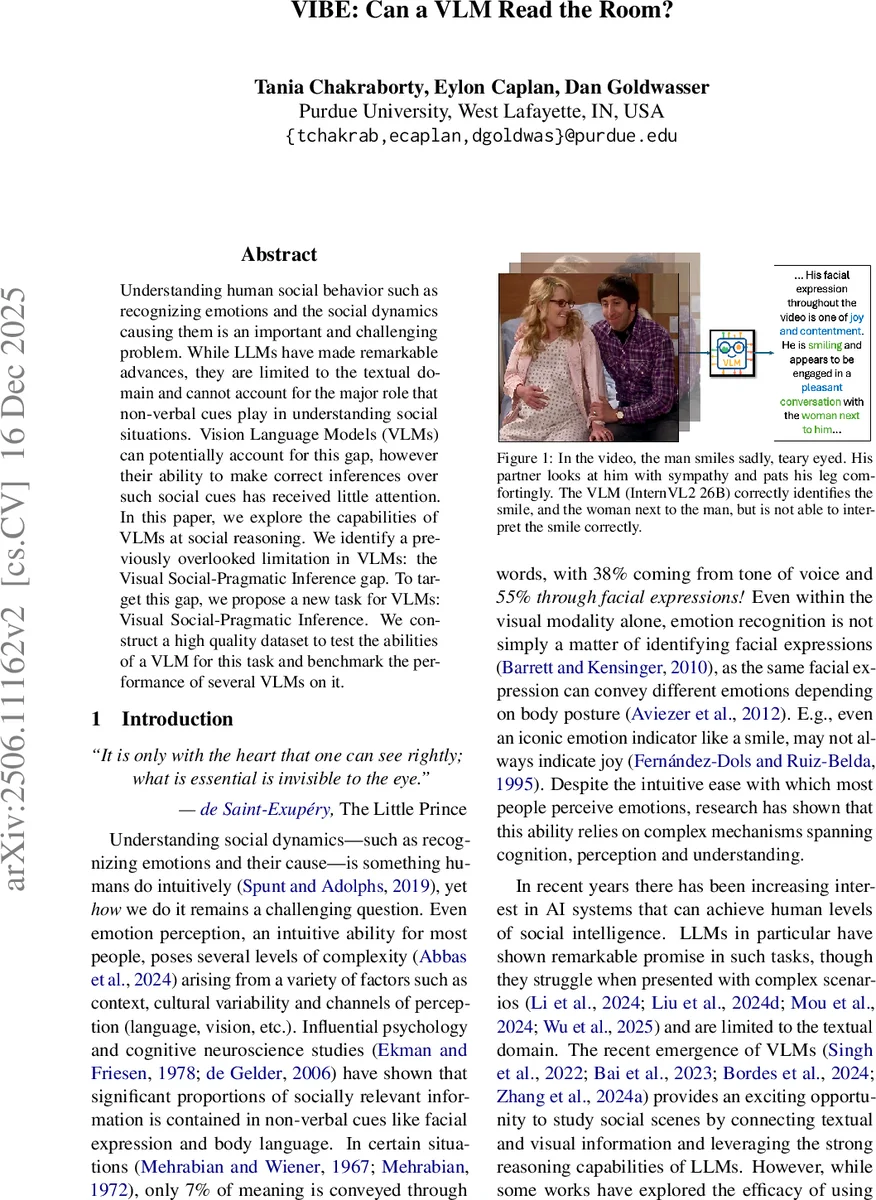

Understanding human social behavior such as recognizing emotions and the social dynamics causing them is an important and challenging problem. While LLMs have made remarkable advances, they are limited to the textual domain and cannot account for the major role that non-verbal cues play in understanding social situations. Vision Language Models (VLMs) can potentially account for this gap, however their ability to make correct inferences over such social cues has received little attention. In this paper, we explore the capabilities of VLMs at social reasoning. We identify a previously overlooked limitation in VLMs: the Visual Social-Pragmatic Inference gap. To target this gap, we propose a new task for VLMs: Visual Social-Pragmatic Inference. We construct a high quality dataset to test the abilities of a VLM for this task and benchmark the performance of several VLMs on it.

💡 Research Summary

The paper “VIBE: Can a VLM Read the Room?” investigates whether modern Vision‑Language Models (VLMs) can understand social situations by correctly interpreting non‑verbal cues in videos. While large language models (LLMs) have shown impressive reasoning abilities, they are limited to text and cannot directly process visual signals such as facial expressions, body posture, or eye gaze that are crucial for human emotion perception. The authors argue that VLMs, which combine visual perception with LLM‑style reasoning, could fill this gap, but their capacity for social‑pragmatic inference has not been systematically examined.

To address this, the authors define a new problem called Visual Social‑Pragmatic (VSP) Inference. VSP inference requires a model to take a clearly observable visual cue (e.g., a smile) and, using the surrounding visual context, decide which pragmatic interpretation is correct (e.g., a sad smile versus a joyful smile). They distinguish this from the more commonly studied “hallucination” problem, where a model invents visual elements that are not present. In VSP inference, the cue is guaranteed to exist, so any error stems from mis‑interpretation rather than hallucination.

Two experimental tasks are built around this definition. The first is a diagnostic Emotion Prediction task that uses multi‑party conversational videos from the MC‑EIU dataset. Each instance provides a transcript of the spoken utterance and a short video clip (<4 seconds). Models must predict the speaker’s emotion. This task serves to gauge whether VLMs can fuse visual and textual information effectively. The second, novel task is the VSP Inference task itself. For each video, a visual cue is identified, and two mutually exclusive pragmatic interpretations are offered; the model must select the correct one.

To evaluate VSP inference in isolation, the authors construct the VIBE dataset (Visual Social‑Pragmatic Inference of Behavior and Emotion), containing 994 carefully curated instances. Each instance includes: (i) a short video where the cue is visibly present, (ii) a textual description of the cue, and (iii) two candidate interpretations. Human annotators provide gold‑standard labels, ensuring that the task is answerable and that any model error can be attributed to mis‑interpretation.

Four state‑of‑the‑art VLMs are benchmarked: InternVL2‑8B, InternVL2‑26B, Qwen‑3B, and Qwen‑7B. Experiments are conducted under two settings. In the “VLM‑only” setting, each model processes the video (sampling up to 30 frames) and directly outputs an emotion label. In the “VLM‑LLM agent” setting, the VLM acts as a perception module, generating a textual description of the visual cue at three levels of VSP inference (pure cue, cue + some inference, full inference). These descriptions are then fed to a strong LLM (GPT‑4o‑mini) which performs the final reasoning.

Results on the Emotion Prediction task reveal a surprising trend: the best performance is achieved by the text‑only GPT‑4o‑mini (F1 = 0.538). Adding visual information from VLMs does not yield a statistically significant boost; the best VLM (InternVL2‑26B) reaches 0.493 with text only and 0.468 when vision is fused. This suggests that current VLMs either fail to extract useful visual signals for emotion reasoning or introduce noisy descriptions that degrade downstream reasoning.

On the VSP Inference task, humans outperform the best VLM by 17.2 percentage points (≈82 % vs. 65 % accuracy). Error analysis shows that the dominant failure mode is mis‑interpretation of subtle cues such as eye movement, tears, and nuanced body posture, rather than outright hallucination of nonexistent cues. Even when the visual cue (e.g., a smile) is correctly identified, the model often defaults to the stereotypical mapping (“smile → joy”) and ignores contextual modifiers that would indicate a sad or bittersweet smile. Moreover, in the VLM‑LLM agent configuration, the VLM’s generated descriptions sometimes contain contradictory or overly generic statements, which mislead the LLM and reduce overall accuracy.

The paper’s contributions are threefold: (1) exposing the Visual Social‑Pragmatic inference gap in current VLMs, (2) providing a dedicated benchmark (VIBE) that isolates this gap from hallucination, and (3) demonstrating how this gap directly impacts downstream social‑science tasks such as emotion recognition. The authors argue that closing this gap will require new training objectives that explicitly link visual cues to pragmatic meanings, better prompt engineering to control the level of inference a VLM is allowed to make, and architectures capable of modeling longer temporal dependencies in video.

In summary, while VLMs have made impressive strides in visual perception, they remain far from human‑level social intelligence. The VIBE dataset offers a valuable testbed for future research aiming to endow multimodal models with the nuanced, context‑aware reasoning necessary to truly “read the room.”

Comments & Academic Discussion

Loading comments...

Leave a Comment