LWGANet: Addressing Spatial and Channel Redundancy in Remote Sensing Visual Tasks with Light-Weight Grouped Attention

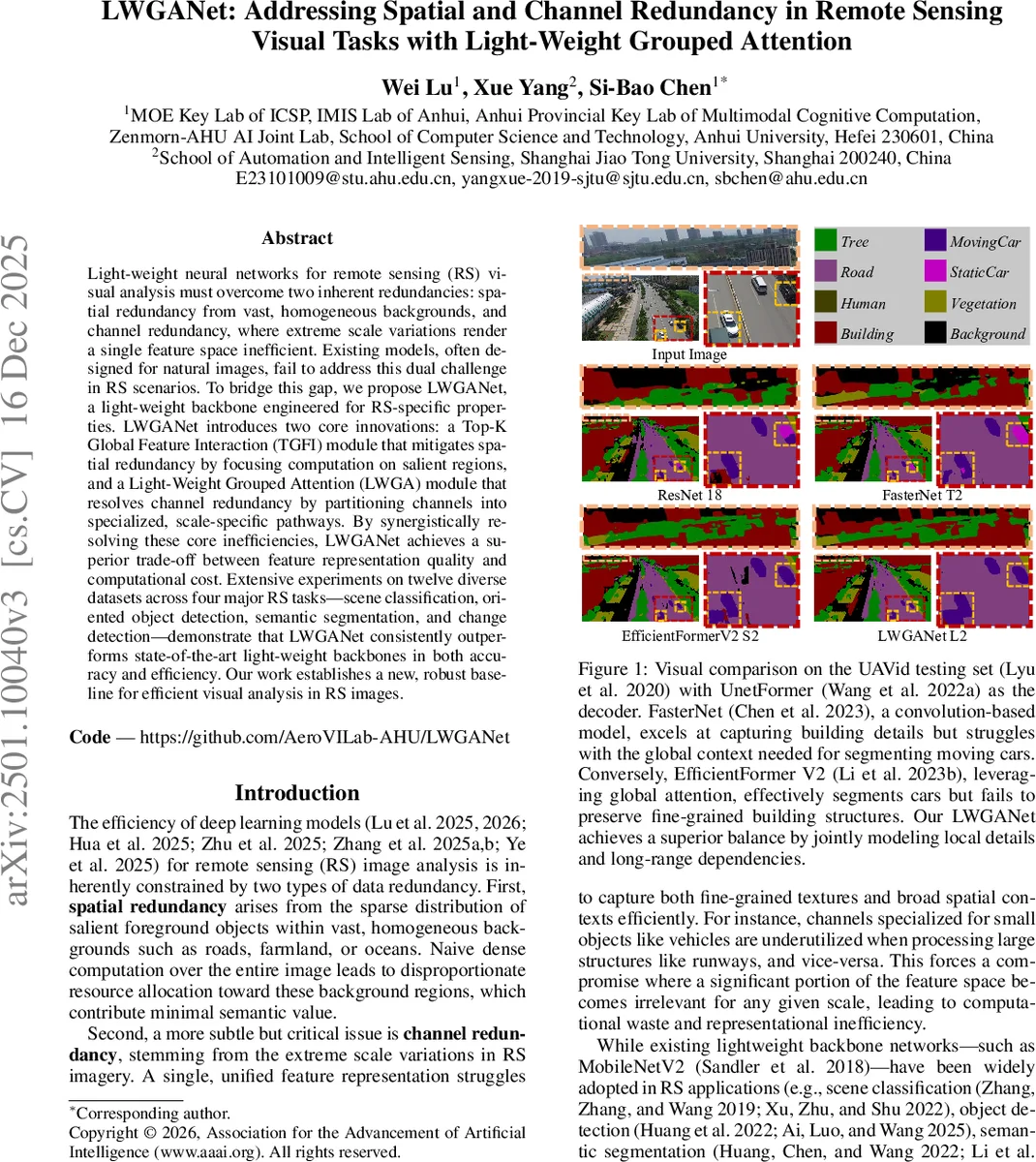

Light-weight neural networks for remote sensing (RS) visual analysis must overcome two inherent redundancies: spatial redundancy from vast, homogeneous backgrounds, and channel redundancy, where extreme scale variations render a single feature space inefficient. Existing models, often designed for natural images, fail to address this dual challenge in RS scenarios. To bridge this gap, we propose LWGANet, a light-weight backbone engineered for RS-specific properties. LWGANet introduces two core innovations: a Top-K Global Feature Interaction (TGFI) module that mitigates spatial redundancy by focusing computation on salient regions, and a Light-Weight Grouped Attention (LWGA) module that resolves channel redundancy by partitioning channels into specialized, scale-specific pathways. By synergistically resolving these core inefficiencies, LWGANet achieves a superior trade-off between feature representation quality and computational cost. Extensive experiments on twelve diverse datasets across four major RS tasks (scene classification, oriented object detection, semantic segmentation, and change detection) demonstrate that LWGANet consistently outperforms state-of-the-art light-weight backbones in both accuracy and efficiency. Our work establishes a new, robust baseline for efficient visual analysis in RS images.

💡 Research Summary

LWGANet is a lightweight backbone specifically designed for remote sensing (RS) visual analysis, addressing two intrinsic redundancies that hinder efficiency: spatial redundancy caused by vast homogeneous backgrounds and channel redundancy arising from extreme scale variations of objects. The authors introduce two core modules to tackle these issues. The Top‑K Global Feature Interaction (TGFI) module first partitions the feature map into non‑overlapping regions, selects the most salient token from each region (based on activation magnitude), and performs global context modeling only on this reduced token set. After interaction (via convolution or attention), the enhanced tokens are restored to their original spatial locations, effectively eliminating costly computation on background areas while preserving long‑range dependencies.
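The select, interact, restore flow described for TGFI can be illustrated with a small NumPy sketch. This is not the authors' implementation. The function names (`tgfi_select`, `global_interaction`, `tgfi_restore`) are hypothetical, saliency is assumed to be the sum of absolute activations across channels, and the interaction step is a parameter-free self-attention standing in for the learned convolution or attention the summary mentions.

```python
import numpy as np

def _to_blocks(x, region):
    """Rearrange (C, H, W) into (C, H/r, W/r, r*r) non-overlapping regions."""
    c, h, w = x.shape
    assert h % region == 0 and w % region == 0, "H and W must be divisible by region"
    hr, wr = h // region, w // region
    blocks = x.reshape(c, hr, region, wr, region).transpose(0, 1, 3, 2, 4)
    return blocks.reshape(c, hr, wr, region * region).copy()

def tgfi_select(x, region=2):
    """Pick the most salient token in each region.

    Assumption: saliency = sum of |activation| over channels.
    Returns tokens of shape (C, H/r, W/r) and the in-region index of each pick.
    """
    blocks = _to_blocks(x, region)
    c, hr, wr, _ = blocks.shape
    idx = np.abs(blocks).sum(axis=0).argmax(axis=-1)            # (hr, wr)
    idx_b = np.broadcast_to(idx[None, :, :, None], (c, hr, wr, 1))
    tokens = np.take_along_axis(blocks, idx_b, axis=-1)[..., 0]
    return tokens, idx

def global_interaction(tokens):
    """Stand-in for the learned interaction: plain softmax self-attention."""
    c, hr, wr = tokens.shape
    t = tokens.reshape(c, -1).T                                  # (N, C)
    scores = t @ t.T / np.sqrt(c)
    scores = scores - scores.max(axis=1, keepdims=True)          # numerical stability
    attn = np.exp(scores)
    attn = attn / attn.sum(axis=1, keepdims=True)
    return (attn @ t).T.reshape(c, hr, wr)

def tgfi_restore(x, tokens, idx, region=2):
    """Write enhanced tokens back to the positions they were selected from."""
    c, h, w = x.shape
    hr, wr = h // region, w // region
    blocks = _to_blocks(x, region)
    idx_b = np.broadcast_to(idx[None, :, :, None], (c, hr, wr, 1))
    np.put_along_axis(blocks, idx_b, tokens[..., None], axis=-1)
    blocks = blocks.reshape(c, hr, wr, region, region).transpose(0, 1, 3, 2, 4)
    return blocks.reshape(c, h, w)

# Usage: an 8x8 map with 2x2 regions yields 16 tokens instead of 64.
feat = np.random.default_rng(0).normal(size=(4, 8, 8))
tokens, idx = tgfi_select(feat, region=2)
out = tgfi_restore(feat, global_interaction(tokens), idx, region=2)
```

With region size r, the interaction step sees H·W / r² tokens rather than H·W, so a pairwise attention map shrinks by a factor of r⁴. In this sketch, positions that were not selected pass through unchanged.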

The Light‑Weight Grouped Attention (LWGA) module resolves channel redundancy by splitting the channel dimension into four non‑overlapping groups, each routed through a specialized pathway optimized for a particular scale of features: (1) Gate Point Attention (GPA) enhances fine‑grained details using a 1×1 expansion‑BN‑activation‑1×1 contraction‑sigmoid pipeline; (2) Regular Local Attention (RLA) captures local patterns with a standard 3×3 convolution; (3) Sparse Medium‑range Attention (SMA) leverages TGFI‑reduced tokens and an 11‑pixel window to model medium‑range context; (4) Sparse Global Attention (SGA) provides global context, using a lightweight combination of grouped and dilated convolutions in early stages and full multi‑head self‑attention in later, low‑resolution stages. The four processed groups are concatenated, yielding a multi‑scale enriched feature map.
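The split, route, concatenate structure of LWGA can be sketched in NumPy as follows. This is a rough illustration, not the paper's code: `gate_point`, `local_3x3`, and `lwga_block` are hypothetical names, the weights are random, batch normalization is omitted, and it is an assumption that the sigmoid output of the GPA pipeline multiplies the input. The medium-range and global pathways are replaced by trivial placeholders, and a 3×3 box filter stands in for the learned 3×3 convolution.

```python
import numpy as np

def gate_point(x, w_expand, w_contract):
    """GPA-like gate: 1x1 expand -> ReLU -> 1x1 contract -> sigmoid.

    Batch norm is omitted here; multiplying the gate into x is an assumption.
    """
    hidden = np.maximum(np.einsum("oc,chw->ohw", w_expand, x), 0.0)
    logits = np.einsum("co,ohw->chw", w_contract, hidden)
    gate = 1.0 / (1.0 + np.exp(-logits))
    return x * gate

def local_3x3(x):
    """Stand-in for a learned 3x3 convolution: zero-padded 3x3 box filter."""
    _, h, w = x.shape
    p = np.pad(x, ((0, 0), (1, 1), (1, 1)))
    return sum(p[:, i:i + h, j:j + w] for i in range(3) for j in range(3)) / 9.0

def lwga_block(x, paths):
    """Split channels into len(paths) equal groups, route each, concatenate."""
    groups = np.split(x, len(paths), axis=0)   # raises if C is not divisible
    return np.concatenate([f(g) for f, g in zip(paths, groups)], axis=0)

# Usage with 8 channels, i.e. 2 channels per pathway.
rng = np.random.default_rng(0)
feat = rng.normal(size=(8, 6, 6))
w_e = rng.normal(size=(4, 2))                  # expand 2 -> 4 channels
w_c = rng.normal(size=(2, 4))                  # contract 4 -> 2 channels
paths = [
    lambda g: gate_point(g, w_e, w_c),                    # fine detail (GPA-like)
    local_3x3,                                            # local patterns (RLA-like)
    lambda g: g,                                          # placeholder for SMA
    lambda g: g + g.mean(axis=(1, 2), keepdims=True),     # placeholder for SGA
]
out = lwga_block(feat, paths)                  # same shape as feat: (8, 6, 6)
```

Because each pathway sees only a quarter of the channels, the more expensive long-range pathways run on a fraction of the feature map, which is where the described savings would come from.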

Architecturally, LWGANet follows a four‑stage hierarchical design, progressively down‑sampling spatial resolution by factors of 4, 8, 16, and 32. Three capacity variants (L0, L1, L2) differ only in stem channel width (32, 64, 96) and dropout rates, allowing flexible trade‑offs between parameters and FLOPs. Each stage consists of a sequence of LWGA blocks (counts
