The Optimal Approximation Factor in Density Estimation

Consider the following problem: given two arbitrary densities $q_1,q_2$ and sample access to an unknown target density $p$, find which of the $q_i$'s is closer to $p$ in total variation. A remarkable result due to Yatracos shows that this problem is tractable in the following sense: there exists an algorithm that uses $O(\epsilon^{-2})$ samples from $p$ and outputs $q_i$ such that, with high probability, $TV(q_i,p) \leq 3\cdot\mathsf{opt} + \epsilon$, where $\mathsf{opt}= \min\{TV(q_1,p),TV(q_2,p)\}$. Moreover, this result extends to any finite class of densities $\mathcal{Q}$: there exists an algorithm that outputs the best density in $\mathcal{Q}$ up to a multiplicative approximation factor of 3. We complement and extend this result by showing that: (i) the factor 3 cannot be improved if one restricts the algorithm to output a density from $\mathcal{Q}$, and (ii) if one allows the algorithm to output arbitrary densities (e.g. a mixture of densities from $\mathcal{Q}$), then the approximation factor can be reduced to 2, which is optimal. In particular, this demonstrates an advantage of improper learning over proper learning in this setup. We develop two approaches to achieve the optimal approximation factor of 2: an adaptive one and a static one. Both approaches are based on a geometric point of view of the problem and rely on estimating surrogate metrics to the total variation. Our sample complexity bounds exploit techniques from Adaptive Data Analysis.

💡 Research Summary

The paper studies agnostic density estimation under the total variation (TV) distance, focusing on the task of selecting, from a finite class of candidate distributions 𝒬, a hypothesis that is competitive with the best member of 𝒬 with respect to an unknown target distribution p. The classic result of Yatracos (1985) shows that a proper algorithm—one that must output a member of 𝒬—can achieve a multiplicative approximation factor of 3 using O(ε⁻²) samples. The authors ask whether this factor can be improved and whether allowing the algorithm to output arbitrary (improper) distributions can lead to a better factor.
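For two candidates, the factor-3 guarantee is classically obtained with the Scheffé test: compare each candidate's mass on the set where $q_1$ exceeds $q_2$ against the empirical mass of the sample on that set. The following is a minimal sketch under stated assumptions: the domain is finite, densities are given as dictionaries from outcomes to probabilities, and the names `scheffe_select` and `tv` are illustrative rather than taken from the paper.

```python
def tv(a, b):
    """Total variation distance between two finite distributions (dicts)."""
    support = set(a) | set(b)
    return 0.5 * sum(abs(a.get(x, 0.0) - b.get(x, 0.0)) for x in support)


def scheffe_select(q1, q2, samples):
    """Return 0 to select q1 or 1 to select q2, using the Scheffe set.

    The Scheffe set is A = {x : q1(x) > q2(x)}. The candidate whose mass
    on A is closer to the empirical mass of the samples on A is chosen.
    """
    support = set(q1) | set(q2)
    scheffe_set = {x for x in support if q1.get(x, 0.0) > q2.get(x, 0.0)}

    q1_mass = sum(q1.get(x, 0.0) for x in scheffe_set)
    q2_mass = sum(q2.get(x, 0.0) for x in scheffe_set)
    # Fraction of the samples that fall in the Scheffe set.
    emp_mass = sum(1 for s in samples if s in scheffe_set) / len(samples)

    return 0 if abs(q1_mass - emp_mass) <= abs(q2_mass - emp_mass) else 1


if __name__ == "__main__":
    q1 = {0: 0.9, 1: 0.1}
    q2 = {0: 0.1, 1: 0.9}
    # Hypothetical sample: 80 draws of outcome 0 and 20 draws of outcome 1.
    samples = [0] * 80 + [1] * 20
    print(scheffe_select(q1, q2, samples))  # prints 0, i.e. q1 is selected
```

The output is always one of the two candidates, so this is a proper procedure in the sense used above; the improper methods discussed later are free to return something outside $\mathcal{Q}$.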

The main contributions are twofold. First, they prove a lower bound: for any α < 3 there exists a class 𝒬 of size two that cannot be properly α‑learned. This establishes that the factor 3 is optimal for proper learning. The proof adapts Le Cam’s method, tensorizes it, and uses a birthday‑paradox style argument to construct two distributions that are indistinguishable with fewer than the required number of samples, forcing any proper algorithm to incur at least a 3‑approximation.
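For reference, the guarantee behind "α‑learned" can be written out in the abstract's notation. This is a sketch rather than the paper's exact definition: the confidence parameter $\delta$ is an assumption introduced here to make "with high probability" explicit, and $\hat q$ denotes the algorithm's output.

$$
\Pr\big[\, TV(\hat q, p) \le \alpha \cdot \mathsf{opt} + \epsilon \,\big] \ge 1 - \delta,
\qquad
\mathsf{opt} = \min_{q \in \mathcal{Q}} TV(q, p).
$$

In the proper setting $\hat q$ must belong to $\mathcal{Q}$; in the improper setting $\hat q$ may be any density. The lower bound above says that in the proper setting no algorithm can satisfy this guarantee with $\alpha < 3$ for every target $p$, even when $|\mathcal{Q}| = 2$.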

Second, they show that if the algorithm is allowed to output any distribution (including mixtures of members of 𝒬), the optimal factor drops to 2, and this is tight. The tightness follows from a lower bound of Chan et al. (2014), which demonstrates that even with improper output the factor cannot be less than 2. To achieve the factor 2, the authors present two algorithmic frameworks:

-

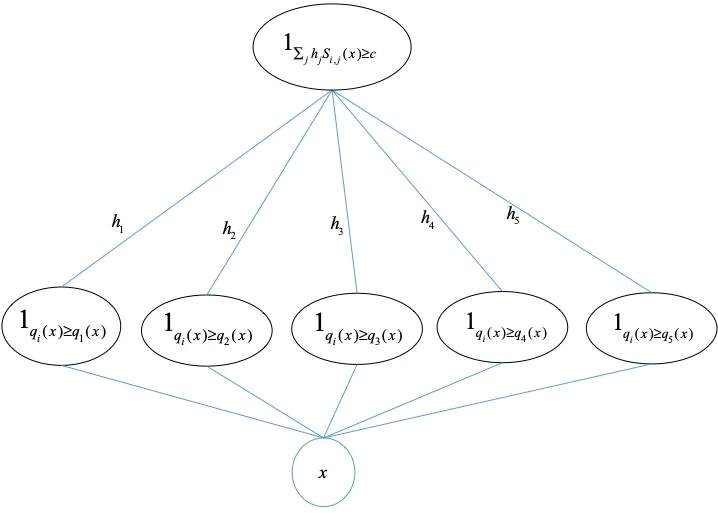

Static method – Extends Yatracos’ construction by defining a family 𝔽 of bounded functions (symmetric under complement) whose supremum defines a surrogate metric d_𝔽 that approximates TV. By estimating d_𝔽(p, q_i) for all q_i∈𝒬 using empirical averages (leveraging the finite VC dimension of 𝔽), they obtain a distribution q̂ satisfying v(q̂) ≤ v(p)+ε·1, where v(·) is the vector of distances to each q_i. Lemma 3 then guarantees TV(q̂, p) ≤ 2·opt + ε. The sample complexity is O(|𝒬|·ε⁻²·log|𝒳|).

- Adaptive method – Maintains lower bounds z_i ≤ TV(p, q_i) for each candidate. In each iteration, it identifies a function f_i (via the minimax theorem) such that a linear combination of the gaps |E_p
