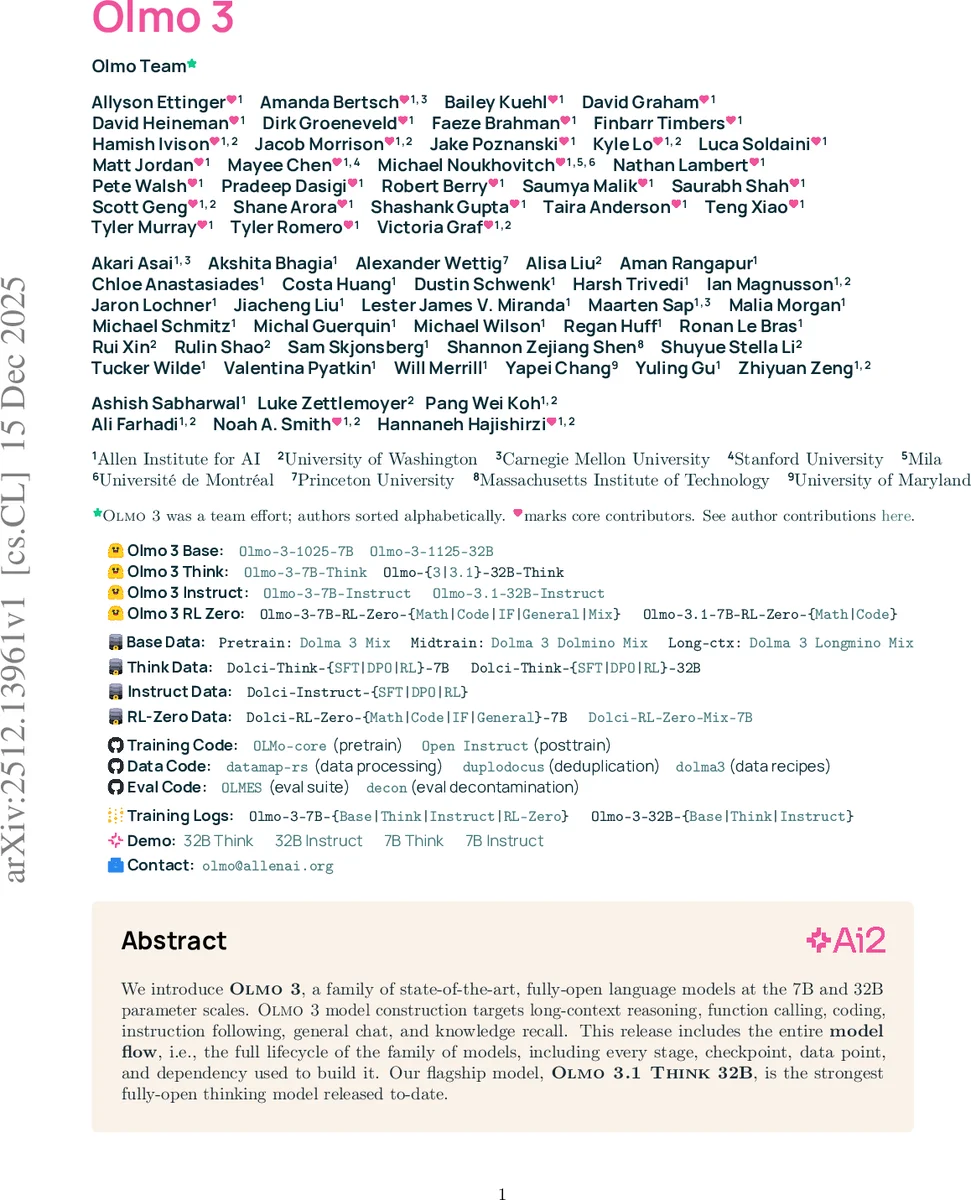

Olmo 3

We introduce Olmo 3, a family of state-of-the-art, fully open language models at the 7B and 32B parameter scales. Olmo 3 targets long-context reasoning, function calling, coding, instruction following, general chat, and knowledge recall. This release includes the entire model flow, i.e., the full lifecycle of the family of models, including every stage, checkpoint, data point, and dependency used to build it. Our flagship model, Olmo 3 Think 32B, is the strongest fully open thinking model released to date.

💡 Research Summary

Olmo 3 introduces a comprehensive family of fully open-source language models at the 7B and 32B parameter scales and covers the complete model lifecycle, from raw data collection and preprocessing to final checkpoints and post-training variants. The authors release not only the final models but also every intermediate checkpoint, the exact data mixes used at each stage, and the full training and evaluation code, enabling unrestricted customization and reproducibility.

The core of the effort is the Olmo 3 Base model, trained in three distinct phases. Phase 1 (pre-training) consumes the 6T-token “Dolma 3 Mix”, built with trillion-token-scale deduplication, an academic-PDF OCR pipeline (olmOCR), and token-constrained mixing with quality-aware upsampling. Phase 2 (mid-training) adds 100B tokens from the curated “Dolma 3 Dolmino Mix”, which is enriched for code, mathematics, and general QA. Its composition is selected with a two-part framework that combines lightweight distributed feedback loops with centralized integration tests to assess mix quality and downstream trainability. Phase 3 (long-context extension) introduces the “Dolma 3 Longmino Mix”, which draws on a large collection of scientific PDFs (22.3M documents longer than 8K tokens and 4.5M longer than 32K tokens) and extends the model's context window to 65K tokens.
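
Token-constrained mixing with quality-aware upsampling can be thought of as a budgeting problem. Each source receives a fixed share of the token budget. Within a source, higher-quality buckets are drawn more often, up to a repetition cap. The Python sketch below illustrates that idea under stated assumptions and is not the Dolma 3 recipe. The source names, quality tiers, the exponential `boost` weighting, and the 4× repetition cap (`max_repeat`) are all hypothetical choices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Bucket:
    source: str    # hypothetical source name, e.g. "web" or "code"
    quality: int   # hypothetical quality tier; higher means better
    tokens: float  # unique tokens available in this bucket


def allocate_source(buckets, target, max_repeat=4.0, boost=2.0):
    """Split `target` tokens across the quality buckets of one source.

    Buckets are weighted by tokens * boost**quality, so higher tiers get
    upsampled. No bucket may be repeated more than `max_repeat` times; a
    bucket that would exceed its cap is fixed at the cap and the leftover
    budget is redistributed over the remaining buckets (water-filling).
    """
    alloc = {}
    active = list(buckets)
    remaining = float(target)
    while active and remaining > 1e-9:
        weights = {b: b.tokens * boost ** b.quality for b in active}
        total = sum(weights.values())
        capped = [b for b in active
                  if remaining * weights[b] / total >= b.tokens * max_repeat]
        if not capped:
            # Nobody hits the cap: hand out the rest proportionally.
            for b in active:
                alloc[b] = remaining * weights[b] / total
            remaining = 0.0
        else:
            # Pin capped buckets at their cap, then redistribute the rest.
            for b in capped:
                alloc[b] = b.tokens * max_repeat
                remaining -= alloc[b]
                active.remove(b)
    if remaining > 1e-6:
        raise ValueError("token budget exceeds what the repetition cap allows")
    return alloc


def plan_mix(buckets, source_weights, budget, **kwargs):
    """Give each source budget * weight tokens, then split it by quality."""
    plan = {}
    for source, weight in source_weights.items():
        members = [b for b in buckets if b.source == source]
        plan.update(allocate_source(members, budget * weight, **kwargs))
    return plan


# Made-up pool sizes in arbitrary token units.
buckets = [
    Bucket("web", quality=0, tokens=600.0),
    Bucket("web", quality=1, tokens=300.0),
    Bucket("web", quality=2, tokens=100.0),
    Bucket("code", quality=0, tokens=200.0),
]
plan = plan_mix(buckets, {"web": 0.8, "code": 0.2}, budget=1000.0)
for b, n in plan.items():
    print(f"{b.source:>4} q{b.quality}: {n:6.1f} tokens ({n / b.tokens:.2f}x)")
```

With these made-up numbers, the top web tier is repeated twice and the lowest tier is only half used. Setting `max_repeat` below 2 pins the top tier at its cap and spreads the leftover budget over the other tiers.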

Evaluation of the base model is performed with a bespoke benchmark suite called OlmoBaseEval. To obtain reliable signals from small-scale proxy models, the authors aggregate scores across task clusters, devise proxy metrics for noisy tasks, and either increase sample sizes or drop problematic tasks altogether. Olmo 3 Base 32B outperforms contemporary open-weight models such as Qwen 2.5 32B, Mistral Small 3.1 24B, and Gemma 3 27B on long-context benchmarks despite a relatively short extension stage.
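
Two small helpers can illustrate cluster-level aggregation and proxy metrics. The first is a two-level macro-average that weights every task cluster equally. The second is bits-per-byte, a smooth likelihood-based metric often used in place of accuracy when small models score near chance. This is a hedged sketch. The task names, clusters, and scores are made up, and it is an assumption that OlmoBaseEval uses exactly these formulas.

```python
import math
from statistics import mean


def cluster_score(task_scores, clusters):
    """Two-level macro-average: mean within each cluster, then across clusters.

    Every cluster counts equally no matter how many tasks it contains, so one
    small, noisy task cannot dominate the headline number.
    """
    per_cluster = {
        name: mean(task_scores[t] for t in tasks)
        for name, tasks in clusters.items()
    }
    return mean(per_cluster.values()), per_cluster


def bits_per_byte(token_logprobs, reference):
    """Negative log-likelihood of a reference answer, in bits per UTF-8 byte.

    `token_logprobs` are natural-log probabilities the model assigns to the
    reference's tokens. Lower is better. Unlike exact-match accuracy, the
    value moves smoothly even when a small model rarely answers correctly.
    """
    nll_bits = -sum(token_logprobs) / math.log(2)
    return nll_bits / len(reference.encode("utf-8"))


# Hypothetical task names and made-up scores on a 0-100 scale.
scores = {"math_a": 30.0, "math_b": 10.0, "code_a": 20.0,
          "code_b": 40.0, "qa_a": 50.0}
clusters = {"math": ["math_a", "math_b"], "code": ["code_a", "code_b"],
            "qa": ["qa_a"]}
overall, per_cluster = cluster_score(scores, clusters)
print(per_cluster, round(overall, 2))
```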

On top of the base, three downstream variants are released:

- Olmo 3 Think – a “thinking” model that generates structured reasoning traces before producing a final answer. Training proceeds in three stages: supervised fine-tuning (SFT) on the Dolci Think SFT dataset (synthetic examples containing step-by-step traces), preference optimization with Direct Preference Optimization (DPO) on a high-quality contrastive set (Dolci Think DPO), and reinforcement learning with verifiable rewards (RLVR) using the OlmoRL framework. The RL stage uses reward functions covering math, code, and general dialogue, and OlmoRL achieves a 4× speed-up over prior RL pipelines. Olmo 3.1 Think 32B reaches state-of-the-art performance among fully open thinking models. It narrows the gap to the open-weight Qwen 3 32B while being trained on roughly six times fewer tokens.

- Olmo 3 Instruct – an instruction-following model optimized for concise, low-latency responses and function calling. The Dolci Instruct SFT dataset enriches training with function-calling examples. Dolci Instruct DPO adds multi-turn preference data and length-intervention strategies that encourage brevity. A final RLVR stage further refines the model while preserving concise outputs. Olmo 3 Instruct 32B surpasses notable open-weight models such as Gemma 3 27B, IBM Granite 3.3, and Llama 3 on a broad suite of chat and instruction benchmarks, narrowing the remaining gap to Qwen 3.

- Olmo 3 RL-Zero – a variant that applies reinforcement learning directly to the base model, without any intermediate fine-tuning. Because the base model's training data is fully released, researchers can study how the pre-training data distribution influences RL performance. This addresses a limitation of prior open RL-Zero work, which built on base models whose training data was not public.
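
The reinforcement-learning stages above score completions with verifiable rewards. Below is a minimal sketch for math, built on two assumptions. First, the reward is 1.0 when the final answer extracted from a completion matches a reference, and 0.0 otherwise. Second, advantages are computed GRPO-style, by normalizing each reward against the other completions sampled for the same prompt. The `\boxed{}` answer convention, the crude normalization rule, and the GRPO-style advantage are illustrative choices. They do not describe OlmoRL's actual reward functions.

```python
import re
from statistics import mean, pstdev

# Assumed answer format: the final answer appears inside \boxed{...}.
BOXED = re.compile(r"\\boxed\{([^{}]*)\}")


def extract_answer(completion):
    """Return the contents of the last \\boxed{...}, or None if there is none."""
    matches = BOXED.findall(completion)
    return matches[-1] if matches else None


def normalize(answer):
    """Deliberately crude normalization: drop spaces and a trailing period."""
    return answer.replace(" ", "").rstrip(".")


def math_reward(completion, reference):
    """Binary verifiable reward: 1.0 if the extracted answer matches, else 0.0."""
    answer = extract_answer(completion)
    if answer is None:
        return 0.0
    return 1.0 if normalize(answer) == normalize(reference) else 0.0


def group_advantages(rewards, eps=1e-6):
    """GRPO-style advantages: each reward relative to its own sampling group.

    If every completion in the group gets the same reward, all advantages are
    zero and the prompt contributes no learning signal.
    """
    mu, sigma = mean(rewards), pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Four sampled completions for one prompt whose reference answer is 42.
completions = [
    r"... so the answer is \boxed{42}.",
    r"I believe it is \boxed{41}",
    "No final answer given.",
    r"Therefore \boxed{ 42 }",
]
rewards = [math_reward(c, "42") for c in completions]
advantages = group_advantages(rewards)
print(rewards, [round(a, 3) for a in advantages])
```

Because the reward is checked programmatically rather than predicted by a learned reward model, it is hard for the policy to game. The trade-off is that it only covers outputs whose correctness can be verified.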

All data mixes (pre-training, mid-training, long-context) are released both as the actual token-level mixes and as the raw source pools (9T tokens for pre-training, 2T for mid-training, 640B for long-context). Smaller sample mixes (150B tokens for pre-training, 10B for mid-training) are provided for low-resource experimentation. The training code includes the OLMo-core pre-training framework, Open Instruct for post-training, datamap-rs for data processing, duplodocus for deduplication, and the dolma3 recipes. The evaluation tools (OLMES and a decontamination pipeline) and the OlmoRL reinforcement-learning framework are also open source.

Key technical contributions include: (i) a scalable trillion‑token deduplication pipeline; (ii) the olmOCR pipeline that converts scientific PDFs into linearized text, yielding the largest publicly available long‑context corpus; (iii) token‑constrained mixing and quality‑aware up‑sampling for better data curriculum; (iv) a two‑stage feedback loop for mid‑training mix selection; (v) Delta Learning‑based contrastive data generation for DPO; and (vi) verifiable reward design that extends RL beyond purely synthetic tasks to code and open‑ended dialogue.
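
Item (v) feeds the standard DPO objective. In Delta Learning, the chosen response roughly comes from a stronger model and the rejected one from a weaker model. Each pair therefore encodes a relative quality gap rather than an absolute good-versus-bad judgment. The sketch below computes the textbook DPO loss for a single pair from summed sequence log-probabilities. The numbers are invented, and `beta=0.1` is a common placeholder, not a value confirmed for Dolci Think DPO.

```python
import math


def log_sigmoid(z):
    """Numerically stable log(sigmoid(z))."""
    if z >= 0:
        return -math.log1p(math.exp(-z))
    return z - math.log1p(math.exp(z))


def dpo_loss(pol_chosen, pol_rejected, ref_chosen, ref_rejected, beta=0.1):
    """Standard DPO loss for a single preference pair.

    Each argument is the summed log-probability of a full response under the
    trainable policy (pol_*) or the frozen reference model (ref_*). The loss
    falls as the policy raises the chosen response, relative to the
    reference, more than it raises the rejected one.
    """
    margin = (pol_chosen - ref_chosen) - (pol_rejected - ref_rejected)
    return -log_sigmoid(beta * margin)


# Made-up log-probabilities. At initialization the policy equals the
# reference, so the margin is zero and the loss is log 2.
print(dpo_loss(-50.0, -50.0, -50.0, -50.0))
# After some training the policy favors the chosen (stronger-model) response.
print(dpo_loss(-40.0, -60.0, -50.0, -50.0))
```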

Overall, Olmo 3 sets a new standard for openness in large‑language‑model research by publishing the entire model flow, enabling the community to reproduce, inspect, and extend each stage of development. This transparency is expected to accelerate innovation in specialized domains, improve reproducibility, and foster a more collaborative ecosystem for future LLM advancements.
