Nexels: Neurally-Textured Surfels for Real-Time Novel View Synthesis with Sparse Geometries

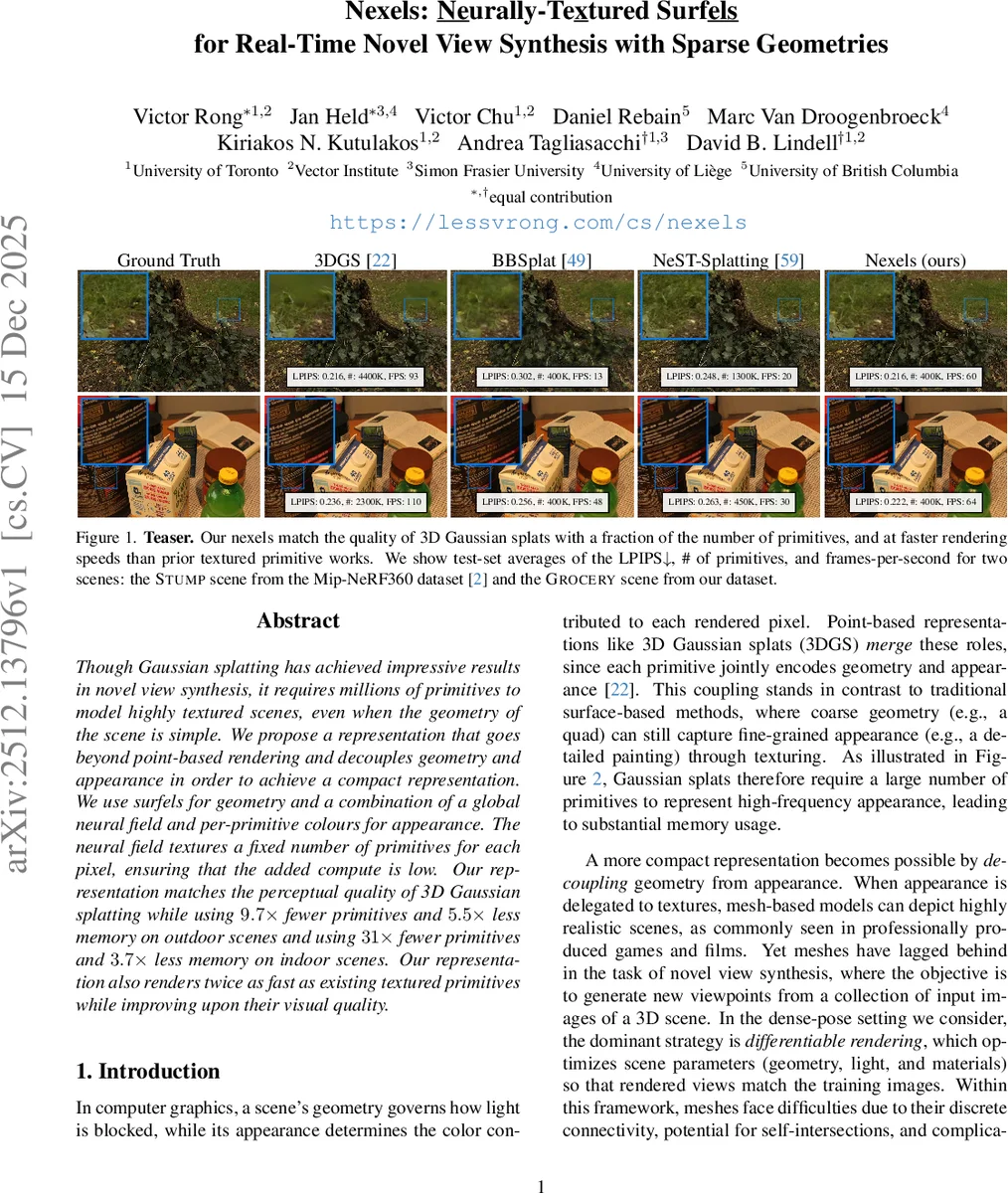

Though Gaussian splatting has achieved impressive results in novel view synthesis, it requires millions of primitives to model highly textured scenes, even when the geometry of the scene is simple. We propose a representation that goes beyond point-based rendering and decouples geometry and appearance in order to achieve a compact representation. We use surfels for geometry and a combination of a global neural field and per-primitive colours for appearance. The neural field textures a fixed number of primitives for each pixel, ensuring that the added compute is low. Our representation matches the perceptual quality of 3D Gaussian splatting while using $9.7\times$ fewer primitives and $5.5\times$ less memory on outdoor scenes and using $31\times$ fewer primitives and $3.7\times$ less memory on indoor scenes. Our representation also renders twice as fast as existing textured primitives while improving upon their visual quality.

💡 Research Summary

The paper addresses a fundamental limitation of 3D Gaussian splatting (3DGS) for novel view synthesis: the tight coupling of geometry and appearance forces the use of millions of primitives to capture high‑frequency textures, leading to excessive memory consumption and reduced scalability. To overcome this, the authors introduce “Nexels,” a novel primitive that decouples geometry from appearance while retaining the differentiable, point‑based optimization advantages of 3DGS.

Geometry is represented by surfels (surface elements) equipped with a generalized Gaussian kernel. Each surfel has a mean position, rotation, anisotropic scale, opacity, and a gamma parameter that controls the kernel’s shape. When gamma approaches one, the kernel behaves like a standard Gaussian; as gamma grows large, it converges to a sharp rectangular (quad) indicator. This flexibility allows Nexels to model both smooth surfaces and hard‑edged planar regions efficiently, using far fewer parameters than traditional splats.
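The effect of gamma can be illustrated with a minimal sketch. It assumes a kernel of the form $\exp(-\tfrac{1}{2}(|u|^{2\gamma} + |v|^{2\gamma}))$ evaluated in the surfel's scale-normalized tangent-plane coordinates. This form is chosen only because it reproduces the two limits described above; the paper's exact parameterization may differ, and `generalized_gaussian` is a hypothetical name.

```python
import math

def generalized_gaussian(u: float, v: float, gamma: float) -> float:
    """Assumed kernel: exp(-0.5 * (|u|^(2*gamma) + |v|^(2*gamma))).

    (u, v) are coordinates in the surfel's tangent plane, already divided
    by the surfel's per-axis scale. This form is an illustration, not
    necessarily the paper's exact definition.
    """
    exponent = abs(u) ** (2.0 * gamma) + abs(v) ** (2.0 * gamma)
    return math.exp(-0.5 * exponent)

# gamma = 1 gives the usual smooth Gaussian falloff along the u axis.
soft = [generalized_gaussian(x, 0.0, 1.0) for x in (0.0, 0.5, 1.0, 1.5)]

# A large gamma stays near 1 inside the unit square and drops to near 0
# outside it, approximating a hard-edged quad.
hard = [generalized_gaussian(x, 0.0, 50.0) for x in (0.0, 0.5, 1.5)]
```

With this assumed form, a single surfel with large gamma can cover a flat, sharply bounded region that would otherwise need many overlapping soft splats.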

Appearance is split into two modes. The non‑textured mode retains the original 2DGS spherical‑harmonic color representation for fast baseline rendering. The textured mode leverages a global neural field based on the Instant‑NGP architecture (multiresolution hash‑grid + tiny MLP). The neural field receives a 3‑D world‑space position and outputs view‑dependent radiance encoded as spherical‑harmonic coefficients. Crucially, the neural field is queried only for a small, per‑pixel subset of surfels that contribute most to the final color, dramatically limiting the number of expensive neural evaluations.
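To make the "radiance encoded as spherical-harmonic coefficients" step concrete, the sketch below decodes degree-1 coefficients into an RGB color for a given view direction. The coefficient list stands in for the neural field's output at one point. The sign convention and the +0.5 offset follow common 3DGS code and are assumptions here, as is the function name `sh_to_rgb`; the paper may use a different degree or convention.

```python
SH_C0 = 0.28209479177387814  # constant of the degree-0 basis function
SH_C1 = 0.4886025119029199   # magnitude of the degree-1 basis constants

def sh_to_rgb(coeffs, view_dir):
    """Decode degree-1 SH coefficients into an RGB color.

    coeffs:   4 RGB triples (1 DC term + 3 degree-1 terms), standing in
              for the neural field's output at one surface point.
    view_dir: unit vector (x, y, z) from the camera towards the point.
    The sign convention and +0.5 offset are assumed, not from the paper.
    """
    x, y, z = view_dir
    basis = (SH_C0, -SH_C1 * y, SH_C1 * z, -SH_C1 * x)
    rgb = []
    for ch in range(3):
        value = sum(b * c[ch] for b, c in zip(basis, coeffs)) + 0.5
        rgb.append(max(value, 0.0))  # clamp negative radiance to zero
    return tuple(rgb)

# Only the z-aligned degree-1 term is non-zero, so the decoded color
# changes when the view direction flips from +z to -z.
coeffs = [(0.0, 0.0, 0.0), (0.0, 0.0, 0.0), (1.0, 1.0, 1.0), (0.0, 0.0, 0.0)]
front = sh_to_rgb(coeffs, (0.0, 0.0, 1.0))
back = sh_to_rgb(coeffs, (0.0, 0.0, -1.0))
```

When only the DC term is non-zero, the decoded color is the same from every direction, which is the view-independent special case.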

Rendering proceeds in two passes. In the first “collection” pass, all surfels intersecting a pixel are rasterized, their alpha values and transmittance are accumulated, and an initial image is produced using only the non‑textured colors. Simultaneously, the algorithm maintains a per‑pixel buffer of the K surfels with the highest blending weights (alpha × transmittance). In the second “texturing” pass, the stored K surfels are queried through the neural field; hash‑grid features are attenuated by a focal‑length‑based factor to reduce aliasing, and the resulting colors replace the corresponding contributions in the initial image. Because K ≪ M (the total number of intersecting surfels), the neural field is evaluated a constant, small number of times per pixel, enabling real‑time performance (>30 FPS).
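The two passes can be sketched for a single pixel as follows. This is a simplified CPU illustration under stated assumptions, not the authors' rasterizer: `render_pixel` and `texture_fn` are hypothetical names, `texture_fn` stands in for the neural-field query, and the anti-aliasing attenuation is omitted.

```python
import heapq

def render_pixel(surfels, k, texture_fn):
    """Two-pass compositing for one pixel (illustrative sketch).

    surfels:    front-to-back list of (alpha, base_rgb, surfel_id).
    k:          number of surfels to texture for this pixel.
    texture_fn: surfel_id -> textured rgb (stand-in for the neural field).
    """
    # Pass 1 (collection): alpha-composite the non-textured colors and
    # record each surfel's blending weight, alpha * transmittance.
    transmittance = 1.0
    color = [0.0, 0.0, 0.0]
    weighted = []
    for alpha, base_rgb, surfel_id in surfels:
        weight = alpha * transmittance
        for ch in range(3):
            color[ch] += weight * base_rgb[ch]
        weighted.append((weight, surfel_id, base_rgb))
        transmittance *= 1.0 - alpha

    # Keep only the K surfels with the largest blending weights.
    top_k = heapq.nlargest(k, weighted, key=lambda item: item[0])

    # Pass 2 (texturing): swap each selected surfel's non-textured
    # contribution for its textured color, reusing the same weight.
    for weight, surfel_id, base_rgb in top_k:
        textured = texture_fn(surfel_id)
        for ch in range(3):
            color[ch] += weight * (textured[ch] - base_rgb[ch])
    return tuple(color)

# Three half-transparent surfels; only the heaviest one gets textured.
surfels = [
    (0.5, (1.0, 0.0, 0.0), 0),
    (0.5, (0.0, 1.0, 0.0), 1),
    (0.5, (0.0, 0.0, 1.0), 2),
]
pixel = render_pixel(surfels, k=1, texture_fn=lambda sid: (1.0, 1.0, 1.0))
```

This sketch stores every weight and selects the top K afterwards for clarity. A GPU rasterizer would more plausibly keep a fixed-size K-entry buffer per pixel during the first pass, as the description above suggests.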

Training jointly optimizes the surfel parameters (position, rotation, scale, opacity, gamma) and the neural field. The loss combines a photometric L2 term, a depth‑consistency term, and regularization, while adaptive density control adjusts the surfel distribution to match scene complexity. The result is a compact set of surfels that faithfully captures geometry, with the neural field supplying high‑frequency texture detail.

Empirical evaluation on outdoor (Mip‑NeRF360 “STUMP”) and indoor (a newly captured “GROCERY”) scenes demonstrates that Nexels achieve perceptual parity with 3DGS while using dramatically fewer primitives: 9.7× fewer on outdoor scenes (about 4.4 M vs. 44 M) and 31× fewer on indoor scenes (about 0.4 M vs. 12.4 M). Memory consumption drops by 5.5× (outdoor) and 3.7× (indoor). Rendering is roughly twice as fast as prior textured‑primitive methods (e.g., BBSplat, Textured Gaussians), reaching 60–110 FPS at the reported resolutions. LPIPS scores remain comparable (≈0.216), indicating that visual quality is preserved despite the compact representation.

In summary, Nexels present a compelling solution that (1) decouples geometry and appearance to achieve high parameter efficiency, (2) adopts a “render‑only‑where‑needed” neural texture strategy inspired by traditional mesh rasterization, and (3) maintains differentiable rendering pipelines suitable for end‑to‑end optimization. The work opens avenues for further research into dynamic scenes, advanced lighting models, and hardware‑accelerated implementations, positioning Nexels as a promising building block for real‑time, high‑quality novel view synthesis.
