Constrained Policy Optimization via Sampling-Based Weight-Space Projection

Safety-critical learning requires policies that improve performance without leaving the safe operating regime. We study constrained policy learning where model parameters must satisfy unknown, rollout-based safety constraints. We propose SCPO, a sampling-based weight-space projection method that enforces safety directly in parameter space without requiring gradient access to the constraint functions. Our approach constructs a local safe region by combining trajectory rollouts with smoothness bounds that relate parameter changes to shifts in safety metrics. Each gradient update is then projected via a convex SOCP, producing a safe first-order step. We establish a safe-by-induction guarantee: starting from any safe initialization, all intermediate policies remain safe given feasible projections. In constrained control settings with a stabilizing backup policy, our approach further ensures closed-loop stability and enables safe adaptation beyond the conservative backup. On regression with harmful supervision and a constrained double-integrator task with malicious expert, our approach consistently rejects unsafe updates, maintains feasibility throughout training, and achieves meaningful primal objective improvement.

💡 Research Summary

The paper introduces SCPO (Sampling‑based Constrained Policy Optimization), a novel method for safe learning in safety‑critical control and reinforcement‑learning settings. Unlike prior approaches that enforce safety at the action level, through expectation‑based constraints, or by using trust‑region methods in policy space, SCPO directly guarantees that the policy parameters themselves remain within a safe set throughout training.

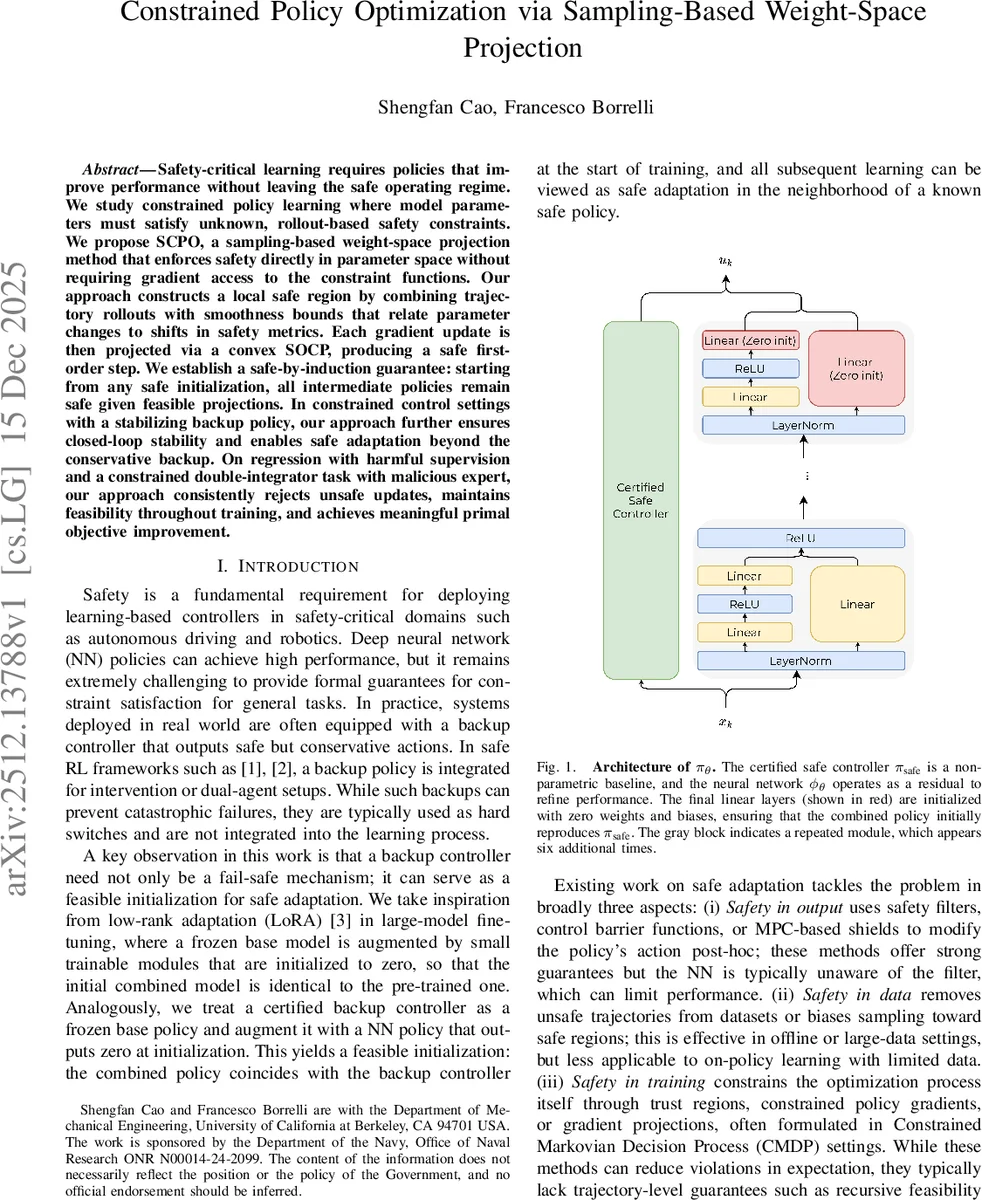

The authors start from a feasible “backup” controller π_safe, which is known to satisfy all safety constraints. A residual neural network φ_θ, initialized with zero weights, is added on top of π_safe so that the combined policy π_θ = π_safe + φ_θ coincides with the safe controller at the beginning of training. This provides a safe initialization point for any subsequent adaptation.

The learning problem is formulated as minimizing a task loss L(θ) subject to unknown rollout‑based safety metrics g(θ) ≤ 0. The exact form of g and its Jacobian are not required; only pointwise evaluations are assumed. Assuming each component g_i is locally L_i‑smooth, the authors derive a quadratic upper bound:

g_i(θ+Δθ) ≤ g_i(θ) + ∇g_i(θ)ᵀΔθ + (L_i/2)‖Δθ‖².

Using a first‑order Taylor expansion of the loss plus an ℓ₂ regularizer, the raw gradient step Δθ_raw = –η∇L(θ) can be interpreted as the minimizer of a quadratic distance to Δθ_raw.

Directly solving the resulting convex quadratic program in the full parameter space is infeasible for large neural networks because the Jacobian J_g(θ) is unavailable and the dimension d is huge. SCPO circumvents this by restricting Δθ to the linear span of a small set of recent candidate updates D =

Comments & Academic Discussion

Loading comments...

Leave a Comment