Image Diffusion Preview with Consistency Solver

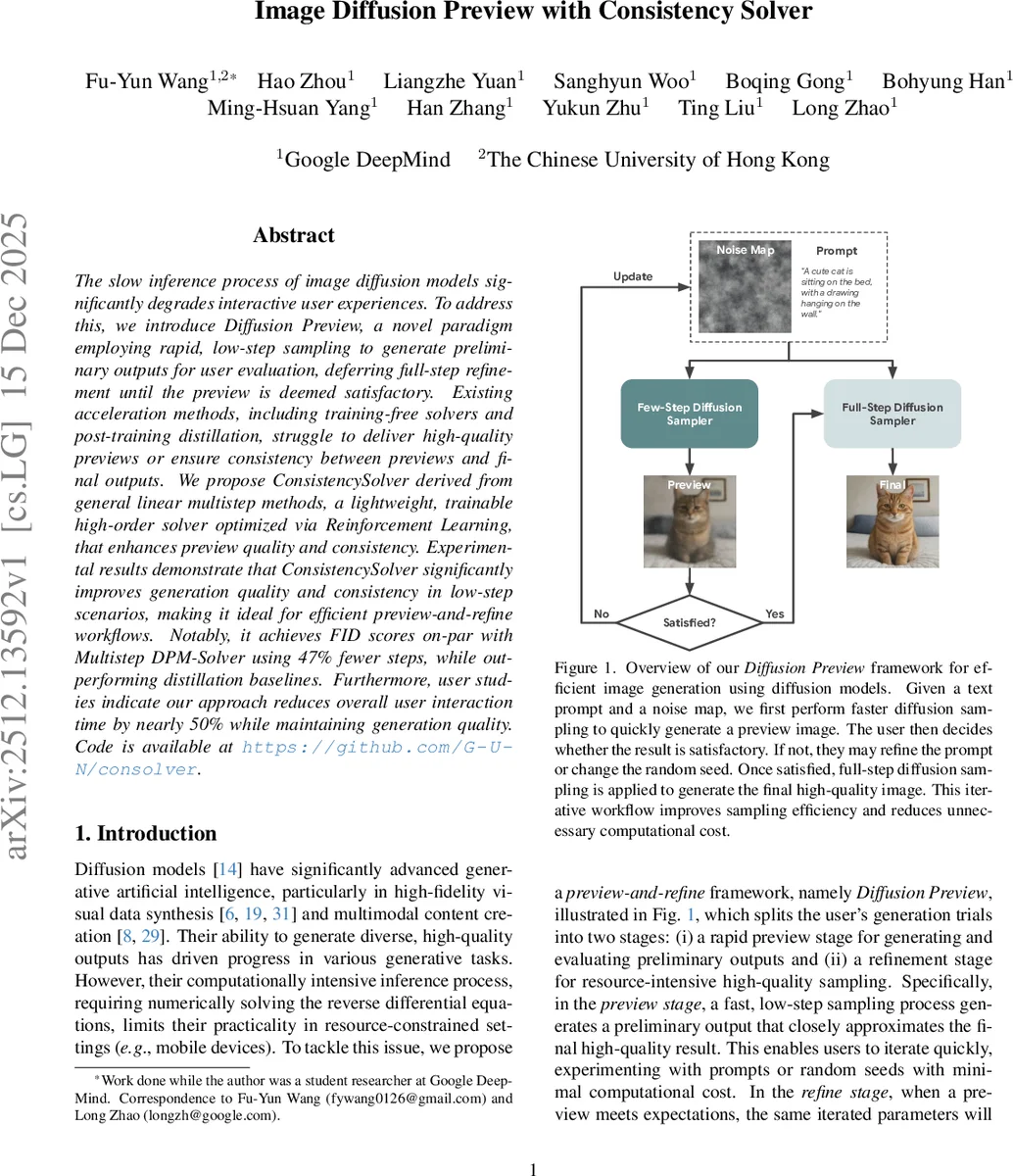

The slow inference process of image diffusion models significantly degrades interactive user experiences. To address this, we introduce Diffusion Preview, a novel paradigm employing rapid, low-step sampling to generate preliminary outputs for user evaluation, deferring full-step refinement until the preview is deemed satisfactory. Existing acceleration methods, including training-free solvers and post-training distillation, struggle to deliver high-quality previews or ensure consistency between previews and final outputs. We propose ConsistencySolver, a lightweight, trainable high-order solver derived from general linear multistep methods and optimized via reinforcement learning, that enhances preview quality and consistency. Experimental results demonstrate that ConsistencySolver significantly improves generation quality and consistency in low-step scenarios, making it well suited to efficient preview-and-refine workflows. Notably, it achieves FID scores on par with Multistep DPM-Solver using 47% fewer steps, while outperforming distillation baselines. Furthermore, user studies indicate that our approach reduces overall user interaction time by nearly 50% while maintaining generation quality. Code is available at https://github.com/G-U-N/consolver.

💡 Research Summary

The paper tackles the notorious latency of diffusion‑based image generation, which hampers interactive applications such as design prototyping or rapid content creation. The authors introduce a new workflow called Diffusion Preview, which splits generation into two stages: a fast, low‑step “preview” stage that produces a coarse image for quick user feedback, and a “refine” stage that runs a full‑step sampler to obtain the final high‑fidelity result once the preview is deemed satisfactory. This paradigm demands three properties: (1) Fidelity – previews must be visually and structurally close to the final output; (2) Efficiency – the preview must be generated with minimal computation; and (3) Consistency – the mapping from the initial random seed (and prompt) to the final image must be deterministic, so that refining a satisfactory preview yields the expected high‑quality image.
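The two-stage workflow can be sketched as a loop over candidate seeds. This is a minimal illustration, not the authors' code: `preview_then_refine`, `toy_sampler`, and the `accept` callback are hypothetical names, and `toy_sampler` is a deterministic stand-in (not a diffusion model) whose error simply shrinks as the step count grows. The point it shows is that the refine call reuses the accepted seed and prompt, so the final output is only as predictable as the sampler is deterministic and consistent across step counts.

```python
import random
from typing import Callable, Iterable, Optional, Tuple


def toy_sampler(prompt: float, seed: int, steps: int) -> float:
    """Hypothetical stand-in for a diffusion sampler (not a real model).

    The seed fixes the initial noise, so the output is a deterministic function
    of (prompt, seed, steps); the residual error term shrinks as steps grows.
    """
    noise = random.Random(seed).gauss(0.0, 1.0)
    return prompt + 0.5 * noise + noise / steps


def preview_then_refine(
    sample: Callable[[float, int, int], float],
    accept: Callable[[float], bool],
    prompt: float,
    seeds: Iterable[int],
    preview_steps: int = 4,
    full_steps: int = 50,
) -> Optional[Tuple[int, float, float]]:
    """Show cheap previews until one is accepted, then refine only that seed."""
    for seed in seeds:
        preview = sample(prompt, seed, preview_steps)  # fast, low-step stage
        if accept(preview):                            # user feedback
            final = sample(prompt, seed, full_steps)   # full-step stage, same seed
            return seed, preview, final
    return None  # no preview was accepted


if __name__ == "__main__":
    # Hypothetical user who only wants outputs above the prompt value.
    print(preview_then_refine(toy_sampler, lambda img: img > 1.0, prompt=1.0, seeds=range(10)))
```

In this sketch only the accepted seed pays for a full-step run; every rejected seed costs just one preview.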

Existing acceleration techniques fall short. Training‑free ODE solvers (e.g., DDIM, DPM‑Solver, UniPC) rely on fixed analytical approximations that often produce low‑quality previews and lack guarantees of consistency when the number of steps is drastically reduced. Post‑training distillation methods (ODE distillation, score distillation) embed the acceleration into the model weights, achieving good quality but at the cost of expensive retraining, loss of flexibility (e.g., variable step numbers), and disruption of the deterministic PF‑ODE trajectory, which harms preview‑refine consistency.
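The consistency problem of fixed low-step solvers already shows up on a one-dimensional toy. The sketch below is an illustration under stated assumptions, not an experiment from the paper: it assumes Gaussian "data" $\mathcal{N}(\mu, s^2)$ and the noise schedule $\sigma(t) = t$, for which the PF-ODE $dx/dt = t\,(x-\mu)/(s^2+t^2)$ has the closed-form endpoint $x(0) = \mu + (x_T - \mu)\,s/\sqrt{s^2+T^2}$. Plain Euler, a first-order fixed-coefficient solver used here as a simple stand-in for training-free methods, is run from the same seeded noise with 4 and with 1000 uniform steps.

```python
import math
import random

MU, S, T_MAX = 1.0, 0.5, 80.0  # toy data N(MU, S^2) and maximum noise level (assumptions)


def drift(x: float, t: float) -> float:
    """PF-ODE dx/dt for sigma(t) = t and Gaussian data N(MU, S^2)."""
    return t * (x - MU) / (S * S + t * t)


def initial_noise(seed: int) -> float:
    """x_T drawn from the exact marginal N(MU, S^2 + T_MAX^2); fixed by the seed."""
    z = random.Random(seed).gauss(0.0, 1.0)
    return MU + math.sqrt(S * S + T_MAX * T_MAX) * z


def euler(x: float, steps: int) -> float:
    """First-order solver with fixed coefficients on a uniform grid T_MAX -> 0."""
    ts = [T_MAX * (1.0 - i / steps) for i in range(steps + 1)]
    for t_cur, t_next in zip(ts[:-1], ts[1:]):
        x = x + (t_next - t_cur) * drift(x, t_cur)
    return x


def exact(x_T: float) -> float:
    """Closed-form PF-ODE endpoint: (x - MU) / sqrt(S^2 + t^2) is conserved."""
    return MU + (x_T - MU) * S / math.sqrt(S * S + T_MAX * T_MAX)


def relative_error(x: float, x_T: float) -> float:
    """Error measured relative to the exact sample's deviation from the data mean."""
    target = exact(x_T)
    return abs(x - target) / abs(target - MU)


x_T = initial_noise(seed=0)
preview, final = euler(x_T, 4), euler(x_T, 1000)
print(f"4-step preview error:  {relative_error(preview, x_T):.3f}")
print(f"1000-step final error: {relative_error(final, x_T):.3f}")
```

Because this toy ODE is linear, the relative error does not depend on the seed: the 4-step preview keeps only about 2.5% of the exact endpoint's deviation from the mean (it collapses toward the average sample), while the 1000-step run lands within 10% of it, so the preview is a poor guide to what refinement will produce.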

To overcome these limitations, the authors propose ConsistencySolver, a learnable high‑order multistep ODE solver designed specifically for the preview‑refine workflow. ConsistencySolver builds on the classical Linear Multistep Method (LMM) but replaces its fixed coefficients: a lightweight neural policy network (a small MLP) takes the current and next timesteps $(t_i, t_{i+1})$ as input and outputs a set of adaptive integration weights $\{w_j(t_i, t_{i+1})\}_{j=1}^{m}$. The update rule is:

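A minimal sketch of how such learned weights could drive a solver, under an explicit assumption: that they enter a standard explicit multistep update $x_{t_{i+1}} = x_{t_i} + (t_{i+1} - t_i) \sum_{j=1}^{m} w_j(t_i, t_{i+1})\, f(x_{t_{i-j+1}}, t_{i-j+1})$, with $f$ the ODE drift at the current and earlier steps. This form is not necessarily ConsistencySolver's exact parameterization. `make_mlp_policy` and `lmm_solve` are hypothetical names, the MLP is randomly initialized rather than trained (the reinforcement-learning optimization is not shown), and the constant two-step Adams-Bashforth weights $(3/2, -1/2)$ appear only to show that classical LMMs are the special case of fixed weights.

```python
import math
import random
from typing import Callable, List, Sequence

Policy = Callable[[float, float], List[float]]


def make_mlp_policy(m: int, hidden: int = 16, seed: int = 0) -> Policy:
    """Hypothetical tiny MLP mapping (t_i, t_{i+1}) to m integration weights.

    Parameters are random and untrained; the output bias is set to (1, 0, ..., 0)
    so the untrained policy starts near forward Euler (an illustrative assumption).
    """
    rng = random.Random(seed)
    w_in = [[rng.gauss(0.0, 0.5) for _ in range(2)] for _ in range(hidden)]
    b_in = [0.0] * hidden
    w_out = [[rng.gauss(0.0, 0.1) for _ in range(hidden)] for _ in range(m)]
    b_out = [1.0] + [0.0] * (m - 1)

    def policy(t_cur: float, t_next: float) -> List[float]:
        h = [math.tanh(w[0] * t_cur + w[1] * t_next + b) for w, b in zip(w_in, b_in)]
        return [sum(wk * hk for wk, hk in zip(w, h)) + b for w, b in zip(w_out, b_out)]

    return policy


def lmm_solve(f: Callable[[float, float], float], x: float,
              ts: Sequence[float], policy: Policy, m: int) -> float:
    """Explicit m-step update whose weights w = policy(t_i, t_{i+1}) change per step."""
    history: List[float] = []  # drift values, most recent first
    for t_cur, t_next in zip(ts[:-1], ts[1:]):
        history = [f(x, t_cur)] + history[: m - 1]
        # Early steps have fewer than m past values: pad with the oldest one,
        # so weights summing to 1 reduce to an Euler step at the start.
        padded = history + [history[-1]] * (m - len(history))
        w = policy(t_cur, t_next)
        x = x + (t_next - t_cur) * sum(wj * dj for wj, dj in zip(w, padded))
    return x


if __name__ == "__main__":
    def f(x: float, t: float) -> float:
        return t  # toy ODE dx/dt = t with exact x(1) = 0.5

    ts = [0.0, 0.5, 1.0]
    euler = lmm_solve(f, 0.0, ts, lambda a, b: [1.0, 0.0], m=2)  # 0.25
    ab2 = lmm_solve(f, 0.0, ts, lambda a, b: [1.5, -0.5], m=2)   # 0.375
    learned = lmm_solve(f, 0.0, ts, make_mlp_policy(m=2), m=2)
    print(euler, ab2, learned)
```

In this sketch, fixing the weights recovers a classical solver (Euler or Adams-Bashforth), while letting a small network choose them for each step pair $(t_i, t_{i+1})$ is what makes the solver trainable without changing the diffusion model's own weights.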
