3D Human-Human Interaction Anomaly Detection

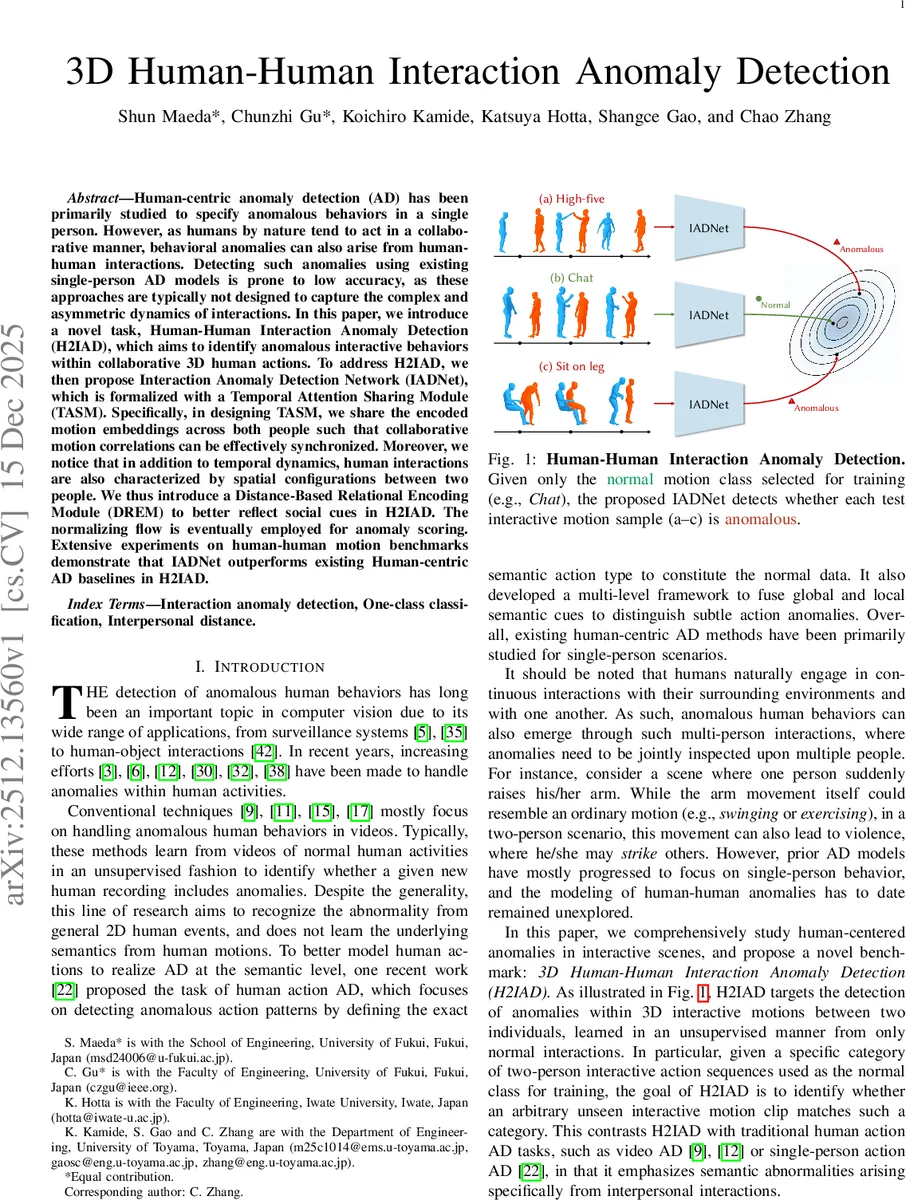

Human-centric anomaly detection (AD) has primarily been studied as the problem of identifying anomalous behaviors of a single person. However, because humans naturally tend to act collaboratively, behavioral anomalies can also arise from human-human interactions. Existing single-person AD models tend to detect such anomalies with low accuracy, as they are typically not designed to capture the complex and asymmetric dynamics of interactions. In this paper, we introduce a novel task, Human-Human Interaction Anomaly Detection (H2IAD), which aims to identify anomalous interactive behaviors within collaborative 3D human actions. To address H2IAD, we propose the Interaction Anomaly Detection Network (IADNet), which is built around a Temporal Attention Sharing Module (TASM). Specifically, TASM shares the encoded motion embeddings across both people so that collaborative motion correlations can be effectively synchronized. Moreover, we observe that, in addition to temporal dynamics, human interactions are also characterized by the spatial configuration between the two people. We thus introduce a Distance-Based Relational Encoding Module (DREM) to better reflect social cues in H2IAD. A normalizing flow is finally employed for anomaly scoring. Extensive experiments on human-human motion benchmarks demonstrate that IADNet outperforms existing human-centric AD baselines in H2IAD.

💡 Research Summary

The paper introduces a novel problem, Human‑Human Interaction Anomaly Detection (H2IAD), which aims to identify abnormal collaborative behaviors between two people using only normal interaction data for training. To address this task, the authors propose the Interaction Anomaly Detection Network (IADNet), which consists of three main components.

First, the Temporal Attention Sharing Module (TASM) processes the motion sequences of both participants with a pair of identical Transformer encoders that share parameters. This design enforces synchronized temporal representations and captures asymmetric dependencies that independent encoders would miss. Instead of fixed sinusoidal positional encodings, TASM employs a learnable positional embedding matrix shared across the two streams, allowing the model to adaptively encode interaction‑specific timing.
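The parameter-sharing idea can be illustrated with a minimal NumPy sketch. This is not the authors' implementation: it assumes a single-head self-attention layer, and the names `params`, `encode`, `T`, and `D` are hypothetical. The only point it shows is that one set of weights and one positional table serve both people's streams.

```python
import numpy as np

rng = np.random.default_rng(0)
T, D = 16, 32  # frames per clip and embedding width (illustrative values)

# A single parameter set reused for both people (hypothetical names).
params = {
    "pos": rng.normal(scale=0.02, size=(T, D)),     # positional table; learned in practice
    "Wq": rng.normal(scale=D ** -0.5, size=(D, D)),
    "Wk": rng.normal(scale=D ** -0.5, size=(D, D)),
    "Wv": rng.normal(scale=D ** -0.5, size=(D, D)),
}

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def encode(motion, p):
    """Single-head temporal self-attention over one person's (T, D) sequence."""
    h = motion + p["pos"]                      # add the shared positional table
    q, k, v = h @ p["Wq"], h @ p["Wk"], h @ p["Wv"]
    attn = softmax(q @ k.T / np.sqrt(D))       # (T, T) frame-to-frame weights
    return h + attn @ v                        # residual connection

motion_a = rng.normal(size=(T, D))  # stand-in features for person A
motion_b = rng.normal(size=(T, D))  # stand-in features for person B

# Both streams pass through the same function with the same parameters.
emb_a = encode(motion_a, params)
emb_b = encode(motion_b, params)
```

Because `encode` receives the same `params` for both calls, any gradient update in a trained version would affect both streams identically, which is what ties the two temporal representations together.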

Second, the Distance‑Based Relational Encoding Module (DREM) computes frame‑wise pairwise joint distances between the two subjects, forming a dynamic distance map that encodes social‑spatial cues (e.g., proximity, reach). These relational embeddings are injected into the Motion Cross‑Attention stage of TASM, enabling the network to fuse temporal dynamics with spatial context.
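A frame-wise pairwise joint distance map can be computed as in the sketch below. The array layout `(T, J, 3)` and the function name `pairwise_joint_distances` are assumptions for illustration; the summary does not specify how DREM embeds the map or injects it into cross-attention, so that step is left out.

```python
import numpy as np

def pairwise_joint_distances(joints_a, joints_b):
    """Euclidean distance between every joint of A and every joint of B, per frame.

    joints_a, joints_b: arrays of shape (T, J, 3) holding 3D joint positions.
    Returns an array of shape (T, J, J).
    """
    # Broadcast to (T, J, J, 3): axis 1 indexes A's joints, axis 2 indexes B's joints.
    diff = joints_a[:, :, None, :] - joints_b[:, None, :, :]
    return np.linalg.norm(diff, axis=-1)

T, J = 4, 3  # toy sizes: 4 frames, 3 joints per person
joints_a = np.zeros((T, J, 3))   # person A: all joints at the origin
joints_b = np.zeros((T, J, 3))   # person B: all joints at (3, 4, 0)
joints_b[..., 0] = 3.0
joints_b[..., 1] = 4.0

dist_map = pairwise_joint_distances(joints_a, joints_b)
```

In this toy setup every entry of `dist_map` is 5.0, the length of the (3, 4, 0) offset. With real motion data the map changes frame by frame, which is what lets it encode cues such as approach and reach.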

Third, an Anomaly Scoring Module based on Normalizing Flow (NF) learns the likelihood distribution of normal interaction embeddings. During inference, clips that receive low likelihood under the learned distribution are flagged as anomalous; the anomaly score is derived directly from this likelihood, with no reconstruction or prediction error involved.
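Likelihood-based scoring can be sketched with the simplest possible flow: one diagonal affine layer over a standard normal base, fitted in closed form. This is a deliberate simplification and an assumption of this example; the paper's flow architecture is not described here, and the class name `AffineFlow` and the use of negative log-likelihood as the score are illustrative choices.

```python
import numpy as np

class AffineFlow:
    """One-layer flow z = (x - mu) / sigma with a standard normal base density."""

    def fit(self, x):
        # For this single affine layer, the maximum-likelihood parameters are
        # the per-dimension sample mean and (biased) standard deviation.
        self.mu = x.mean(axis=0)
        self.sigma = x.std(axis=0) + 1e-6
        return self

    def log_prob(self, x):
        z = (x - self.mu) / self.sigma
        log_base = -0.5 * (z ** 2 + np.log(2 * np.pi)).sum(axis=-1)
        log_det = -np.log(self.sigma).sum()  # log |det dz/dx| (change of variables)
        return log_base + log_det

rng = np.random.default_rng(0)
normal_train = rng.normal(loc=0.0, scale=1.0, size=(500, 8))  # stand-in normal embeddings
flow = AffineFlow().fit(normal_train)

normal_test = rng.normal(loc=0.0, size=(5, 8))
anomalous_test = rng.normal(loc=6.0, size=(5, 8))  # shifted far from the training data

# Negative log-likelihood as the anomaly score: higher means more anomalous.
score_normal = -flow.log_prob(normal_test)
score_anomalous = -flow.log_prob(anomalous_test)
```

A practical flow would stack several learned invertible layers and train them by gradient descent, but the scoring rule stays the same: evaluate the log-density of a test embedding and treat low values as anomalous.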

Extensive experiments on large‑scale 3D interaction datasets (derived from Human3.6M and CMU Mocap) show that IADNet outperforms existing video‑based, VAE, diffusion, and GCN‑based human‑centric AD baselines, achieving 8–12 percentage‑point gains in AUC/AP, especially on interactions where distance changes are critical (e.g., slap, push). Ablation studies confirm the individual contributions of parameter sharing, shared positional embeddings, and distance‑based relational encoding.
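For readers unfamiliar with the AUC metric quoted above, the following sketch computes it from anomaly scores using the pairwise-ranking definition. The function name `roc_auc` and the toy scores are illustrative and unrelated to the paper's data.

```python
import numpy as np

def roc_auc(scores, labels):
    """AUC as the probability that a random anomalous sample outscores a random normal one.

    scores: anomaly scores (higher means more anomalous).
    labels: 1 for anomalous, 0 for normal.
    """
    scores = np.asarray(scores, dtype=float)
    labels = np.asarray(labels, dtype=bool)
    pos, neg = scores[labels], scores[~labels]
    greater = (pos[:, None] > neg[None, :]).sum()   # correctly ordered pairs
    ties = (pos[:, None] == neg[None, :]).sum()     # tied pairs count as half
    return (greater + 0.5 * ties) / (len(pos) * len(neg))

scores = [0.1, 0.4, 0.35, 0.8]
labels = [0, 0, 1, 1]
auc = roc_auc(scores, labels)  # 3 of the 4 anomalous/normal pairs are ordered correctly
```

Under this definition, a gain of 8 to 12 percentage points means that many more anomalous/normal pairs out of every hundred are ranked in the correct order.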

Overall, IADNet provides the first dedicated architecture for H2IAD, demonstrating that jointly modeling synchronized temporal attention and explicit spatial relationships is essential for reliable detection of abnormal human‑human interactions, with potential impact on surveillance, collaborative robotics, and safety‑critical autonomous systems.
