CogniEdit: Dense Gradient Flow Optimization for Fine-Grained Image Editing

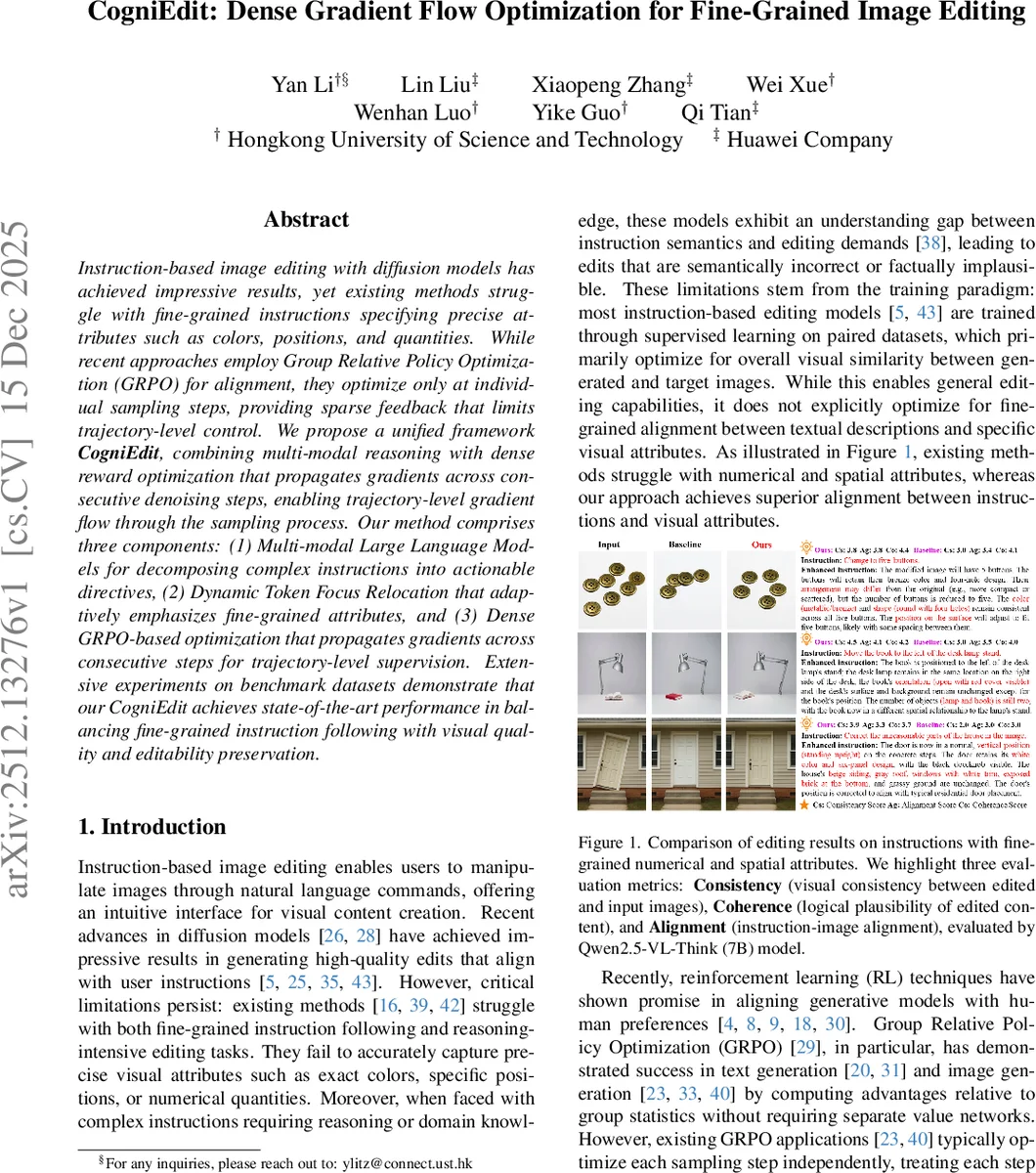

Instruction-based image editing with diffusion models has achieved impressive results, yet existing methods struggle with fine-grained instructions specifying precise attributes such as colors, positions, and quantities. While recent approaches employ Group Relative Policy Optimization (GRPO) for alignment, they optimize only at individual sampling steps, providing sparse feedback that limits trajectory-level control. We propose a unified framework CogniEdit, combining multi-modal reasoning with dense reward optimization that propagates gradients across consecutive denoising steps, enabling trajectory-level gradient flow through the sampling process. Our method comprises three components: (1) Multi-modal Large Language Models for decomposing complex instructions into actionable directives, (2) Dynamic Token Focus Relocation that adaptively emphasizes fine-grained attributes, and (3) Dense GRPO-based optimization that propagates gradients across consecutive steps for trajectory-level supervision. Extensive experiments on benchmark datasets demonstrate that our CogniEdit achieves state-of-the-art performance in balancing fine-grained instruction following with visual quality and editability preservation

💡 Research Summary

CogniEdit tackles the persistent challenge of following fine‑grained textual instructions—such as exact colors, precise positions, and specific quantities—in diffusion‑based image editing. Existing approaches either rely on supervised learning that optimizes overall visual similarity, or apply Group Relative Policy Optimization (GRPO) only at individual denoising steps, which provides sparse feedback and prevents trajectory‑level guidance.

The proposed framework integrates three complementary components. First, a Multi‑modal Large Language Model (MLLM) parses complex user commands into structured sub‑directives (action, target, attribute), ensuring semantic correctness before generation. Second, Dynamic Token Focus Relocation predicts, for each transformer layer, which instruction tokens should receive heightened attention. Learnable soft tokens are injected at the predicted positions, allowing early layers to focus on high‑level intent while deeper layers attend to fine‑grained attributes such as “purple”, “five”, or “on the left”. Third, Dense GRPO extends the standard GRPO objective by (a) injecting controlled stochasticity into the deterministic ODE diffusion process via Euler‑Maruyama discretization, thereby generating diverse samples for advantage computation, and (b) computing advantages at the batch level to reduce variance. Crucially, the method evaluates rewards over a randomly chosen window of k consecutive denoising steps and uses stop‑gradient operations to propagate gradients backward through the sampling trajectory, delivering dense supervision throughout the editing process.

Experiments on benchmark datasets (including COCO‑Edit and a custom fine‑grained instruction set) demonstrate that CogniEdit outperforms state‑of‑the‑art models such as InstructPix2Pix, BrushEdit, and Qwen‑Image across three fine‑grained metrics: color accuracy, positional precision, and quantity matching. Additionally, the model achieves higher overall consistency, coherence, and alignment scores as measured by the Qwen2.5‑VL‑Think (7B) evaluator. Ablation studies confirm that both Dynamic Token Focus Relocation and Dense GRPO independently contribute to performance gains, with their combination yielding the strongest results.

Despite a modest 20 % increase in computational overhead relative to standard GRPO, training remains stable thanks to KL‑divergence regularization and clipping. Limitations include the reliance on large MLLMs for instruction parsing and the sensitivity of stochastic noise injection to editing stability, which the authors plan to address through lightweight language models and adaptive noise schedules in future work.

In summary, CogniEdit presents a unified approach that couples multi‑modal reasoning with trajectory‑aware dense reinforcement learning, enabling precise, fine‑grained instruction following while preserving source‑image consistency—a significant step forward for instruction‑driven image editing.

Comments & Academic Discussion

Loading comments...

Leave a Comment