LegalRikai: Open Benchmark -- Benchmark for Complex Japanese Corporate Legal Tasks

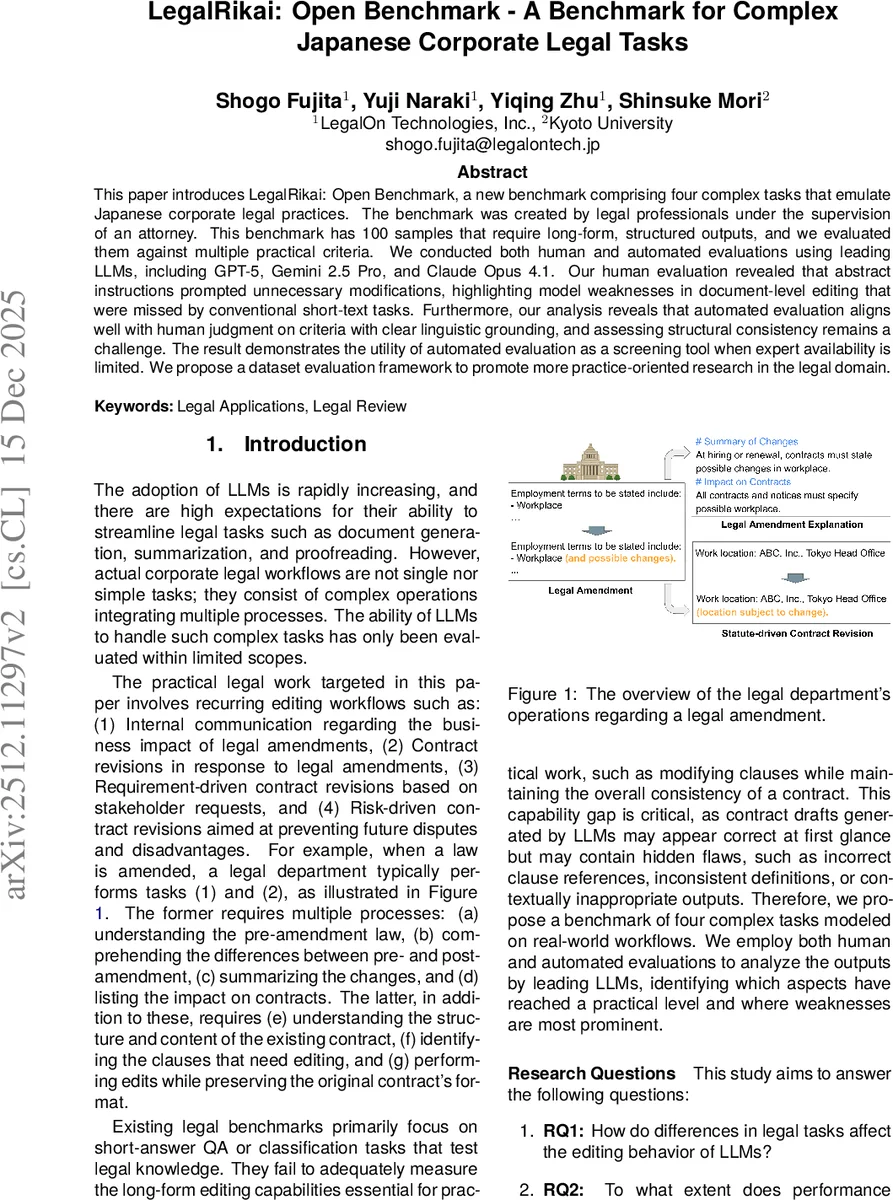

This paper introduces LegalRikai: Open Benchmark, a new benchmark comprising four complex tasks that emulate Japanese corporate legal practices. The benchmark was created by legal professionals under the supervision of an attorney. This benchmark has 100 samples that require long-form, structured outputs, and we evaluated them against multiple practical criteria. We conducted both human and automated evaluations using leading LLMs, including GPT-5, Gemini 2.5 Pro, and Claude Opus 4.1. Our human evaluation revealed that abstract instructions prompted unnecessary modifications, highlighting model weaknesses in document-level editing that were missed by conventional short-text tasks. Furthermore, our analysis reveals that automated evaluation aligns well with human judgment on criteria with clear linguistic grounding, and assessing structural consistency remains a challenge. The result demonstrates the utility of automated evaluation as a screening tool when expert availability is limited. We propose a dataset evaluation framework to promote more practice-oriented research in the legal domain.

💡 Research Summary

This paper introduces “LegalRikai: Open Benchmark,” a novel benchmark designed to evaluate Large Language Models (LLMs) on complex tasks that emulate real-world Japanese corporate legal practice. Moving beyond conventional short-answer legal QA tests, this benchmark focuses on long-form, structured output generation and document-level editing, which are critical for practical legal work. The benchmark comprises four distinct tasks: Legal Amendment Explanation (AmendExp), Statute-Driven Contract Revision (StatRev), Requirement-Driven Contract Revision (ReqRev), and Risk-Driven Contract Revision (RiskRev). These tasks simulate common workflows such as analyzing the impact of new laws, updating contracts to comply with statutes, incorporating specific client requests, and mitigating legal risks. The dataset consists of 100 samples meticulously created by legal professionals under attorney supervision, ensuring practical relevance and high quality.

The study conducted comprehensive evaluations using three leading LLMs: GPT-5, Gemini 2.5 Pro, and Claude Opus 4.1. Both human evaluation (by legal experts) and automated evaluation (using LLMs as judges) were performed based on task-specific metrics like accuracy, coverage, relevance, structural consistency, and terminology appropriateness.

Key findings from the experiments reveal significant insights. First, human evaluation uncovered that models tend to make unnecessary or excessive modifications when given abstract, high-level instructions (as in RiskRev), highlighting a weakness in holistic document editing and contextual judgment that is not captured by simpler tasks. Second, the comparison between human and automated evaluations showed a promising alignment for criteria with clear linguistic grounding, such as “Accuracy of Amendments.” This suggests that automated evaluation can serve as a viable and efficient screening tool when expert human evaluators are scarce. However, assessing more subjective or holistic aspects like “Structural Consistency” remains a significant challenge for automated methods, indicating an area for future improvement in evaluation techniques.

In terms of model performance, Gemini 2.5 Pro demonstrated the strongest overall results, excelling particularly in the accuracy of amendment summaries and in following revision instructions for statute-driven edits. GPT-5 showed strength in providing comprehensive coverage of information but was weaker in judging relevance, sometimes including extraneous content. The performance varied across different task types, underscoring that the suitability of an LLM for legal work depends heavily on the specific nature of the task.

The paper concludes by proposing a dataset evaluation framework aimed at fostering more practice-oriented research in the legal AI domain. The LegalRikai benchmark and the accompanying analysis provide valuable resources and insights for researchers and practitioners seeking to understand and advance the capabilities of LLMs in handling sophisticated, real-world legal tasks.

Comments & Academic Discussion

Loading comments...

Leave a Comment