Light-X: Generative 4D Video Rendering with Camera and Illumination Control

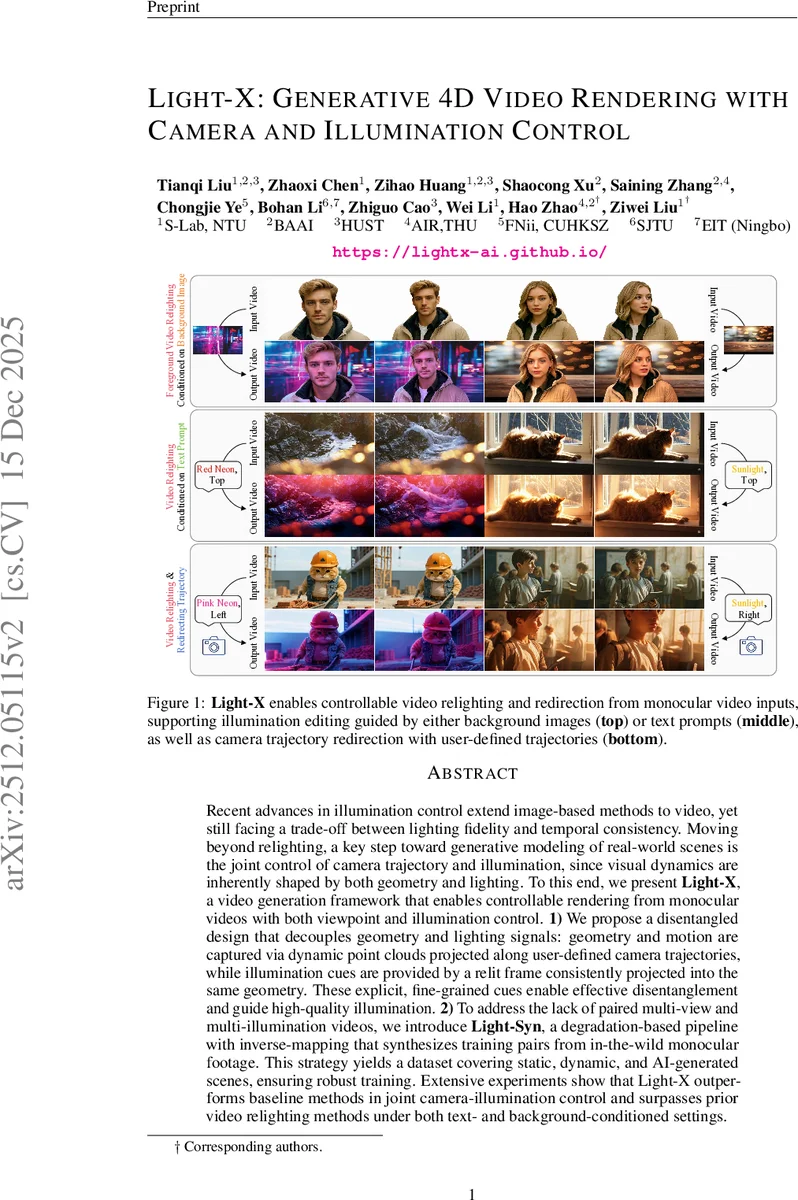

Recent advances in illumination control extend image-based methods to video, yet still facing a trade-off between lighting fidelity and temporal consistency. Moving beyond relighting, a key step toward generative modeling of real-world scenes is the joint control of camera trajectory and illumination, since visual dynamics are inherently shaped by both geometry and lighting. To this end, we present Light-X, a video generation framework that enables controllable rendering from monocular videos with both viewpoint and illumination control. 1) We propose a disentangled design that decouples geometry and lighting signals: geometry and motion are captured via dynamic point clouds projected along user-defined camera trajectories, while illumination cues are provided by a relit frame consistently projected into the same geometry. These explicit, fine-grained cues enable effective disentanglement and guide high-quality illumination. 2) To address the lack of paired multi-view and multi-illumination videos, we introduce Light-Syn, a degradation-based pipeline with inverse-mapping that synthesizes training pairs from in-the-wild monocular footage. This strategy yields a dataset covering static, dynamic, and AI-generated scenes, ensuring robust training. Extensive experiments show that Light-X outperforms baseline methods in joint camera-illumination control and surpasses prior video relighting methods under both text- and background-conditioned settings.

💡 Research Summary

Light‑X is a novel video generation framework that enables simultaneous control of camera trajectory and illumination from a single‑view input video. The core idea is to explicitly decouple geometry/motion from lighting by constructing two parallel point‑cloud pipelines. First, depth maps are estimated for each frame of the source video and back‑projected to a dynamic point cloud. This cloud is re‑projected along a user‑specified camera trajectory, producing geometry‑aligned renders and visibility masks that serve as strong geometric priors. Second, a single frame is relit using the IC‑Light model conditioned on a textual prompt, an HDR environment map, or a reference image. The relit frame is lifted into a “relit point cloud” using the same depth maps, ensuring perfect geometric alignment with the original cloud. Projecting this relit cloud along the same trajectory yields relit renders and corresponding masks that indicate where lighting information is present.

These six cues (original renders, original masks, relit renders, relit masks, plus optional illumination tokens) are encoded by a VAE, concatenated with diffusion noise, and tokenized into vision tokens. Textual tokens derived from video captions are fused with the vision tokens and fed into a diffusion transformer (DiT) backbone. To preserve illumination strength across frames, Light‑X introduces a Light‑DiT layer: a Q‑Former extracts global illumination tokens from the relit frame, which are injected into the vision tokens via cross‑attention. The architecture also retains Ref‑DiT and standard DiT blocks to aggregate text‑vision information and enforce 4‑D temporal consistency.

Training data for such a model are scarce because paired multi‑view, multi‑illumination videos are virtually nonexistent. Light‑X addresses this with Light‑Syn, a degradation‑based data synthesis pipeline. Real‑world monocular videos are artificially degraded (color shifts, noise, masking) to create low‑quality “source” videos, while the original high‑quality footage serves as supervision. Depth and camera parameters from the original are reused to generate both original and relit point‑cloud renders for the degraded video, yielding paired training samples that cover static scenes, dynamic motions, and AI‑generated content.

Extensive experiments demonstrate that Light‑X outperforms state‑of‑the‑art video relighting methods (e.g., RelightVideo, Light‑Video) and camera‑controlled video generators in joint camera‑illumination tasks. Quantitatively, Light‑X achieves higher PSNR/SSIM and lower LPIPS, while reducing temporal flicker as measured by flow‑LPIPS. Qualitatively, user studies show a strong preference for the realism and consistency of Light‑X outputs. Ablation studies confirm the importance of the disentangled cues, the Light‑DiT illumination module, and the Light‑Syn data pipeline.

Limitations include dependence on accurate depth estimation—errors lead to artifacts in fast motion or reflective surfaces—and the current support for only single‑light illumination conditions. Future work may integrate more robust depth networks, multi‑light modeling, and efficient rendering to enable real‑time AR/VR applications. Overall, Light‑X provides a unified solution for controllable 4‑D video synthesis, opening new possibilities for immersive content creation, virtual cinematography, and interactive media.

Comments & Academic Discussion

Loading comments...

Leave a Comment