CapeNext: Rethinking and Refining Dynamic Support Information for Category-Agnostic Pose Estimation

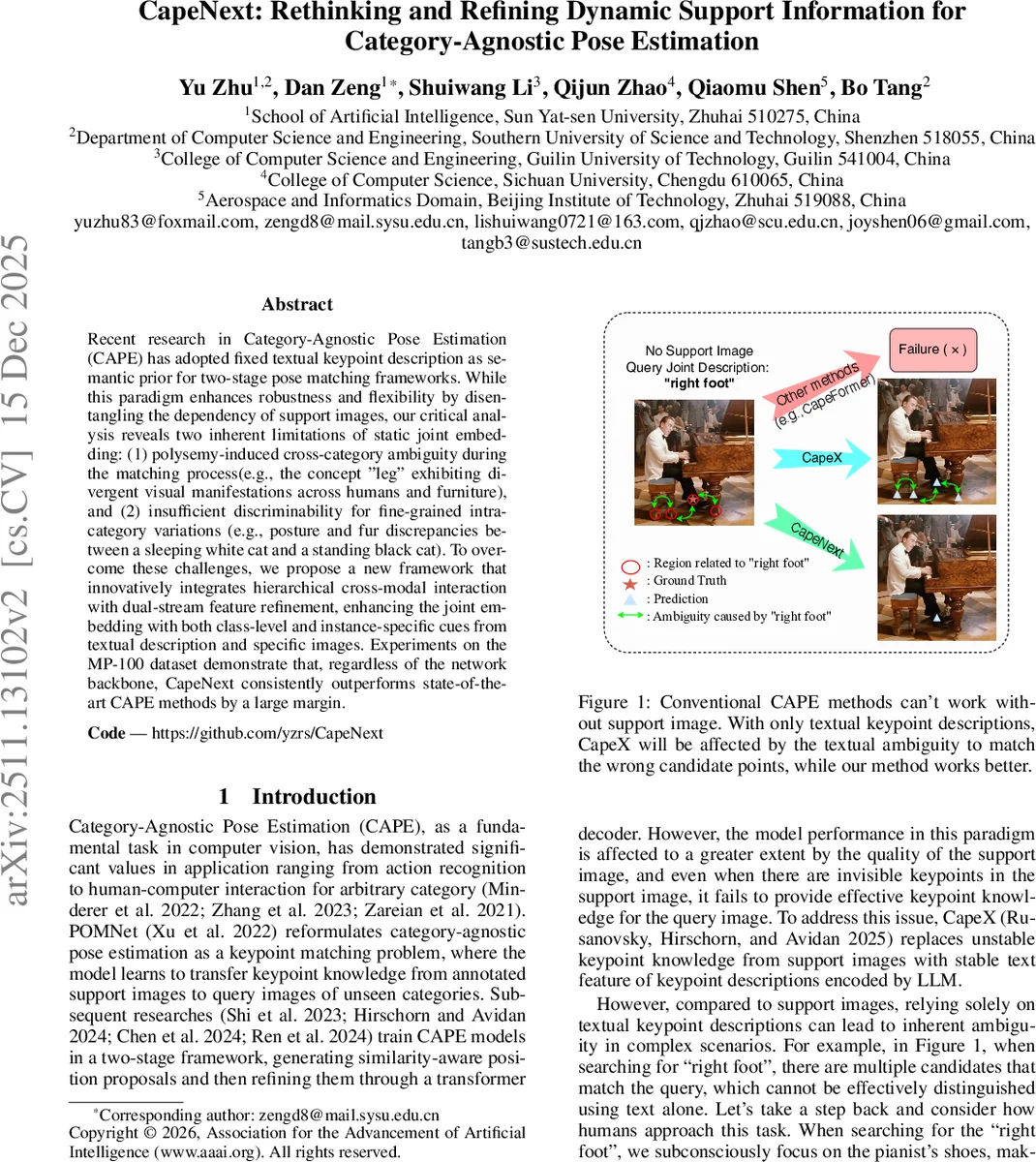

Recent research in Category-Agnostic Pose Estimation (CAPE) has adopted fixed textual keypoint description as semantic prior for two-stage pose matching frameworks. While this paradigm enhances robustness and flexibility by disentangling the dependency of support images, our critical analysis reveals two inherent limitations of static joint embedding: (1) polysemy-induced cross-category ambiguity during the matching process(e.g., the concept “leg” exhibiting divergent visual manifestations across humans and furniture), and (2) insufficient discriminability for fine-grained intra-category variations (e.g., posture and fur discrepancies between a sleeping white cat and a standing black cat). To overcome these challenges, we propose a new framework that innovatively integrates hierarchical cross-modal interaction with dual-stream feature refinement, enhancing the joint embedding with both class-level and instance-specific cues from textual description and specific images. Experiments on the MP-100 dataset demonstrate that, regardless of the network backbone, CapeNext consistently outperforms state-of-the-art CAPE methods by a large margin.

💡 Research Summary

CapeNext tackles two fundamental shortcomings of recent category‑agnostic pose estimation (CAPE) methods that rely exclusively on fixed textual keypoint descriptions as semantic priors. First, static text embeddings suffer from polysemy: identical words such as “leg” can denote anatomically very different parts across categories (human leg vs. chair leg), causing cross‑category ambiguity during the matching stage. Second, a single textual description cannot capture fine‑grained intra‑category variations; for example, the phrase “right front paw” does not distinguish a standing black cat from a curled white cat, leading to reduced discriminability.

To address these issues, the authors propose a novel framework that enriches the joint embedding with both class‑level and instance‑specific cues. The key idea is to treat the query image itself as an additional “support” source, thereby injecting visual details that are missing from pure text. Alongside the query image, an easily obtainable textual class description (e.g., “cat”, “chair”) is used to filter out irrelevant regions and mitigate polysemy‑induced confusion. Both modalities are encoded with a pretrained CLIP model, yielding an image embedding (e_img), a class embedding (e_cls), and the original keypoint text embedding (e_joint).

Two dedicated modules fuse these representations:

-

Hierarchical Cross‑Modal Interaction (HCMI) – e_img and e_cls are concatenated and passed through a self‑attention layer followed by an MLP. This operation lets the image embedding absorb high‑level semantic guidance from the class embedding, while the class embedding receives instance‑specific visual details from the image. The output is a refined image embedding (e′_img) and a refined class embedding (e′_cls) with reduced modality gap.

-

Dual‑Stream Feature Refinement (DSFR) – The original e_joint is cross‑attended separately with e′_img and e′_cls. The resulting attention features are summed as a residual to e_joint, producing an enhanced joint embedding (e′_joint) that now carries both global class semantics and fine‑grained visual cues.

The enhanced joint embedding is fed into the same transformer encoder‑decoder and graph‑transformer decoder used in prior CAPE works. Graph reasoning propagates structural relationships among keypoints, and parallel CNN heads predict similarity heatmaps and offset maps. Peaks in the heatmaps, corrected by the offsets, yield the final keypoint coordinates.

Experiments on the MP‑100 benchmark (a large‑scale, category‑agnostic pose dataset) demonstrate that CapeNext consistently outperforms state‑of‑the‑art methods such as CapeX, CapeFormer, X‑Pose, and SDPNet across multiple backbones (ResNet‑50, Swin‑Transformer, etc.). Average Precision (AP) improvements range from 6 % to 9 % absolute, with especially large gains on categories prone to polysemy (e.g., furniture vs. animals) and on categories with high intra‑class visual diversity (e.g., cats of different colors and poses).

Ablation studies confirm that both HCMI and DSFR contribute independently to performance gains; removing either module degrades AP. Moreover, using only the image embedding or only the class text (instead of both) also leads to noticeable drops, underscoring the necessity of the hierarchical, dual‑stream fusion.

In summary, CapeNext introduces a paradigm shift for CAPE: rather than relying solely on static textual priors, it dynamically incorporates the query image and class description to produce a context‑aware joint embedding. The hierarchical cross‑modal interaction bridges the semantic‑visual gap, while dual‑stream refinement injects instance‑specific details without overwhelming the model with noise. This combination yields a more robust, discriminative representation that resolves polysemy‑induced ambiguity and captures fine‑grained intra‑category variations. The paper opens avenues for future work such as multi‑support fusion, richer language prompts from large language models, and adaptive prompt engineering to further enhance category‑agnostic pose estimation.

Comments & Academic Discussion

Loading comments...

Leave a Comment