HTMformer: Hybrid Time and Multivariate Transformer for Time Series Forecasting

Transformer-based methods have achieved impressive results in time series forecasting. However, existing Transformers still exhibit limitations in sequence modeling as they tend to overemphasize temporal dependencies. This incurs additional computational overhead without yielding corresponding performance gains. We find that the performance of Transformers is highly dependent on the embedding method used to learn effective representations. To address this issue, we extract multivariate features to augment the effective information captured in the embedding layer, yielding multidimensional embeddings that convey richer and more meaningful sequence representations. These representations enable Transformer-based forecasters to better understand the series. Specifically, we introduce Hybrid Temporal and Multivariate Embeddings (HTME). The HTME extractor integrates a lightweight temporal feature extraction module with a carefully designed multivariate feature extraction module to provide complementary features, thereby achieving a balance between model complexity and performance. By combining HTME with the Transformer architecture, we present HTMformer, leveraging the enhanced feature extraction capability of the HTME extractor to build a lightweight forecaster. Experiments conducted on eight real-world datasets demonstrate that our approach outperforms existing baselines in both accuracy and efficiency.

💡 Research Summary

The paper “HTMformer: Hybrid Time and Multivariate Transformer for Time Series Forecasting” addresses key limitations in current Transformer-based approaches for multivariate long-term time series forecasting. The authors identify that existing models tend to over-prioritize temporal dependency modeling, incurring significant computational cost without proportional performance gains, or they neglect multivariate correlations altogether, limiting their ability to capture complex patterns.

To solve this, the paper proposes a novel embedding strategy called Hybrid Temporal and Multivariate Embedding (HTME). The core innovation of HTME lies in its parallel and independent feature extraction architecture. It consists of two separate modules: a Temporal Feature Extractor and a Multivariate Feature Extractor. The temporal module uses patching, multi-scale convolutions, and linear layers to hierarchically capture both short-term and long-term temporal dependencies. The multivariate module processes the same patched input across the variable dimension, employing linear layers, convolutions, and a GRU network to extract correlations between different variables.

A critical design principle is that these two modules operate in disjoint feature spaces. This containment strategy ensures that any noise introduced during multivariate feature extraction is confined to its own space and does not pollute the cleaner temporal feature space. The extracted multivariate features are then projected into the temporal feature space and fused with the original temporal features via a learnable weighting parameter (α). This process yields semantically rich, multidimensional embeddings that allow subsequent model components to focus on a single dimension (e.g., time) while still being informed by multivariate relationships, all with minimal added computational overhead.

The authors integrate this HTME strategy into a Transformer-based framework to create the HTMformer model. HTMformer adopts the inverted input design from iTransformer, which allows the self-attention mechanism to directly model global correlations across variables. The overall pipeline involves: 1) applying RevIN normalization, 2) processing the input through the HTME extractor to obtain enhanced embeddings, 3) encoding these embeddings with a standard Transformer encoder utilizing the inverted structure, and 4) generating final predictions via a linear projection layer.

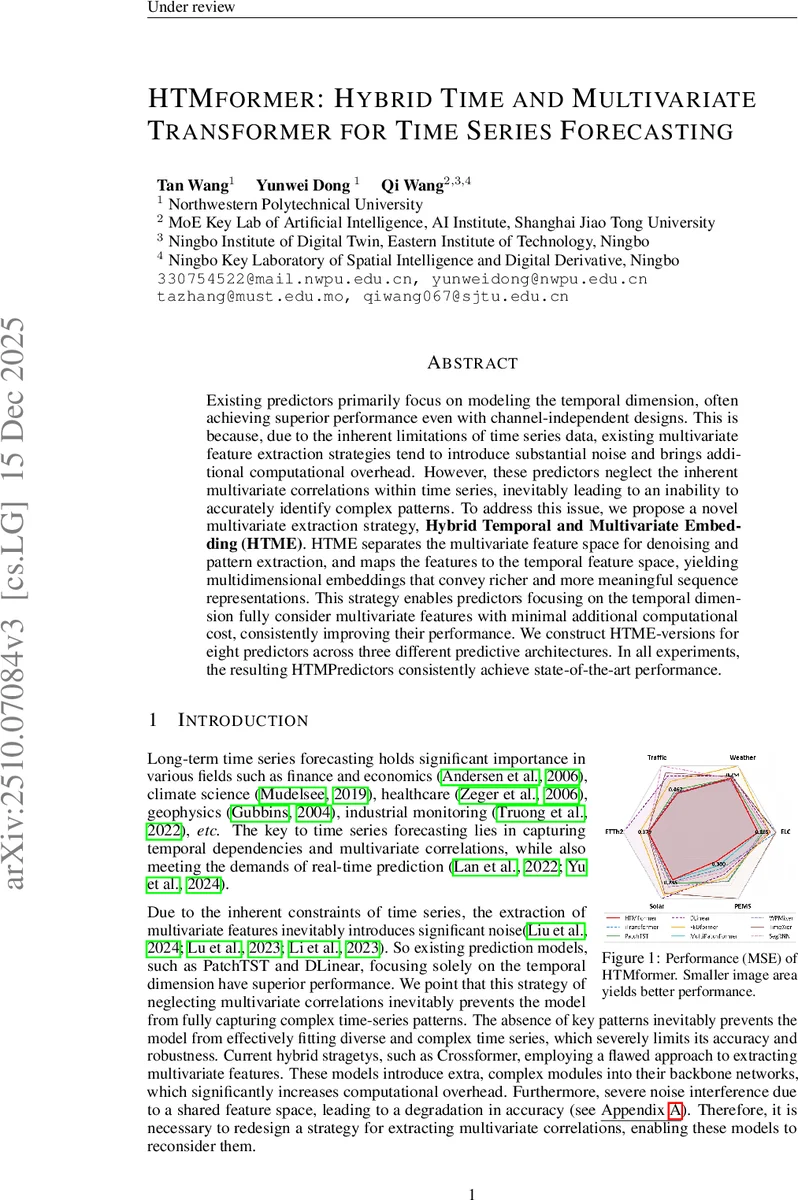

Extensive experiments validate the effectiveness and generalizability of the proposed approach. The HTME strategy was applied to eight different forecasting backbones (including Transformer, Informer, DLinear, NLinear) across three real-world datasets (ECL, Weather, Traffic). Results show consistent performance improvements, with an average reduction in Mean Squared Error (MSE) ranging from 35.8% for Transformers to 1.7% for simpler linear models, demonstrating that HTME provides the most significant boost to architectures that originally struggled with multivariate information. Furthermore, the standalone HTMformer model achieves state-of-the-art performance on multiple benchmarks, outperforming existing baselines in both accuracy and efficiency. Complexity analysis confirms that the HTME module itself adds only linear overhead, preserving the model’s scalability.

In summary, this work introduces a powerful and lightweight embedding enhancement strategy (HTME) that enables diverse forecasting models to effectively leverage multivariate correlations without structural complexity or noise interference. The resulting HTMformer framework sets a new benchmark for accurate and efficient multivariate time series forecasting.

Comments & Academic Discussion

Loading comments...

Leave a Comment