Efficiently Seeking Flat Minima for Better Generalization in Fine-Tuning Large Language Models and Beyond

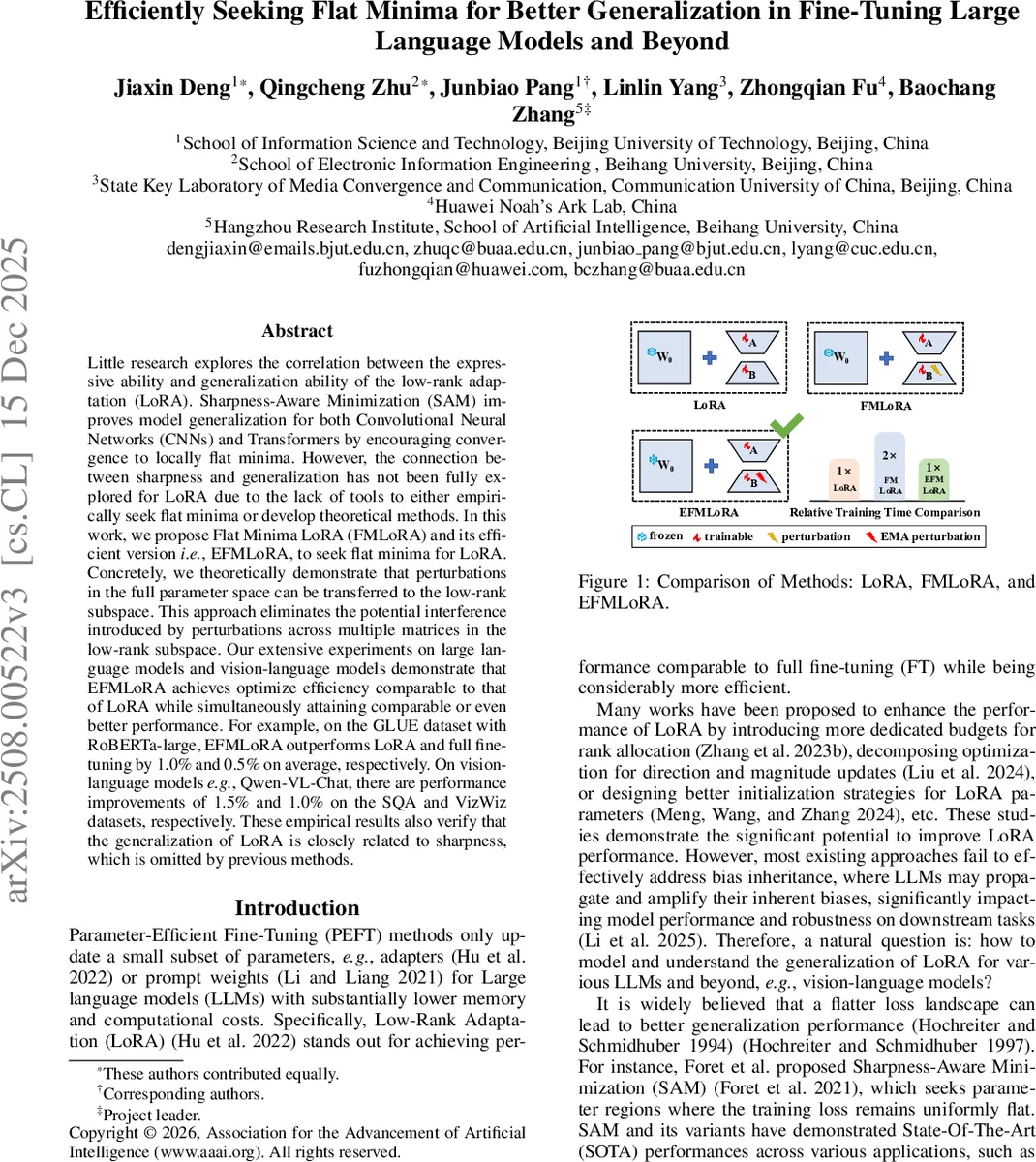

Little research explores the correlation between the expressive ability and generalization ability of the low-rank adaptation (LoRA). Sharpness-Aware Minimization (SAM) improves model generalization for both Convolutional Neural Networks (CNNs) and Transformers by encouraging convergence to locally flat minima. However, the connection between sharpness and generalization has not been fully explored for LoRA due to the lack of tools to either empirically seek flat minima or develop theoretical methods. In this work, we propose Flat Minima LoRA (FMLoRA) and its efficient version, i.e., EFMLoRA, to seek flat minima for LoRA. Concretely, we theoretically demonstrate that perturbations in the full parameter space can be transferred to the low-rank subspace. This approach eliminates the potential interference introduced by perturbations across multiple matrices in the low-rank subspace. Our extensive experiments on large language models and vision-language models demonstrate that EFMLoRA achieves optimize efficiency comparable to that of LoRA while simultaneously attaining comparable or even better performance. For example, on the GLUE dataset with RoBERTa-large, EFMLoRA outperforms LoRA and full fine-tuning by 1.0% and 0.5% on average, respectively. On vision-language models, e.g., Qwen-VL-Chat, there are performance improvements of 1.5% and 1.0% on the SQA and VizWiz datasets, respectively. These empirical results also verify that the generalization of LoRA is closely related to sharpness, which is omitted by previous methods.

💡 Research Summary

This paper presents a novel approach to improving the generalization performance of Low-Rank Adaptation (LoRA), a widely-used Parameter-Efficient Fine-Tuning (PEFT) method for large language and vision-language models. The core premise is that the generalization ability of LoRA is closely linked to the sharpness (flatness) of the loss landscape at the optimized parameters, a relationship previously underexplored in PEFT literature.

The authors identify two key challenges in directly applying Sharpness-Aware Minimization (SAM)—a successful sharpness-reduction technique—to LoRA. First, applying independent perturbations to LoRA’s two low-rank matrices (A and B) may not align with the maximum loss perturbation in the full parameter space, leading to suboptimal flatness seeking. Second, SAM inherently requires double the computational cost (two gradient computations per step), undermining LoRA’s efficiency advantage.

To address these issues, the paper introduces FMLoRA (Flat Minima LoRA). Its theoretical foundation is the reparameterization of the SAM objective. The authors prove that the perturbation defined in the full weight matrix (W) space can be equivalently transferred to a perturbation within just one of the low-rank subspaces—specifically, the B matrix. They derive formulas to approximate the unknown gradient of the full weights (∇L_W) using the computable gradients of the LoRA matrices (∇L_A, ∇L_B) and the pseudo-inverses of A and B (Eq. 12). This allows for calculating the optimal full-space perturbation and then transforming it precisely into a single perturbation for matrix B (Eq. 15), eliminating interference between perturbations on A and B.

While FMLoRA solves the alignment problem, it retains SAM’s double computational cost. Therefore, the authors propose EFMLoRA (Efficient FMLoRA), which incorporates an Exponential Moving Average (EMA) strategy. EFMLoRA reuses perturbation information from the previous optimization step via EMA, enabling effective sharpness minimization with only one gradient computation per update. This makes its training time virtually identical to standard LoRA (Figure 1).

Comprehensive experiments validate the proposed methods across diverse models and tasks. For natural language understanding, using RoBERTa-large and GPT-2 on the GLUE benchmark and other datasets, EFMLoRA consistently matches or surpasses the performance of both standard LoRA and full fine-tuning. On average across GLUE tasks, EFMLoRA outperforms LoRA by 1.0% and full fine-tuning by 0.5%. For vision-language tasks using models like CLIP and Qwen-VL-Chat, EFMLoRA achieves performance improvements of 1.5% on ScienceQA (SQA) and 1.0% on VizWiz, respectively, compared to baseline LoRA.

The empirical success of EFMLoRA provides strong evidence that the generalization capability of LoRA is indeed correlated with the sharpness of its solution, a factor overlooked by prior PEFT methods. In summary, this work makes significant contributions by: 1) establishing a theoretical link between full-space sharpness and low-rank subspace optimization for LoRA, 2) proposing FMLoRA to correctly seek flat minima within the LoRA framework, and 3) designing EFMLoRA, an efficient variant that maintains LoRA’s training efficiency while delivering superior generalization performance on a wide range of tasks.

Comments & Academic Discussion

Loading comments...

Leave a Comment