In-context Learning of Evolving Data Streams with Tabular Foundational Models

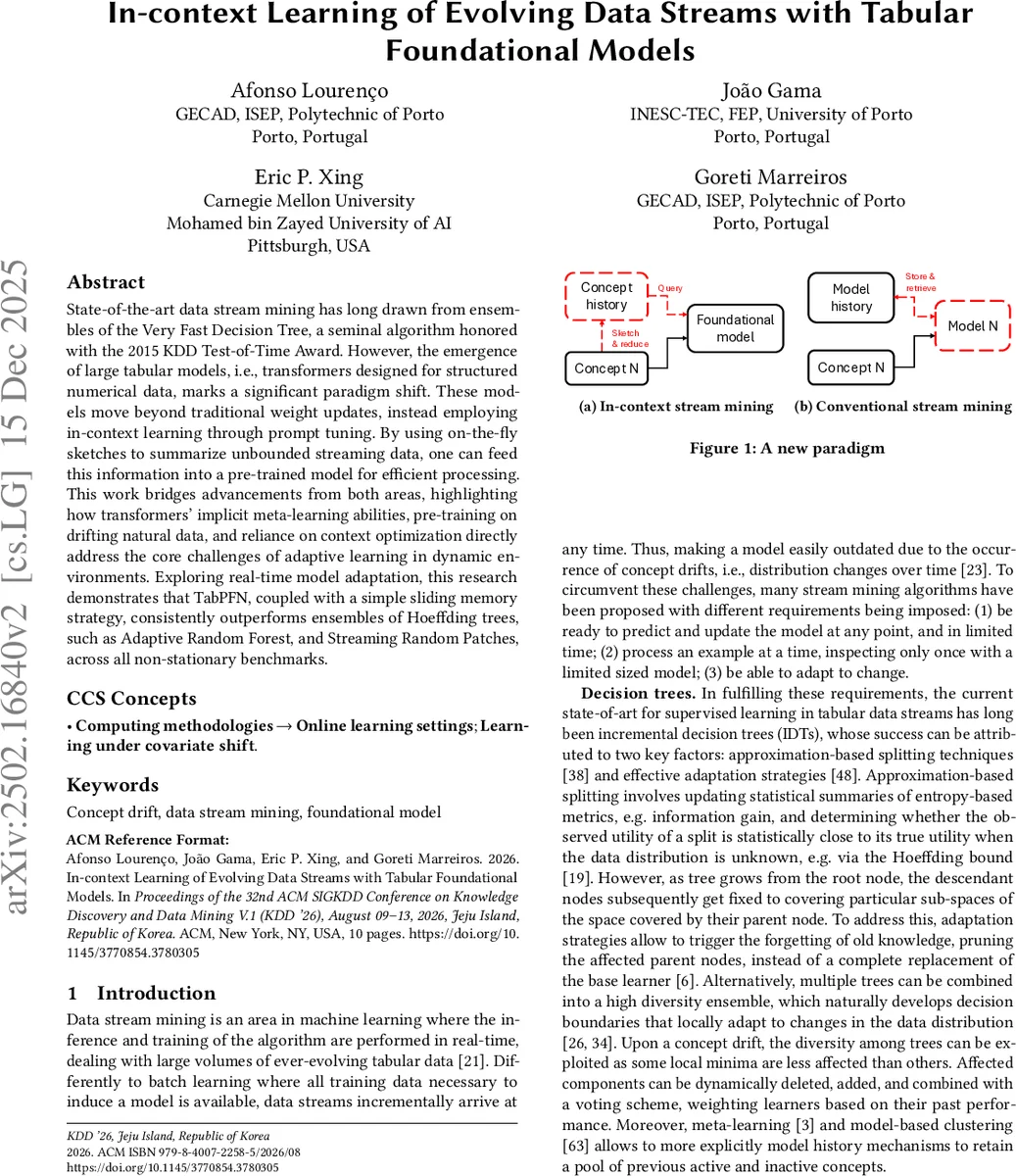

State-of-the-art data stream mining has long drawn from ensembles of the Very Fast Decision Tree, a seminal algorithm honored with the 2015 KDD Test-of-Time Award. However, the emergence of large tabular models, i.e., transformers designed for structured numerical data, marks a significant paradigm shift. These models move beyond traditional weight updates, instead employing in-context learning through prompt tuning. By using on-the-fly sketches to summarize unbounded streaming data, one can feed this information into a pre-trained model for efficient processing. This work bridges advancements from both areas, highlighting how transformers’ implicit meta-learning abilities, pre-training on drifting natural data, and reliance on context optimization directly address the core challenges of adaptive learning in dynamic environments. Exploring real-time model adaptation, this research demonstrates that TabPFN, coupled with a simple sliding memory strategy, consistently outperforms ensembles of Hoeffding trees, such as Adaptive Random Forest, and Streaming Random Patches, across all non-stationary benchmarks.

💡 Research Summary

The paper introduces a novel paradigm for data‑stream mining that replaces traditional weight‑updating models (e.g., Hoeffding trees and their ensembles such as Adaptive Random Forest or Streaming Random Patches) with a parameter‑free, in‑context learning approach based on a large tabular foundation model, TabPFN. TabPFN is a 12‑layer decoder‑only transformer pre‑trained on 18,000 synthetic tabular datasets, each containing 512 samples generated by random neural networks. This pre‑training endows the model with rich meta‑features, causal relationships, and an implicit ability to perform few‑shot learning through in‑context examples.

To make TabPFN usable on an unbounded stream, the authors propose an on‑the‑fly sketching mechanism that continuously summarizes incoming instances. The sketch consists of two FIFO buffers: a short‑term buffer that holds the most recent examples (capturing abrupt drifts) and a long‑term buffer that retains a balanced, class‑wise sample set (preserving recurring concepts and overall distribution). The total sketch size is fixed (e.g., 128–256 samples), ensuring that memory and computational costs remain constant regardless of stream length.

During inference, each example in the sketch is linearly embedded into a token of dimension dtoken, concatenated with its label token, and fed to TabPFN as a prompt. Because TabPFN’s parameters are frozen, the model does not undergo any gradient updates; instead, it implicitly constructs a predictor from the provided context, a phenomenon the authors connect to mesa‑optimization and emergent meta‑learning in transformers. The paper argues that the distributional properties of TabPFN’s pre‑training data (temporally bursty, context‑dependent concepts, and highly skewed class frequencies) align closely with the characteristics of real‑world data streams, allowing the model to adapt “for free” at inference time.

Empirical evaluation spans seven widely used stream benchmarks (SEA, Rotating Hyperplane, LED, Hyperplane, Random RBF, etc.) and four drift types (gradual, abrupt, recurring, mixed). Across all settings, TabPFN combined with the dual‑memory sketch consistently outperforms Adaptive Random Forest and Streaming Random Patches, achieving 3–7 % higher classification accuracy. Notably, performance remains robust even when the sketch is limited to as few as 64 examples, and latency stays within 10–20 ms per prediction, satisfying real‑time constraints. The authors also provide a bias‑variance analysis showing that increasing the number of in‑context examples reduces variance, while the dual‑buffer design mitigates context‑induced bias caused by concept drift.

The contributions are threefold: (1) a formal definition of an in‑context learning paradigm for tabular streams, highlighting how pre‑training distribution and architectural biases address adaptive learning challenges; (2) an extension of TabPFN with an inference‑time sketching mechanism that preserves predictive information without altering model weights; and (3) a theoretical perspective linking the observed capabilities to mesa‑optimization and meta‑learning, offering a new lens on why transformers can act as “learners‑within‑learners.”

In conclusion, the work demonstrates that large tabular foundation models, when paired with lightweight, streaming‑compatible sketches, can replace traditional incremental learners, delivering superior accuracy, lower computational overhead, and true parameter‑free adaptation. This opens avenues for future research on larger LTM families, multimodal streams, and online meta‑learning strategies that further exploit the emergent abilities of foundation models in non‑stationary environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment