📝 Original Info

- Title: Towards Unified Co-Speech Gesture Generation via Hierarchical Implicit Periodicity Learning

- ArXiv ID: 2512.13131

- Date: 2025-12-15

- Authors: Researchers from original ArXiv paper

📝 Abstract

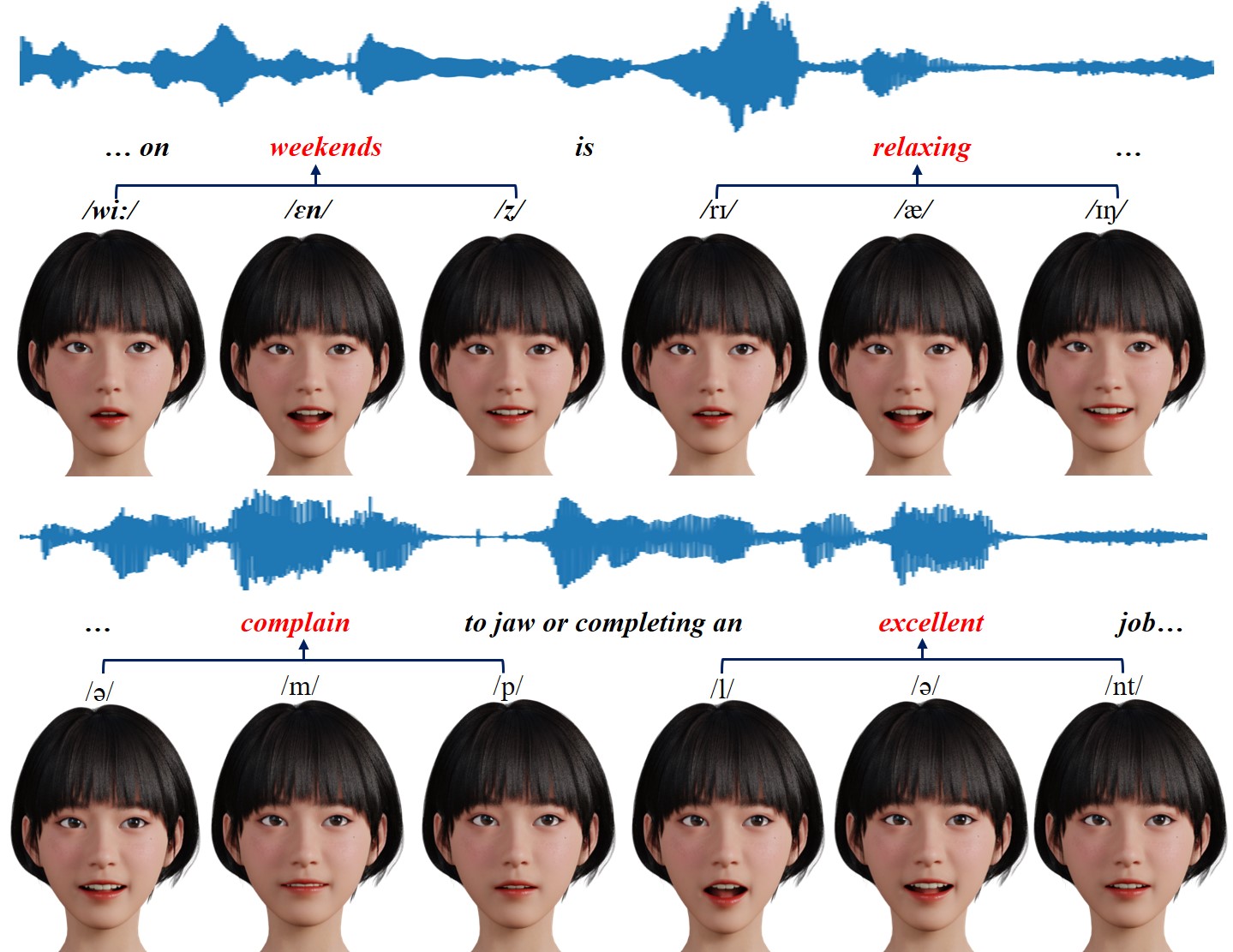

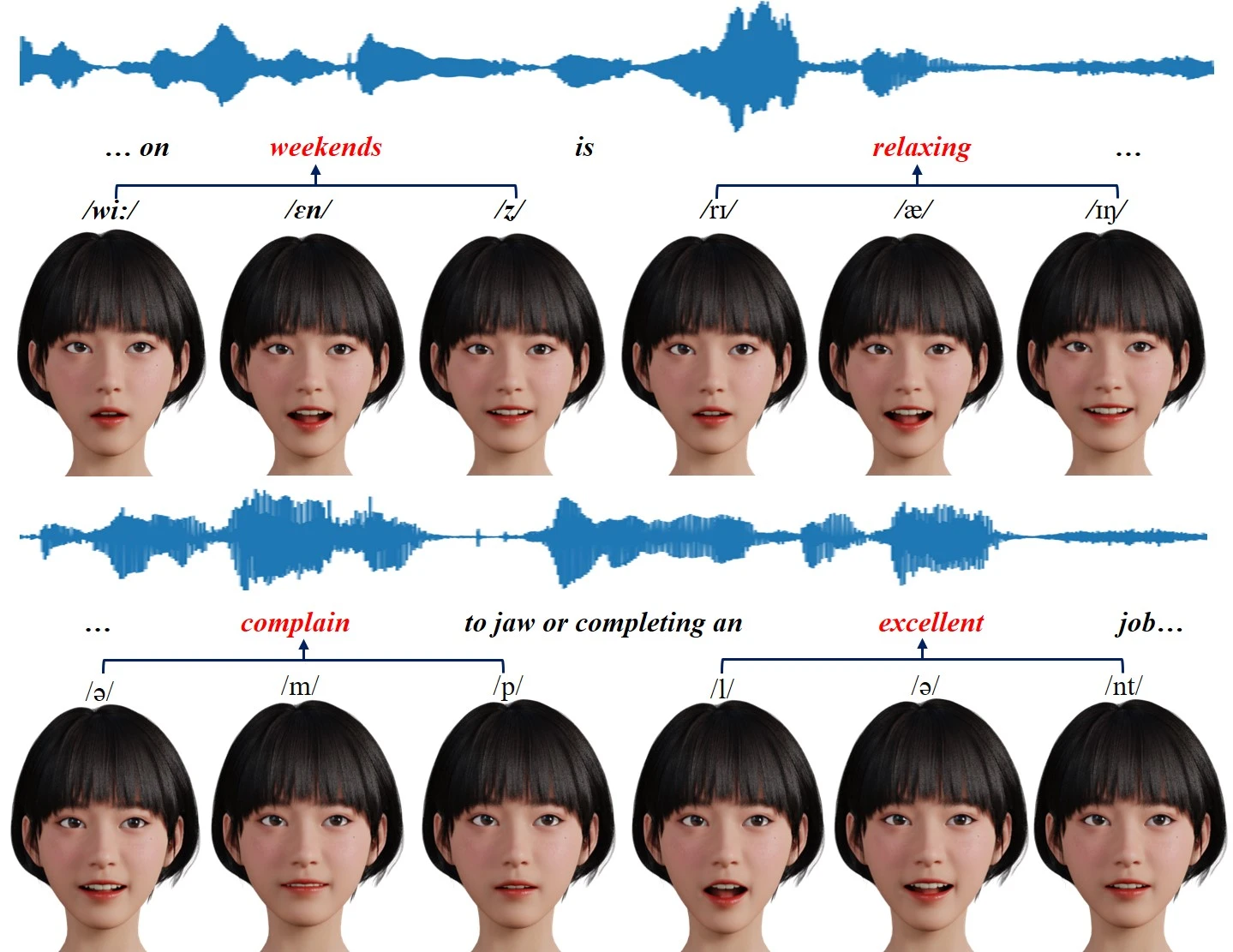

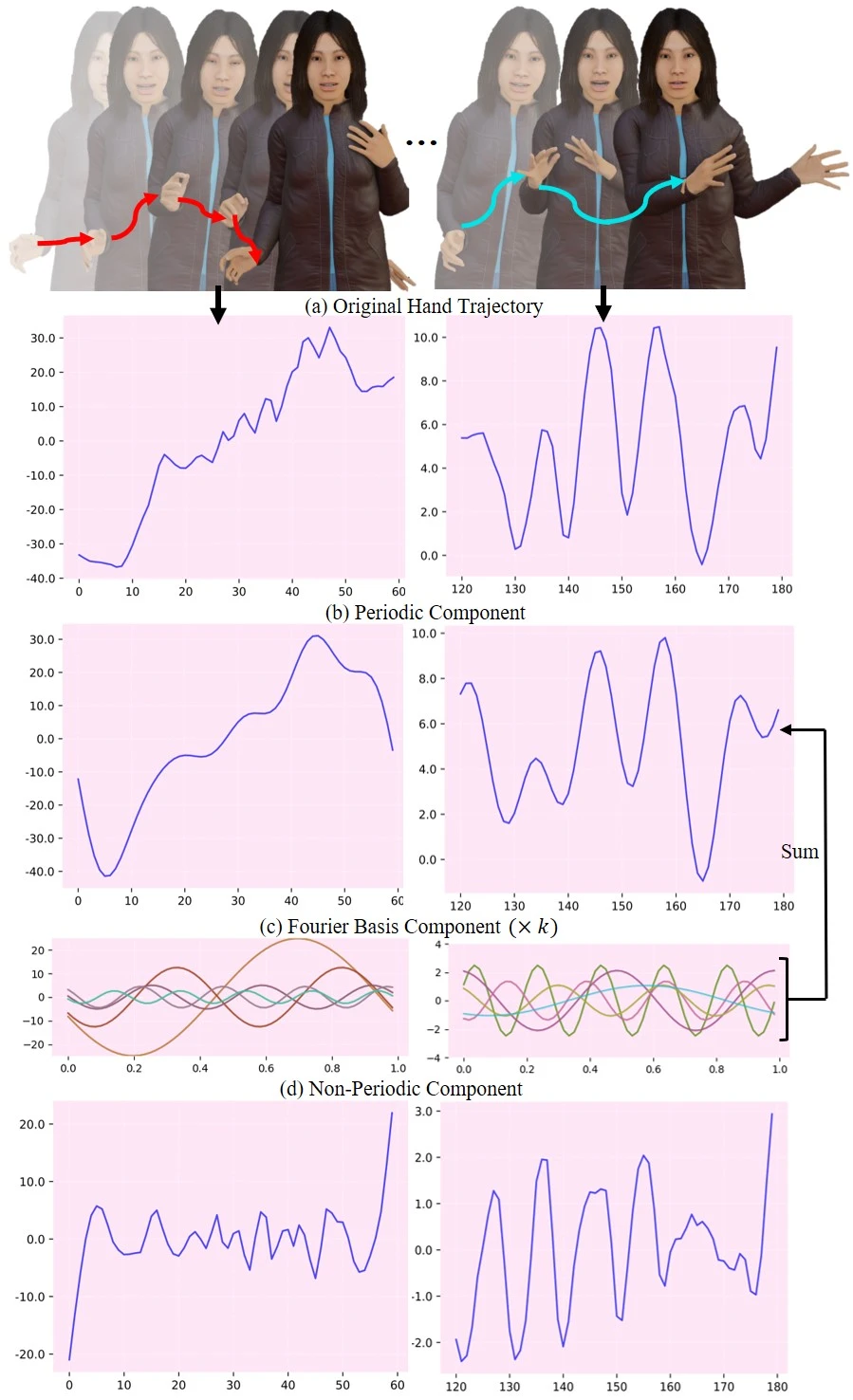

Generating 3D-based body movements from speech shows great potential in extensive downstream applications, while it still suffers challenges in imitating realistic human movements. Predominant research efforts focus on end-to-end generation schemes to generate co-speech gestures, spanning GANs, VQ-VAE, and recent diffusion models. As an ill-posed problem, in this paper, we argue that these prevailing learning schemes fail to model crucial inter-and intra-correlations across different motion units, i.e.head, body, and hands, thus leading to unnatural movements and poor coordination. To delve into these intrinsic correlations, we propose a unified Hierarchical Implicit Periodicity (HIP) learning approach for audio-inspired 3D gesture generation. Different from predominant research, our approach models this multi-modal implicit relationship by two explicit technique insights: i) To disentangle the complicated gesture movements, we first explore the gesture motion phase manifolds with periodic autoencoders to imitate human natures from realistic distributions while incorporating non-period ones from current latent states for instance-level diversities. ii) To model the hierarchical relationship of face motions, body gestures, and hand movements, driving the animation with cascaded guidance during learning. We exhibit our proposed approach on 3D avatars and extensive experiments show our method outperforms the state-of-the-art co-speech gesture generation methods by both quantitative and qualitative evaluations. Code and models will be publicly available.

💡 Deep Analysis

Deep Dive into Towards Unified Co-Speech Gesture Generation via Hierarchical Implicit Periodicity Learning.

Generating 3D-based body movements from speech shows great potential in extensive downstream applications, while it still suffers challenges in imitating realistic human movements. Predominant research efforts focus on end-to-end generation schemes to generate co-speech gestures, spanning GANs, VQ-VAE, and recent diffusion models. As an ill-posed problem, in this paper, we argue that these prevailing learning schemes fail to model crucial inter-and intra-correlations across different motion units, i.e.head, body, and hands, thus leading to unnatural movements and poor coordination. To delve into these intrinsic correlations, we propose a unified Hierarchical Implicit Periodicity (HIP) learning approach for audio-inspired 3D gesture generation. Different from predominant research, our approach models this multi-modal implicit relationship by two explicit technique insights: i) To disentangle the complicated gesture movements, we first explore the gesture motion phase manifolds with peri

📄 Full Content

1

Towards Unified Co-Speech Gesture Generation via

Hierarchical Implicit Periodicity Learning

Xin Guo, Yifan Zhao, Member, IEEE, Jia Li, Senior Member, IEEE

Abstract—Generating 3D-based body movements from speech

shows great potential in extensive downstream applications, while

it still suffers challenges in imitating realistic human movements.

Predominant research efforts focus on end-to-end generation

schemes to generate co-speech gestures, spanning GANs, VQ-

VAE, and recent diffusion models. As an ill-posed problem, in

this paper, we argue that these prevailing learning schemes fail

to model crucial inter- and intra-correlations across different

motion units, i.e.head, body, and hands, thus leading to unnatural

movements and poor coordination. To delve into these intrinsic

correlations, we propose a unified Hierarchical Implicit Periodicity

(HIP) learning approach for audio-inspired 3D gesture generation.

Different from predominant research, our approach models

this multi-modal implicit relationship by two explicit technique

insights: i) To disentangle the complicated gesture movements,

we first explore the gesture motion phase manifolds with periodic

autoencoders to imitate human natures from realistic distributions

while incorporating non-period ones from current latent states for

instance-level diversities. ii) To model the hierarchical relationship

of face motions, body gestures, and hand movements, driving the

animation with cascaded guidance during learning. We exhibit

our proposed approach on 3D avatars and extensive experiments

show our method outperforms the state-of-the-art co-speech

gesture generation methods by both quantitative and qualitative

evaluations. Code and models will be publicly available.

Index Terms—3D-based body movements, Hierarchical implicit

periodicity, Phase manifolds, Multi-modal implicit relationship

I. INTRODUCTION

When a person attempts to articulate his thoughts, two

distinct modalities are employed: speech and physical gestures.

Verbal communication serves as the principal means for

expressing one’s ideas, while gestures offer a complementary

way to concretize content and emotional expressions, thereby

enhancing the comprehensibility of the conveyed message

to others. For instance, during greetings or interpersonal

interactions, people employ a repertoire of gestures alongside

their verbal communication, with these gestures indirectly

revealing their emotional disposition towards the interlocutor.

Facial expressions and body gestures also exhibit a degree of

coordination, such as the discernible divergence in gestures

when individuals experience varying degrees of happiness.

Consequently, the exploration of speech-driven human body

gesture generation has emerged as a promising research avenue.

Co-speech gesture generation, an ill-posed one-to-many

mapping problem, requires modeling both intra-unit (within

face, body, hands) and inter-unit correlations for coherent

X. Guo, Y. Zhao, and J. Li are with the State Key Laboratory of Virtual

Reality Technology and Systems, School of Computer Science and Engineering

&Qingdao Research Institute, Beihang University, Beijing, 100191, China.

Y.

Zhao

and

J.

Li

are

the

corresponding

authors.

(E-mail:

zhaoyf@buaa.edu.cn, jiali@buaa.edu.cn).

gesture production. The methods include: retrieval-based [1],

[2], [3], [4] which decomposes gestures into action units,

extracts conditional features, and retrieves similar motions from

databases, achieving high controllability but limited to database

content. End-to-end models [5], [6], [7], [8] use architectures

like RNNs and Transformers to directly map audio to gestures,

supporting complex cross-modal mappings and generating

diverse gestures. Two-stage methods [9], [10] map audio to

intermediate latent codes before decoding them into motion

sequences. Although they enhance gesture diversity, both end-

to-end and two-stage approaches often treat body movements

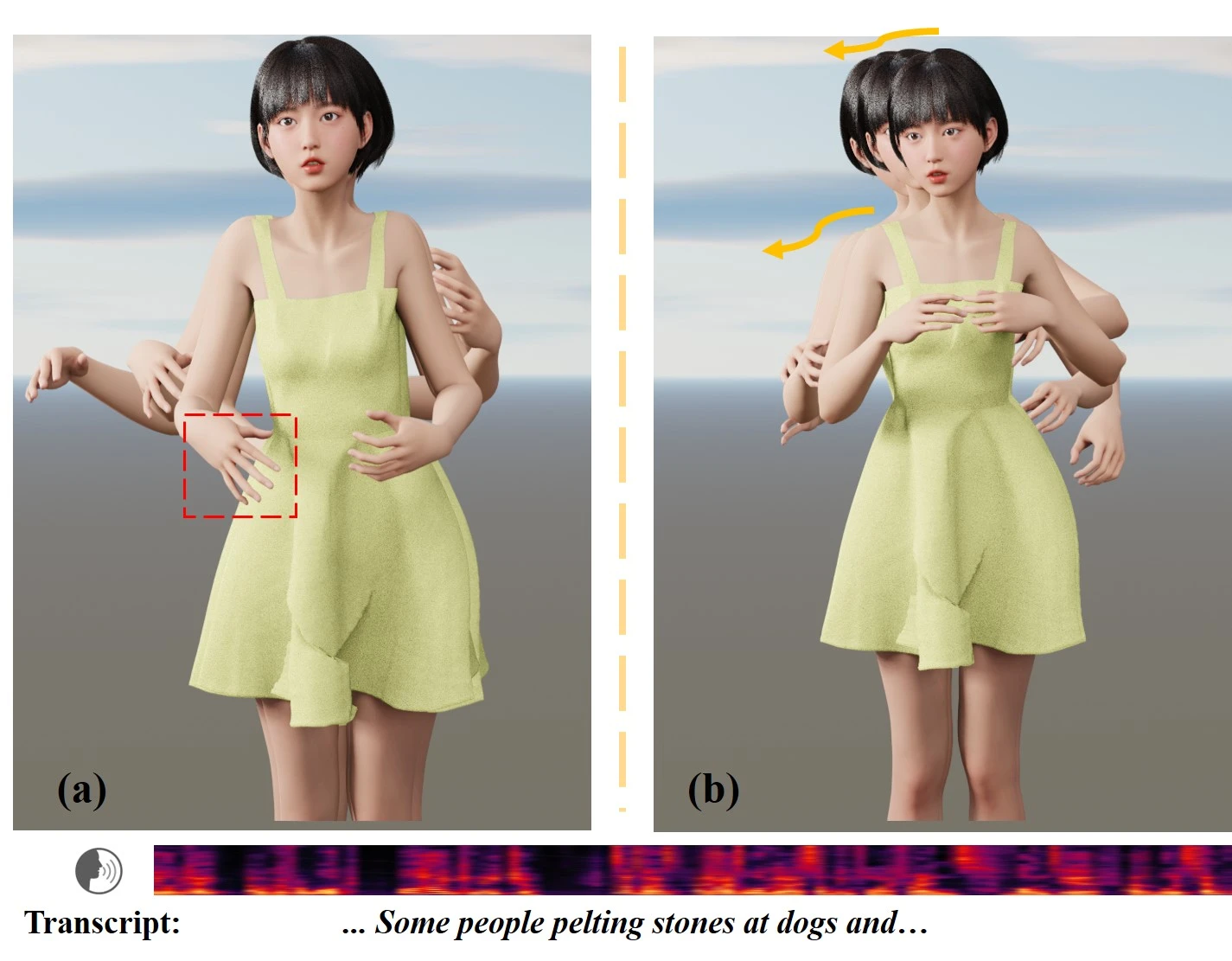

as a single aggregated signal, overlooking structured inter

and intra-correlations, leading to unstable and semantically

misaligned gesture generation. Hierarchical methods [10],

[11], [12], [13], [14] attempt to model different body parts

separately. However, they still lack a clear correlation hierarchy:

Habibie [11] and TALKSHOW [10] independently generate

facial and body motions without cross-part coordination;

EMAGE [12] predicts masked body parts simultaneously to

allow mutual influence but lacks a dependency order; DiffSHEG

[13] first generates facial motions and then predicts the entire

body as a single signal, ignoring structured relationships within

the body itself; and HA2G [14] generates body parts in a

stepwise manner but omits facial information entirely.

While existing methods have advanced co-speech gesture

generation, they often neglect the dependencies among different

body parts. Human motions follow certain morphological

and physical rules, and the movement of one human part

often influences the motion of other parts.

…(Full text truncated)…

📸 Image Gallery

Reference

This content is AI-processed based on ArXiv data.