Dexterous manipulation is challenging because it requires understanding how subtle hand motion influences the environment through contact with objects. We introduce DexWM, a Dexterous Manipulation World Model that predicts the next latent state of the environment conditioned on past states and dexterous actions. To overcome the scarcity of dexterous manipulation datasets, DexWM is trained on over 900 hours of human and non-dexterous robot videos. To enable fine-grained dexterity, we find that predicting visual features alone is insufficient; therefore, we introduce an auxiliary hand consistency loss that enforces accurate hand configurations. DexWM outperforms prior world models conditioned on text, navigation, and full-body actions, achieving more accurate predictions of future states. DexWM also demonstrates strong zero-shot generalization to unseen manipulation skills when deployed on a Franka Panda arm equipped with an Allegro gripper, outperforming Diffusion Policy by over 50% on average in grasping, placing, and reaching tasks.

Deep Dive into World Models Can Leverage Human Videos for Dexterous Manipulation.

Dexterous manipulation is challenging because it requires understanding how subtle hand motion influences the environment through contact with objects. We introduce DexWM, a Dexterous Manipulation World Model that predicts the next latent state of the environment conditioned on past states and dexterous actions. To overcome the scarcity of dexterous manipulation datasets, DexWM is trained on over 900 hours of human and non-dexterous robot videos. To enable fine-grained dexterity, we find that predicting visual features alone is insufficient; therefore, we introduce an auxiliary hand consistency loss that enforces accurate hand configurations. DexWM outperforms prior world models conditioned on text, navigation, and full-body actions, achieving more accurate predictions of future states. DexWM also demonstrates strong zero-shot generalization to unseen manipulation skills when deployed on a Franka Panda arm equipped with an Allegro gripper, outperforming Diffusion Policy by over 50% on

World Models Can Leverage Human Videos for

Dexterous Manipulation

Raktim Gautam Goswami1,2,∗, Amir Bar1, David Fan1, Tsung-Yen Yang1, Gaoyue Zhou1,2, Prashanth

Krishnamurthy2, Michael Rabbat1, Farshad Khorrami2, Yann LeCun1,2

1FAIR at Meta, 2New York University

∗Work done during internship at Meta

Dexterous manipulation is challenging because it requires understanding how subtle hand motion

influences the environment through contact with objects.

We introduce DexWM, a Dexterous

Manipulation World Model that predicts the next latent state of the environment conditioned on past

states and dexterous actions. To overcome the scarcity of dexterous manipulation datasets, DexWM is

trained on over 900 hours of human and non-dexterous robot videos. To enable fine-grained dexterity,

we find that predicting visual features alone is insufficient; therefore, we introduce an auxiliary hand

consistency loss that enforces accurate hand configurations. DexWM outperforms prior world models

conditioned on text, navigation, and full-body actions, achieving more accurate predictions of future

states. DexWM also demonstrates strong zero-shot generalization to unseen manipulation skills when

deployed on a Franka Panda arm equipped with an Allegro gripper, outperforming Diffusion Policy by

over 50% on average in grasping, placing, and reaching tasks.

Project Page: https://raktimgg.github.io/dexwm/

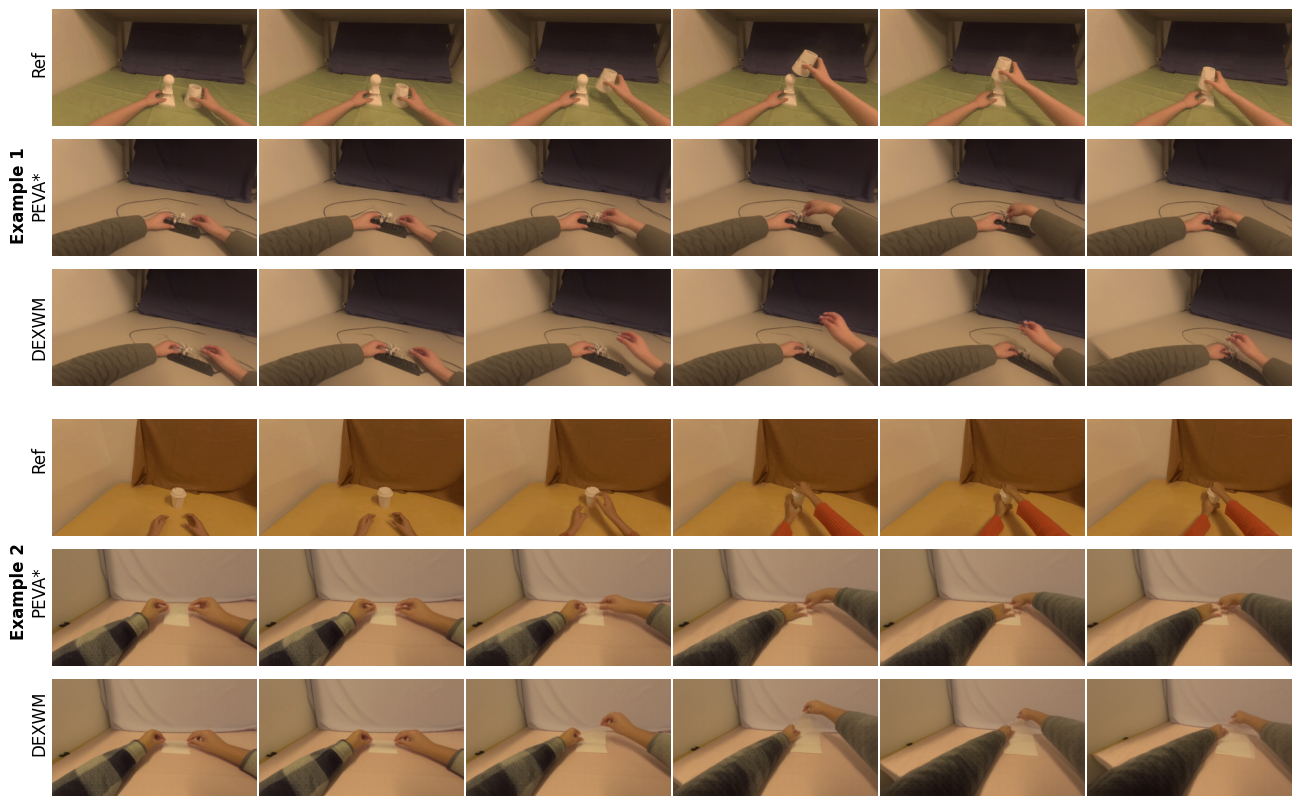

Figure 1 We introduce DexWM, a Dexterous Manipulation World Model which predicts future latent states of the

environment based on past states and dexterous actions. Trained on large-scale human and non-dexterous robot video

data, DexWM learns to simulate complex manipulation trajectories in the latent space. With minimal fine-tuning on a

small exploratory robot simulation dataset, DexWM enables robust planning for novel reaching, grasping, and placing

tasks in simulation, and achieves zero-shot transfer to real-world robot tasks.

1

arXiv:2512.13644v1 [cs.RO] 15 Dec 2025

1

Introduction

As embodied agents become increasingly integrated

into daily lives, dexterous manipulation emerges as

a critical capability for achieving human-like inter-

action with the physical world.

Everyday tasks

like cooking, as well as high-stakes applications like

surgery, demand a level of dexterity that is infeasible

with commonly used parallel-jaw grippers. Dexterous

grippers are modeled after the human hand and can

unlock advanced human skills, including handling

complex tools, performing fine-grained movements,

and executing in-hand manipulation [39, 63, 69, 93].

Recent advances in deep learning have enabled the

development of computer vision based policies for

robotic manipulation [22, 23, 37, 56, 59]. However,

these approaches face challenges in generalizing to

unseen tasks and in planning and executing policies

in physical environments [15, 32, 101]. Successful

execution requires models to reason about how their

actions affect objects and their surroundings; for

example, recognizing that opening the gripper when

holding an object will cause it to drop.

World models can learn environmental dynamics

from observation and action [58], and thus offer a

promising solution.

Early work on learned world

models [48, 74, 102] has primarily focused on small-

scale tasks with constrained environments and lim-

ited action spaces. More recent efforts have extended

these approaches to handle complex actions, such as

text [2], navigation [10] and whole-body motion [8].

However, the action spaces in these methods are often

too coarse to capture the fine-grained information

required for precise dexterous control.

Moreover,

building world models for dexterous manipulation is

challenging as there are no large-scale robotic datasets

with dexterous grippers.

To address these challenges, we propose DexWM

(Figure 1), a latent space world model that learns

from human data to predict future latent states based

on past states and dexterous hand actions. Inspired

by recent work [29, 80] that leverages human train-

ing data, we pre-train DexWM on EgoDex [47], a

large-scale egocentric human interaction dataset, and

further incorporate DROID [54] sequences, consisting

of non-dexterous robot manipulation, to reduce the

embodiment gap. DexWM’s actions are represented

as differences in 3D hand keypoints and camera poses,

capturing detailed hand configurations and enabling

the model to learn how hand posture changes affect

the environment.

We find that accurately simulating hand locations

using the next latent state prediction objective alone

is difficult. Therefore, we train DexWM to jointly

optimize both the future environment state and the

hand configuration, providing a richer learning signal

for dexterity. With this auxiliary hand consistency

loss, DexWM outperforms existing world models [2,

8, 10] in open-loop trajectory simulation.

Furthermore, DexWM enables strong zero-shot trans-

fer to dexterous robot manipulation tasks by opti-

mizing actions at test time within an MPC frame-

wo

…(Full text truncated)…

This content is AI-processed based on ArXiv data.