Generative Spatiotemporal Data Augmentation

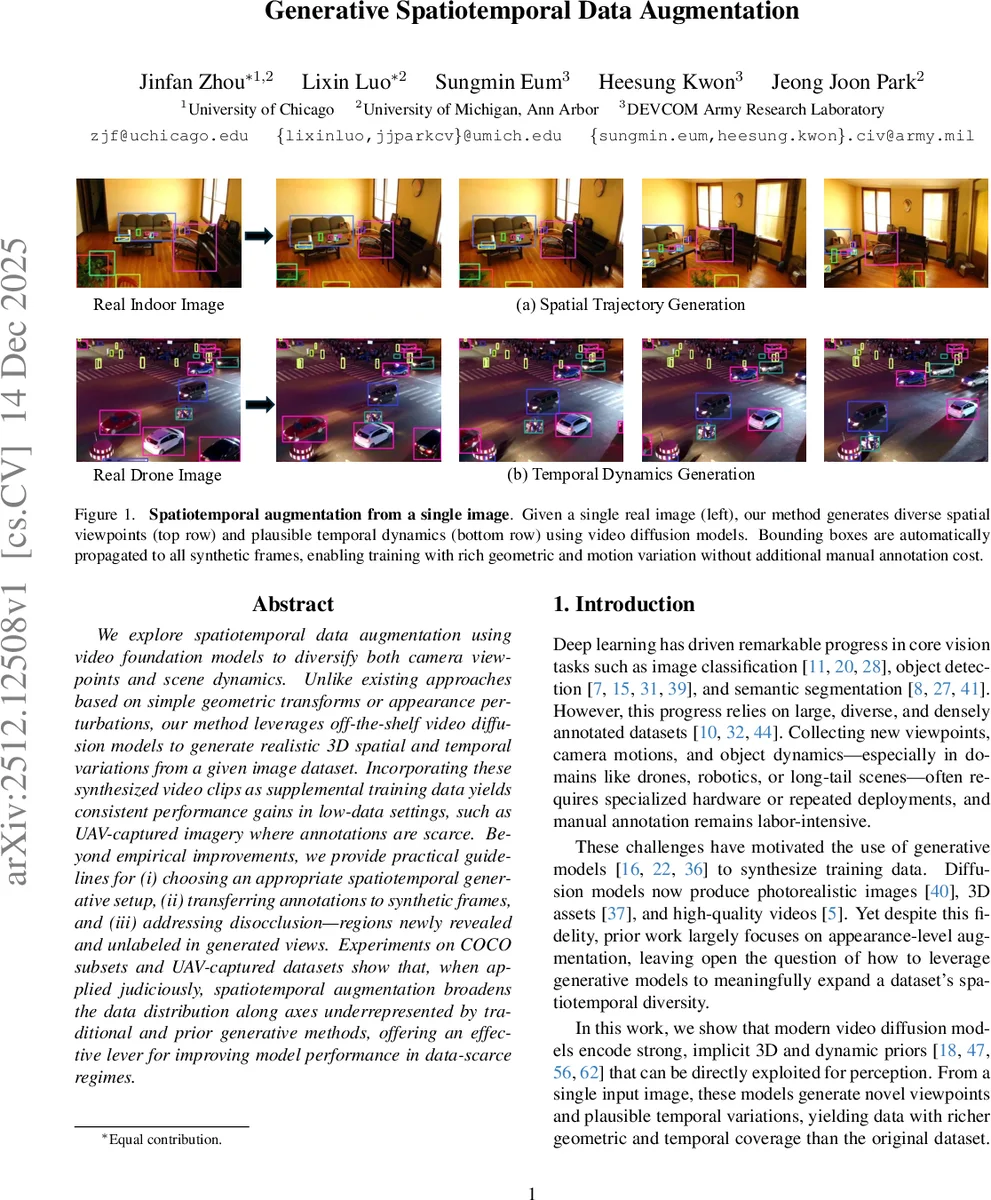

We explore spatiotemporal data augmentation using video foundation models to diversify both camera viewpoints and scene dynamics. Unlike existing approaches based on simple geometric transforms or appearance perturbations, our method leverages off-the-shelf video diffusion models to generate realistic 3D spatial and temporal variations from a given image dataset. Incorporating these synthesized video clips as supplemental training data yields consistent performance gains in low-data settings, such as UAV-captured imagery where annotations are scarce. Beyond empirical improvements, we provide practical guidelines for (i) choosing an appropriate spatiotemporal generative setup, (ii) transferring annotations to synthetic frames, and (iii) addressing disocclusion - regions newly revealed and unlabeled in generated views. Experiments on COCO subsets and UAV-captured datasets show that, when applied judiciously, spatiotemporal augmentation broadens the data distribution along axes underrepresented by traditional and prior generative methods, offering an effective lever for improving model performance in data-scarce regimes.

💡 Research Summary

The paper introduces a novel data‑augmentation framework that leverages off‑the‑shelf video diffusion models to generate both novel camera viewpoints and plausible temporal dynamics from a single static image. Traditional augmentation techniques (flips, crops, color jitter) only perturb appearance locally and cannot emulate the geometric and motion variations encountered in real‑world scenarios such as aerial imaging. By feeding an original image I₀ together with either a prescribed camera trajectory C (a sequence of SE(3) poses) or a temporal control signal τ into a pretrained image‑to‑video diffusion model G, the authors synthesize a short video clip {Iₜ}ₜ=1ᵀ that depicts the same scene from multiple viewpoints or with dynamic changes. From this video, a subset of frames is selected (typically 8–10 per original image) to form the synthetic dataset D_new, which is then merged with the original data D_orig to obtain the augmented set D_aug.

A critical challenge is providing bounding‑box annotations for every generated frame without manual effort. The authors solve this by employing a state‑of‑the‑art video segmentation model (SAM 2). Given the original bounding boxes B₀, SAM 2 tracks and segments each object across the generated video, producing binary masks M_{j,t} for object j at frame t. Tight axis‑aligned bounding boxes B_{j,t} are then derived from these masks, yielding fully automatic annotations for all synthetic frames.

The paper conducts a thorough quantitative analysis of how this spatiotemporal augmentation expands the effective coverage of a limited training set. Using a VGG‑16 feature encoder and a k‑nearest‑neighbor recall metric, they show that recall (the fraction of validation samples whose features are covered by the training set) rises sharply as synthetic frames are added, saturating after roughly 30 frames per image. Simultaneously, they monitor distribution shift with the Kernel Inception Distance (KID). A 1:1 ratio of real to synthetic images provides the best trade‑off: coverage improves substantially while KID remains low, preserving realism.

Disocclusion—regions that become visible when the virtual camera moves—poses a potential source of label noise. The authors explore two mitigation strategies: (1) tracking a dense grid of points from the original image to define a validity mask for each frame, effectively masking out newly revealed pixels; (2) a two‑pass pseudo‑labeling scheme where a detector trained without masking first predicts objects in disoccluded areas, and these predictions are then used as additional supervision. Empirically, both methods yield only marginal gains (≤1 mAP), leading to the practical recommendation that disocclusion can be ignored in most settings.

Extensive experiments on three benchmarks—COCO subsets, VisDrone, and Semantic Drone—demonstrate consistent improvements over strong baselines. Compared to appearance‑only generative augmentations such as Instagen and ControlAug, the proposed spatiotemporal method achieves higher image recall (≈99 %) and object recall (≈88 %). In low‑data regimes, it boosts detection performance by +3.8 mAP on a COCO sub‑dataset, +2.8 mAP on VisDrone, and +5.9 mAP on Semantic Drone. Moreover, when combined with traditional augmentations, the gains are additive, confirming that the method explores complementary axes of variation.

The authors also provide practical guidelines for deployment: (i) use a modest camera motion (e.g., ±15° rotation, small translation) and generate 8–10 frames per image; (ii) maintain a 1:1 real‑to‑synthetic image ratio; (iii) apply CLIP‑based filtering to discard low‑quality frames; (iv) ignore disocclusion handling unless maximal performance is required; and (v) always pair the spatiotemporal augmentation with existing appearance‑based techniques for the best results.

In summary, this work demonstrates that video diffusion models, originally designed for high‑fidelity video synthesis, can be repurposed as powerful spatiotemporal data‑augmentation engines. By automatically transferring annotations via video segmentation, the approach eliminates the need for costly manual labeling while substantially enriching the training distribution along under‑represented geometric and temporal dimensions. The method is especially valuable for domains with scarce annotated data, such as UAV‑based object detection, and opens a new avenue for leveraging generative foundations to improve downstream vision tasks.

Comments & Academic Discussion

Loading comments...

Leave a Comment