ATAC: Augmentation-Based Test-Time Adversarial Correction for CLIP

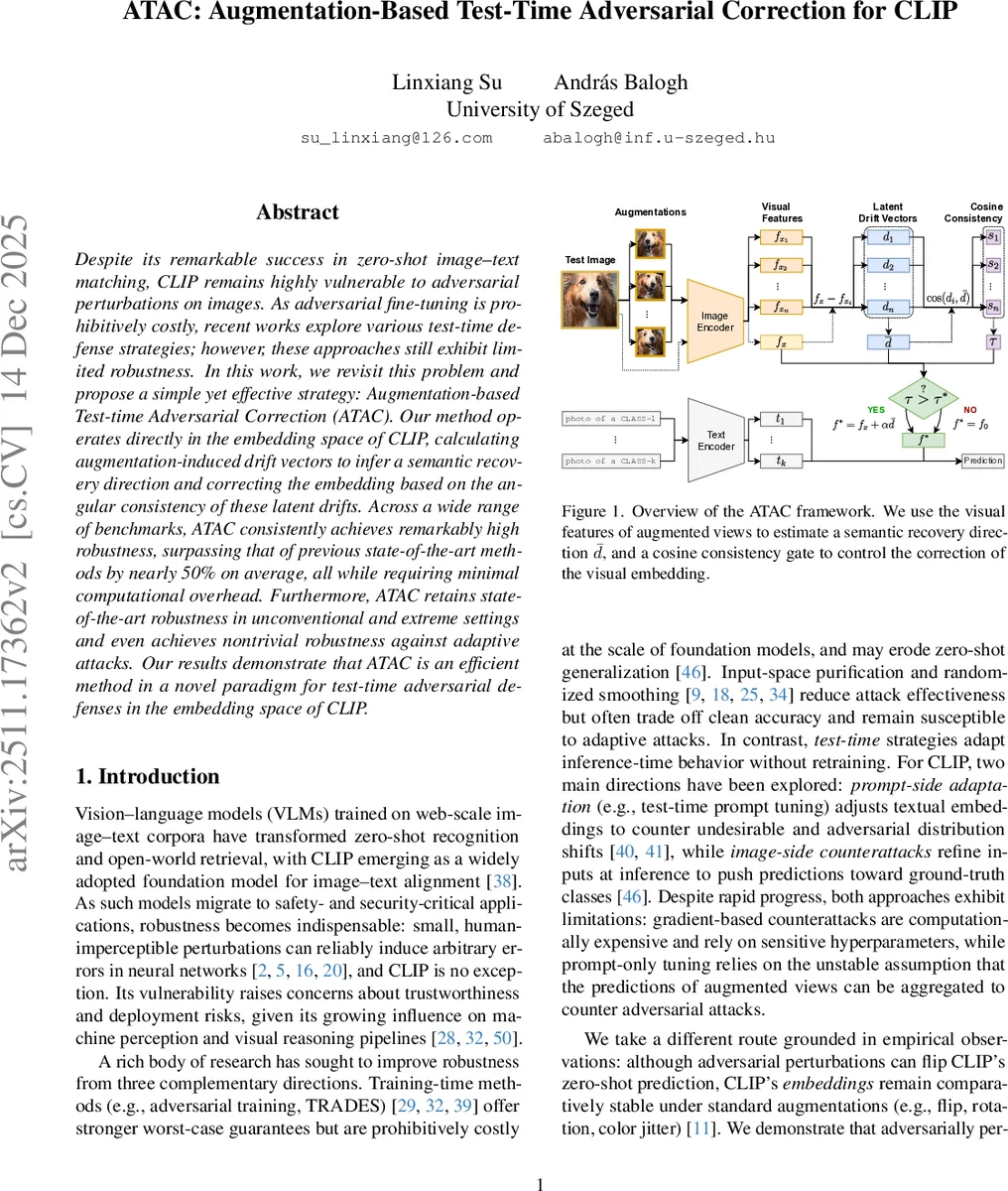

Despite its remarkable success in zero-shot image-text matching, CLIP remains highly vulnerable to adversarial perturbations on images. As adversarial fine-tuning is prohibitively costly, recent works explore various test-time defense strategies; however, these approaches still exhibit limited robustness. In this work, we revisit this problem and propose a simple yet effective strategy: Augmentation-based Test-time Adversarial Correction (ATAC). Our method operates directly in the embedding space of CLIP, calculating augmentation-induced drift vectors to infer a semantic recovery direction and correcting the embedding based on the angular consistency of these latent drifts. Across a wide range of benchmarks, ATAC consistently achieves remarkably high robustness, surpassing that of previous state-of-the-art methods by nearly 50% on average, all while requiring minimal computational overhead. Furthermore, ATAC retains state-of-the-art robustness in unconventional and extreme settings and even achieves nontrivial robustness against adaptive attacks. Our results demonstrate that ATAC is an efficient method in a novel paradigm for test-time adversarial defenses in the embedding space of CLIP.

💡 Research Summary

This paper, titled “ATAC: Augmentation-Based Test-Time Adversarial Correction for CLIP,” addresses a critical vulnerability in Vision-Language Models (VLMs), specifically CLIP. While CLIP demonstrates remarkable zero-shot image-text matching capabilities, it is highly susceptible to adversarial attacks—small, imperceptible perturbations that can cause catastrophic misclassifications. Since adversarial fine-tuning of such large foundation models is prohibitively expensive and may harm zero-shot generalization, the paper focuses on test-time defense strategies. It critiques existing approaches: image-side counterattacks (e.g., TTC) are computationally heavy, and prompt-side adaptations (e.g., R-TPT) rely on potentially unstable assumptions when aggregating predictions from augmented views.

The authors propose a novel, simple, yet highly effective method: Augmentation-based Test-time Adversarial Correction (ATAC). ATAC operates directly in CLIP’s frozen embedding space, performing a lightweight “semantic correction” on the visual feature of an input image. The core idea is that adversarial perturbations cause the image’s embedding to drift from its true semantic location in a consistent direction, which can be estimated and reversed.

The ATAC framework works as follows: For an input image (which may be clean or adversarially perturbed), the method first creates n standard augmented views (e.g., via flips and rotations). It encodes the original image and all augmented views using CLIP’s image encoder to obtain their visual embeddings: f_x for the original and f_x_i for the augmentations. It then calculates “latent drift vectors” d_i = f_x - f_x_i, representing the shift caused by each augmentation. A key observation is that for adversarially attacked images, these drift vectors tend to point in a similar direction, whereas for clean images, they scatter randomly. The mean of these drift vectors, \bar{d}, is computed and interpreted as the estimated “semantic recovery direction.”

To prevent unnecessary correction of clean samples, ATAC employs a cosine-consistency gate. It computes τ, the average cosine similarity between each drift vector d_i and the mean drift \bar{d}. A high τ indicates high directional consistency, typical of adversarial inputs. Only if τ exceeds a pre-defined threshold τ* is the correction applied: the original embedding is adjusted as f* = f_x + α\bar{d}, where α is a step size. The corrected (or original, if the gate didn’t trigger) embedding f* is then normalized and used for downstream tasks like zero-shot classification.

The experimental evaluation is extensive, covering 13 diverse classification benchmarks (e.g., CIFAR-10/100, ImageNet, fine-grained datasets) using the CLIP ViT-B/32 model. Attacks are primarily conducted using PGD under the L∞ norm with ε=4/255. The results are striking: ATAC consistently and significantly outperforms all previous state-of-the-art test-time defenses and even adversarial fine-tuning methods. On average, it improves robust accuracy by nearly 50% over the previous best method. Crucially, this superior robustness is achieved with minimal computational overhead. Inference time for ATAC is only about 5 times that of undefended CLIP (≈18.4 sec/1000 images), making it vastly more efficient than gradient-based counterattacks like TTC and especially prompt-tuning methods like R-TPT, which is orders of magnitude slower.

Further analyses show that ATAC’s effectiveness partly stems from exploiting a flaw in standard untargeted gradient-based attacks, but it maintains state-of-the-art robustness even when this flaw is mitigated. It also demonstrates non-trivial robustness against adaptive attacks designed to break it and performs well under extreme attack settings and against other attack families like Carlini-Wagner and AutoAttack.

In conclusion, ATAC establishes a new paradigm for test-time adversarial defense in VLMs: direct, training-free semantic correction in the embedding space. By cleverly leveraging the directional consistency of augmentation-induced drifts, it achieves an exceptional balance between robust accuracy and computational efficiency, offering a practical and powerful solution for securing CLIP in adversarial environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment