ArtGen: Conditional Generative Modeling of Articulated Objects in Arbitrary Part-Level States

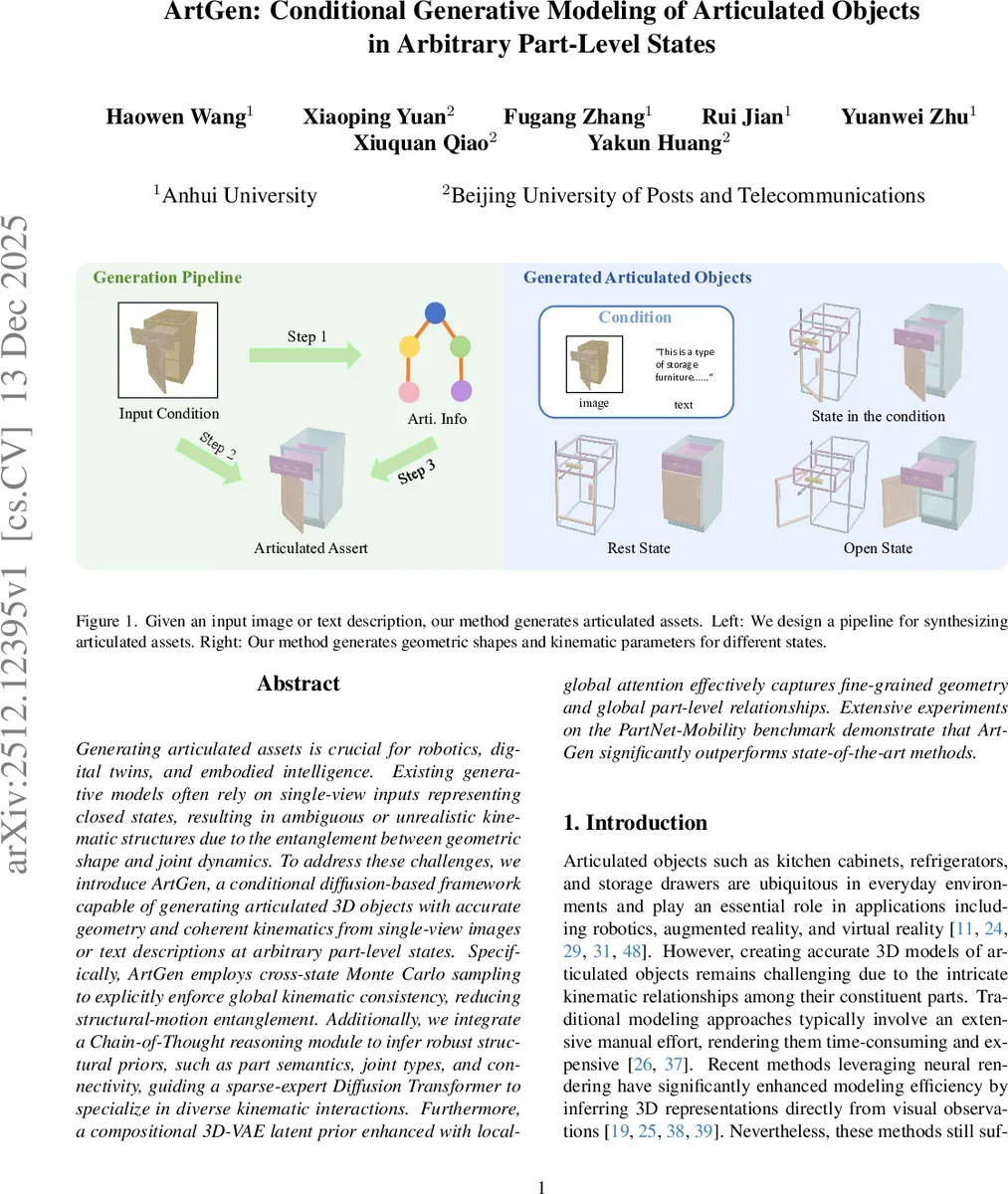

Generating articulated assets is crucial for robotics, digital twins, and embodied intelligence. Existing generative models often rely on single-view inputs representing closed states, resulting in ambiguous or unrealistic kinematic structures due to the entanglement between geometric shape and joint dynamics. To address these challenges, we introduce ArtGen, a conditional diffusion-based framework capable of generating articulated 3D objects with accurate geometry and coherent kinematics from single-view images or text descriptions at arbitrary part-level states. Specifically, ArtGen employs cross-state Monte Carlo sampling to explicitly enforce global kinematic consistency, reducing structural-motion entanglement. Additionally, we integrate a Chain-of-Thought reasoning module to infer robust structural priors, such as part semantics, joint types, and connectivity, guiding a sparse-expert Diffusion Transformer to specialize in diverse kinematic interactions. Furthermore, a compositional 3D-VAE latent prior enhanced with local-global attention effectively captures fine-grained geometry and global part-level relationships. Extensive experiments on the PartNet-Mobility benchmark demonstrate that ArtGen significantly outperforms state-of-the-art methods.

💡 Research Summary

ArtGen introduces a novel conditional diffusion framework for generating articulated 3D objects from a single image or text description, capable of handling arbitrary part‑level articulation states. The authors identify three major shortcomings in prior work: (1) reliance on closed‑state inputs that entangle geometry with joint dynamics, (2) insufficient modeling of global kinematic consistency across multiple states, and (3) dependence on retrieval‑based pipelines that limit novelty. To overcome these issues, ArtGen combines four key components.

First, cross‑state Monte Carlo sampling is employed during training. Joint parameters (axis, range, and normalized state) are sampled uniformly across their continuous intervals, exposing the model to a wide distribution of articulation poses. This forces the diffusion network to learn a representation where geometry and motion are disentangled, ensuring that predictions remain valid for any intermediate pose.

Second, a Chain‑of‑Thought (CoT) reasoning module leverages a large vision‑language model (GPT‑4o) to extract structural priors from the conditioning modality. The CoT pipeline parses the input to (a) count and label candidate parts, (b) estimate coarse spatial relationships, (c) infer connectivity, joint types, and semantic categories. The resulting adjacency matrix is used as an attention mask, while joint‑type and semantic embeddings guide a Mixture‑of‑Experts (MoE) router. This explicit graph inference dramatically reduces ambiguity in local joint predictions and improves global semantic coherence.

Third, the core generative engine is a sparse‑expert Diffusion Transformer (DiT‑MoE). Building on the DiT architecture, the standard feed‑forward layers are replaced with MoE modules, allowing different experts to specialize in distinct kinematic patterns (e.g., revolute, prismatic, screw). The routing decisions are conditioned on the CoT‑derived joint‑type embeddings, enabling the network to allocate the most appropriate expert for each part‑joint pair while keeping computational cost manageable.

Fourth, ArtGen incorporates a part‑level 3D‑VAE latent prior with local‑global attention. Each part is represented by an oriented bounding box (OBB) and a latent code produced by a pre‑trained 3D VAE. Learnable part‑identity embeddings are added to the latents, and a two‑stage attention mechanism first applies self‑attention within each part (local) and then across all parts (global). This design captures fine‑grained geometry while simultaneously modeling inter‑part relationships, leading to high‑fidelity shape synthesis without relying on external shape retrieval.

Multimodal conditioning is achieved via cross‑attention: image features are extracted with DINO V3, text features with a pretrained language encoder, and both are injected into the diffusion transformer. The training pipeline consists of (1) pre‑training the VAE and diffusion model on the PartNet‑Mobility dataset, and (2) fine‑tuning with cross‑state sampling and CoT‑guided graph masks.

Extensive experiments on PartNet‑Mobility demonstrate that ArtGen outperforms prior state‑of‑the‑art methods (NAP, CAGE, SINGAPO, ArtFormer) across several metrics: higher Shape IoU, lower joint‑parameter error, and superior motion continuity. Notably, in text‑conditioned generation, the model correctly predicts joint types and axes in 92 % of cases, and produces coherent articulated motion trajectories without axis drift or inter‑penetration.

In summary, ArtGen advances articulated object generation by (i) enforcing global kinematic consistency through cross‑state learning, (ii) extracting robust structural priors via Chain‑of‑Thought reasoning, (iii) leveraging a MoE‑enhanced diffusion transformer for diverse joint dynamics, and (iv) employing a local‑global attention VAE prior for detailed part geometry. This integrated approach enables high‑quality, controllable synthesis of articulated assets from minimal, single‑view inputs, opening new possibilities for robotics, digital twins, and embodied AI applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment