Repulsor: Accelerating Generative Modeling with a Contrastive Memory Bank

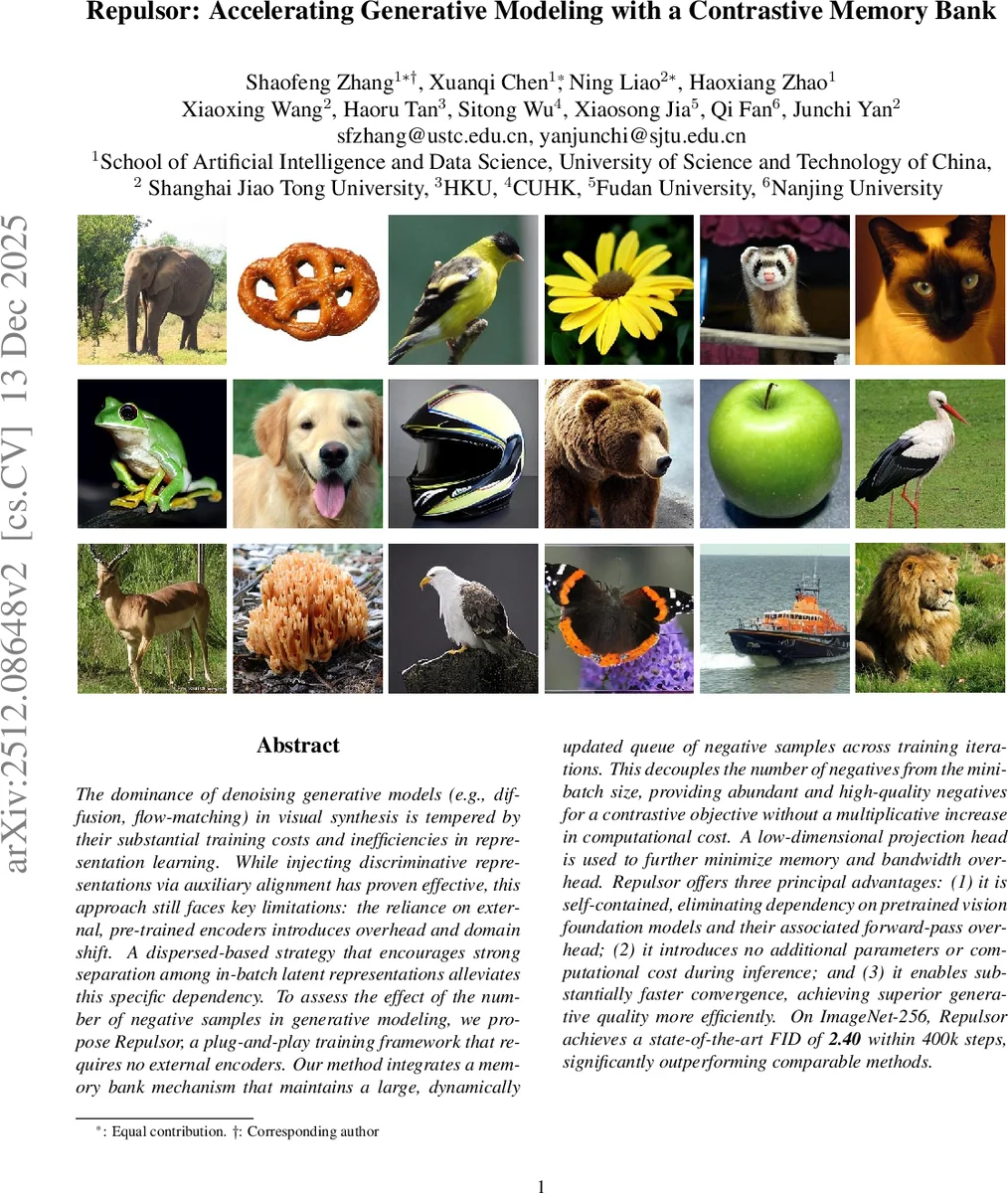

The dominance of denoising generative models (e.g., diffusion and flow matching) in visual synthesis is tempered by their substantial training costs and inefficient representation learning. Injecting discriminative representations via auxiliary alignment has proven effective, but it relies on external, pretrained encoders, which introduce computational overhead and domain shift. A dispersive regularizer that encourages strong separation among in-batch latent representations removes this dependency. To study how the number of negative samples affects generative modeling, we propose Repulsor, a plug-and-play training framework that requires no external encoders. Our method integrates a memory bank that maintains a large, dynamically updated queue of negative samples across training iterations. This decouples the number of negatives from the mini-batch size, providing abundant, high-quality negatives for a contrastive objective without a multiplicative increase in computational cost. A low-dimensional projection head further reduces memory and bandwidth overhead. Repulsor offers three principal advantages: (1) it is self-contained, eliminating the dependency on pretrained vision foundation models and their forward-pass overhead; (2) it introduces no additional parameters or computational cost at inference; and (3) it converges substantially faster, reaching superior generative quality with less training. On ImageNet-256, Repulsor achieves a state-of-the-art FID of 2.40 within 400k steps, significantly outperforming comparable methods.

💡 Research Summary

The paper “Repulsor: Accelerating Generative Modeling with a Contrastive Memory Bank” tackles two persistent challenges in modern denoising‑based generative models such as diffusion and flow‑matching: high training cost and inefficient representation learning. Existing remedies either align intermediate generator features with a frozen, large‑scale pretrained vision encoder (e.g., REPA) or apply a positive‑free contrastive regularizer (“disperse”) that pushes apart in‑batch latent vectors. The former incurs extra forward‑pass overhead and risks domain shift, while the latter’s effectiveness is limited by the number of negative samples, which is tied to batch size.

Repulsor proposes a unified solution that eliminates the need for any external encoder and greatly enlarges the pool of negative samples by introducing a memory bank. The bank stores a queue of K latent vectors (e.g., K = 65,536) that are updated in a first‑in‑first‑out fashion across training iterations, similar to MoCo. To keep memory and bandwidth requirements modest, a lightweight projection head (a single linear layer) maps high‑dimensional intermediate features to a low‑dimensional space (e.g., 128‑D) before they are enqueued. The vectors are L2‑normalized, and a stop‑gradient operation prevents gradients from flowing back into the bank entries.
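
The bank mechanics described above can be sketched in plain Python. This is a minimal toy under stated assumptions, not the authors' code: the names `MemoryBank`, `linear_projection`, and `l2_normalize` are hypothetical, the sizes are tiny stand-ins for K = 65,536 and 128-D, and stop-gradient is modeled by storing plain copies (in PyTorch one would typically enqueue `z.detach()`).

```python
import math
import random
from collections import deque


def l2_normalize(vec):
    """Scale a vector to unit L2 norm (a zero vector is returned unchanged)."""
    norm = math.sqrt(sum(x * x for x in vec)) or 1.0
    return [x / norm for x in vec]


def linear_projection(feature, weight):
    """Hypothetical projection head: one bias-free linear layer, weight is [out_dim][in_dim]."""
    return [sum(w * f for w, f in zip(row, feature)) for row in weight]


class MemoryBank:
    """Hypothetical FIFO queue of projected, L2-normalized negatives (MoCo-style)."""

    def __init__(self, size):
        # deque(maxlen=size) evicts the oldest entry once the bank is full.
        self.queue = deque(maxlen=size)

    def enqueue(self, batch):
        for z in batch:
            # Store a copy: stands in for stop-gradient, so bank entries are
            # constants that the loss can never update.
            self.queue.append(list(z))

    def entries(self):
        return list(self.queue)


# Toy sizes (assumption; the summary reports K = 65,536 and a 128-D projection).
random.seed(0)
in_dim, proj_dim, bank_size, batch_size = 8, 4, 6, 4
weight = [[random.gauss(0, 1) for _ in range(in_dim)] for _ in range(proj_dim)]
bank = MemoryBank(bank_size)

for step in range(3):
    features = [[random.gauss(0, 1) for _ in range(in_dim)] for _ in range(batch_size)]
    z = [l2_normalize(linear_projection(f, weight)) for f in features]
    # ... the contrastive loss against bank.entries() would be computed here ...
    bank.enqueue(z)

print(len(bank.entries()))  # 6: capped at bank_size after 12 enqueues
```

`collections.deque(maxlen=...)` provides the first-in-first-out behavior directly: once the bank is full, each append evicts the oldest entry. Following MoCo, the loss is computed against the bank before the current batch is enqueued, so a sample is never compared with itself.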

The contrastive objective is a reformulation of the disperse loss that, like the original, uses no positive pairs. For each current batch of B samples, the loss computes the average of exp(−‖z_i − sg(m_k)‖²/τ) over all K bank entries, takes the logarithm, and adds it to the standard diffusion loss: L = L_diff + γ·L_disp, where sg(·) denotes stop‑gradient, τ is a temperature hyperparameter, and γ balances the two terms. Because K is independent of B, the number of negatives can be scaled without enlarging the batch or running extra backbone forward passes; the added per‑step cost is the B × K pairwise distance computation between the batch and the bank, which grows linearly with K but is implemented efficiently with matrix operations.
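
A minimal sketch of the bank-based loss, assuming one reading of the description above: the logarithm is taken of the mean of exp(−‖z_i − m_k‖²/τ) over all B·K batch-bank pairs, as in the original disperse loss, i.e. L_disp = log[(1/(BK)) Σ_i Σ_k exp(−‖z_i − sg(m_k)‖²/τ)]. The function names are hypothetical. Because z_i and m_k are L2-normalized, ‖z_i − m_k‖² = 2 − 2⟨z_i, m_k⟩, which is why the pairwise distances reduce to a single B × D by D × K matrix product in a tensor library.

```python
import math


def logmeanexp(values):
    """Numerically stable log(mean(exp(values))) via max subtraction."""
    peak = max(values)
    return peak + math.log(sum(math.exp(v - peak) for v in values) / len(values))


def bank_dispersive_loss(batch, bank, tau=0.07):
    """log of the mean of exp(-||z_i - m_k||^2 / tau) over all B*K pairs.

    Assumes batch and bank vectors are already L2-normalized, so
    ||z - m||^2 = 2 - 2 * <z, m> needs only one dot product per pair.
    """
    logits = []
    for z in batch:
        for m in bank:  # bank entries are constants (stop-gradient)
            sq_dist = 2.0 - 2.0 * sum(a * b for a, b in zip(z, m))
            logits.append(-sq_dist / tau)
    return logmeanexp(logits)


def total_loss(diffusion_loss, batch, bank, gamma=0.1, tau=0.07):
    """L = L_diff + gamma * L_disp (gamma and tau defaults from the summary)."""
    return diffusion_loss + gamma * bank_dispersive_loss(batch, bank, tau)


# Usage: one batch vector, a bank holding one identical and one opposite vector.
batch = [[1.0, 0.0]]
bank = [[1.0, 0.0], [-1.0, 0.0]]
print(bank_dispersive_loss(batch, bank, tau=1.0))  # log((1 + e**-4) / 2) ≈ -0.675
```

Lower values mean the batch sits farther from the stored negatives, so minimizing L with γ > 0 pushes current representations away from the bank. Subtracting the maximum logit keeps the exponentials well-scaled, which matters more for small τ or low-precision arithmetic.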

Implementation details: the authors adopt the SiT‑XL/2 transformer architecture as the backbone diffusion model, operating in a 32×32×4 latent space produced by a VAE. Training runs on eight A100 GPUs for 400 k steps with a batch size of 256, AdamW optimizer (lr = 1e‑4, β1 = 0.9, β2 = 0.95), and no weight decay. The memory bank size is set to 65 536, projection dimension to 128, temperature τ = 0.07, and γ = 0.1. Sampling uses a Heun ODE solver with 250 steps and classifier‑free guidance (ω = 1).
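
To make the scale of these settings concrete, the sketch below collects the reported hyperparameters in a Python dataclass (the class and field names are hypothetical, not from the paper's code) and derives a few quantities, assuming fp32 storage for both the bank and the batch-bank distance matrix. The 8× VAE downsampling factor follows from the 256 → 32 latent resolution, and the patch size of 2 is the "/2" in SiT-XL/2.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RepulsorConfig:
    # Values as reported in the summary; field names are hypothetical.
    image_size: int = 256
    vae_downsample: int = 8        # 256 x 256 image -> 32 x 32 x 4 latent
    latent_channels: int = 4
    patch_size: int = 2            # the "/2" in SiT-XL/2
    batch_size: int = 256
    bank_size: int = 65_536
    proj_dim: int = 128
    tau: float = 0.07
    gamma: float = 0.1
    lr: float = 1e-4
    betas: tuple = (0.9, 0.95)
    weight_decay: float = 0.0
    train_steps: int = 400_000
    sampler_steps: int = 250       # Heun ODE solver
    guidance_scale: float = 1.0    # omega


cfg = RepulsorConfig()
latent_side = cfg.image_size // cfg.vae_downsample          # 32
tokens = (latent_side // cfg.patch_size) ** 2               # 16 x 16 = 256 tokens
bank_mib = cfg.bank_size * cfg.proj_dim * 4 / 2**20         # fp32 bank: 32.0 MiB
pairs_per_step = cfg.batch_size * cfg.bank_size              # batch-bank distances per step
dist_mib = pairs_per_step * 4 / 2**20                        # fp32 distance matrix: 64.0 MiB
negatives_ratio = cfg.bank_size // cfg.batch_size            # bank is 256x the batch size

print(latent_side, tokens, bank_mib, pairs_per_step, dist_mib, negatives_ratio)
# 32 256 32.0 16777216 64.0 256
```

Under these assumptions the bank costs about 32 MiB and the per-step distance matrix about 64 MiB, while the bank offers 256 times as many candidate negatives as a single 256-sample batch.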

Empirical results on ImageNet‑256 show that Repulsor achieves an FID of 2.40 after only 400 k training steps, substantially outperforming the original disperse method (FID 5.09) and the baseline SiT model (FID 6.02). Importantly, Repulsor adds no parameters or inference‑time computation: the memory bank and projection head are used only during training and are discarded at test time. The method also surpasses a broad set of recent diffusion‑based baselines, including pixel‑space models (ADM‑U, VDM++), latent transformers (DiT, SiT), and masked diffusion transformers (MaskDiT, MDTv2), while keeping the same model size and computational budget.

The paper’s contributions can be summarized as follows:

- Self‑contained representation learning: eliminates reliance on external pretrained vision models, avoiding domain shift and extra forward‑pass cost.

- Scalable negative sampling: decouples negative sample count from batch size via a large, dynamically updated memory bank, strengthening the contrastive signal.

- Training acceleration: achieves state‑of‑the‑art sample quality much earlier in training, with no inference overhead.

- Practical plug‑and‑play design: can be added to existing diffusion or flow‑matching pipelines without architectural changes.

Limitations noted by the authors include sensitivity to memory‑bank size and projection dimensionality, and the fact that experiments are confined to latent‑space diffusion; extending the approach to pixel‑space diffusion or multimodal (e.g., text‑to‑image) settings remains future work. Nonetheless, Repulsor offers a compelling path to faster training and better sample quality in denoising generative models, at no extra cost at inference time.
