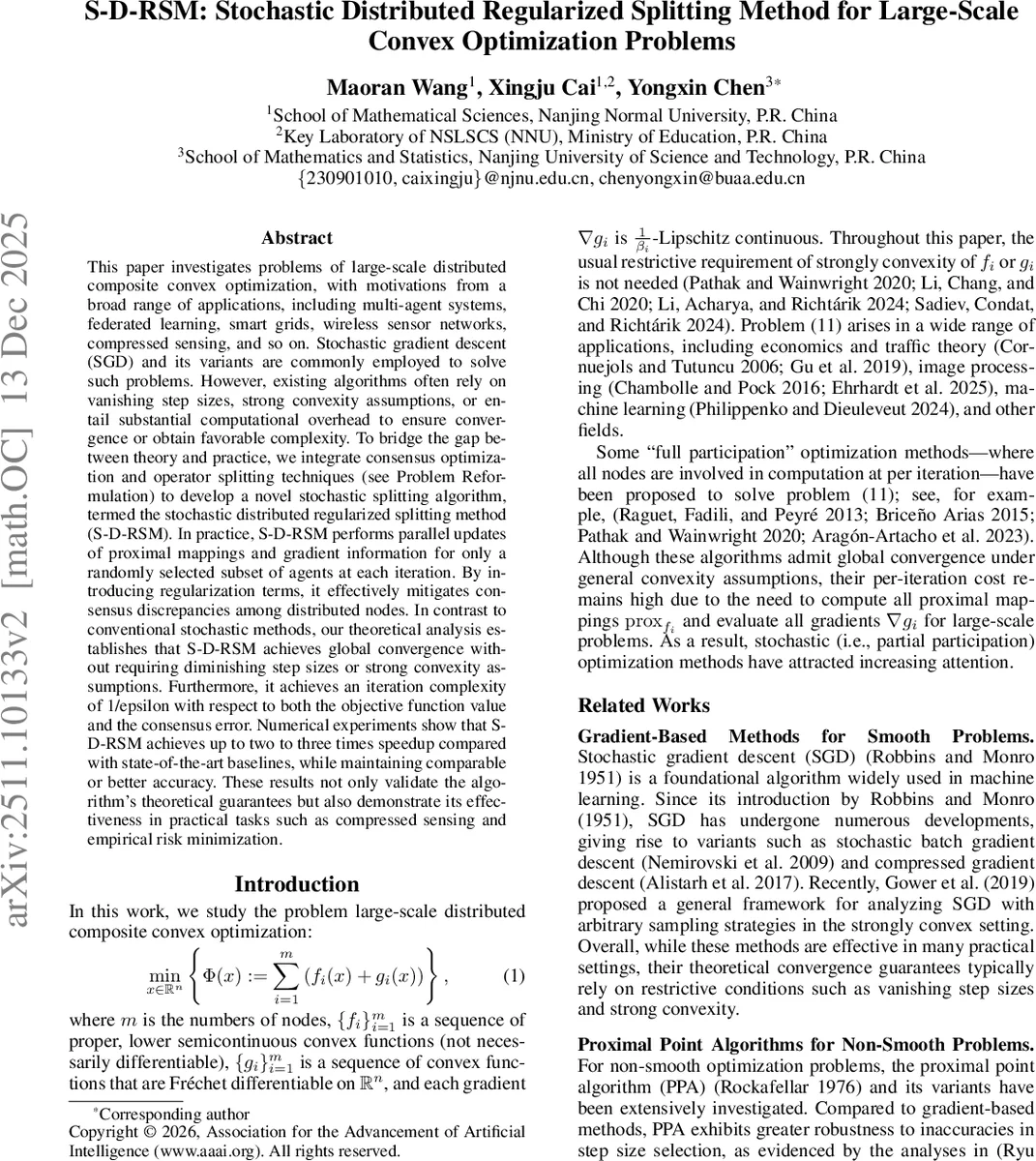

S-D-RSM: Stochastic Distributed Regularized Splitting Method for Large-Scale Convex Optimization Problems

This paper investigates large-scale distributed composite convex optimization problems, motivated by a broad range of applications including multi-agent systems, federated learning, smart grids, wireless sensor networks, and compressed sensing. Stochastic gradient descent (SGD) and its variants are commonly employed to solve such problems. However, existing algorithms often rely on vanishing step sizes or strong convexity assumptions, or entail substantial computational overhead, to ensure convergence or obtain favorable complexity. To bridge the gap between theory and practice, we integrate consensus optimization and operator splitting techniques (see Problem Reformulation) to develop a novel stochastic splitting algorithm, termed the stochastic distributed regularized splitting method (S-D-RSM). In practice, S-D-RSM performs parallel updates of proximal mappings and gradient information for only a randomly selected subset of agents at each iteration. By introducing regularization terms, it effectively mitigates consensus discrepancies among distributed nodes. In contrast to conventional stochastic methods, our theoretical analysis establishes that S-D-RSM achieves global convergence without requiring diminishing step sizes or strong convexity assumptions. Furthermore, it achieves an iteration complexity of $\mathcal{O}(1/ε)$ with respect to both the objective function value and the consensus error. Numerical experiments show that S-D-RSM achieves up to 2–3$\times$ speedup compared to state-of-the-art baselines, while maintaining comparable or better accuracy. These results not only validate the algorithm’s theoretical guarantees but also demonstrate its effectiveness in practical tasks such as compressed sensing and empirical risk minimization.

💡 Research Summary

This paper introduces the Stochastic Distributed Regularized Splitting Method (S-D-RSM), a novel algorithm designed to address large-scale distributed composite convex optimization problems. Such problems, where the objective function is a sum of potentially non-smooth and smooth components across multiple nodes, are prevalent in federated learning, multi-agent systems, compressed sensing, and sensor networks. The authors identify key limitations in existing stochastic methods like Stochastic Gradient Descent (SGD) and stochastic operator-splitting variants (e.g., S-FDR, S-GFB): their reliance on diminishing step-sizes for convergence guarantees, restrictive assumptions like strong convexity for favorable complexity bounds, and significant per-iteration computational overhead despite their stochastic nature.
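As a rough guide to the problem class described above, the composite problem and its consensus reformulation can be written as follows. This is the standard textbook form, not necessarily the paper's exact notation: the symbols $n$ (number of nodes), $d$ (dimension), $f_i$ (smooth part held by node $i$), $g_i$ (possibly non-smooth part), $y_i$ (local copy), and $x$ (shared variable) are assumptions made here for illustration.

$$
\min_{x \in \mathbb{R}^d} \; \sum_{i=1}^{n} \bigl( f_i(x) + g_i(x) \bigr)
\quad\Longleftrightarrow\quad
\min_{x,\, y_1, \dots, y_n} \; \sum_{i=1}^{n} \bigl( f_i(y_i) + g_i(y_i) \bigr)
\ \text{ s.t. } \ y_i = x, \quad i = 1, \dots, n.
$$

The right-hand form lets each node work on its own copy $y_i$, at the price of a consensus constraint that the algorithm must enforce.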

To bridge this gap between theory and practice, S-D-RSM integrates consensus optimization with operator splitting techniques. Its core innovation lies in a partial participation scheme combined with a novel regularization strategy. At each iteration, only a randomly selected subset of nodes (agents) is activated to perform computations—specifically, evaluating their local proximal mappings and gradients. This drastically reduces computational burden compared to methods requiring full node participation per iteration. Crucially, the local subproblem for each node includes a regularization term of the form α_i(y_i - x), which proactively penalizes deviations between the local variable y_i and the global consensus variable x, effectively mitigating consensus discrepancy among distributed nodes.
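The two ideas in this paragraph, partial participation and a local subproblem regularized toward the shared variable, can be illustrated with a minimal Python sketch. It is a simplified illustration under stated assumptions, not the S-D-RSM update from the paper: the function names, the LASSO-style choice $f_i(y) = \tfrac12\|A_i y - b_i\|^2$ and $g_i(y) = (\lambda/n)\|y\|_1$, the linearized local subproblem, and the plain averaging step are all hypothetical choices made here. Each active node solves $\min_y\, g_i(y) + \langle \nabla f_i(x), y - x\rangle + \tfrac{\alpha_i}{2}\|y - x\|^2$, whose optimality condition contains the term α_i(y_i - x) and whose solution is a soft-thresholding step.

```python
import random


def soft_threshold(v, tau):
    """Elementwise proximal mapping of tau * ||.||_1 for a list of floats."""
    return [max(abs(vj) - tau, 0.0) * (1.0 if vj > 0 else -1.0) for vj in v]


def local_gradient(A, b, x):
    """Gradient of 0.5 * ||A x - b||^2, with A given as a list of rows."""
    residual = [sum(a * xj for a, xj in zip(row, x)) - bk for row, bk in zip(A, b)]
    return [sum(A[k][j] * residual[k] for k in range(len(A))) for j in range(len(x))]


def partial_participation_sketch(A_blocks, b_blocks, lam, alphas,
                                 n_active, n_iters, seed=0):
    """Illustrative loop (assumption, not the paper's scheme).

    A_blocks[i], b_blocks[i]: data held by node i.
    alphas[i]: regularization weight alpha_i pulling y_i toward x.
    n_active: number of nodes sampled at each iteration.
    """
    rng = random.Random(seed)
    n_agents = len(A_blocks)
    dim = len(A_blocks[0][0])
    x = [0.0] * dim                              # shared consensus variable
    y = [[0.0] * dim for _ in range(n_agents)]   # local variables y_i

    for _ in range(n_iters):
        # Only a random subset of nodes computes a gradient and a prox.
        for i in rng.sample(range(n_agents), n_active):
            grad = local_gradient(A_blocks[i], b_blocks[i], x)
            shifted = [xj - gj / alphas[i] for xj, gj in zip(x, grad)]
            # Closed-form minimizer of the regularized local subproblem.
            y[i] = soft_threshold(shifted, lam / (n_agents * alphas[i]))
        # Inactive nodes keep their previous y_i; x is the plain average.
        x = [sum(y[i][j] for i in range(n_agents)) / n_agents for j in range(dim)]
    return x, y
```

In this sketch a larger `alphas[i]` keeps `y[i]` closer to `x`, which is the sense in which the regularization limits consensus discrepancy, while `n_active` controls the per-iteration cost. No convergence claim is made for this simplified loop.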

The theoretical analysis of S-D-RSM is a major contribution. The authors prove that, under standard convexity and Lipschitz continuity assumptions, the algorithm achieves almost sure convergence of the iterates to an optimal solution. Remarkably, this convergence is guaranteed using constant step-sizes, eliminating the practical performance degradation associated with diminishing step-size rules. Furthermore, they establish a sublinear ergodic convergence rate of O(1/K) for both the objective function gap and the consensus violation. This translates to an iteration complexity of O(1/ε), which is optimal for this class of problems under the given general convexity assumptions. This complexity outperforms existing methods like S-PG and does not require the strong convexity or interpolation conditions needed by other O(1/ε) methods.
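The step from an O(1/K) rate to an O(1/ε) iteration complexity is a one-line calculation. In the display below, $e_K$ stands for either error measure (objective gap or consensus violation) at the ergodic iterate after $K$ iterations, and $C > 0$ is a problem-dependent constant; both symbols are placeholders introduced here, not the paper's notation.

$$
e_K \le \frac{C}{K}
\quad\Longrightarrow\quad
e_K \le \varepsilon \ \text{ whenever } \ K \ge \frac{C}{\varepsilon},
\qquad\text{so}\qquad
K(\varepsilon) = \left\lceil \frac{C}{\varepsilon} \right\rceil = \mathcal{O}(1/\varepsilon).
$$

For comparison, a rate of $C/\sqrt{K}$ would need $K \ge C^2/\varepsilon^2$ iterations to reach the same accuracy.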

The paper validates the algorithm’s effectiveness through comprehensive numerical experiments on standard problems like LASSO for compressed sensing and logistic regression for empirical risk minimization. The results demonstrate that S-D-RSM achieves a speedup of 2 to 3 times compared to state-of-the-art baselines (including S-FDR, S-GFB, and FedExProx) in terms of time or iterations required to reach a given accuracy level. The experiments also confirm the algorithm’s robustness in non-IID settings (data that are not independent and identically distributed across nodes), a critical challenge in federated learning, because its theoretical guarantees do not depend on how data are distributed across nodes.

In summary, S-D-RSM presents a significant advance by offering an algorithm that is simultaneously computationally efficient (via partial participation), theoretically sound (converging with constant steps and optimal complexity under general convexity), and practically robust (performing well in heterogeneous settings). It successfully addresses the persistent trade-offs between theoretical requirements and practical performance in distributed stochastic optimization.
