Measuring Chain of Thought Faithfulness by Unlearning Reasoning Steps

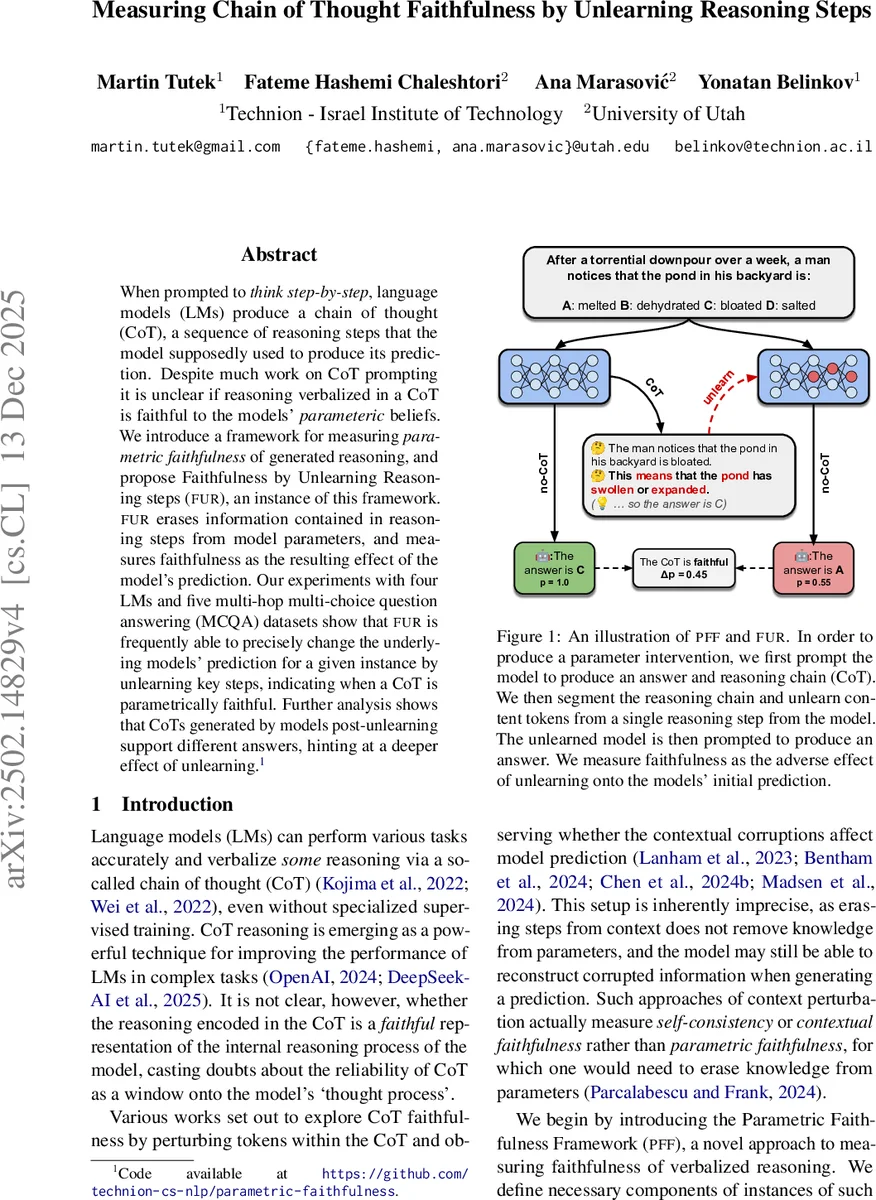

When prompted to think step-by-step, language models (LMs) produce a chain of thought (CoT), a sequence of reasoning steps that the model supposedly used to produce its prediction. Despite much work on CoT prompting, it is unclear if reasoning verbalized in a CoT is faithful to the models’ parametric beliefs. We introduce a framework for measuring parametric faithfulness of generated reasoning, and propose Faithfulness by Unlearning Reasoning steps (FUR), an instance of this framework. FUR erases information contained in reasoning steps from model parameters, and measures faithfulness as the resulting effect on the model’s prediction. Our experiments with four LMs and five multi-hop multi-choice question answering (MCQA) datasets show that FUR is frequently able to precisely change the underlying models’ prediction for a given instance by unlearning key steps, indicating when a CoT is parametrically faithful. Further analysis shows that CoTs generated by models post-unlearning support different answers, hinting at a deeper effect of unlearning.

💡 Research Summary

The paper tackles the open question of whether the chain‑of‑thought (CoT) explanations that large language models (LLMs) generate truly reflect the models’ internal reasoning, or merely serve as plausible surface text. Existing work mostly evaluates “faithfulness” by perturbing the CoT in the prompt and observing changes in the model’s answer. Such contextual perturbations, however, cannot guarantee that the model’s underlying parameters have been affected; the model may simply reconstruct the missing information from its latent knowledge. To address this gap, the authors propose a new paradigm called Parametric Faithfulness Framework (PFF), which explicitly intervenes on the model’s parameters and then measures the impact of that intervention on the model’s predictions.

PFF consists of two stages. In Stage 1, the model is prompted to produce a CoT, which is then segmented into reasoning steps of a chosen granularity (typically sentences with at least two content words). For each step, a “forget set” (D_FG) is built from input‑output pairs that force the model to predict the step’s content words given the preceding context. A “retain set” (D_RT) is also constructed from random steps of other instances to preserve general language ability. The authors apply a machine‑unlearning technique—Negative Preference Optimization (NPO) regularized with a KL‑divergence term (NPO+KL)—to update only the second feed‑forward matrix (FF2) of the transformer’s MLP layers. This operation discourages the model from generating the targeted content words while keeping its behavior on the retain set close to the original model.

In Stage 2, the altered model (M*) and the original model (M) are evaluated. Two main evaluation protocols are used: (1) direct answer comparison (Δprediction) and (2) reasoning comparison (Δreasoning) when both models are asked to generate a CoT and then answer. The authors define two quantitative metrics: FF‑HARD, which measures whether the entire CoT is faithful (i.e., does unlearning any step change the answer?), and FF‑SOFT, which identifies the most salient steps (i.e., does unlearning a specific step change the answer?). To ensure that the unlearning is both effective and precise, they introduce two control measures: efficacy (the normalized drop in the step’s length‑normalized probability after unlearning) and specificity (the proportion of unchanged performance on a held‑out set of unrelated instances).

Experiments are conducted on four LLMs (including Llama‑2‑13B, GPT‑3.5‑Turbo, Claude‑2) and five multi‑hop multiple‑choice QA datasets (ARC‑E, OpenBookQA, HotpotQA, MultiRC, and AI2‑Reasoning). For each instance, the authors generate a CoT, segment it, and apply FUR (Faithfulness by Unlearning Reasoning steps) to each step individually. Results show that unlearning a key reasoning step frequently leads to a substantial Δprediction (average 0.45–0.62), meaning the model’s answer flips after the step is erased. Conversely, unlearning peripheral steps rarely changes the answer, yielding low FF‑SOFT scores. Efficacy scores are high (0.71–0.84), indicating that the targeted step’s probability drops sharply, while specificity remains above 0.88, confirming that overall model competence is preserved.

A deeper analysis reveals that after unlearning, the model often generates a new CoT that diverges from the original reasoning and supports a different answer. This suggests that the erased knowledge was indeed stored in the parameters and that the model reconstructs a new reasoning path when that knowledge is removed. Human annotators and an LLM‑as‑judge evaluation further show that steps identified as “faithful” by FUR are not necessarily perceived as plausible or convincing by humans, highlighting a gap between parametric faithfulness and human‑centred plausibility.

The paper’s contributions are: (1) introducing the PFF paradigm for measuring parametric faithfulness, (2) instantiating it with a concrete unlearning method (NPO+KL) called FUR, (3) defining FF‑HARD and FF‑SOFT metrics for full‑chain and step‑level faithfulness, (4) providing extensive empirical evidence across models and datasets that step‑wise unlearning can reliably affect predictions, and (5) demonstrating that faithful steps may not align with human judgments, motivating future work on aligning plausibility with true internal reasoning.

In conclusion, the work establishes a rigorous, parameter‑level methodology for probing whether a model’s verbalized chain of thought truly mirrors its internal computations. It opens avenues for more trustworthy AI systems where explanations are not only plausible but also grounded in the model’s learned knowledge. Future research directions include scaling the unlearning process to longer, more complex CoTs, improving the efficiency of targeted parameter edits, and developing training or alignment strategies that simultaneously optimise for both human‑perceived plausibility and parametric faithfulness.

Comments & Academic Discussion

Loading comments...

Leave a Comment