BAgger: Backwards Aggregation for Mitigating Drift in Autoregressive Video Diffusion Models

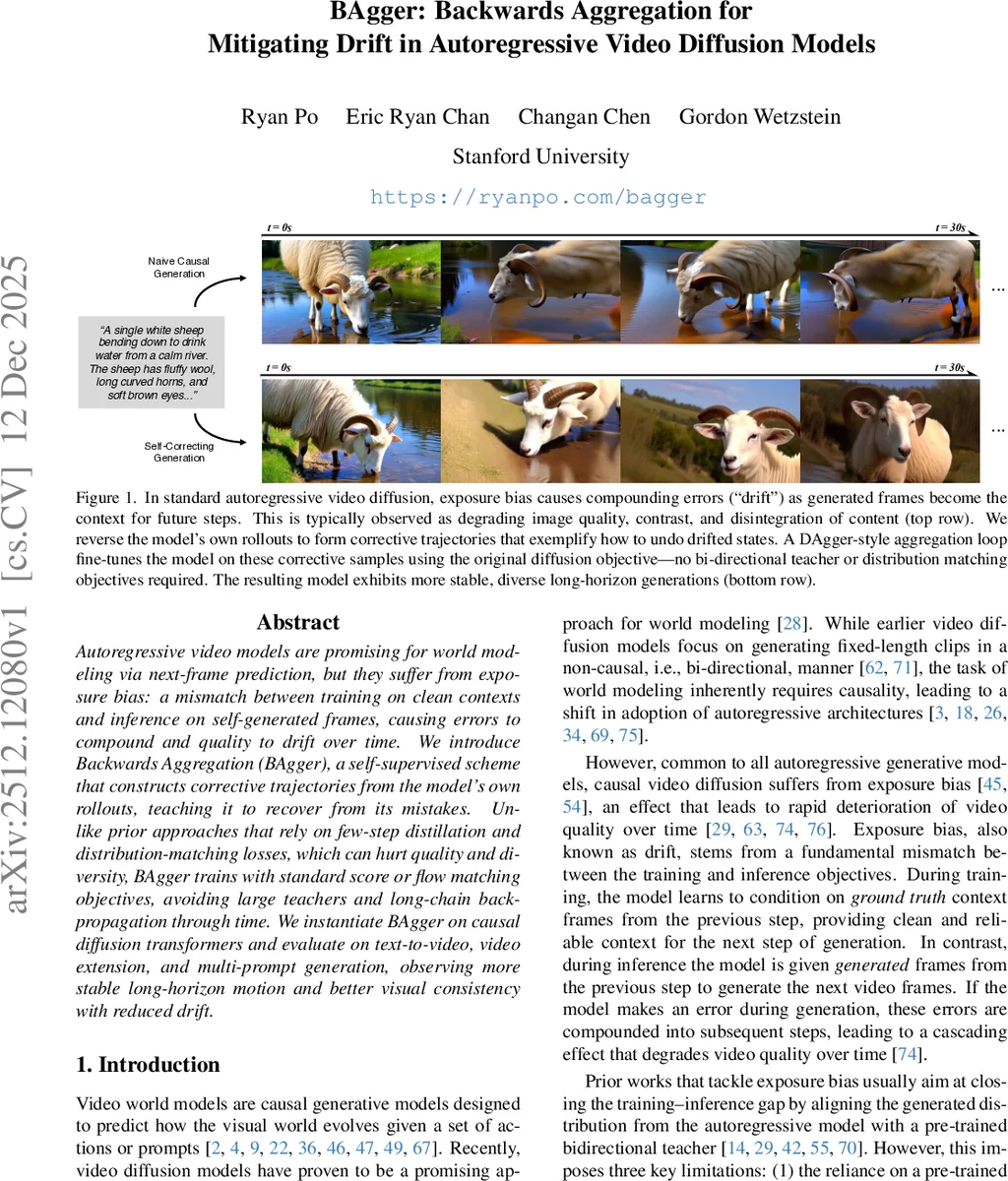

Autoregressive video models are promising for world modeling via next-frame prediction, but they suffer from exposure bias: a mismatch between training on clean contexts and inference on self-generated frames, causing errors to compound and quality to drift over time. We introduce Backwards Aggregation (BAgger), a self-supervised scheme that constructs corrective trajectories from the model’s own rollouts, teaching it to recover from its mistakes. Unlike prior approaches that rely on few-step distillation and distribution-matching losses, which can hurt quality and diversity, BAgger trains with standard score or flow matching objectives, avoiding large teachers and long-chain backpropagation through time. We instantiate BAgger on causal diffusion transformers and evaluate on text-to-video, video extension, and multi-prompt generation, observing more stable long-horizon motion and better visual consistency with reduced drift.

💡 Research Summary

The paper “BAgger: Backwards Aggregation for Mitigating Drift in Autoregressive Video Diffusion Models” addresses a fundamental challenge in sequential video generation known as exposure bias or drift. Autoregressive video diffusion models, which generate videos frame-by-frame conditioned on previous frames, are trained on pristine ground-truth data. However, during inference, they must condition on their own previously generated outputs, which may contain errors. This train-test distribution mismatch causes small errors to compound over time, leading to a gradual degradation in visual quality, loss of content coherence, and unnatural motion—a phenomenon termed “drift.”

Prior approaches to mitigate drift include techniques like Diffusion Forcing, which adds noise to context frames during training to increase robustness, and methods like Self-Forcing, which use a pre-trained bidirectional teacher model to align the distribution of the model’s own rollouts with the true data distribution via distribution-matching losses (e.g., score distillation). However, these methods have significant limitations: noise injection doesn’t fully address the core distribution shift, while teacher-dependent methods require large external models, computationally expensive backpropagation through time, and risk mode collapse, reducing output diversity.

The authors propose a novel, self-supervised training framework called Backwards Aggregation (BAgger). Inspired by the Dataset Aggregation (DAgger) algorithm from imitation learning, BAgger teaches the model to recover from its own mistakes without relying on an external expert or complex loss functions. The core insight is elegantly simple: by reversing a model’s own autoregressive rollout—which typically starts from a high-quality frame and drifts to lower quality—one can automatically create a “corrective trajectory.” This reversed sequence demonstrates how to transition from a drifted, low-quality state back to a high-quality state.

The BAgger training loop is iterative. Starting with a seed dataset of real videos, each round consists of: 1) Training an autoregressive diffusion model (using the Diffusion Forcing objective) on the current aggregated dataset. 2) Using this trained model to perform on-policy rollouts, generating videos from ground-truth starting frames. These rollouts naturally exhibit drift. 3) Reversing these generated video clips to create corrective trajectory samples. The accompanying text prompt is also updated to describe the reversed motion (e.g., “a person walking” becomes “a reversed video of a person walking”). 4) Aggregating these new corrective samples with the existing dataset. 5) Repeating the process. Over multiple rounds, the dataset progressively incorporates more examples of how to generate good frames given potentially drifted contexts, effectively bridging the train-inference gap.

A key advantage of BAgger is that it trains using the standard score matching or flow matching objectives native to diffusion models. It does not require distribution-matching losses, large teacher models, or full-chain backpropagation, making it more computationally efficient and stable while preserving output diversity.

The paper instantiates BAgger on a causal diffusion transformer architecture and evaluates it across multiple tasks: long-horizon text-to-video generation, video extension (continuing an input clip), and multi-prompt generation (where the text conditioning changes over time). Qualitative results show that BAgger-trained models produce videos with significantly improved long-term stability, maintaining subject identity, visual quality, and coherent motion for durations where baseline models (Diffusion Forcing, Self-Forcing) fail due to severe drift. Quantitative evaluations across metrics like frame consistency, text alignment, and human preference scores confirm BAgger’s superiority. The method demonstrates that a model can learn to self-correct by leveraging the inherent temporal symmetry of video data, providing a powerful and elegant solution to the persistent problem of exposure bias in autoregressive world models.

Comments & Academic Discussion

Loading comments...

Leave a Comment