V-RGBX: Video Editing with Accurate Controls over Intrinsic Properties

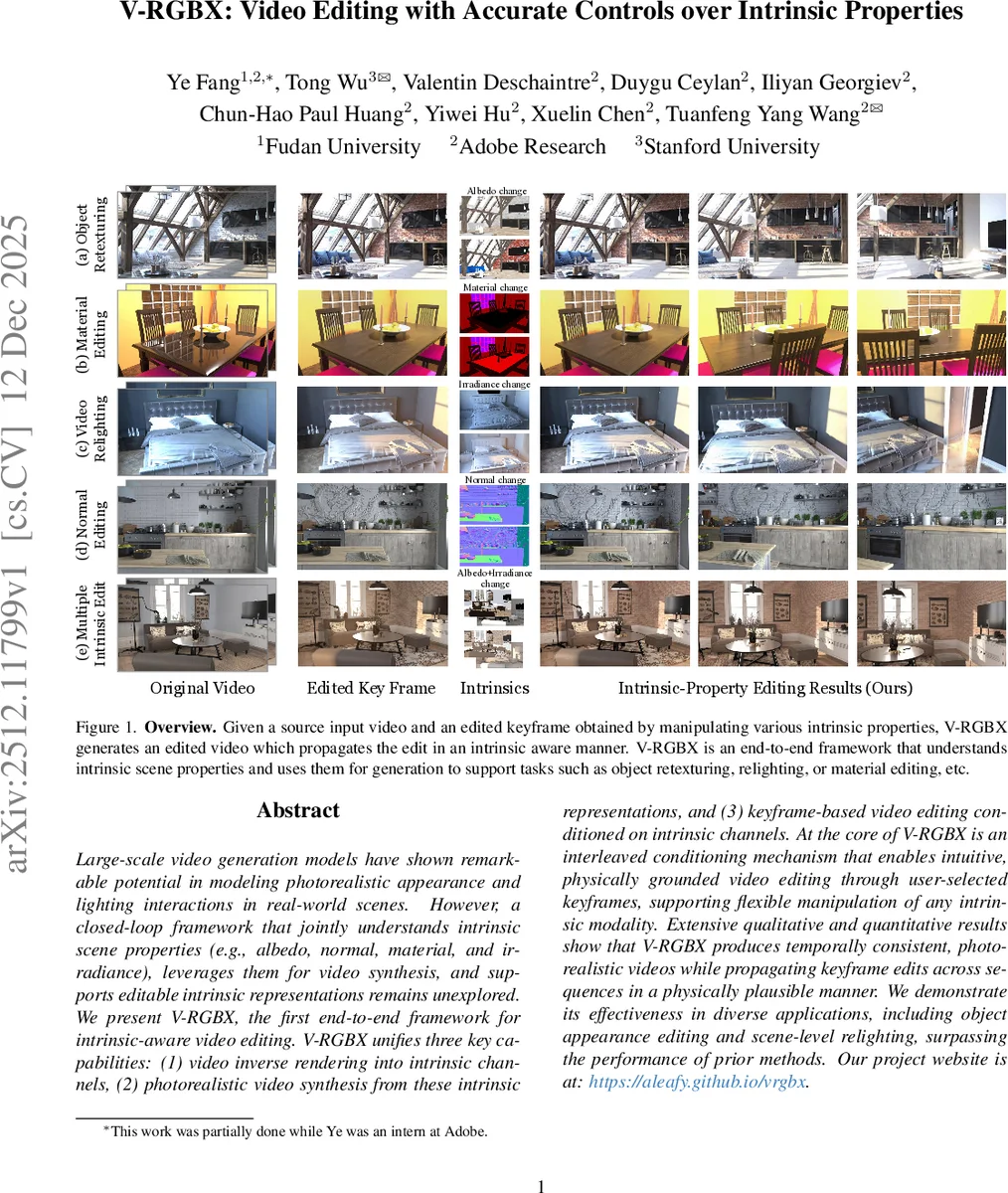

Large-scale video generation models have shown remarkable potential in modeling photorealistic appearance and lighting interactions in real-world scenes. However, a closed-loop framework that jointly understands intrinsic scene properties (e.g., albedo, normal, material, and irradiance), leverages them for video synthesis, and supports editable intrinsic representations remains unexplored. We present V-RGBX, the first end-to-end framework for intrinsic-aware video editing. V-RGBX unifies three key capabilities: (1) video inverse rendering into intrinsic channels, (2) photorealistic video synthesis from these intrinsic representations, and (3) keyframe-based video editing conditioned on intrinsic channels. At the core of V-RGBX is an interleaved conditioning mechanism that enables intuitive, physically grounded video editing through user-selected keyframes, supporting flexible manipulation of any intrinsic modality. Extensive qualitative and quantitative results show that V-RGBX produces temporally consistent, photorealistic videos while propagating keyframe edits across sequences in a physically plausible manner. We demonstrate its effectiveness in diverse applications, including object appearance editing and scene-level relighting, surpassing the performance of prior methods.

💡 Research Summary

V‑RGBX introduces a novel end‑to‑end framework for intrinsic‑aware video editing, addressing a gap in current video diffusion models that lack explicit control over physical scene properties such as albedo, surface normals, material parameters, and illumination. The system consists of three tightly coupled stages. First, an inverse‑rendering module (RGB → X) decomposes each input frame into four intrinsic channels. This module builds on a pretrained WAN‑VAE encoder and a Diffusion Transformer (DiT) backbone; a text prompt indicating the target modality (e.g., “albedo”) is concatenated with the noisy latent at each denoising step, allowing the network to learn separate decoders for each intrinsic map. A velocity‑prediction loss is added to encourage temporal smoothness across frames.

Second, after a user edits one or more keyframes (using Photoshop, a text‑to‑image model, etc.), the edited intrinsic maps are combined with unedited maps from other frames through an “interleaved conditioning” sampler. Rather than inserting empty tokens (which would waste memory), the sampler randomly selects the edited modality for the keyframe timestamps while drawing non‑conflicting modalities for the remaining frames, producing a single conditioning sequence V′_X that alternates modalities over time. This design preserves both temporal order and modality identity, enabling the model to propagate sparse edits throughout the video without introducing inconsistencies.

Third, the forward‑rendering module (X → RGB) synthesizes the final video. It reuses the WAN‑2 DiT video generator but augments its input with two new condition streams: (1) the interleaved intrinsic sequence encoded via a Temporal‑aware Intrinsic Embedding (TIE), which maps each frame’s modality index to a learnable embedding and packs these embeddings into the chunk dimension of the DiT (four frames per chunk); and (2) a reference latent derived from the edited RGB keyframes, encoded by the same WAN‑VAE encoder and concatenated with the intrinsic embeddings. The combined latent

Comments & Academic Discussion

Loading comments...

Leave a Comment