Smudged Fingerprints: A Systematic Evaluation of the Robustness of AI Image Fingerprints

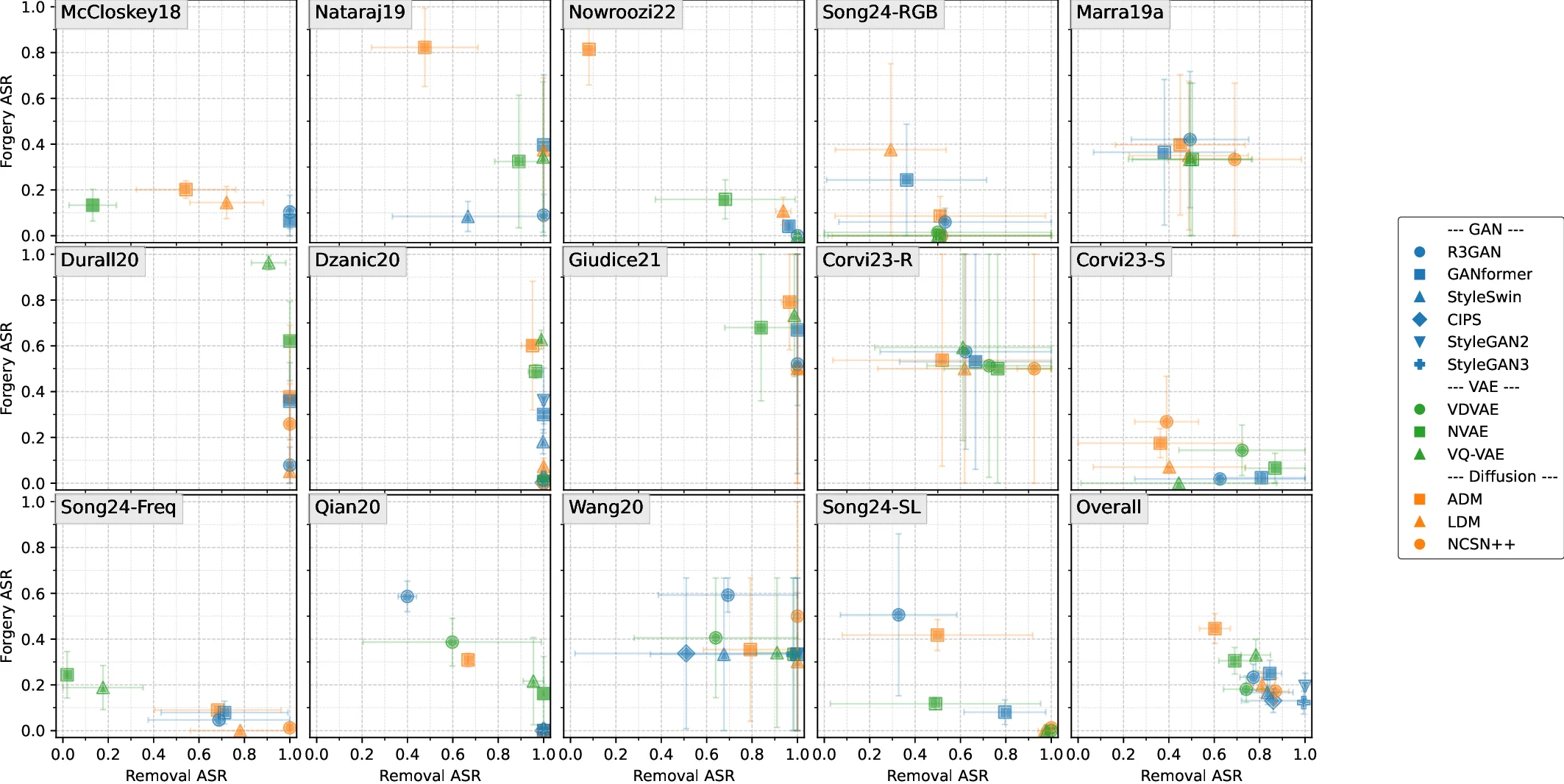

Model fingerprint detection has shown promise to trace the provenance of AI-generated images in forensic applications. However, despite the inherent adversarial nature of these applications, existing evaluations rarely consider adversarial settings. We present the first systematic security evaluation of these techniques, formalizing threat models that encompass both white- and black-box access and two attack goals: fingerprint removal, which erases identifying traces to evade attribution, and fingerprint forgery, which seeks to cause misattribution to a target model. We implement five attack strategies and evaluate 14 representative fingerprinting methods across RGB, frequency, and learned-feature domains on 12 state-of-the-art image generators. Our experiments reveal a pronounced gap between clean and adversarial performance. Removal attacks are highly effective, often achieving success rates above 80% in white-box settings and over 50% under black-box access. While forgery is more challenging than removal, its success varies significantly across targeted models. We also observe a utility-robustness trade-off: accurate attribution methods are often vulnerable to attacks and, although some techniques are robust in specific settings, none achieves robustness and accuracy across all evaluated threat models. These findings highlight the need for techniques that balance robustness and accuracy, and we identify the most promising approaches toward this goal. Code available at: https://github.com/kaikaiyao/SmudgedFingerprints.

💡 Research Summary

The paper “Smudged Fingerprints: A Systematic Evaluation of the Robustness of AI Image Fingerprints” provides the first comprehensive security assessment of model‑fingerprint detection (MFD) methods, which aim to attribute AI‑generated images to their source models. Recognizing that forensic applications are inherently adversarial, the authors formalize three threat models that capture varying attacker knowledge: a white‑box setting (full access to the detector’s architecture, parameters, and training data), a black‑box setting (only query‑based access to inputs and outputs), and an intermediate “surrogate‑model” setting. Within each threat model they consider two attacker goals: fingerprint removal (suppressing the model‑specific signal so that the image cannot be correctly attributed) and fingerprint forgery (modifying the image so that it is mis‑attributed to a target model).
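
The two attacker goals translate into two different success criteria. The sketch below is a minimal illustration, assuming the detector outputs one predicted source-model label per image; the function names are hypothetical, and the paper may count success differently (for example, only over images that were correctly attributed before the attack).

```python
import numpy as np

def removal_success_rate(pred_after, true_labels):
    """Fraction of attacked images no longer attributed to their true source model."""
    pred_after = np.asarray(pred_after)
    true_labels = np.asarray(true_labels)
    return float(np.mean(pred_after != true_labels))

def forgery_success_rate(pred_after, target_label):
    """Fraction of attacked images attributed to the attacker's chosen target model."""
    pred_after = np.asarray(pred_after)
    return float(np.mean(pred_after == target_label))

# Toy example: four images from model 0, attacked with model 2 as the forgery target.
preds = [0, 2, 1, 2]   # detector output after the attack
truth = [0, 0, 0, 0]   # true source model of each image
removal = removal_success_rate(preds, truth)
forgery = forgery_success_rate(preds, target_label=2)
```

Under these definitions every successful forgery is also a successful removal (as long as the target differs from the true source), which is consistent with forgery being the harder goal.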

To operationalize these goals, the authors implement five attack strategies: (1) pixel‑level projected gradient descent (PGD), which directly minimizes the detector’s confidence in the true model; (2) frequency‑domain manipulation, which attenuates or reshapes the high‑frequency components identified as fingerprint carriers; (3) noise‑residual reconstruction, which removes characteristic residual patterns; (4) style‑transfer‑based image transformation, which changes color and texture distributions while preserving perceptual quality; and (5) adversarial generator retraining, in which the attacker trains a surrogate generator to produce images that mimic the target model’s fingerprint. In white‑box attacks the loss function explicitly incorporates the detector’s output, so gradients are available and the attack converges quickly; in black‑box attacks a zeroth‑order optimizer (e.g., NES, natural evolution strategies) estimates gradients from queries alone, under a limited budget of 500–2000 queries.
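
The difference between the white‑box and black‑box settings can be sketched on a toy detector. The example below is not the authors' implementation: it assumes a hypothetical linear‑softmax detector (`W`, `b`) over a flattened 64‑value "image", runs an L‑infinity PGD removal attack using the analytic gradient, and then shows an NES‑style estimator that recovers a gradient from loss queries only. All names and hyperparameters (`eps`, `step`, `sigma`, `n_pairs`) are illustrative assumptions.

```python
import numpy as np

def softmax(z):
    z = z - z.max()              # shift for numerical stability
    e = np.exp(z)
    return e / e.sum()

def pgd_removal(x, true_label, W, b, eps=0.03, step=0.005, iters=40):
    """White-box L-inf PGD: raise the cross-entropy of the true source label."""
    x_adv = x.copy()
    onehot = np.zeros(W.shape[0])
    onehot[true_label] = 1.0
    for _ in range(iters):
        p = softmax(W @ x_adv + b)
        grad = W.T @ (p - onehot)                  # d(cross-entropy)/dx for linear logits
        x_adv = x_adv + step * np.sign(grad)       # signed gradient ascent on the loss
        x_adv = np.clip(x_adv, x - eps, x + eps)   # project back into the eps-ball
        x_adv = np.clip(x_adv, 0.0, 1.0)           # keep a valid pixel range
    return x_adv

def nes_gradient(loss_fn, x, sigma=0.01, n_pairs=50, rng=None):
    """Black-box gradient estimate from 2 * n_pairs loss queries (antithetic sampling)."""
    rng = np.random.default_rng(0) if rng is None else rng
    grad = np.zeros_like(x)
    for _ in range(n_pairs):
        u = rng.standard_normal(x.shape)
        grad += (loss_fn(x + sigma * u) - loss_fn(x - sigma * u)) * u
    return grad / (2 * sigma * n_pairs)

rng = np.random.default_rng(0)
W = 0.1 * rng.standard_normal((3, 64))   # 3 hypothetical source models
b = np.zeros(3)
x = rng.uniform(0.2, 0.8, size=64)
y = int(np.argmax(W @ x + b))            # treat the clean prediction as the true source

x_adv = pgd_removal(x, y, W, b)
p_clean = softmax(W @ x + b)[y]          # detector confidence before the attack
p_adv = softmax(W @ x_adv + b)[y]        # detector confidence after the attack

# Black-box view: the same loss, but only queried, never differentiated.
ce = lambda v: -np.log(softmax(W @ v + b)[y])
g_est = nes_gradient(ce, x, rng=rng)
```

With `n_pairs=50`, each gradient estimate costs 100 queries, so a budget of 500–2000 queries allows only a handful of black‑box update steps. That gives some intuition for why black‑box success rates are lower than white‑box ones.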

The evaluation covers 12 state‑of‑the‑art image generators (including StyleGAN2, ProGAN, BigGAN, VQ‑VAE, DDPM, etc.) and 14 representative MFD techniques drawn from three domains: RGB‑pixel statistics (e.g., per‑channel co‑occurrence matrices, color saturation anomalies), frequency‑domain features (FFT/DCT power spectra, radial‑angular averages), and learned‑feature approaches (ResNet‑50, Inception‑v3, Xception, and the cross‑modal ManiFPT framework). All methods are tested on the FFHQ face dataset, with attribution performance measured by area‑under‑the‑curve (AUC) and attack success rate (the proportion of images for which the attacker’s goal is achieved).
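
As an illustration of the frequency‑domain features mentioned above, the following sketch computes a radially averaged log power spectrum with NumPy. It is a generic version of this kind of feature, not the exact pipeline of any evaluated method; the bin count and the log scaling are assumptions.

```python
import numpy as np

def radial_power_spectrum(img, n_bins=32):
    """Radially averaged log power spectrum of a 2-D grayscale image."""
    f = np.fft.fftshift(np.fft.fft2(img))        # move the zero frequency to the centre
    power = np.log1p(np.abs(f) ** 2)
    h, w = img.shape
    yy, xx = np.indices((h, w))
    r = np.hypot(yy - h // 2, xx - w // 2)       # distance of each bin from the centre
    edges = np.linspace(0, min(h, w) / 2, n_bins + 1)
    idx = np.digitize(r.ravel(), edges) - 1      # radial bin index per frequency
    valid = (idx >= 0) & (idx < n_bins)          # drop corners beyond the largest radius
    sums = np.bincount(idx[valid], weights=power.ravel()[valid], minlength=n_bins)
    counts = np.bincount(idx[valid], minlength=n_bins)
    return sums / np.maximum(counts, 1)

# A smooth ramp versus the same ramp with a faint periodic pattern
# (period 4 pixels), standing in for a generator artifact.
smooth = np.outer(np.linspace(0.0, 1.0, 64), np.ones(64))
stripes = 0.5 * np.cos(np.pi * np.arange(64) / 2)
striped = smooth + stripes[None, :]

spec_smooth = radial_power_spectrum(smooth)
spec_striped = radial_power_spectrum(striped)
diff = spec_striped - spec_smooth                # peaks at the bin holding the pattern
```

A period of 4 pixels in a 64‑pixel‑wide image corresponds to a spatial frequency of 16 cycles per image width, so the added energy lands in the radial bin covering radius 16. A frequency‑domain removal attack would aim to flatten exactly this kind of peak.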

Results show a stark contrast between benign and adversarial conditions. In the white‑box setting, fingerprint‑removal attacks achieve an average success rate of 84% (up to 92% for certain RGB‑based detectors), and black‑box removal still exceeds 50%, indicating that even limited query access suffices to degrade fingerprint signals. Frequency‑domain attacks and pixel‑level PGD are the most effective; style‑transfer attacks are less potent but still cause noticeable drops in attribution accuracy. Forgery is harder overall: average success is around 31%, but for specific models (e.g., StyleGAN2) and learned‑feature detectors it can rise above 60%. This suggests that high‑capacity CNN detectors can be fooled when an attacker reproduces similar high‑dimensional feature patterns.

A key observation is the utility‑robustness trade‑off. Methods that attain the highest clean AUC (>0.95) are also the most fragile: small perturbations or adaptive attacks cause their performance to collapse. Conversely, techniques with more modest clean AUC (0.80–0.85) often rely on coarse frequency statistics and are more resilient to generic image transformations such as JPEG compression and resizing. No single method maintains both high attribution accuracy and strong robustness across all threat models and attack goals.

The authors conclude that current MFD technologies, while impressive in controlled benchmarks, are insufficiently hardened for real‑world forensic deployment. They recommend several research directions: (i) adversarial training of fingerprint detectors using the very attacks described in the paper; (ii) multi‑modal fusion of RGB, frequency, and learned features to raise the bar for single‑domain attacks; (iii) randomized ensembles of diverse detectors to thwart attacker specialization; and (iv) integration of complementary forensic evidence (metadata, capture device signatures) to form a more holistic attribution pipeline.
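
Recommendation (iii) can be sketched as follows. This is one possible reading of a "randomized ensemble", not a design taken from the paper: each query is answered by a majority vote over a randomly drawn subset of a detector pool, so an attacker cannot know in advance which detectors to specialize against. The class name and the toy detectors are hypothetical.

```python
import numpy as np
from collections import Counter

class RandomizedEnsemble:
    """Majority vote over a random subset of detectors, redrawn on every query."""

    def __init__(self, detectors, subset_size, seed=None):
        if not 1 <= subset_size <= len(detectors):
            raise ValueError("subset_size must be between 1 and len(detectors)")
        self.detectors = list(detectors)
        self.subset_size = subset_size
        self.rng = np.random.default_rng(seed)

    def predict(self, image):
        # Draw a fresh subset without replacement for this query.
        chosen = self.rng.choice(len(self.detectors), size=self.subset_size, replace=False)
        votes = [self.detectors[i](image) for i in chosen]
        return Counter(votes).most_common(1)[0][0]

# Toy pool: three detectors still attribute the image to model 0,
# while one has been fooled by a forgery into answering model 2.
honest = lambda img: 0
fooled = lambda img: 2
ensemble = RandomizedEnsemble([honest, honest, honest, fooled], subset_size=3, seed=0)
predictions = [ensemble.predict(None) for _ in range(20)]
```

In this toy pool any subset of three detectors contains at least two unfooled ones, so the vote always returns model 0. Whether a real ensemble would withstand an adaptive attacker who targets all members at once is exactly the kind of question the paper's threat models are meant to test.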

Overall, the paper fills a critical gap by systematically quantifying the vulnerability of AI‑image fingerprinting methods, providing a clear benchmark for future robust designs, and highlighting the urgent need for security‑aware attribution mechanisms in the era of generative AI.
