ECCO: Leveraging Cross-Camera Correlations for Efficient Live Video Continuous Learning

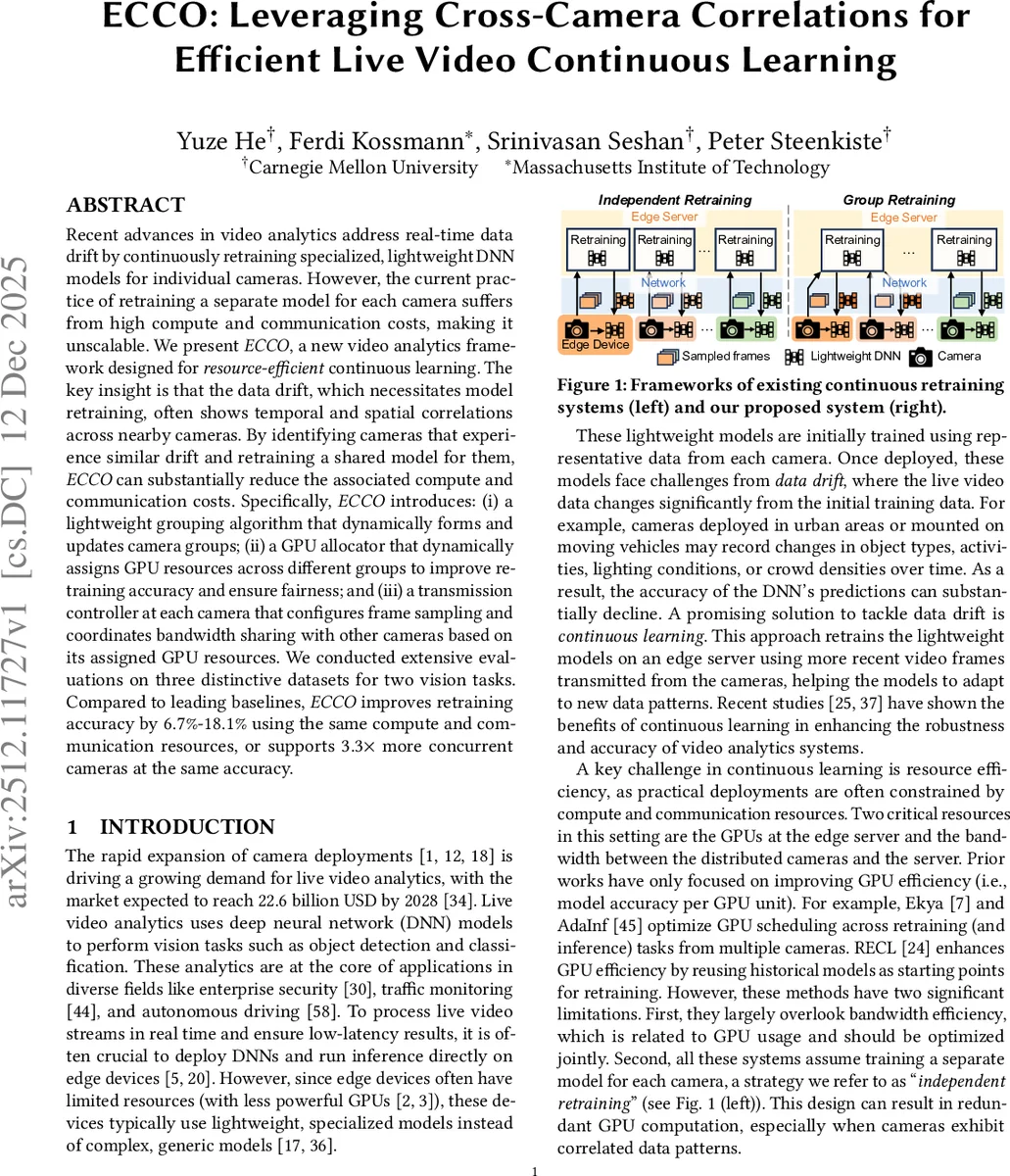

Recent advances in video analytics address real-time data drift by continuously retraining specialized, lightweight DNN models for individual cameras. However, the current practice of retraining a separate model for each camera suffers from high compute and communication costs, making it unscalable. We present ECCO, a new video analytics framework designed for resource-efficient continuous learning. The key insight is that the data drift, which necessitates model retraining, often shows temporal and spatial correlations across nearby cameras. By identifying cameras that experience similar drift and retraining a shared model for them, ECCO can substantially reduce the associated compute and communication costs. Specifically, ECCO introduces: (i) a lightweight grouping algorithm that dynamically forms and updates camera groups; (ii) a GPU allocator that dynamically assigns GPU resources across different groups to improve retraining accuracy and ensure fairness; and (iii) a transmission controller at each camera that configures frame sampling and coordinates bandwidth sharing with other cameras based on its assigned GPU resources. We conducted extensive evaluations on three distinctive datasets for two vision tasks. Compared to leading baselines, ECCO improves retraining accuracy by 6.7%-18.1% using the same compute and communication resources, or supports 3.3 times more concurrent cameras at the same accuracy.

💡 Research Summary

The paper tackles the scalability bottleneck of continuous learning for live video analytics, where each camera traditionally has its own lightweight DNN model retrained on an edge server. As the number of cameras grows, the required GPU compute and upstream bandwidth grow linearly, making the approach infeasible for large deployments. ECCO (Efficient Cross‑Camera Continuous Learning) introduces a “group retraining” paradigm: cameras that experience similar data drift, often because of spatial proximity or synchronized temporal changes, are clustered together, and a single shared model is retrained on the aggregated data from the whole group.

ECCO’s architecture consists of three tightly coupled components. First, a lightweight grouping algorithm operates in two stages. When a camera detects drift, the server uses inexpensive metadata (drift timestamp, geographic location) to shortlist candidate groups, then evaluates the expected accuracy gain of adding the camera to each candidate. If the gain exceeds a threshold, the camera joins that group; otherwise a new group is created. Groups are periodically re‑evaluated during training windows to adapt to evolving drift patterns, allowing cameras to migrate between groups as needed.
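The two-stage grouping step described above might be sketched as follows. This is a minimal illustration, not the paper's implementation: the `Camera` and `Group` types, the `max_dt`, `max_dist`, and `gain_threshold` values, and the `estimate_gain` callback are all hypothetical, and choosing the candidate with the highest estimated gain is an assumption, since the summary does not say how ties between candidate groups are resolved.

```python
from dataclasses import dataclass, field
from math import dist
from typing import Callable


@dataclass
class Camera:
    cam_id: str
    drift_time: float              # when drift was detected, in seconds (hypothetical unit)
    location: tuple[float, float]  # planar coordinates in metres (hypothetical)


@dataclass
class Group:
    members: list[Camera] = field(default_factory=list)


def shortlist(cam: Camera, groups: list[Group],
              max_dt: float = 600.0, max_dist: float = 500.0) -> list[Group]:
    """Stage 1: keep groups with a member whose drift time and location are close."""
    return [
        g for g in groups
        if any(abs(m.drift_time - cam.drift_time) <= max_dt
               and dist(m.location, cam.location) <= max_dist
               for m in g.members)
    ]


def assign(cam: Camera, groups: list[Group],
           estimate_gain: Callable[[Camera, Group], float],
           gain_threshold: float = 0.02) -> Group:
    """Stage 2: join the best candidate if its expected gain exceeds the threshold."""
    candidates = shortlist(cam, groups)
    # Assumption: pick the candidate with the highest estimated accuracy gain.
    best = max(candidates, key=lambda g: estimate_gain(cam, g), default=None)
    if best is not None and estimate_gain(cam, best) > gain_threshold:
        best.members.append(cam)
        return best
    # No candidate clears the threshold, so the camera starts a new group.
    new_group = Group([cam])
    groups.append(new_group)
    return new_group
```

Calling `assign` again for every camera at each re-evaluation point would be one simple way to let cameras migrate between groups, though the summary does not specify how re-evaluation is carried out.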

Second, the GPU allocator addresses a fairness issue unique to group retraining. Traditional allocators maximize total accuracy improvement, which unintentionally biases resources toward larger groups because their aggregate accuracy gain counts multiple cameras. ECCO formulates a new objective that balances system‑wide accuracy with per‑group fairness:

max
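The bias toward larger groups described above can be illustrated with a toy allocator. This sketch does not reproduce ECCO's objective; it only shows how summing accuracy gains over cameras favors large groups. The greedy allocation, the `gain / k` diminishing-returns curve, and all numbers are assumptions chosen for illustration.

```python
def greedy_allocate(groups, gpu_units, weight):
    """Give out GPU units one at a time to the group with the largest weighted marginal gain.

    groups maps a group name to (num_cameras, per_camera_gain_of_first_unit).
    """
    alloc = {name: 0 for name in groups}

    def marginal(name):
        size, gain = groups[name]
        # Toy diminishing returns: the k-th unit yields gain / k per camera.
        return weight(size) * gain / (alloc[name] + 1)

    for _ in range(gpu_units):
        best = max(alloc, key=marginal)
        alloc[best] += 1
    return alloc


# Both groups benefit equally per camera; only the group size differs.
groups = {"large": (8, 0.05), "small": (1, 0.05)}

# Traditional objective: total gain, so each group is weighted by its camera count.
camera_weighted = greedy_allocate(groups, 4, weight=lambda size: size)

# Contrast only (not ECCO's objective): every group counts once.
group_weighted = greedy_allocate(groups, 4, weight=lambda size: 1)

print(camera_weighted)  # {'large': 4, 'small': 0}
print(group_weighted)
```

Under the camera-weighted objective the single-camera group receives no GPU units even though its camera would gain as much as any camera in the large group, which is the fairness issue the allocator is meant to address.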
