Say it or AI it: Evaluating Hands-Free Text Correction in Virtual Reality

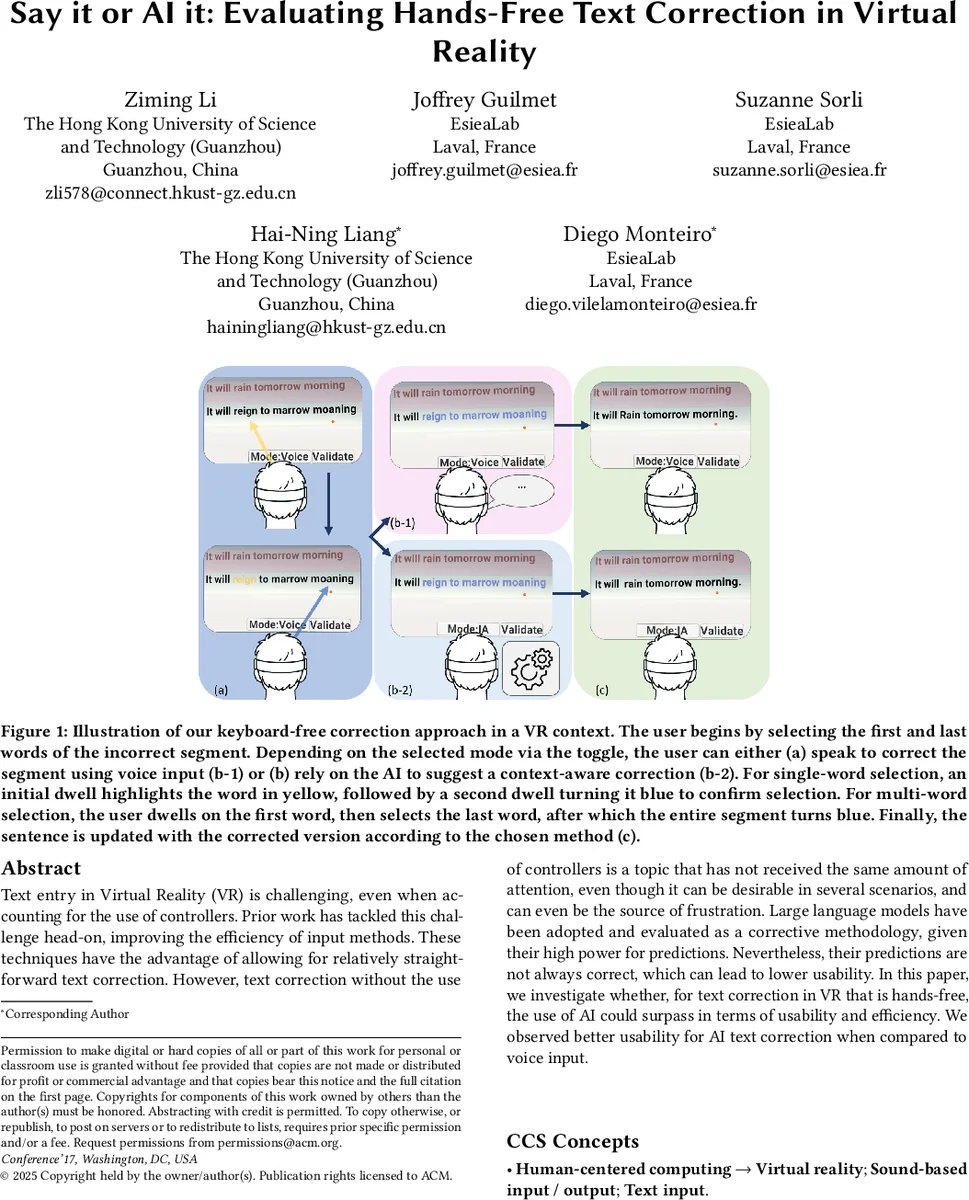

Text entry in Virtual Reality (VR) is challenging, even when accounting for the use of controllers. Prior work has tackled this challenge head-on, improving the efficiency of input methods. These techniques have the advantage of allowing for relatively straightforward text correction. However, text correction without the use of controllers is a topic that has not received the same amount of attention, even though it can be desirable in several scenarios, and can even be the source of frustration. Large language models have been adopted and evaluated as a corrective methodology, given their high power for predictions. Nevertheless, their predictions are not always correct, which can lead to lower usability. In this paper, we investigate whether, for text correction in VR that is hands-free, the use of AI could surpass in terms of usability and efficiency. We observed better usability for AI text correction when compared to voice input.

💡 Research Summary

The paper investigates hands‑free text correction in virtual reality (VR), comparing two modalities: voice‑only correction and AI‑only correction powered by a large language model (LLM). Users first select the erroneous segment of a sentence using gaze‑based dwell interactions (first dwell highlights the word in yellow, a second dwell confirms it in blue). After selection, they either speak the corrected phrase (voice‑only) or trigger the LLM (Gemma‑3 27B) to automatically rewrite the segment (AI‑only). A toggle mode lets participants switch between the two methods at will.

The system was built on a Meta Quest 3 headset running Unity3D, with speech‑to‑text provided by the Meta Voice SDK. The LLM runs locally via the Ollama runtime on a separate server equipped with an Intel i5 CPU, RTX 3080 GPU, and 192 GB RAM. The experimental setup used an Intel i7‑13620H PC with an RTX 4070 GPU for the VR client.

A within‑subjects user study (16 participants, ages 18‑50, 13 male, 3 female) evaluated single‑word error correction. Each participant corrected 30 sentences (10 per condition) across three conditions: Voice‑only, AI‑only, and Toggle. Sentence errors were generated by expanding ten real‑world speech‑recognition mistakes using GPT‑4o. Condition order was counterbalanced using a Latin Square design.

Performance metrics included task completion time, number of correction attempts, Word Error Rate (WER), and Semantic Error Rate (SER) (computed via cosine similarity of MiniLM embeddings). Objective data were analyzed with repeated‑measures ANOVA (Bonferroni‑corrected); subjective workload was measured with NASA‑TLX and usability with SUS, analyzed via Friedman and Wilcoxon signed‑rank tests.

Results: AI‑only was fastest (mean = 17.87 s) and required the fewest correction attempts (mean = 1.29). Voice‑only was slowest (mean = 34.86 s) and needed the most attempts (mean = 2.49). The Toggle mode yielded the lowest error rates (SER = 0.05, WER = 0.05), outperforming Voice‑only (SER = 0.13, WER = 0.13). NASA‑TLX showed Voice‑only incurred significantly higher mental demand, effort, and frustration; SUS scores did not differ across conditions.

Behavioral logs revealed a strong preference for AI: 74.4 % of trials were AI‑only, yet 93.8 % of participants used both methods strategically. Users switched from Voice to AI after an average of 2.06 failures, but switched from AI to Voice after only 1.31 failures, indicating higher expectations for AI performance.

The authors discuss limitations: the LLM runs on CPU, leading to latency; Gemma‑3 27B is less powerful than state‑of‑the‑art commercial models; and the study only addresses single‑word errors, limiting generalizability to more complex corrections. Future work is suggested on multi‑word/context‑rich correction, cloud‑based high‑performance LLMs, and improving gaze‑tracking precision to reduce selection time.

In summary, the study demonstrates that AI‑driven, hands‑free correction in VR is more efficient and accurate than voice‑only correction, and that a hybrid toggle approach can further minimize errors. These findings provide actionable guidance for designing accessible, productivity‑focused VR text interfaces that leverage the complementary strengths of speech and large‑language‑model assistance.

Comments & Academic Discussion

Loading comments...

Leave a Comment