FlowDC: Flow-Based Decoupling-Decay for Complex Image Editing

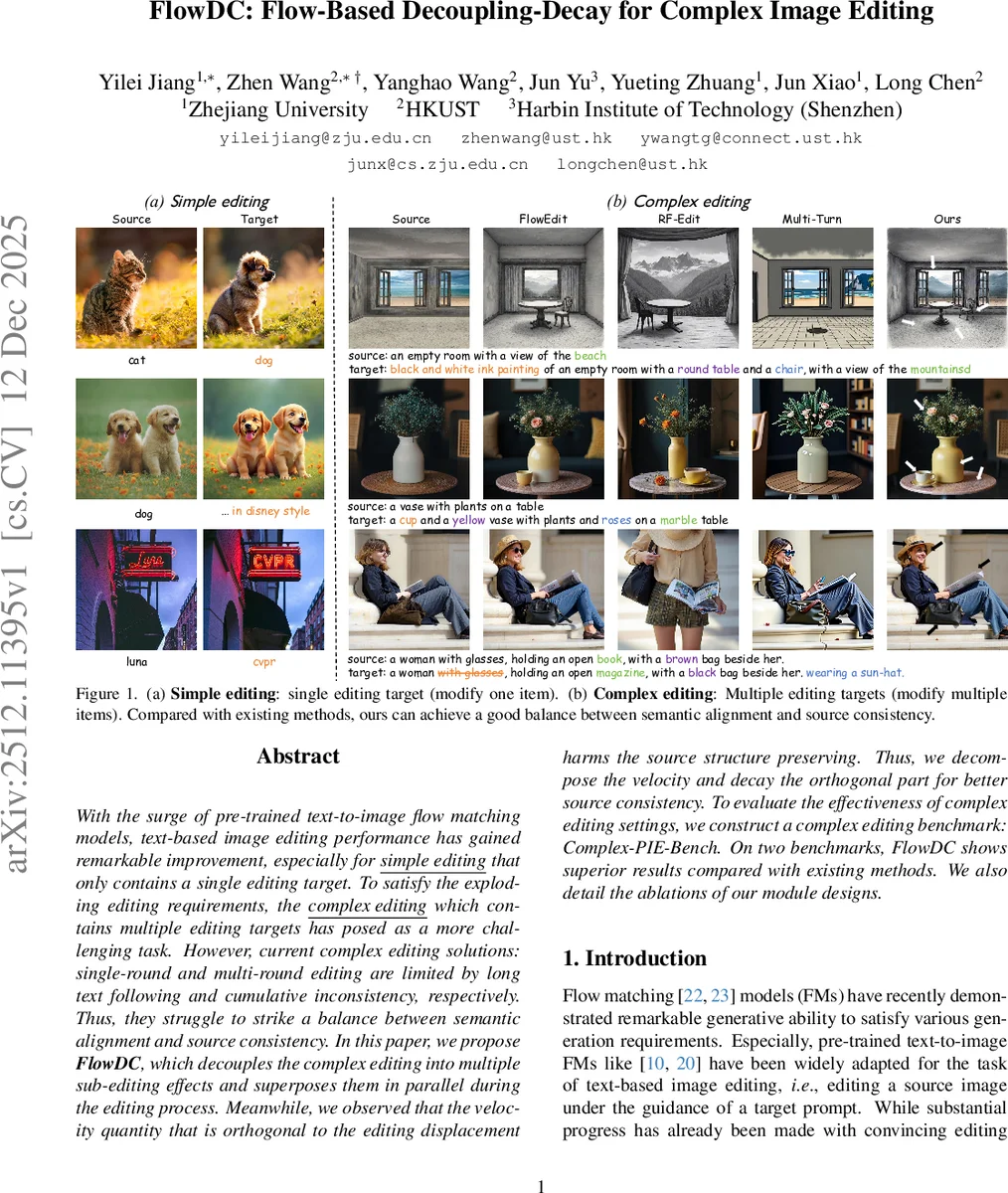

With the surge of pre-trained text-to-image flow matching models, text-based image editing performance has gained remarkable improvement, especially for \underline{simple editing} that only contains a single editing target. To satisfy the exploding editing requirements, the \underline{complex editing} which contains multiple editing targets has posed as a more challenging task. However, current complex editing solutions: single-round and multi-round editing are limited by long text following and cumulative inconsistency, respectively. Thus, they struggle to strike a balance between semantic alignment and source consistency. In this paper, we propose \textbf{FlowDC}, which decouples the complex editing into multiple sub-editing effects and superposes them in parallel during the editing process. Meanwhile, we observed that the velocity quantity that is orthogonal to the editing displacement harms the source structure preserving. Thus, we decompose the velocity and decay the orthogonal part for better source consistency. To evaluate the effectiveness of complex editing settings, we construct a complex editing benchmark: Complex-PIE-Bench. On two benchmarks, FlowDC shows superior results compared with existing methods. We also detail the ablations of our module designs.

💡 Research Summary

FlowDC tackles the challenging problem of complex text‑driven image editing, where a single prompt contains multiple independent editing targets. Existing approaches either feed the long prompt directly into a pre‑trained text‑to‑image flow model (single‑round editing) or decompose the prompt into several short prompts and apply a simple edit iteratively (multi‑round editing). The former suffers from limited long‑text understanding, leading to missing or entangled edits, while the latter incurs linear time overhead and accumulates structural drift, degrading source consistency.

The proposed method introduces two complementary techniques to overcome these limitations. First, a large language model (LLM) parses the complex target prompt into an ordered list of intermediate prompts, each progressively incorporating one more editing target. Guided by these prompts, the system generates a set of parallel editing trajectories that share a common source trajectory derived from a single Gaussian noise sample. For each intermediate prompt, an editing velocity is computed as the difference between the flow model’s conditioned velocity on the target trajectory and the source velocity.

Second, the collection of editing velocities is orthogonalized using the Progressive Vectors Orthogonalization (PVO) algorithm, a Gram‑Schmidt‑like process applied at each diffusion timestep. This yields an orthogonal basis where each basis vector isolates the semantic contribution of a single editing target. The original editing velocity is then projected onto this time‑varying subspace. The in‑subspace component is retained, while the orthogonal component—identified as unrelated structural noise—is attenuated by a decay factor that can be scheduled over time (Velocity Orthogonal Decay). The reconstructed velocity thus focuses on the desired edits while suppressing unintended distortions, leading to a more precise editing trajectory.

FlowDC’s pipeline can be summarized as follows: (1) Input a source image, source prompt, and complex target prompt. (2) Use an LLM to split the complex prompt into n intermediate prompts. (3) For each intermediate prompt, compute a parallel editing velocity. (4) Apply PVO to obtain an orthogonal basis of velocities. (5) Perform orthogonal decay to suppress irrelevant components and reconstruct a refined editing velocity. (6) Integrate the refined velocity backward through the flow ODE to obtain the edited image in a single round.

To evaluate the approach, the authors introduce a new benchmark, Complex‑PIE‑Bench, comprising 1,000 images with diverse multi‑target editing scenarios across several domains. They also test on an existing complex editing benchmark. Metrics include Fréchet Inception Distance (FID), CLIP‑Score, structural similarity (SSIM), and a newly proposed “editing target separation” score that measures how well each target is independently realized. FlowDC outperforms prior state‑of‑the‑art methods—including FlowEdit, Prompt‑to‑Prompt variants, and multi‑turn LLM‑guided approaches—by a substantial margin on all metrics. Notably, it achieves the highest target separation score, indicating that each edit is applied without interfering with the others, while maintaining SSIM values above 0.92, demonstrating strong source consistency.

Ablation studies dissect the contributions of each component. Removing LLM‑based prompt decoupling degrades semantic alignment, confirming the importance of progressive prompt decomposition. Omitting PVO leads to increased cross‑target interference, while varying the decay factor shows a trade‑off: no decay results in structural drift, whereas excessive decay weakens edit strength. The optimal decay rate (~0.5) balances edit fidelity and structure preservation.

The paper acknowledges limitations: reliance on LLM quality for prompt parsing can introduce errors for ambiguous or highly complex sentences, and the orthogonalization step adds per‑timestep computational overhead, though still more efficient than multi‑round pipelines. Future work may explore dedicated prompt‑parsing models, more efficient online orthogonalization schemes, and adaptive decay schedules learned from data.

In conclusion, FlowDC presents a novel, single‑round framework for complex image editing that synergistically combines prompt decoupling, progressive orthogonalization, and orthogonal decay. By explicitly separating semantic edit directions from irrelevant flow components, it achieves a superior balance between semantic alignment and source consistency, setting a new performance baseline for multi‑target text‑driven image manipulation.

Comments & Academic Discussion

Loading comments...

Leave a Comment