InterAgent: Physics-based Multi-agent Command Execution via Diffusion on Interaction Graphs

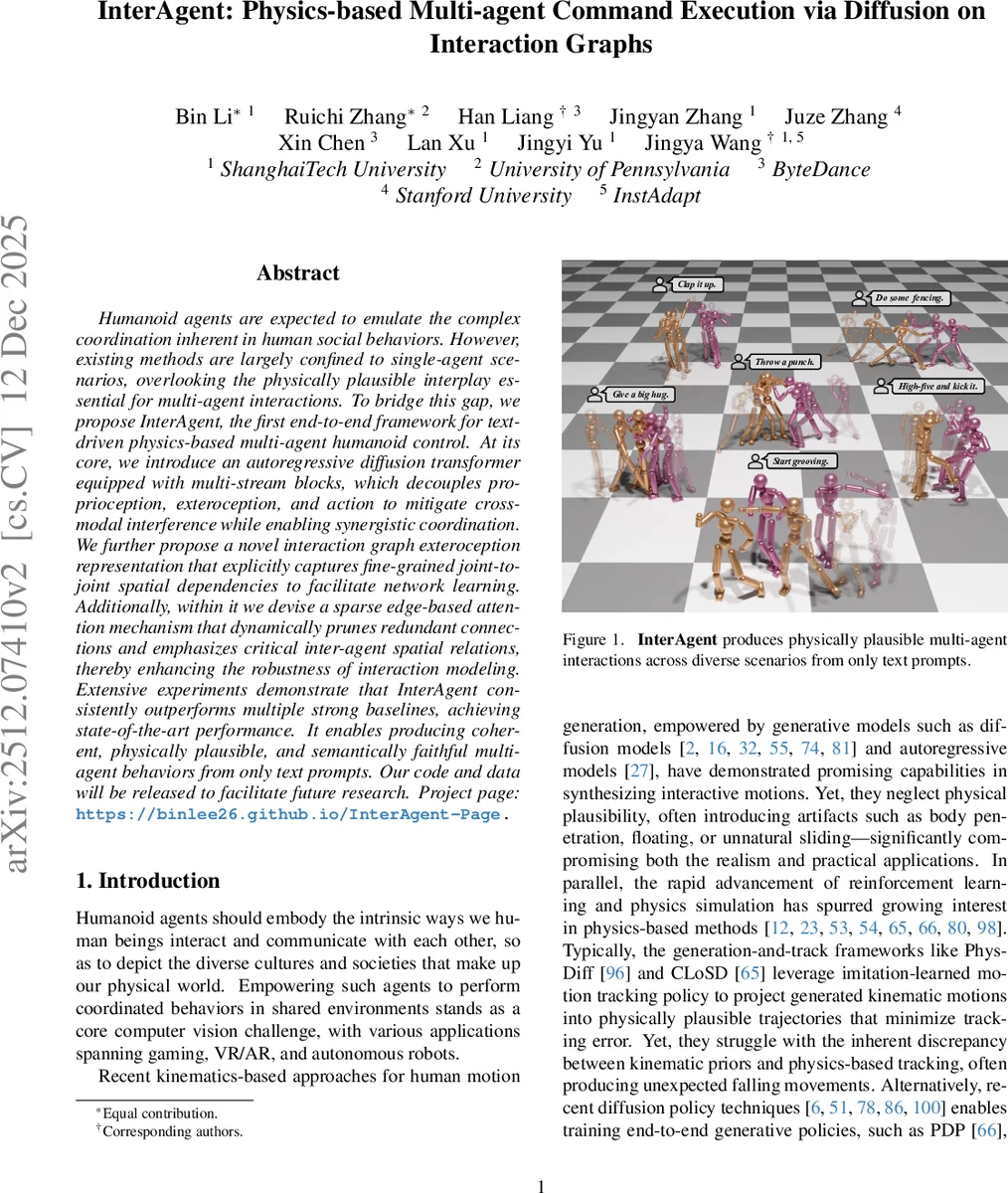

Humanoid agents are expected to emulate the complex coordination inherent in human social behaviors. However, existing methods are largely confined to single-agent scenarios, overlooking the physically plausible interplay essential for multi-agent interactions. To bridge this gap, we propose InterAgent, the first end-to-end framework for text-driven physics-based multi-agent humanoid control. At its core, we introduce an autoregressive diffusion transformer equipped with multi-stream blocks, which decouples proprioception, exteroception, and action to mitigate cross-modal interference while enabling synergistic coordination. We further propose a novel interaction graph exteroception representation that explicitly captures fine-grained joint-to-joint spatial dependencies to facilitate network learning. Additionally, within it we devise a sparse edge-based attention mechanism that dynamically prunes redundant connections and emphasizes critical inter-agent spatial relations, thereby enhancing the robustness of interaction modeling. Extensive experiments demonstrate that InterAgent consistently outperforms multiple strong baselines, achieving state-of-the-art performance. It enables producing coherent, physically plausible, and semantically faithful multi-agent behaviors from only text prompts. Our code and data will be released to facilitate future research.

💡 Research Summary

The paper “InterAgent: Physics-based Multi-agent Command Execution via Diffusion on Interaction Graphs” introduces a groundbreaking end-to-end framework for generating physically plausible and semantically aligned interactions between multiple humanoid agents controlled solely by text prompts. It addresses a significant gap in prior research, which was largely confined to single-agent control or kinematics-based methods that ignored physical realism.

The core of InterAgent is the Interaction Diffusion Transformer (Inter-DiT), an autoregressive diffusion model that jointly predicts the future state-action sequences for both interacting agents. Operating within a physics simulator (Isaac Gym), it conditions on textual commands and a short history of past states to generate actions that drive the agents. A symmetric, weight-sharing network architecture is employed for the two agents to effectively model their cooperative dynamics.

A key innovation is the multi-stream DiT block design. Recognizing the heterogeneity of information types, the model processes proprioception (an agent’s own joint states), exteroception (information about the other agent), and action (control signals) in three separate streams. This decoupling mitigates cross-modal interference. The streams are coordinated through an “inter-stream fusion attention” mechanism that allows necessary information exchange, and a “context-aware conditioning attention” that integrates temporal and inter-agent context.

The most significant technical contribution is the novel Interaction Graph (IG) representation for exteroception and its accompanying sparse edge-based attention mechanism. Moving beyond naive relative-state representations, the IG explicitly models interactions as a directed graph. Each joint of one agent is connected to every joint of the other agent via an edge vector representing their 3D spatial offset. This provides a fine-grained, joint-to-joint representation of spatial dependencies crucial for coordination (e.g., hand-to-hand distance during a handshake). To handle the quadratic complexity of a fully-connected joint graph, a sparse attention mechanism is applied. It dynamically prunes or down-weights edges that are less relevant to the current interaction context, focusing computational resources only on the most salient spatial relationships (e.g., emphasizing hand-wrist edges during a “high-five”). This mimics the selective nature of real human interaction and enhances both the robustness and efficiency of the model.

The method follows a “track-then-distill” paradigm. First, an interaction-aware tracking policy is trained via Reinforcement Learning to imitate motion capture data of multi-agent interactions, augmented with an interaction graph-based reward. This policy is then used to roll out a diverse dataset of physically plausible state-action trajectories. Finally, the Inter-DiT model is trained as a behavior clone on this dataset using a standard diffusion denoising objective.

Extensive experiments demonstrate that InterAgent consistently outperforms strong baselines, including state-of-the-art single-agent methods (PDP, UniPhys) and multi-agent variants using simpler exteroception representations. Evaluations on diverse text prompts (e.g., “shake hands,” “fencing,” “give a big hug”) show superior performance in terms of physical plausibility (reducing artifacts like penetration or floating), semantic alignment with the text, and the quality of fine-grained coordination between agents. The ablation studies confirm the critical importance of both the Interaction Graph representation and the sparse attention mechanism for achieving these results.

In conclusion, InterAgent establishes a new state-of-the-art for text-driven, physics-based multi-agent humanoid control. It enables the generation of complex, coordinated, and physically sound interactions from high-level language instructions. The authors plan to release their code and data to foster further research. Future work may involve scaling to more than two agents, incorporating dynamic objects, and improving the stability of long-horizon interaction sequences.

Comments & Academic Discussion

Loading comments...

Leave a Comment