Osprey: Production-Ready Agentic AI for Safety-Critical Control Systems

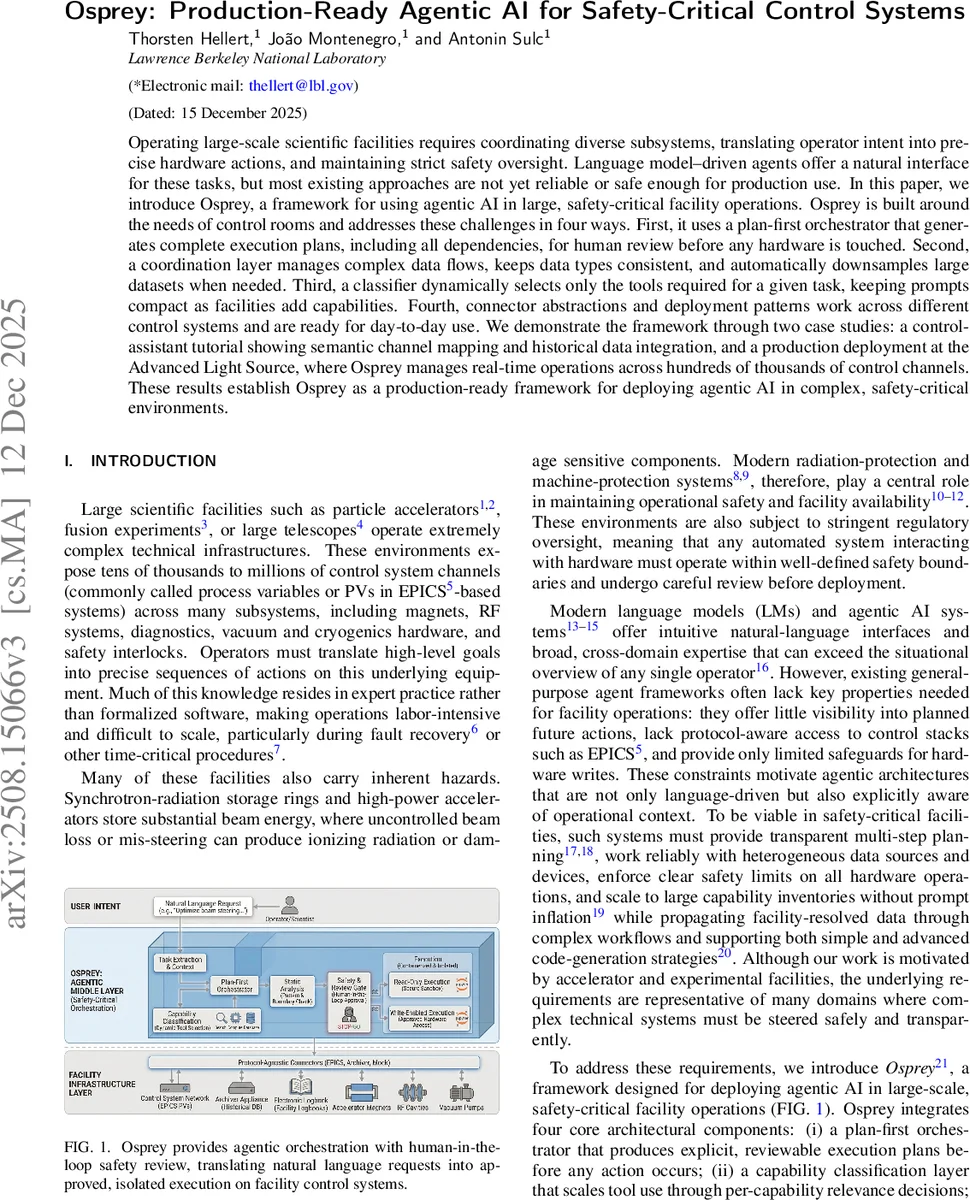

Operating large-scale scientific facilities requires coordinating diverse subsystems, translating operator intent into precise hardware actions, and maintaining strict safety oversight. Language model-driven agents offer a natural interface for these tasks, but most existing approaches are not yet reliable or safe enough for production use. In this paper, we introduce Osprey, a framework for using agentic AI in large, safety-critical facility operations. Osprey is built around the needs of control rooms and addresses these challenges in four ways. First, it uses a plan-first orchestrator that generates complete execution plans, including all dependencies, for human review before any hardware is touched. Second, a coordination layer manages complex data flows, keeps data types consistent, and automatically downsamples large datasets when needed. Third, a classifier dynamically selects only the tools required for a given task, keeping prompts compact as facilities add capabilities. Fourth, connector abstractions and deployment patterns work across different control systems and are ready for day-to-day use. We demonstrate the framework through two case studies: a control-assistant tutorial showing semantic channel mapping and historical data integration, and a production deployment at the Advanced Light Source, where Osprey manages real-time operations across hundreds of thousands of control channels. These results establish Osprey as a production-ready framework for deploying agentic AI in complex, safety-critical environments.

💡 Research Summary

The paper introduces Osprey, a production‑ready framework that brings agentic large language model (LLM) capabilities into safety‑critical control‑room environments such as particle accelerators, fusion experiments, and large telescopes. The authors identify four fundamental shortcomings of existing LLM‑driven agents for this domain: lack of transparent multi‑step planning, insufficient awareness of control‑system protocols, uncontrolled prompt growth as capabilities increase, and the absence of robust safety checks for hardware writes. Osprey addresses these gaps through a layered architecture built around four design principles: (1) plan‑first transparency, (2) control‑system awareness, (3) protocol‑agnostic connectors, and (4) scalable capability selection with isolated execution.

The core of Osprey is a plan‑first orchestrator. When an operator issues a natural‑language request, the orchestrator first extracts a structured task description, then uses a relevance classifier to select only the tools needed for that task (e.g., channel finding, archiver retrieval, data analysis, machine operation). The selected tools are assembled into an explicit execution graph that captures all dependencies. This graph is presented to the human operator for review; any step that would write to hardware is flagged, checked against a facility‑provided boundary database, and requires explicit human approval before execution proceeds.
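The review flow described above can be illustrated with a minimal Python sketch. It is not Osprey's actual API: the `Step` class, the `BOUNDARIES` table, the channel name, and the status strings are all hypothetical, chosen only to show how a dependency graph can be ordered and how write steps can be flagged and checked before anything runs.

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter
from typing import Optional


@dataclass
class Step:
    """One node of a hypothetical execution plan."""
    name: str
    tool: str
    depends_on: list = field(default_factory=list)
    writes_hardware: bool = False
    channel: Optional[str] = None
    value: Optional[float] = None


# Assumed stand-in for a facility-provided boundary database:
# channel name -> (lowest allowed value, highest allowed value).
BOUNDARIES = {"DEMO:MAGNET:CURRENT": (0.0, 10.0)}


def execution_order(steps):
    """Order step names so that every dependency comes before its dependents."""
    graph = {s.name: set(s.depends_on) for s in steps}
    return list(TopologicalSorter(graph).static_order())


def review(steps):
    """Return (step name, status) pairs in execution order, without running anything."""
    by_name = {s.name: s for s in steps}
    report = []
    for name in execution_order(steps):
        step = by_name[name]
        if not step.writes_hardware:
            report.append((name, "read-only"))
            continue
        low, high = BOUNDARIES.get(step.channel, (None, None))
        if low is None or step.value is None or not (low <= step.value <= high):
            report.append((name, "rejected: outside boundaries"))
        else:
            report.append((name, "needs approval"))
    return report


plan = [
    Step("find_channel", "channel_finding"),
    Step("read_current", "archiver_retrieval", ["find_channel"]),
    Step("set_magnet", "machine_operation", ["read_current"],
         writes_hardware=True, channel="DEMO:MAGNET:CURRENT", value=4.2),
]

for name, status in review(plan):
    print(f"{name}: {status}")
```

In this sketch a write to an unknown channel is rejected rather than passed on for approval, which is one conservative choice among several; the paper summary does not specify how Osprey treats that case.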

A coordination layer sits beneath the orchestrator to manage data flow. Large time‑series datasets are not fed directly into the LLM; instead, the layer performs automatic down‑sampling, maintains strict type consistency, and provides schema‑validated inputs to each tool. This keeps prompts within the context window, reduces the “Lost in the Middle” effect in which models overlook information buried in long contexts, and ensures that downstream tools receive clean, well‑structured data.
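One simple way to bound the size of a time series before it reaches a model is bucket averaging. The sketch below is an assumption made for illustration: the summary does not say which down-sampling method Osprey uses, and the `downsample` function and its `max_points` parameter are hypothetical names.

```python
from statistics import fmean


def downsample(samples, max_points):
    """Reduce (timestamp, value) pairs to at most max_points by averaging buckets.

    Timestamps and values are plain floats here; each output point is the mean
    timestamp and mean value of one contiguous bucket of input samples.
    """
    if max_points < 1:
        raise ValueError("max_points must be at least 1")
    n = len(samples)
    if n <= max_points:
        return list(samples)  # already small enough, return an unchanged copy
    reduced = []
    for i in range(max_points):
        start = i * n // max_points
        end = (i + 1) * n // max_points
        bucket = samples[start:end]  # never empty because n > max_points
        reduced.append((
            fmean([t for t, _ in bucket]),
            fmean([v for _, v in bucket]),
        ))
    return reduced


series = [(float(i), float(i)) for i in range(1000)]
small = downsample(series, 10)
print(len(small), small[0])
```

Averaging smooths out short spikes, so a deployment that cares about transients might prefer a min/max-preserving scheme instead; the choice here is only for brevity.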

Connector abstractions decouple the agent from specific control‑system implementations. Whether the underlying system is EPICS, LabVIEW, a mock environment, or a custom archival service, the same Osprey workflow can be executed without code changes. This protocol‑agnostic approach enables rapid deployment across multiple facilities.
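The connector idea can be sketched as an abstract interface with interchangeable backends. The class and method names below (`Connector`, `MockConnector`, `read`, `write`, `nudge`) are hypothetical and are not taken from Osprey; only a mock backend is shown, and an EPICS or LabVIEW backend would be a further subclass wrapping that system's own client library.

```python
from abc import ABC, abstractmethod


class Connector(ABC):
    """Hypothetical minimal interface that workflow code depends on."""

    @abstractmethod
    def read(self, channel: str) -> float:
        """Return the current value of a channel."""

    @abstractmethod
    def write(self, channel: str, value: float) -> None:
        """Set a channel to a new value."""


class MockConnector(Connector):
    """In-memory backend, useful for tutorials and tests."""

    def __init__(self, initial=None):
        self._values = dict(initial or {})

    def read(self, channel: str) -> float:
        return self._values[channel]

    def write(self, channel: str, value: float) -> None:
        self._values[channel] = value


def nudge(connector: Connector, channel: str, delta: float) -> float:
    """Workflow step written only against the Connector interface."""
    new_value = connector.read(channel) + delta
    connector.write(channel, new_value)
    return new_value


mock = MockConnector({"DEMO:SETPOINT": 1.0})
print(nudge(mock, "DEMO:SETPOINT", 0.5))
```

Because `nudge` never names a concrete backend, swapping `MockConnector` for another `Connector` subclass requires no change to the workflow code, which is the property the paragraph above describes.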

Execution occurs inside containerized sandboxes. Read‑only containers handle data‑retrieval and analysis steps, while a separate, tightly controlled write‑enabled container is used only after human approval for hardware actions. All artifacts—execution plans, generated Jupyter notebooks, figures, and interaction logs—are recorded for auditability and reproducibility.

The authors validate Osprey with two case studies. The first is a tutorial‑style control assistant that demonstrates semantic channel mapping and historical data integration. A sample query (“Calculate mean and standard deviation of beam current in the last 24 h”) is automatically broken down into four steps: time‑range parsing, channel discovery, archiver retrieval, and data analysis, with each step producing typed outputs that feed the next. The second case study is a production deployment at the Advanced Light Source (ALS). Here Osprey manages real‑time operations across hundreds of thousands of process variables, monitors safety‑critical signals, proposes corrective actions, and executes approved hardware commands. The deployment shows that Osprey can operate at the scale required by modern facilities while preserving strict safety oversight.
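The four-step decomposition of the sample query can be sketched as a chain of small typed functions. Everything here is illustrative: the function names, the `CHANNEL_INDEX` lookup, the channel name, and the mock archiver data are assumptions, and the use of the population standard deviation is a choice made for the example rather than something the paper specifies.

```python
import re
from datetime import datetime, timedelta
from statistics import fmean, pstdev

# Assumed phrase-to-channel index; a real deployment would query a channel finder.
CHANNEL_INDEX = {"beam current": "DEMO:BEAM:CURRENT"}


def parse_time_range(text, now):
    """Step 1: turn 'last N h' into a (start, end) pair of datetimes."""
    match = re.search(r"last (\d+)\s*h", text)
    if match is None:
        raise ValueError("no time range found in query")
    return now - timedelta(hours=int(match.group(1))), now


def find_channel(text):
    """Step 2: map a phrase in the query to a channel name."""
    lowered = text.lower()
    for phrase, channel in CHANNEL_INDEX.items():
        if phrase in lowered:
            return channel
    raise LookupError("no matching channel")


def retrieve(channel, start, end):
    """Step 3: mock archiver, one sample per hour alternating 499.0 and 501.0."""
    hours = int((end - start).total_seconds() // 3600)
    return [(start + timedelta(hours=i), 499.0 if i % 2 == 0 else 501.0)
            for i in range(hours)]


def analyze(samples):
    """Step 4: summary statistics over the retrieved values."""
    values = [v for _, v in samples]
    return {"mean": fmean(values), "std": pstdev(values)}


query = "Calculate mean and standard deviation of beam current in the last 24 h"
start, end = parse_time_range(query, now=datetime(2025, 1, 2))
channel = find_channel(query)
result = analyze(retrieve(channel, start, end))
print(channel, result)
```

Each function's output is exactly the input the next one needs, which mirrors the typed hand-off between steps described above.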

In summary, Osprey demonstrates that agentic AI can be engineered to meet the rigorous demands of safety‑critical scientific infrastructure. By combining plan‑first orchestration, dynamic tool selection, protocol‑agnostic connectors, and isolated, auditable execution, the framework bridges the gap between powerful LLM reasoning and the deterministic, safety‑focused world of control systems. The paper suggests future work on multi‑agent collaboration, reinforcement‑learning‑based optimization of operational procedures, and extension to other domains such as power grids and medical device control.
