ChangeBridge: Spatiotemporal Image Generation with Multimodal Controls for Remote Sensing

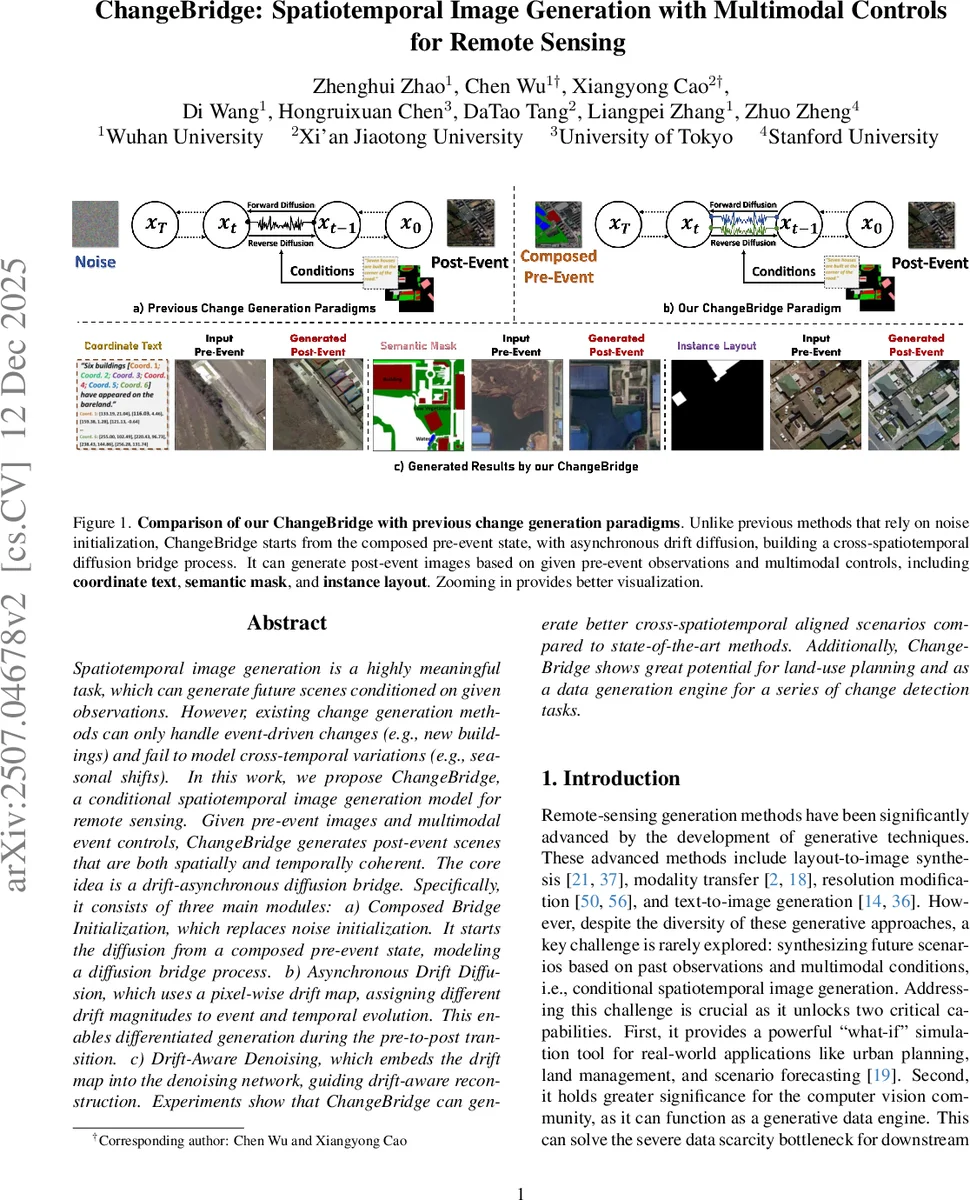

Spatiotemporal image generation is a highly meaningful task that synthesizes future scenes conditioned on given observations. However, existing change generation methods can only handle event-driven changes (e.g., new buildings) and fail to model cross-temporal variations (e.g., seasonal shifts). In this work, we propose ChangeBridge, a conditional spatiotemporal image generation model for remote sensing. Given pre-event images and multimodal event controls, ChangeBridge generates post-event scenes that are both spatially and temporally coherent. The core idea is a drift-asynchronous diffusion bridge, which consists of three main modules: a) Composed Bridge Initialization, which replaces noise initialization by starting the diffusion from a composed pre-event state, thereby modeling a diffusion bridge process; b) Asynchronous Drift Diffusion, which uses a pixel-wise drift map to assign different drift magnitudes to event-driven and temporal evolution, enabling differentiated generation during the pre-to-post transition; and c) Drift-Aware Denoising, which embeds the drift map into the denoising network to guide drift-aware reconstruction. Experiments show that ChangeBridge generates scenes with better cross-spatiotemporal alignment than state-of-the-art methods. Additionally, ChangeBridge shows great potential for land-use planning and as a data generation engine for a range of change detection tasks.

💡 Research Summary

ChangeBridge tackles the previously under‑explored task of conditional spatiotemporal image generation for remote sensing, where a pre‑event satellite image and multimodal controls (coordinate text, semantic mask, instance layout) are used to synthesize a realistic post‑event scene. Existing change‑generation methods focus only on event‑driven foreground alterations and ignore background temporal dynamics such as seasonal shifts, lighting changes, or vegetation growth. To address this, the authors propose a “drift‑asynchronous diffusion bridge” that replaces the conventional noise‑to‑image diffusion pipeline with a state‑to‑state bridge process.

The framework consists of three key modules. First, Composed Bridge Initialization constructs an initial latent state by merging the background extracted from the pre‑event image with the foreground dictated by the multimodal condition, using a binary foreground mask. This composition provides structural priors and eliminates the need for pure‑noise initialization, preserving spatial consistency from the start.
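The composition can be pictured as a mask-weighted blend of two latents. The sketch below is illustrative only: it assumes single-channel latent grids, a hard 0/1 foreground mask, and a foreground latent already derived from the multimodal condition. The names `compose_initial_state`, `z_pre`, and `z_cond` are hypothetical and not taken from the paper.

```python
# Hypothetical sketch of composed bridge initialization (not the paper's code).
# Assumptions: latents are single-channel 2-D grids of equal size, and the
# mask holds 1 for foreground (event) pixels and 0 for background pixels.
from typing import List

Grid = List[List[float]]


def compose_initial_state(z_pre: Grid, z_cond: Grid, mask: Grid) -> Grid:
    """Blend pre-event background with condition-driven foreground.

    Computes z_a = mask * z_cond + (1 - mask) * z_pre element-wise.
    """
    if not (len(z_pre) == len(z_cond) == len(mask)):
        raise ValueError("latents and mask must have the same height")
    composed: Grid = []
    for row_pre, row_cond, row_mask in zip(z_pre, z_cond, mask):
        if not (len(row_pre) == len(row_cond) == len(row_mask)):
            raise ValueError("latents and mask must have the same width")
        # Foreground pixels come from the condition, background from pre-event.
        composed.append([
            m * c + (1.0 - m) * p
            for p, c, m in zip(row_pre, row_cond, row_mask)
        ])
    return composed
```

For example, with `mask = [[0, 1], [0, 0]]` only the top-right pixel is taken from `z_cond`; every other pixel keeps its pre-event value, which is how the starting state inherits the background structure of the pre-event scene.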

Second, Asynchronous Drift Diffusion introduces a pixel‑wise drift magnitude map. Foreground regions receive a high drift coefficient (γ_fg), while background regions receive a lower one (γ_bg). The standard linear bridge coefficient m_t = t/T is modulated by the normalized drift map, yielding a spatially varying drift m̃_t(i,j) = m_t·z_d(i,j). Consequently, foreground regions evolve quickly toward the target latent while background regions evolve slowly, so the model can capture abrupt event‑driven changes and subtle temporal variations at the same time.
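A minimal sketch of the drift schedule follows. It assumes the drift map is built from the same binary foreground mask and that "normalized" means dividing by the map's maximum; the summary specifies neither detail. `drift_map` and `modulated_coefficient` are hypothetical helper names.

```python
# Hypothetical sketch of the asynchronous drift schedule (not the paper's code).
# Assumptions: the drift map comes from a 0/1 foreground mask, and
# normalization divides by the largest drift value so entries lie in (0, 1].
from typing import List

Grid = List[List[float]]


def drift_map(mask: Grid, gamma_fg: float, gamma_bg: float) -> Grid:
    """Assign gamma_fg to foreground and gamma_bg to background, then normalize."""
    if gamma_fg <= 0 or gamma_bg <= 0:
        raise ValueError("drift magnitudes must be positive")
    # Treat mask values >= 0.5 as foreground so float masks also work.
    raw = [[gamma_fg if m >= 0.5 else gamma_bg for m in row] for row in mask]
    peak = max(max(row) for row in raw)
    return [[v / peak for v in row] for row in raw]


def modulated_coefficient(t: int, T: int, z_d: Grid) -> Grid:
    """Per-pixel drift m~_t(i, j) = m_t * z_d(i, j) with linear m_t = t / T."""
    if T <= 0 or not (0 <= t <= T):
        raise ValueError("require T > 0 and 0 <= t <= T")
    m_t = t / T
    return [[m_t * d for d in row] for row in z_d]
```

Under this normalization (with γ_fg > γ_bg), foreground pixels follow the unmodified linear schedule m_t = t/T, while background pixels are scaled by γ_bg/γ_fg and therefore drift more slowly at every timestep.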

Third, Drift‑Aware Denoising incorporates the drift map into the reverse diffusion network. The denoising model receives the current latent z_t, timestep t, the composed pre‑event latent z_a, the condition latent z_c (including text embeddings when using coordinate‑text inputs), and the drift latent z_d. This conditioning guides the network to reconstruct each region according to its prescribed drift, ensuring that the generated post‑event image respects both the event layout and the background’s temporal dynamics. The training objective extends the standard diffusion‑bridge loss by predicting the noise term while accounting for the drift map.
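The conditioning described above can be sketched as a channel-wise stack of the four latents fed to a noise predictor and trained with a mean-squared error. This is an assumption-laden illustration, not the paper's implementation: it uses a plain ε-prediction target (the paper's drift-aware target may differ), treats the denoiser as a hypothetical callable, and omits text embeddings, which latent diffusion models commonly inject through cross-attention rather than channel concatenation. `stack_inputs` and `noise_prediction_loss` are hypothetical names.

```python
# Hypothetical sketch of drift-aware conditioning and a noise-prediction loss.
# Assumptions: single-channel latent grids, channel concatenation of
# [z_t, z_a, z_c, z_d], and a plain epsilon-prediction MSE objective.
from typing import Callable, List

Grid = List[List[float]]
# Hypothetical denoiser interface: (input channels, timestep) -> noise estimate.
Denoiser = Callable[[List[Grid], int], Grid]


def stack_inputs(z_t: Grid, z_a: Grid, z_c: Grid, z_d: Grid) -> List[Grid]:
    """Concatenate the four latents along a channel axis (a list of grids)."""
    channels = [z_t, z_a, z_c, z_d]
    height, width = len(z_t), len(z_t[0])
    for ch in channels:
        if len(ch) != height or any(len(row) != width for row in ch):
            raise ValueError("all latents must share the same spatial size")
    return channels


def noise_prediction_loss(denoiser: Denoiser, z_t: Grid, t: int, z_a: Grid,
                          z_c: Grid, z_d: Grid, eps: Grid) -> float:
    """Mean squared error between the predicted and the true noise."""
    pred = denoiser(stack_inputs(z_t, z_a, z_c, z_d), t)
    squared = [(p - e) ** 2
               for p_row, e_row in zip(pred, eps)
               for p, e in zip(p_row, e_row)]
    return sum(squared) / len(squared)
```

Feeding z_d as an explicit channel lets the network see, at every pixel, how fast that region is supposed to drift, which is the intuition behind drift-aware reconstruction.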

Experiments are conducted on four remote‑sensing datasets covering urban, agricultural, forest, and coastal domains, with ChangeBridge compared against six strong baselines, including GAN‑based change generators, standard diffusion models, and image‑to‑image bridge methods. Quantitative metrics (FID, SSIM, LPIPS) show that ChangeBridge consistently outperforms the baselines, especially in preserving background consistency (higher temporal SSIM). Qualitative inspection confirms accurate placement of new structures from text/layout cues as well as natural seasonal transitions. Moreover, when the synthetic images are used to augment training data for downstream tasks, change detection mIoU improves by an average of 4.2% and change captioning BLEU scores increase by 3.5 points, demonstrating the practical utility of the generated data.

The paper’s contributions are threefold: (1) introducing the conditional spatiotemporal generation task for remote sensing, (2) proposing a diffusion‑bridge architecture with asynchronous drift to model heterogeneous foreground/background evolution, and (3) showing that the generated imagery can serve as an effective data engine for downstream change‑detection and captioning models. Limitations include reliance on latent‑space drift maps, which may restrict fine‑grained high‑resolution detail, and the need for manual tuning of drift magnitudes. Future work could explore multi‑scale drift modeling, adaptive drift scheduling, and broader scenario simulations such as climate‑impact forecasting. Overall, ChangeBridge presents a novel and powerful paradigm for realistic, controllable future‑scene synthesis in remote sensing.
