Feedback-MPPI: Fast Sampling-Based MPC via Rollout Differentiation -- Adios low-level controllers

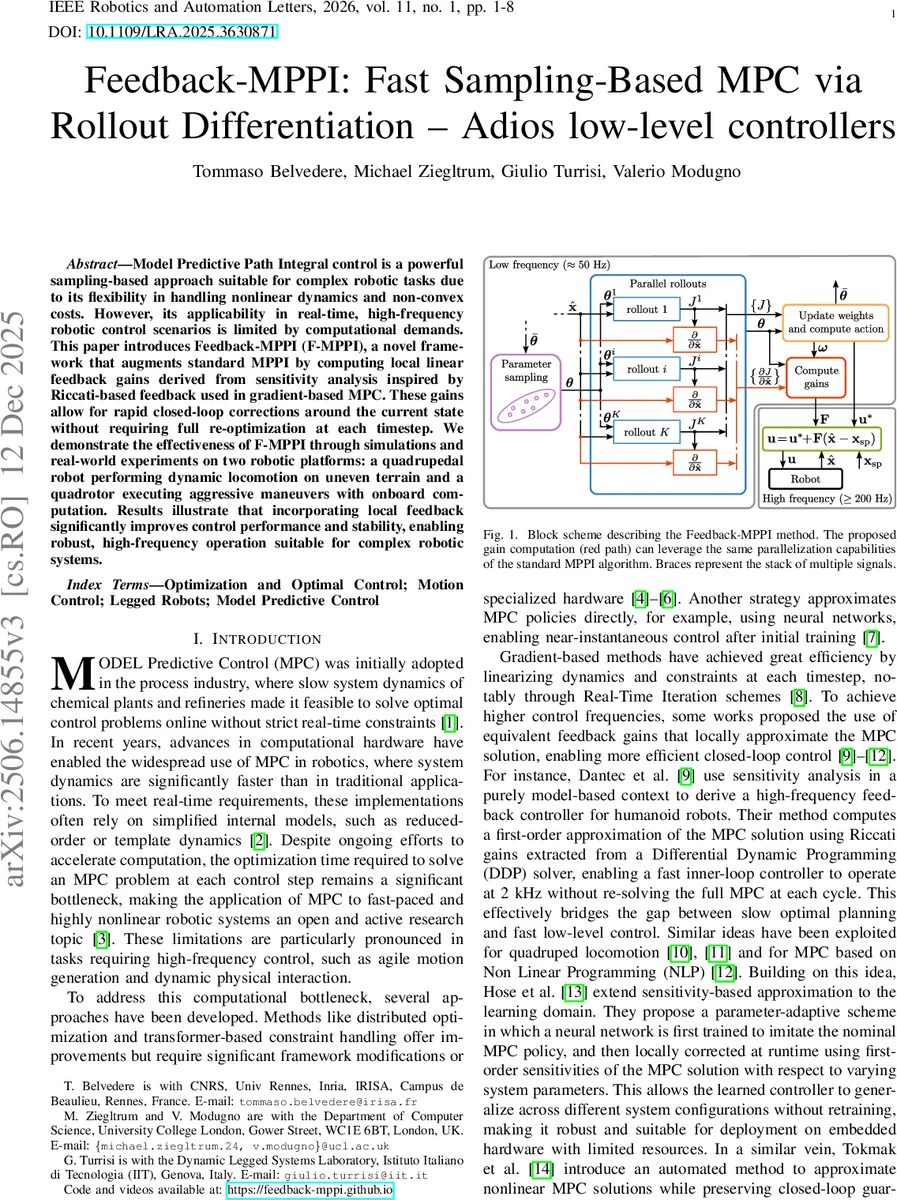

Model Predictive Path Integral control is a powerful sampling-based approach suitable for complex robotic tasks due to its flexibility in handling nonlinear dynamics and non-convex costs. However, its applicability in real-time, highfrequency robotic control scenarios is limited by computational demands. This paper introduces Feedback-MPPI (F-MPPI), a novel framework that augments standard MPPI by computing local linear feedback gains derived from sensitivity analysis inspired by Riccati-based feedback used in gradient-based MPC. These gains allow for rapid closed-loop corrections around the current state without requiring full re-optimization at each timestep. We demonstrate the effectiveness of F-MPPI through simulations and real-world experiments on two robotic platforms: a quadrupedal robot performing dynamic locomotion on uneven terrain and a quadrotor executing aggressive maneuvers with onboard computation. Results illustrate that incorporating local feedback significantly improves control performance and stability, enabling robust, high-frequency operation suitable for complex robotic systems.

💡 Research Summary

The paper addresses the well‑known computational bottleneck of Model Predictive Path Integral (MPPI) control, which, despite its flexibility for highly nonlinear and non‑convex problems, struggles to run at the high frequencies required by agile robotic systems. The authors propose Feedback‑MPPI (F‑MPPI), a framework that augments standard MPPI with locally linear feedback gains derived from a sensitivity analysis of the optimal MPPI solution with respect to the current state.

In standard MPPI, a set of control parameters θ is sampled from a Gaussian distribution, each sample generates a rollout, and the total cost J_k of each rollout is evaluated. The optimal parameters θ* are obtained as a weighted average of the perturbations Δθ_k, where the weights ω_k are exponential functions of the rollout costs (soft‑max). The control action u* is then produced by feeding θ* into the policy π. The key limitation is that the control frequency is tied to the time needed to compute θ* at every control step.

F‑MPPI computes the Jacobian F = ∂u*/∂x̂, i.e., the sensitivity of the optimal action to variations in the initial state x̂. By applying the chain rule, the authors derive an explicit expression for F that involves the gradient of each rollout cost with respect to the initial state (∂J_k/∂x̂). These gradients are obtained in parallel using automatic differentiation on a GPU, preserving the massive parallelism that makes MPPI attractive. The final feedback law is u = u* + F (x̂ – x_sp), where x_sp is a set‑point extracted from the MPPI solution and optionally interpolated between successive states.

The paper presents two major contributions: (1) a mathematically sound method for extracting Riccati‑like feedback gains from a stochastic sampling‑based MPC algorithm, and (2) a demonstration that these gains enable high‑frequency inner‑loop control without re‑solving the full MPPI problem at each timestep. The authors discuss practical aspects such as the handling of input constraints (clipping eliminates the ∂π/∂θ term, causing the feedback gain to vanish in saturated regions) and the need for smooth barrier functions if constraints must be reflected in the feedback.

Experimental validation is performed on two platforms. First, a simulated quadruped (based on the Unitree Aliengo) using a single‑rigid‑body dynamics model runs on a laptop with an Intel i7‑13700H and an Nvidia RTX 4050 GPU. With 5 000 samples, a 10 ms MPPI horizon, and λ = 1, the standard MPPI controller is compared against F‑MPPI. The feedback‑enhanced version reduces trajectory error by more than 30 % and suppresses oscillations, especially when the robot traverses uneven terrain where foot placement changes abruptly. Second, a real quadrotor equipped with an onboard GPU executes aggressive maneuvers. The MPPI planner runs at 20 ms intervals (5 k samples), while the feedback loop operates at 1 kHz, yielding smoother thrust commands and tighter tracking of aggressive trajectories under wind disturbances.

A toy inverted‑pendulum example further illustrates that, as the number of samples grows, the MPPI‑derived gains converge to those obtained from an infinite‑horizon LQR solution, confirming the theoretical connection between the stochastic and Riccati‑based approaches. In constrained scenarios (input saturation), the gains correctly collapse to zero, reflecting the fact that no additional linear correction is possible when the control is already at its limits.

Overall, Feedback‑MPPI bridges the gap between sampling‑based global optimization and fast local linear control, offering a practical path to real‑time, high‑frequency MPC for complex robotic systems. Future work may explore smoother constraint handling, extensions to multi‑robot coordination, and integration with learning‑based priors to further reduce sample requirements.

Comments & Academic Discussion

Loading comments...

Leave a Comment