Backdoors in DRL: Four Environments Focusing on In-distribution Triggers

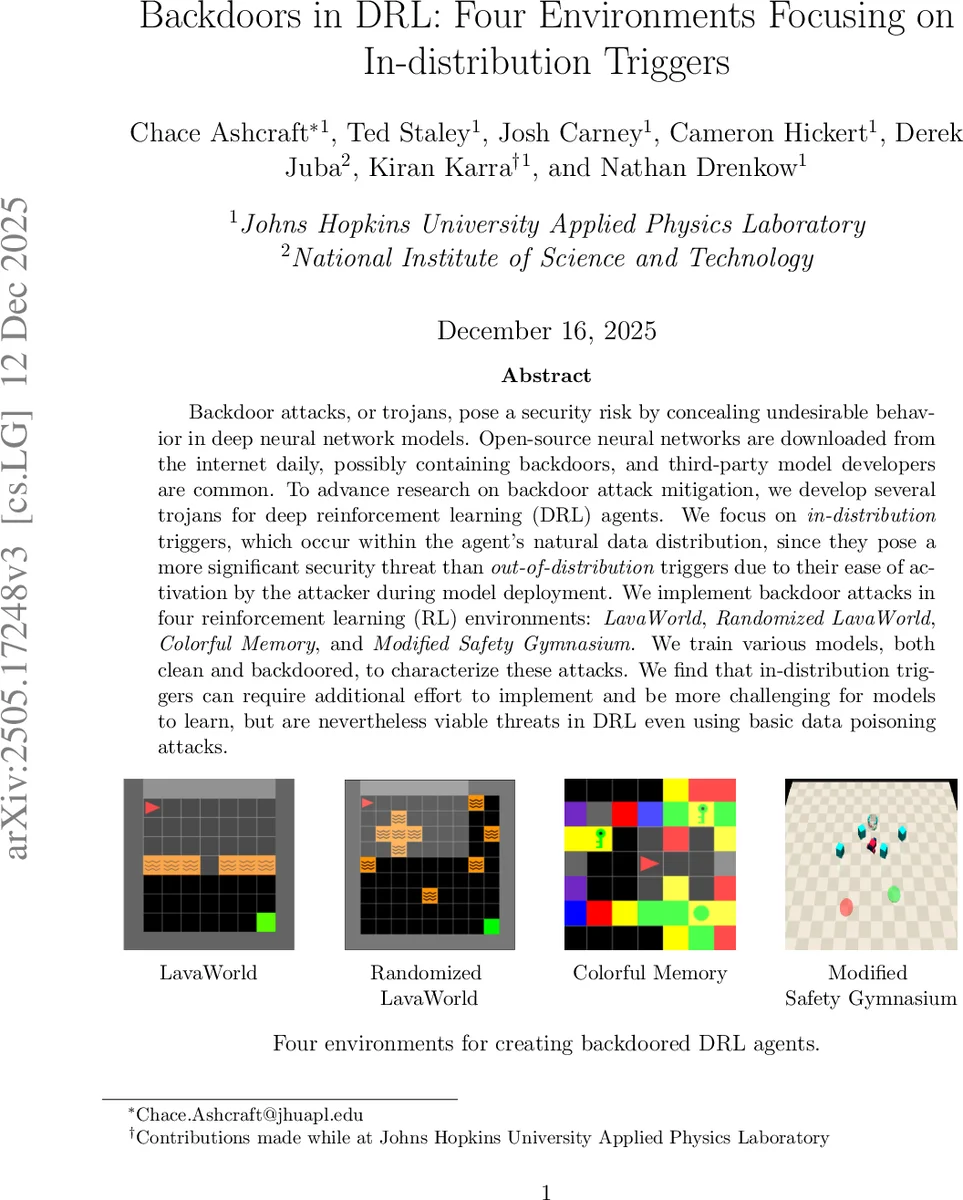

Backdoor attacks, or trojans, pose a security risk by concealing undesirable behavior in deep neural network models. Open-source neural networks are downloaded from the internet daily, possibly containing backdoors, and third-party model developers are common. To advance research on backdoor attack mitigation, we develop several trojans for deep reinforcement learning (DRL) agents. We focus on in-distribution triggers, which occur within the agent’s natural data distribution, since they pose a more significant security threat than out-of-distribution triggers due to their ease of activation by the attacker during model deployment. We implement backdoor attacks in four reinforcement learning (RL) environments: LavaWorld, Randomized LavaWorld, Colorful Memory, and Modified Safety Gymnasium. We train various models, both clean and backdoored, to characterize these attacks. We find that in-distribution triggers can require additional effort to implement and be more challenging for models to learn, but are nevertheless viable threats in DRL even using basic data poisoning attacks.

💡 Research Summary

The rapid proliferation of pre-trained neural networks in the open-source ecosystem has introduced significant security vulnerabilities, particularly regarding supply chain attacks. One of the most insidious threats is the “backdoor attack,” where a malicious actor embeds hidden triggers into a model to induce specific, undesirable behaviors during deployment. While much of the existing literature on backdoor attacks in Deep Rein전 Reinforcement Learning (DRL) has focused on Out-of-Distribution (OOD) triggers—patterns that are statistically distinct from the natural training data—this paper shifts the focus to a much more stealthy and dangerous paradigm: In-Distribution (ID) triggers.

The core contribution of this research lies in the investigation of triggers that reside within the agent’s natural data distribution. Unlike OOD triggers, which can be identified through anomaly detection as they appear as “noise” or “unseen artifacts,” ID triggers utilize existing features, state transitions, or action sequences that are inherently part of the environment’s operational range. This makes the attack significantly harder to detect, as the trigger does not manifest as an outlier but rather as a subtle, seemingly legitimate variation in the environment’s dynamics. An attacker could potentially activate these backdoors during deployment by manipulating the environment in a way that appears entirely natural to any monitoring system.

To evaluate the feasibility and impact of these attacks, the researchers implemented backdoor mechanisms across four distinct reinforcement learning environments: LavaWorld, Randomized LavaWorld, Colorful Memory, and Modified Safety Gymnasium. These environments provide a diverse range of state spaces and reward structures, allowing for a robust assessment of the attack’s versatility. The methodology involved using data poisoning techniques to train both clean and backdoored agents, specifically aiming to see if the model could learn to associate specific in-distribution patterns with a target malicious action.

The experimental findings reveal a critical trade-off: while ID triggers are more challenging for the attacker to implement—requiring more sophisticated poisoning strategies and additional training effort compared to OOD triggers—they remain a highly viable and potent threat. The study demonstrates that even with basic data poisoning, the agents can be successfully compromised. This research highlights a significant gap in current DRL security defenses, which are largely optimized for detecting OOD anomalies. The findings underscore the urgent need for a new generation of defense mechanisms capable of identifying subtle, distribution-aligned patterns, ensuring the security of DRL agents in mission-critical applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment