Any2Caption:Interpreting Any Condition to Caption for Controllable Video Generation

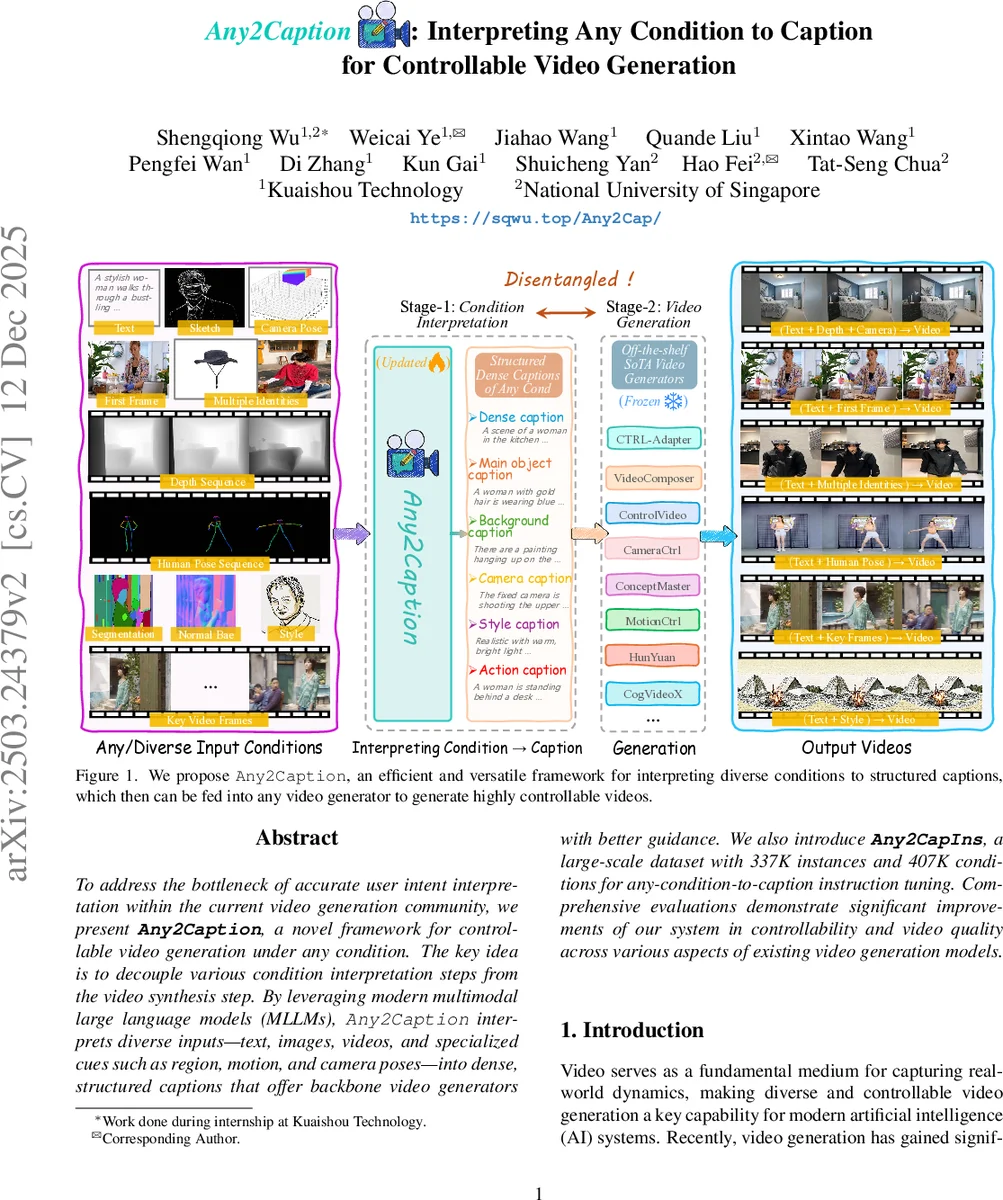

To address the bottleneck of accurate user intent interpretation within the current video generation community, we present Any2Caption, a novel framework for controllable video generation under any condition. The key idea is to decouple various condition interpretation steps from the video synthesis step. By leveraging modern multimodal large language models (MLLMs), Any2Caption interprets diverse inputs–text, images, videos, and specialized cues such as region, motion, and camera poses–into dense, structured captions that offer backbone video generators with better guidance. We also introduce Any2CapIns, a large-scale dataset with 337K instances and 407K conditions for any-condition-to-caption instruction tuning. Comprehensive evaluations demonstrate significant improvements of our system in controllability and video quality across various aspects of existing video generation models. Project Page: https://sqwu.top/Any2Cap/

💡 Research Summary

This paper introduces “Any2Caption,” a novel framework designed to bridge the critical gap between user intent and controllable video generation. The core challenge addressed is the current bottleneck in accurately interpreting diverse and often complex user-provided conditions—such as concise text, images, videos, motion trajectories, or camera poses—within state-of-the-art video generation models.

The authors propose a paradigm shift by decoupling the process into two distinct stages: (1) Condition Interpretation and (2) Video Synthesis. Instead of burdening the video generator (e.g., a Diffusion Transformer or DiT) with the difficult task of jointly understanding heterogeneous multimodal inputs, Any2Caption employs a powerful Multimodal Large Language Model (MLLM) as a universal “interpreter.” This interpreter, built upon an LLM like Qwen2, is equipped with specialized encoders for images, videos, motion, and camera parameters. It takes any combination of user inputs (a short text prompt plus non-text conditions) and generates a single, structured dense caption. This caption is meticulously organized into six components: a dense scene description, main object caption, background caption, camera caption, style caption, and action caption. This rich, textual “instruction manual” is then fed directly into any existing, high-performance video generator, which excels at producing quality videos from detailed text, thereby achieving superior controllability without modifying the generator itself.

To train this MLLM-based interpreter, the authors constructed a large-scale instruction-tuning dataset named Any2CapIns. The dataset creation involves a sophisticated three-step pipeline: First, collecting 337K video instances and extracting 407K representative conditions across four categories: depth maps, human poses, multiple object identities, and camera movements, using tools like Depth Anything and SAM2. Second, generating structured, detailed captions for each video via automated tools and GPT-4V. Third, and crucially, synthesizing “user-centric short prompts” that mimic how a real user might concisely describe their intent given those specific conditions (e.g., omitting explicit camera descriptions if a camera condition is provided). The final dataset consists of high-quality triplets: (short user prompt, non-text conditions, structured long caption), all verified by human annotators.

The paper presents comprehensive evaluations. First, they validate the caption quality of Any2Caption itself on the Any2CapIns test set, demonstrating its ability to faithfully reflect the semantics of the input conditions. Second and most importantly, they integrate Any2Caption with several SOTA video generators (including HunyuanVideo, VideoComposer, and Ctrl-Adapter) for end-to-end video generation. The results show significant and consistent improvements across all condition types and backbone models when using the captions from Any2Caption, compared to using the original short user prompts directly. Metrics for visual quality, text-video alignment, and condition fidelity all see marked gains. The framework proves particularly effective in handling complex, combined conditions (e.g., text + depth + camera), where previous any-condition methods often struggle due to limited cross-modal reasoning within the generator.

In summary, the key contributions of this work are: (1) Proposing a new any-condition-to-caption paradigm for controllable video generation that effectively separates intent interpretation from synthesis. (2) Developing Any2Caption, a versatile MLLM-based interpreter that can be plugged into existing video generators as a universal adapter to enhance their controllability without retraining. (3) Releasing the large-scale, high-quality Any2CapIns dataset and evaluation benchmarks to facilitate future research. This work significantly advances the practicality of controllable video generation, making it more accessible and reliable for real-world applications that require precise alignment with creative intent.

Comments & Academic Discussion

Loading comments...

Leave a Comment