MOAT: Evaluating LMMs for Capability Integration and Instruction Grounding

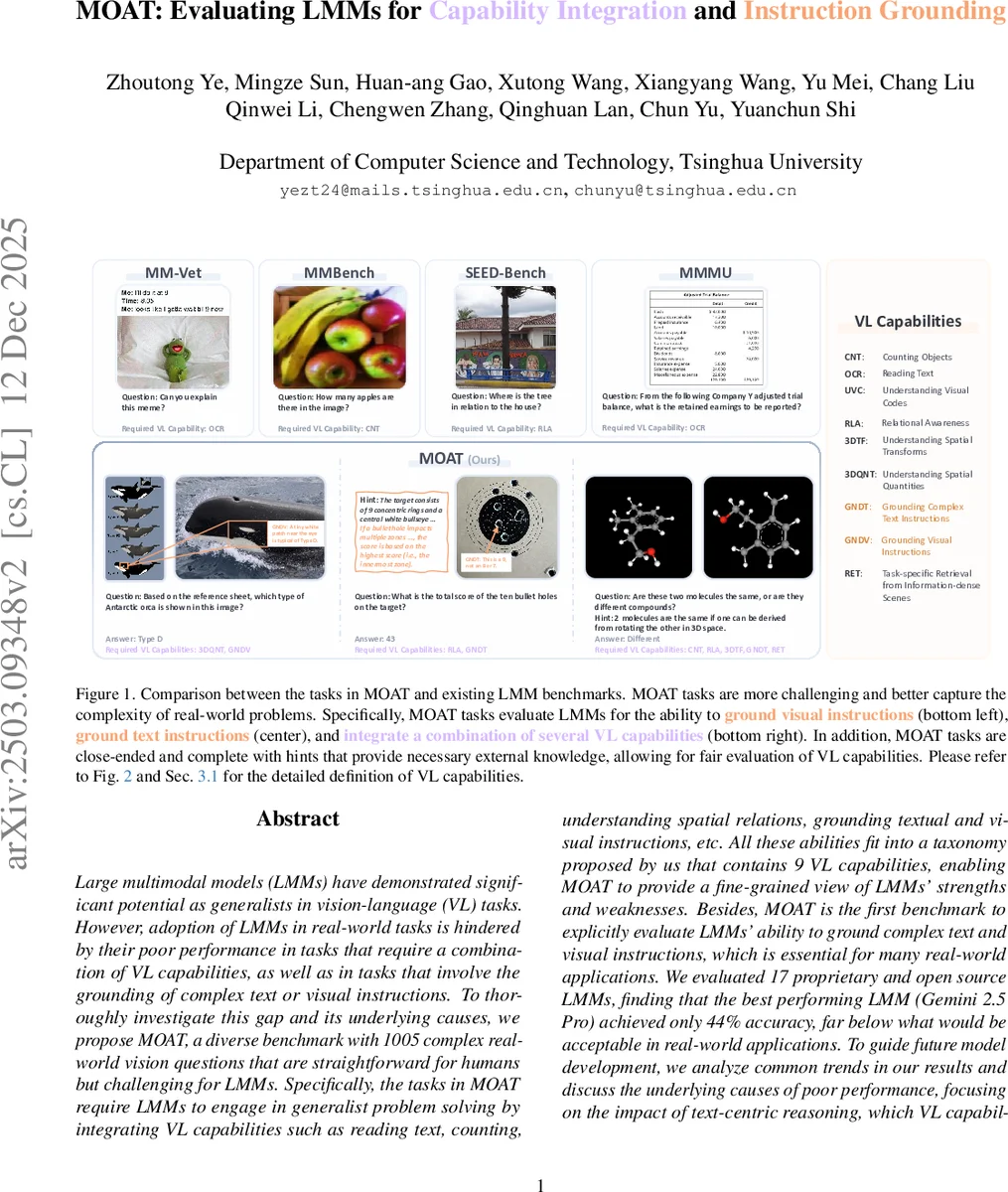

Large multimodal models (LMMs) have demonstrated significant potential as generalists in vision-language (VL) tasks. However, adoption of LMMs in real-world tasks is hindered by their poor performance in tasks that require a combination of VL capabilities, as well as in tasks that involve the grounding of complex text or visual instructions. To thoroughly investigate this gap and its underlying causes, we propose MOAT, a diverse benchmark with 1005 complex real-world vision questions that are straightforward for humans but challenging for LMMs. Specifically, the tasks in MOAT require LMMs to engage in generalist problem solving by integrating VL capabilities such as reading text, counting, understanding spatial relations, grounding textual and visual instructions, etc. All these abilities fit into a taxonomy proposed by us that contains 9 VL capabilities, enabling MOAT to provide a fine-grained view of LMMs’ strengths and weaknesses. Besides, MOAT is the first benchmark to explicitly evaluate LMMs’ ability to ground complex text and visual instructions, which is essential for many real-world applications. We evaluated 17 proprietary and open source LMMs, finding that the best performing LMM (Gemini 2.5 Pro) achieved only 44% accuracy, far below what would be acceptable in real-world applications. To guide future model development, we analyze common trends in our results and discuss the underlying causes of poor performance, focusing on the impact of text-centric reasoning, which VL capabilities form bottlenecks in complex tasks, and the potential harmful effects of tiling. Code and data are available at https://cambrian-yzt.github.io/MOAT/.

💡 Research Summary

The paper “MOAT: Evaluating LMMs for Capability Integration and Instruction Grounding” introduces a novel benchmark designed to rigorously assess the limitations of Large Multimodal Models (LMMs) that hinder their real-world application. The core premise is that while LMMs show promise on individual vision-language (VL) tasks, they struggle significantly with tasks that require the integration of multiple VL capabilities and the grounding of complex textual or visual instructions—both essential for practical problem-solving.

To address this evaluation gap, the authors propose MOAT (Multimodal model Of All Trades), a challenging benchmark comprising 1,005 complex, real-world visual questions. These questions are designed to be straightforward for humans but difficult for LMMs, often requiring the simultaneous application of up to six different VL skills to solve a single problem. A key innovation of MOAT is its fine-grained taxonomy of 9 VL capabilities, which expands upon existing classifications. This taxonomy includes: Recognition (Counting/CNT, Optical Character Recognition/OCR, Understanding Visual Codes/UVC), Spatial Understanding (Relational Awareness/RLA, Understanding Spatial Transforms/3DTF, Understanding Spatial Quantities/3DQNT), Instruction Grounding (Grounding Text Instructions/GNDT, Grounding Visual Instructions/GNDV—a first for LMM benchmarks), and Handling Complex Scenarios (Task-specific Retrieval/RET). Each question in MOAT is annotated with the specific capabilities required, enabling detailed performance diagnostics. Furthermore, to ensure a fair evaluation focused on VL reasoning rather than world knowledge, all necessary domain-specific information is provided as hints within the questions.

The evaluation of 17 state-of-the-art proprietary and open-source LMMs (including GPT-4o, Gemini Pro, Claude 3, and LLaVA variants) on MOAT reveals a stark performance gap. The best-performing model, Gemini 2.5 Pro, achieved only 44% accuracy, far below the estimated human performance of over 80% and deemed unacceptable for real-world deployment. Performance was particularly poor in Counting (CNT), Relational Awareness (RLA), and both types of Instruction Grounding (GNDT & GNDV).

The paper provides an in-depth analysis to guide future model development, identifying several key insights:

- The Pitfalls of Text-Centric Reasoning: Employing Chain-of-Thought (CoT) prompting yields mixed results. While it can improve performance on context-dependent capabilities like instruction grounding, it often harms performance on visual/spatial capabilities (e.g., 3D transforms, spatial relations), suggesting LMMs may overly rely on linguistic reasoning at the expense of genuine visual understanding.

- Identifying Bottleneck Capabilities: By creating simplified versions of questions that reduce demand on specific capabilities, the study shows that failure in a single bottleneck capability (which varies across models) can cause the entire complex task to fail, highlighting the need for balanced model development.

- The Harmful Effects of Tiling: An architectural analysis reveals that the common practice of tiling high-resolution images for processing can severely degrade performance on capabilities like object counting. For some models, simply resizing the image to avoid tiling led to significant accuracy improvements on counting tasks, pointing to a critical area for architectural refinement.

In conclusion, MOAT exposes a significant shortfall in current LMMs’ ability to function as robust generalist problem-solvers in vision-language tasks. By emphasizing capability integration and instruction grounding—two crucial skills for practical utility—and providing a fine-grained diagnostic framework, MOAT sets a new standard for evaluating LMMs beyond narrow task saturation. The low overall scores underscore that achieving human-like proficiency in complex, integrated visual reasoning remains a major unsolved challenge, offering clear directions for future research in model architecture, training, and evaluation.

Comments & Academic Discussion

Loading comments...

Leave a Comment