Conditional Text-to-Image Generation with Reference Guidance

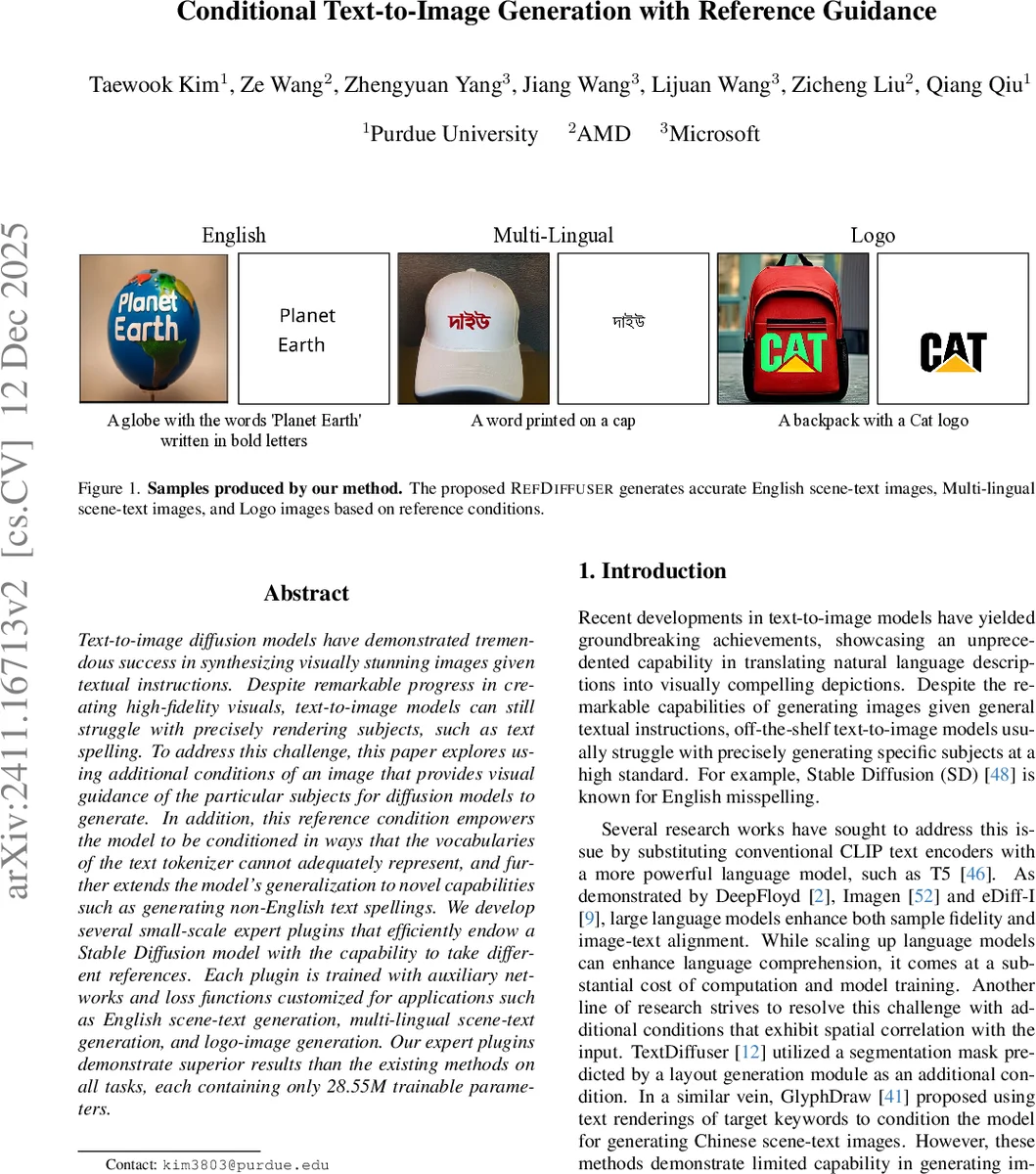

Text-to-image diffusion models have demonstrated tremendous success in synthesizing visually stunning images given textual instructions. Despite remarkable progress in creating high-fidelity visuals, text-to-image models can still struggle with precisely rendering subjects, such as text spelling. To address this challenge, this paper explores using additional conditions of an image that provides visual guidance of the particular subjects for diffusion models to generate. In addition, this reference condition empowers the model to be conditioned in ways that the vocabularies of the text tokenizer cannot adequately represent, and further extends the model’s generalization to novel capabilities such as generating non-English text spellings. We develop several small-scale expert plugins that efficiently endow a Stable Diffusion model with the capability to take different references. Each plugin is trained with auxiliary networks and loss functions customized for applications such as English scene-text generation, multi-lingual scene-text generation, and logo-image generation. Our expert plugins demonstrate superior results than the existing methods on all tasks, each containing only 28.55M trainable parameters.

💡 Research Summary

**

The paper tackles a well‑known limitation of modern text‑to‑image diffusion models: they often fail to reproduce precise visual details that are hard to describe with tokens, such as exact spellings of scene text or the intricate shapes of logos. To overcome this, the authors introduce REF‑DIFFUSER, a framework that augments Stable Diffusion (SD) with an additional reference image condition. The reference image provides an explicit visual cue for the target subject, while a binary spatial mask indicates where in the scene the subject should appear.

Core Architecture

- Reference Encoding – The reference image is encoded with the same VAE used by SD, producing a latent tensor s that lives in the same space as the noisy image latent zₜ.

- Input Concatenation – The model input becomes xₜ = concat(zₜ, s, m), where m is the down‑sampled binary mask. This expands the channel dimension from c to 2c + 1.

- Extra Input Convolution – An additional convolutional layer (Input Conv2) processes the concatenated tensor; its output is added element‑wise to the original Input Conv1 output, allowing the UNet to consume the new channels without redesigning the whole network.

- Low‑Rank Adaptation (LoRA) – Instead of fine‑tuning the entire UNet, the authors apply LoRA adapters to a small subset of weights. Each task‑specific “expert plugin” contains at most 28.55 M trainable parameters, a tiny fraction of the ~860 M parameters of the base SD model.

Task‑Specific Plugins

- English Scene‑Text Generation – OCR is used to locate text in training images. A reference image is created by rendering the desired string onto a blank canvas at the detected location; the mask marks the exact shape of the text. During training, a lightweight OCR network receives RoI‑aligned patches from the denoised latent (time step 0) and contributes a recognition loss L_recog. The total loss is a weighted sum of the diffusion reconstruction loss L_diff and L_recog.

- Multilingual Scene‑Text Generation – Because multilingual OCR datasets are scarce, the authors pre‑train on a large English OCR corpus, then fine‑tune on a mixture of real multilingual OCR images and synthetically generated ones (random fonts, scripts, backgrounds). To prevent synthetic artifacts from dominating, a scaling factor α reduces the contribution of synthetic samples to L_diff. The character set is expanded to cover all target scripts, enabling the same architecture to generate Greek, Russian, Thai, etc.

- Logo Generation – Reference images are built by pasting a collected logo onto a blank canvas. An auxiliary logo classifier receives RoI‑aligned features and is trained with a cross‑entropy loss L_logo. Although the classifier is trained on a closed set of logos, the diffusion model learns to generalize to unseen logos, as demonstrated qualitatively.

Experimental Results

The authors evaluate on three benchmarks: English scene‑text, multilingual scene‑text, and logo synthesis. Accuracy is measured by OCR‑based spelling correctness for text tasks and top‑1 classification for logos. REF‑DIFFUSER achieves 61.73 % (English), 46.88 % (multilingual), and 44.07 % (logos), substantially outperforming prior methods such as TextDiffuser and GlyphDraw. Visual quality metrics (FID, IS) also improve. Importantly, each expert plugin adds less than 28.55 M parameters, allowing training on a single 8 GB GPU.

Strengths

- Direct visual guidance eliminates reliance on token vocabularies, enabling accurate rendering of out‑of‑vocabulary characters and complex logos.

- Parameter efficiency via LoRA makes the approach practical for multiple downstream tasks without retraining the full diffusion model.

- Synthetic data integration with loss scaling leverages cheap data while mitigating over‑fitting to synthetic artifacts.

Limitations

- The pipeline depends on high‑quality OCR and mask generation; errors in these pre‑processing steps propagate to the final output.

- Synthetic data still introduces occasional artifacts; the scaling factor α is manually tuned per task.

- Separate plugins must be maintained for each domain, which may hinder a truly universal “one‑model‑fits‑all” solution.

Future Directions

The authors suggest (1) end‑to‑end learning of masks and reference selection, (2) extending the framework to non‑text visual concepts such as textures or materials, (3) exploring larger multimodal pre‑training to further shrink adapter size, and (4) building user‑friendly interfaces that let non‑experts supply reference images or sketches on the fly.

Conclusion

REF‑DIFFUSER demonstrates that a modest augmentation—adding a VAE‑encoded reference image and a spatial mask—combined with lightweight LoRA adapters and task‑specific auxiliary losses, can dramatically improve the fidelity of text‑to‑image diffusion models for challenging subjects like accurate scene text and logos. The method achieves state‑of‑the‑art performance while keeping the parameter budget low, offering a scalable path toward customized, high‑precision generative models across diverse visual domains.

Comments & Academic Discussion

Loading comments...

Leave a Comment