M3-Embedding: Multi-Linguality, Multi-Functionality, Multi-Granularity Text Embeddings Through Self-Knowledge Distillation

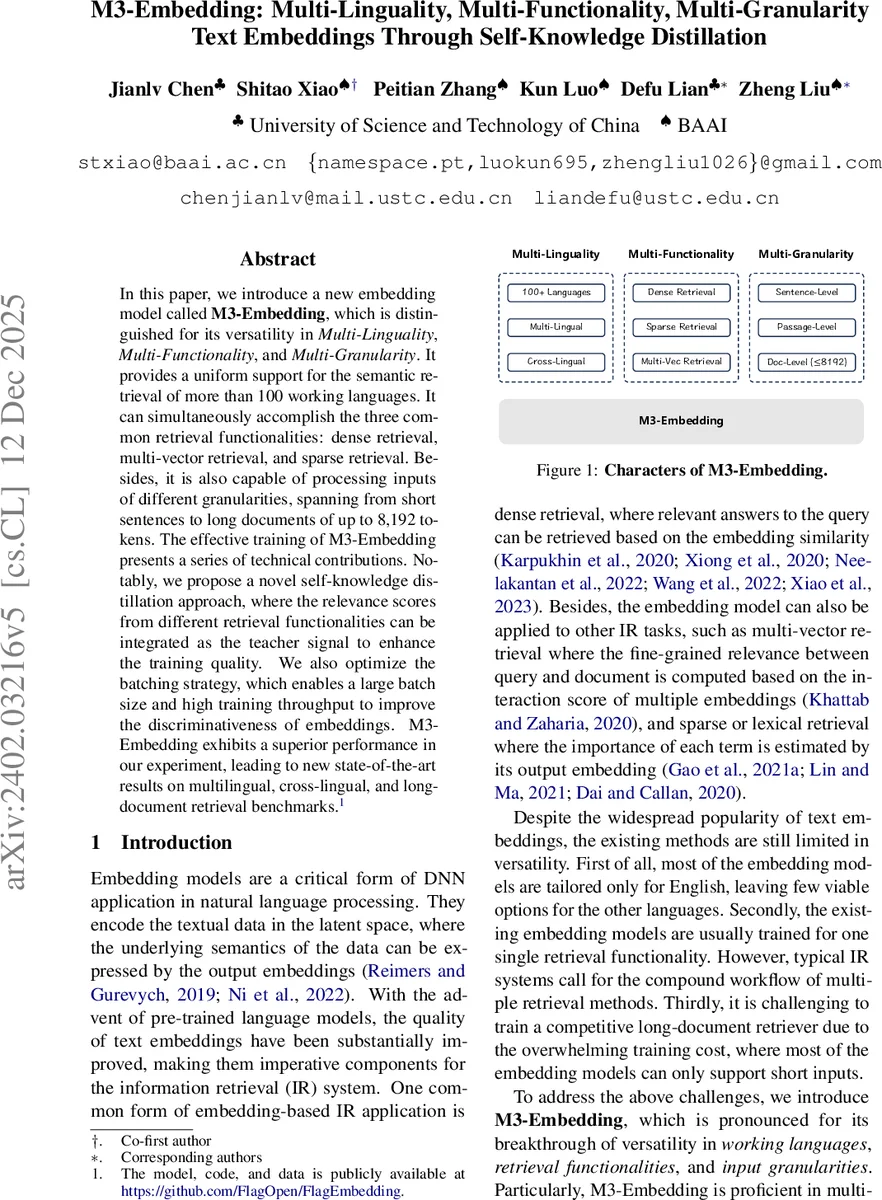

In this paper, we introduce a new embedding model called M3-Embedding, which is distinguished for its versatility in \textit{Multi-Linguality}, \textit{Multi-Functionality}, and \textit{Multi-Granularity}. It provides a uniform support for the semantic retrieval of more than 100 working languages. It can simultaneously accomplish the three common retrieval functionalities: dense retrieval, multi-vector retrieval, and sparse retrieval. Besides, it is also capable of processing inputs of different granularities, spanning from short sentences to long documents of up to 8,192 tokens. The effective training of M3-Embedding presents a series of technical contributions. Notably, we propose a novel self-knowledge distillation approach, where the relevance scores from different retrieval functionalities can be integrated as the teacher signal to enhance the training quality. We also optimize the batching strategy, which enables a large batch size and high training throughput to improve the discriminativeness of embeddings. M3-Embedding exhibits a superior performance in our experiment, leading to new state-of-the-art results on multilingual, cross-lingual, and long-document retrieval benchmarks.

💡 Research Summary

The paper presents M3-Embedding, a groundbreaking text embedding model that achieves unprecedented versatility across three key dimensions: Multi-Linguality, Multi-Functionality, and Multi-Granularity.

Core Capabilities:

- Multi-Linguality: M3-Embedding provides uniform semantic retrieval support for over 100 languages. It learns a common semantic space, enabling both within-language (multilingual) and cross-language retrieval.

- Multi-Functionality: Uniquely, a single M3-Embedding model simultaneously supports three fundamental retrieval paradigms:

- Dense Retrieval: Uses the

Comments & Academic Discussion

Loading comments...

Leave a Comment