Bidirectional Normalizing Flow: From Data to Noise and Back

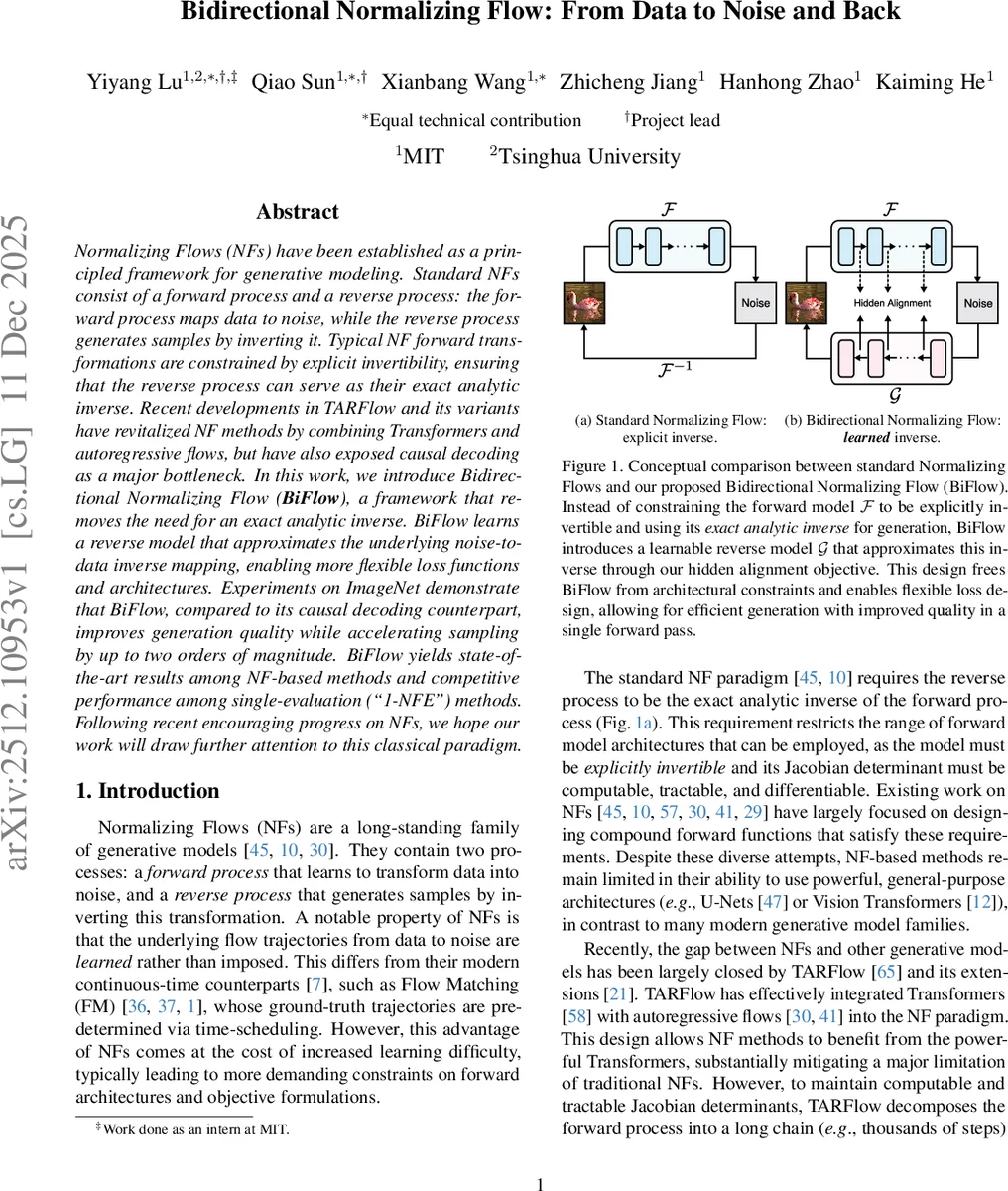

Normalizing Flows (NFs) have been established as a principled framework for generative modeling. Standard NFs consist of a forward process and a reverse process: the forward process maps data to noise, while the reverse process generates samples by inverting it. Typical NF forward transformations are constrained by explicit invertibility, ensuring that the reverse process can serve as their exact analytic inverse. Recent developments in TARFlow and its variants have revitalized NF methods by combining Transformers and autoregressive flows, but have also exposed causal decoding as a major bottleneck. In this work, we introduce Bidirectional Normalizing Flow ($\textbf{BiFlow}$), a framework that removes the need for an exact analytic inverse. BiFlow learns a reverse model that approximates the underlying noise-to-data inverse mapping, enabling more flexible loss functions and architectures. Experiments on ImageNet demonstrate that BiFlow, compared to its causal decoding counterpart, improves generation quality while accelerating sampling by up to two orders of magnitude. BiFlow yields state-of-the-art results among NF-based methods and competitive performance among single-evaluation (“1-NFE”) methods. Following recent encouraging progress on NFs, we hope our work will draw further attention to this classical paradigm.

💡 Research Summary

Normalizing Flows (NFs) provide a principled way to model complex data distributions by learning a bijective mapping between data and a simple Gaussian prior. Traditional NFs require the forward transformation to be explicitly invertible so that its analytic inverse can be used for sampling, which imposes strict architectural constraints (e.g., coupling layers, autoregressive flows) and necessitates tractable Jacobian determinants. Recent advances such as TARFlow have combined powerful Transformer backbones with autoregressive flows, dramatically improving expressiveness. However, because the autoregressive component must respect causal masking, inference proceeds sequentially over thousands of steps, creating a severe bottleneck in sampling speed.

The paper introduces Bidirectional Normalizing Flow (BiFlow), a framework that decouples forward and reverse processes. The forward model Fθ is trained exactly as in conventional NFs, using maximum‑likelihood estimation to map data x to latent noise z = Fθ(x). After Fθ is fixed, a separate reverse model Gϕ is trained to approximate the inverse mapping from z back to x. Crucially, Gϕ is not required to be analytically invertible; it can be any architecture, including non‑causal bidirectional Transformers, U‑Nets, or ConvNeXt blocks, allowing far greater flexibility and parallelism.

Three strategies for training Gϕ are explored:

-

Naïve Distillation – a simple reconstruction loss L = D(x, Gϕ(Fθ(x))) applied only at the final output. This provides a weak learning signal because the model must learn the entire complex transformation in a single step.

-

Hidden Distillation – aligns each intermediate hidden state of the forward trajectory {xi} with the corresponding state of the reverse trajectory {hi}. While this supplies richer supervision, it forces the reverse model to repeatedly project back to the input dimensionality, limiting architectural freedom.

-

Hidden Alignment (proposed) – introduces learnable projection heads φi that map the reverse hidden states hi into a space that can be directly compared with the forward states xi. The loss Σi D(xi, φi(hi)) preserves the full trajectory supervision while allowing the reverse model to maintain its own internal representation, removing the restrictive input‑space constraint.

In addition to the reverse mapping, the authors address the score‑based denoising step used in TARFlow. TARFlow generates a noisy intermediate ˜x and then applies an explicit gradient‑based denoising operation, which roughly doubles inference cost. BiFlow integrates denoising directly into Gϕ by adding an extra block that learns to map the noisy output to clean data, eliminating the separate score computation.

Empirical evaluation on ImageNet 256×256 demonstrates that BiFlow, using a DiT‑B sized backbone for the forward model, achieves an FID of 2.39—substantially better than the improved TARFlow baseline—while being up to 697× faster in sampling (approximately two orders of magnitude). This places BiFlow at the state‑of‑the‑art among NF‑based methods and makes it competitive with single‑evaluation (1‑NFE) generative models from other families. The paper provides detailed ablations showing that hidden alignment consistently outperforms naïve and hidden distillation, and that the learned denoising block yields both speed and quality gains.

Key contributions are:

- Decoupling forward and reverse processes, freeing the reverse model from invertibility constraints and enabling modern non‑causal architectures.

- Proposing hidden alignment, a flexible supervision scheme that leverages the full forward trajectory without imposing input‑space projections.

- Embedding denoising into the reverse network, removing the costly score‑based post‑processing step.

- Demonstrating that learned inverse models can surpass the exact analytic inverse of the forward flow in both fidelity and efficiency.

The work revives interest in classic NF principles by showing that learning a bidirectional mapping, rather than pre‑scheduling trajectories as in diffusion or continuous‑time flows, can achieve competitive performance without sacrificing speed. Future directions include combining BiFlow with continuous normalizing flows, exploring flow‑matching objectives, and extending the approach to other modalities such as audio, video, and 3D data.

Comments & Academic Discussion

Loading comments...

Leave a Comment