D2M: A Decentralized, Privacy-Preserving, Incentive-Compatible Data Marketplace for Collaborative Learning

The rising demand for collaborative machine learning and data analytics calls for secure and decentralized data sharing frameworks that balance privacy, trust, and incentives. Existing approaches, including federated learning (FL) and blockchain-based data markets, fall short: FL often depends on trusted aggregators and lacks Byzantine robustness, while blockchain frameworks struggle with computation-intensive training and incentive integration. We present D2M, a decentralized data marketplace that unifies federated learning, blockchain arbitration, and economic incentives into a single framework for privacy-preserving data sharing. D2M enables data buyers to submit bid-based requests via blockchain smart contracts, which manage auctions, escrow, and dispute resolution. Computationally intensive training is delegated to CONE (Compute Network for Execution), an off-chain distributed execution layer. To safeguard against adversarial behavior, D2M integrates a modified YODA protocol with exponentially growing execution sets for resilient consensus, and introduces Corrected OSMD to mitigate malicious or low-quality contributions from sellers. All protocols are incentive-compatible, and our game-theoretic analysis establishes honesty as the dominant strategy. We implement D2M on Ethereum and evaluate it over benchmark datasets (MNIST, Fashion-MNIST, and CIFAR-10) under varying adversarial settings. D2M achieves up to 99% accuracy on MNIST and 90% on Fashion-MNIST, with less than 3% degradation with up to 30% Byzantine nodes, and 56% accuracy on CIFAR-10 despite its complexity. Our results show that D2M ensures privacy, maintains robustness under adversarial conditions, and scales efficiently with the number of participants, making it a practical foundation for real-world decentralized data sharing.

💡 Research Summary

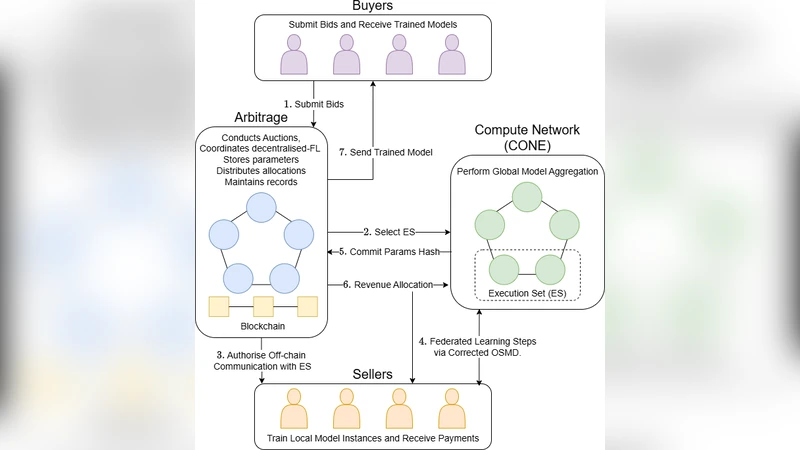

The paper introduces D2M, a decentralized data marketplace that simultaneously addresses privacy, trust, and economic incentives for collaborative machine learning. The two existing families of solutions, federated learning (FL) and blockchain-based data markets, each have limitations: FL often relies on a trusted aggregator and lacks Byzantine robustness, while blockchain approaches cannot efficiently handle the heavy computation required for model training and struggle to integrate incentives. D2M unifies three components into a single architecture.

First, data buyers post bid‑based requests through Ethereum smart contracts. The contracts run an auction, lock the buyer’s payment in escrow, and select the most suitable data sellers. All financial interactions, including dispute resolution, are encoded on‑chain, eliminating the need for a central authority.
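
The auction and escrow flow described above can be illustrated with a small Python state machine. This is only a sketch under stated assumptions, not the paper's smart contract: the class `Escrow`, its method names, and the selection rule (cheapest asks that fit within the buyer's budget) are hypothetical, since the summary does not specify how "most suitable" sellers are chosen.

```python
from dataclasses import dataclass, field


@dataclass
class Escrow:
    """Toy stand-in for an on-chain auction with escrow (hypothetical names)."""
    buyer: str
    budget: int                                   # payment locked by the buyer
    asks: dict = field(default_factory=dict)      # seller -> asking price
    selected: list = field(default_factory=list)  # winning sellers
    state: str = "OPEN"                           # OPEN -> LOCKED -> SETTLED/REFUNDED

    def submit_ask(self, seller: str, price: int) -> None:
        if self.state != "OPEN":
            raise RuntimeError("auction is closed")
        if price <= 0:
            raise ValueError("price must be positive")
        self.asks[seller] = price

    def close_auction(self, max_sellers: int) -> list:
        # Assumed rule: take the cheapest asks that still fit in the budget.
        if self.state != "OPEN":
            raise RuntimeError("auction already closed")
        remaining = self.budget
        for seller, price in sorted(self.asks.items(), key=lambda kv: (kv[1], kv[0])):
            if len(self.selected) == max_sellers:
                break
            if price <= remaining:
                self.selected.append(seller)
                remaining -= price
        self.state = "LOCKED"
        return list(self.selected)

    def settle(self, job_ok: bool) -> dict:
        # Pay sellers if the job is accepted; refund the buyer otherwise.
        if self.state != "LOCKED":
            raise RuntimeError("nothing to settle")
        if job_ok:
            payouts = {s: self.asks[s] for s in self.selected}
            payouts[self.buyer] = self.budget - sum(payouts.values())
            self.state = "SETTLED"
        else:
            payouts = {self.buyer: self.budget}
            self.state = "REFUNDED"
        return payouts
```

The `job_ok` flag stands in for the outcome of dispute resolution; in a real contract that decision would come from the consensus layer rather than from a caller-supplied boolean.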

Second, the actual training is offloaded to CONE (Compute Network for Execution), an off‑chain distributed execution layer that aggregates GPU/TPU resources from participating nodes. CONE executes the training job, returns a cryptographic hash of the model parameters, and only this hash is recorded on the blockchain, preserving data confidentiality while still providing verifiable proof of execution.
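
To make the idea of recording only a hash concrete, here is a minimal Python sketch of committing to model parameters. The summary does not say which hash function or serialization D2M uses; SHA-256, the big-endian float64 encoding, and the function names `commit_parameters` and `verify_commitment` are assumptions made for illustration.

```python
import hashlib
import struct


def commit_parameters(params: dict) -> str:
    """Return a SHA-256 hex digest over named parameter vectors.

    Names are processed in sorted order so the digest does not depend
    on dictionary insertion order.
    """
    h = hashlib.sha256()
    for name in sorted(params):
        values = params[name]
        encoded_name = name.encode("utf-8")
        # Length prefixes keep the encoding unambiguous.
        h.update(struct.pack(">I", len(encoded_name)))
        h.update(encoded_name)
        h.update(struct.pack(">I", len(values)))
        h.update(struct.pack(f">{len(values)}d", *values))
    return h.hexdigest()


def verify_commitment(params: dict, recorded_digest: str) -> bool:
    """Check locally held parameters against a previously recorded digest."""
    return commit_parameters(params) == recorded_digest
```

A digest like this only matches when parameters agree bit for bit, so any scheme that compares hashes across independent executors needs deterministic training or some agreed rounding step; how D2M handles this is not stated in the summary.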

Third, D2M integrates a modified YODA consensus protocol and a Corrected Online Stochastic Mirror Descent (OSMD) mechanism to guarantee robustness and quality control. The original YODA randomly selects a small execution set; the authors extend this by exponentially growing the execution set in successive rounds. Early malicious results are therefore outvoted as more nodes join, dramatically reducing the probability of successful tampering. Corrected OSMD dynamically re‑weights contributors based on observed loss, penalizes low‑quality or malicious sellers (by slashing their escrow), and ensures that honest contribution maximizes expected payoff. A game‑theoretic analysis proves that honesty is a dominant strategy for all participants, establishing incentive compatibility across the marketplace.
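
The re-weighting idea can be sketched with the textbook form of mirror descent on the probability simplex, which with an entropy regularizer reduces to a multiplicative (exponentiated-gradient) update. This is not the paper's Corrected OSMD, whose correction term and loss definition are not given here; the function name `reweight`, the step size `eta`, and the example losses are all assumptions.

```python
import math


def reweight(weights, losses, eta=0.5):
    """One entropic mirror-descent step: w_i <- w_i * exp(-eta * loss_i), renormalized."""
    if len(weights) != len(losses):
        raise ValueError("weights and losses must have the same length")
    scaled = [w * math.exp(-eta * loss) for w, loss in zip(weights, losses)]
    total = sum(scaled)
    return [s / total for s in scaled]


# Four sellers start with equal weight; the last one keeps reporting a
# high loss (assumed values), so its weight shrinks round after round.
weights = [0.25, 0.25, 0.25, 0.25]
for _ in range(10):
    weights = reweight(weights, [0.1, 0.1, 0.1, 2.0])
```

After the loop the three low-loss sellers share almost all of the weight equally, while the high-loss seller's weight is close to zero. A payout or slashing rule could then be tied to these weights, though the exact rule used by D2M is not described in the summary.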

Security analysis shows that raw data never leave the owners’ premises, preserving privacy through the federated learning paradigm, while the blockchain only stores immutable hashes. The system tolerates up to 30 % Byzantine nodes with less than a 3 % drop in model accuracy, demonstrating strong resilience.
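
A back-of-the-envelope calculation helps show why larger execution sets make tampering harder at a 30% Byzantine fraction. The sketch below is not the paper's YODA analysis: it assumes each member of an execution set is independently Byzantine with probability `f`, that results are decided by strict majority, and that set sizes double each round starting from 4. All of these are illustrative assumptions.

```python
from math import comb


def byzantine_majority_prob(k: int, f: float) -> float:
    """Probability that strictly more than half of k sampled nodes are Byzantine."""
    threshold = k // 2 + 1
    return sum(comb(k, i) * f**i * (1 - f) ** (k - i) for i in range(threshold, k + 1))


# Execution-set sizes 4, 8, 16, 32, 64 (assumed doubling schedule).
sizes = [4 * 2**r for r in range(5)]
probs = [byzantine_majority_prob(k, 0.3) for k in sizes]

for k, p in zip(sizes, probs):
    print(f"set size {k:3d}: P(Byzantine majority) = {p:.6f}")
```

Under these assumptions the probability of a Byzantine majority falls as the set size doubles, which is the intuition behind growing the execution set when early rounds disagree.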

The authors implement D2M on an Ethereum testnet and evaluate it on three benchmark datasets: MNIST, Fashion-MNIST, and CIFAR-10. D2M reaches up to 99% accuracy on MNIST, 90% on Fashion-MNIST, and 56% on CIFAR-10. Accuracy degradation remains under 3% even when 30% of the execution nodes behave adversarially. Scaling experiments show near-linear growth in transaction latency and training delay as the number of participants increases from 10 to 100, while gas costs stay limited to auction and escrow management.

Limitations are acknowledged: performance on complex datasets (e.g., CIFAR‑10) is modest, CONE’s computational and bandwidth costs could become prohibitive at large scale, and smart‑contract gas limits restrict on‑chain logic complexity. Future work proposes cost‑optimizing the execution layer, extending the protocol to support multiple simultaneous models, and incorporating zero‑knowledge proofs for stronger privacy guarantees.

In summary, D2M presents a practical, end‑to‑end solution that merges blockchain‑based economic mechanisms with off‑chain high‑performance training and robust consensus, offering a viable foundation for real‑world decentralized data sharing and collaborative learning.