Zero-shot Adaptation of Stable Diffusion via Plug-in Hierarchical Degradation Representation for Real-World Super-Resolution

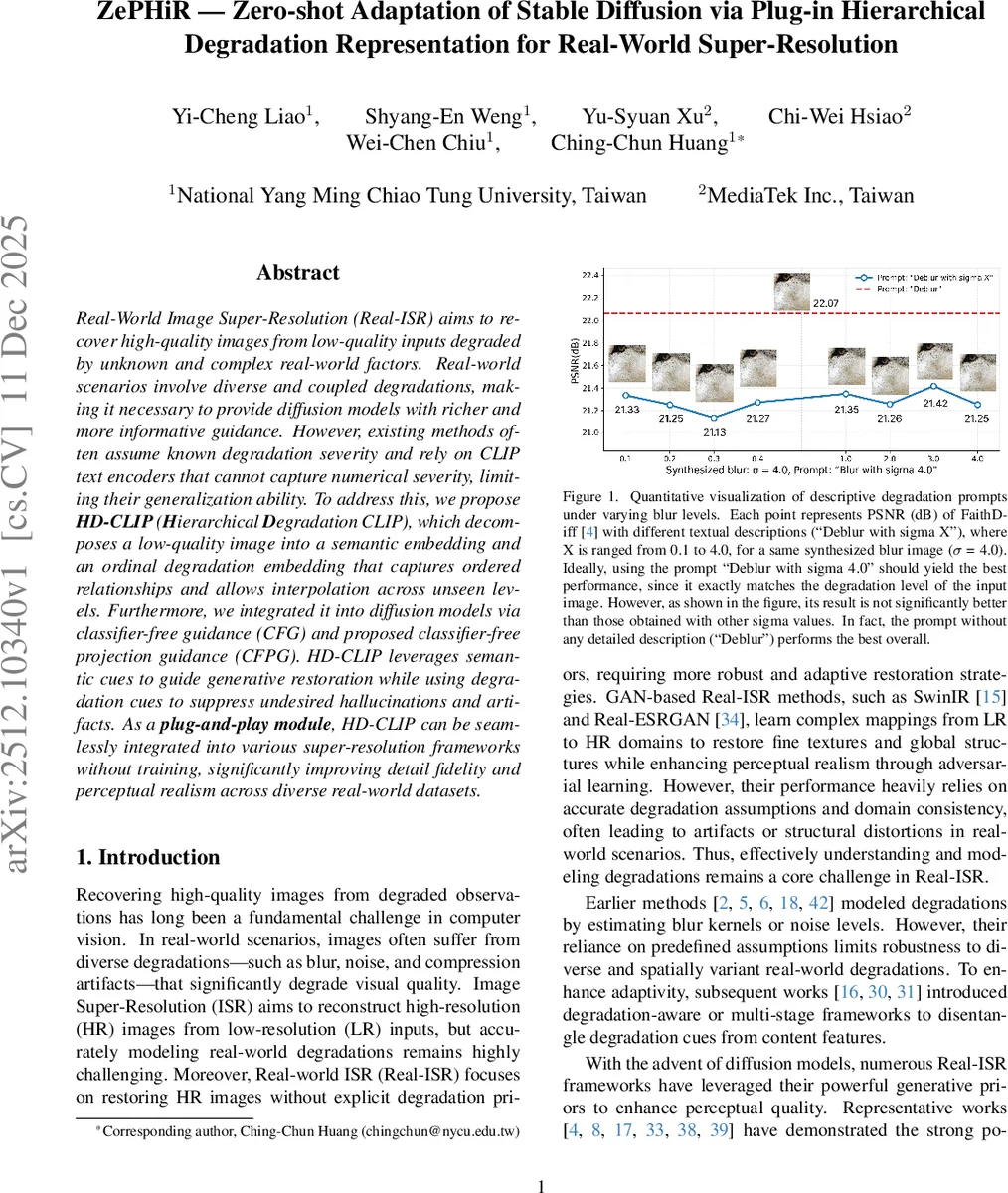

Real-World Image Super-Resolution (Real-ISR) aims to recover high-quality images from low-quality inputs degraded by unknown and complex real-world factors. Real-world scenarios involve diverse and coupled degradations, so diffusion models need richer and more informative guidance. However, existing methods often assume known degradation severity and rely on CLIP text encoders that cannot capture numerical severity, which limits their generalization. To address this, we propose HD-CLIP (Hierarchical Degradation CLIP), which decomposes a low-quality image into a semantic embedding and an ordinal degradation embedding that captures ordered relationships and allows interpolation across unseen levels. We further integrate HD-CLIP into diffusion models via classifier-free guidance (CFG) and propose classifier-free projection guidance (CFPG). HD-CLIP uses semantic cues to guide generative restoration and degradation cues to suppress undesired hallucinations and artifacts. As a plug-and-play module, HD-CLIP can be integrated into various super-resolution frameworks without training, significantly improving detail fidelity and perceptual realism across diverse real-world datasets.

💡 Research Summary

This paper presents ZePHiR, a zero-shot, plug-and-play adaptation framework for real-world image super-resolution (Real-ISR) based on Stable Diffusion. The core challenge in Real-ISR is restoring high-quality images from low-quality inputs degraded by complex, unknown, and often mixed real-world factors such as blur, noise, and compression artifacts. Existing diffusion-based methods often rely on CLIP text encoders, which cannot represent numerical quantities such as degradation severity, or assume that the severity is known in advance; both choices limit their generalization.

To address this, the authors propose HD-CLIP (Hierarchical Degradation CLIP), a key module that learns to disentangle and represent degradation in a structured manner. HD-CLIP decomposes a low-quality (LQ) image into two components: a semantic embedding capturing the image content and an ordinal degradation embedding capturing both the degradation type (e.g., Blur, JPEG) and its intensity level. The innovation lies in constructing a “Composition Ordinal Text Embedding” space. For a prompt like “Blur with sigma 1.0”, the embedding is formed by summing: 1) the base text embedding for the degradation type (“Blur”), 2) an “ordinal embedding” derived from the normalized intensity value (1.0) using a sinusoidal positional encoding function, which inherently encodes ordered relationships, and 3) a learnable “shift embedding” specific to the degradation type. This design allows the model to understand that sigma 2.0 represents stronger blur than sigma 1.0. Furthermore, HD-CLIP employs a “Local Interpolation Regression” strategy, enabling it to estimate unseen, intermediate degradation levels (e.g., sigma 0.7) by interpolating between the nearest known level embeddings in the ordinal space.
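The composition and interpolation steps above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation. The random `BASE` vectors stand in for frozen CLIP text embeddings, `SHIFT` is zero-initialised because nothing is trained here, and the intensity ranges in `RANGE` and the 16-dimensional width are invented for illustration. `local_interpolation_regression` is one plausible reading of "Local Interpolation Regression": project a query embedding onto the segment between its two nearest anchor-level embeddings and map the projection back to a level. All function names are hypothetical.

```python
import numpy as np

EMBED_DIM = 16  # hypothetical width; real CLIP text embeddings are much wider
rng = np.random.default_rng(0)

# Hypothetical stand-ins for frozen CLIP text embeddings of each degradation type.
BASE = {"Blur": rng.normal(size=EMBED_DIM), "Noise": rng.normal(size=EMBED_DIM)}
# Per-type shift embeddings: learned in HD-CLIP, zero here because nothing is trained.
SHIFT = {name: np.zeros(EMBED_DIM) for name in BASE}
# Hypothetical (min, max) intensity ranges used to normalise levels to [0, 1].
RANGE = {"Blur": (0.0, 4.0), "Noise": (0.0, 50.0)}


def sinusoidal_encoding(t: float, dim: int = EMBED_DIM, max_period: float = 100.0) -> np.ndarray:
    """Sinusoidal encoding of a normalised level t in [0, 1].

    Frequencies lie in (0, 1] and t is scaled by pi, so every angle gap stays
    within [0, pi]; the distance between two encodings therefore grows
    monotonically with the gap between their levels.
    """
    half = dim // 2
    freqs = max_period ** (-np.arange(half) / half)
    angles = np.pi * t * freqs
    return np.concatenate([np.sin(angles), np.cos(angles)])


def composed_embedding(dtype: str, level: float) -> np.ndarray:
    """Base type embedding + ordinal embedding of the normalised level + type shift."""
    lo, hi = RANGE[dtype]
    t = (level - lo) / (hi - lo)
    return BASE[dtype] + sinusoidal_encoding(t) + SHIFT[dtype]


def local_interpolation_regression(query: np.ndarray, dtype: str, anchor_levels) -> float:
    """Estimate a (possibly unseen) level from a query embedding.

    Finds the nearest anchor embedding, pairs it with the closer adjacent anchor,
    projects the query onto the segment between them, and maps the projection
    fraction back to a level. Requires at least two anchor levels.
    """
    levels = sorted(anchor_levels)
    embs = np.stack([composed_embedding(dtype, lv) for lv in levels])
    dists = np.linalg.norm(embs - query, axis=1)
    i = int(np.argmin(dists))
    if i == 0:
        j = 1
    elif i == len(levels) - 1:
        j = i - 1
    else:
        j = i - 1 if dists[i - 1] < dists[i + 1] else i + 1
    seg = embs[j] - embs[i]
    w = float(np.clip(np.dot(query - embs[i], seg) / np.dot(seg, seg), 0.0, 1.0))
    return levels[i] + w * (levels[j] - levels[i])


e1, e2, e3 = (composed_embedding("Blur", s) for s in (1.0, 2.0, 3.0))
print(np.linalg.norm(e1 - e2) < np.linalg.norm(e1 - e3))  # True
query = composed_embedding("Blur", 0.7)  # synthetic query at a level not among the anchors
print(round(local_interpolation_regression(query, "Blur", [0.5, 1.0, 2.0, 3.0]), 2))  # 0.7
```

Because the base and shift terms are identical for a given degradation type, distances between two levels of the same type depend only on the sinusoidal term, which is why sigma 1.0 lands closer to sigma 2.0 than to sigma 3.0 and why a level between two anchors can be recovered by local projection.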

The second major contribution is a guidance strategy for diffusion models called “Classifier-free Projection Guidance (CFPG)”. Standard Classifier-free Guidance (CFG) linearly combines the conditional and unconditional noise predictions, extrapolating from the unconditional prediction toward the conditional one by a guidance scale. CFPG extends this on both sides. It projects the conditional prediction toward the direction of the HD-CLIP semantic embedding to strengthen content preservation, and it projects the unconditional prediction away from the direction of the degradation embedding to suppress hallucinations and artifacts introduced by the diffusion model. This gives a single mechanism that guides generation with both a positive signal (what to generate) and a negative signal (what to suppress).
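To make the contrast concrete, the sketch below implements standard CFG and a hypothetical projection-based combination of a positive (semantic) and a negative (degradation) condition. The noise-space directions `g_sem` and `g_deg` (each conditioned prediction minus the unconditional one), and the rule of removing the degradation-parallel component from the semantic direction before steering away from the degradation direction, are assumptions made for illustration; the summary does not give the exact CFPG equation, and `projection_guidance` is a hypothetical name. Inputs can be latents of any shape, since `np.vdot` flattens its arguments.

```python
import numpy as np


def cfg(eps_uncond: np.ndarray, eps_cond: np.ndarray, w: float) -> np.ndarray:
    """Standard classifier-free guidance; w = 1 returns the conditional prediction."""
    return eps_uncond + w * (eps_cond - eps_uncond)


def projection_guidance(eps_null, eps_sem, eps_deg, w_sem: float, w_deg: float) -> np.ndarray:
    """Hypothetical projection-style guidance (illustrative, not the paper's CFPG).

    eps_null: unconditional noise prediction
    eps_sem:  prediction conditioned on a semantic embedding (positive signal)
    eps_deg:  prediction conditioned on a degradation embedding (negative signal)
    """
    g_sem = eps_sem - eps_null  # direction the semantic condition pulls toward
    g_deg = eps_deg - eps_null  # direction the degradation condition pulls toward
    denom = np.vdot(g_deg, g_deg)
    if denom > 0:
        # Remove the part of the semantic direction that points along g_deg.
        g_sem = g_sem - (np.vdot(g_sem, g_deg) / denom) * g_deg
    # Follow the cleaned semantic direction and steer away from the degradation one.
    return eps_null + w_sem * g_sem - w_deg * g_deg


eps_null = np.zeros(2)
print(cfg(eps_null, np.array([1.0, 1.0]), 2.0).tolist())  # [2.0, 2.0]
print(projection_guidance(eps_null, np.array([1.0, 1.0]), np.array([1.0, 0.0]), 1.0, 1.0).tolist())  # [-1.0, 1.0]
```

With `w_deg = 0`, the output differs from `eps_null` only by a vector orthogonal to `g_deg`, so the semantic push cannot reintroduce the degradation direction; `w_deg` then sets how strongly the prediction is steered away from it.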

The entire ZePHiR framework is designed as a plug-and-play module. It can be seamlessly integrated into various pre-trained diffusion-based SR backbones (such as DiffBIR, PASD, SeeSR) without requiring any additional training of the base models. Extensive experiments on multiple real-world SR datasets (RealSR, DRealSR, RealBlur-J, etc.) demonstrate that ZePHiR significantly outperforms existing state-of-the-art methods in both quantitative metrics (PSNR, LPIPS, FID) and qualitative visual quality. It shows remarkable robustness in handling varying and mixed degradation severities. Ablation studies validate the effectiveness of each component: the ordinal embedding space, the local interpolation scheme, and the dual projection mechanism in CFPG.

In summary, this work reframes the Real-ISR problem by introducing a hierarchical and ordinal representation for real-world degradations. By making this representation actionable within diffusion models via a novel projection guidance technique, ZePHiR achieves superior fidelity and perceptual realism in a training-free, plug-and-play manner, offering a powerful and flexible tool for adaptive image restoration.
