Efficient-VLN: A Training-Efficient Vision-Language Navigation Model

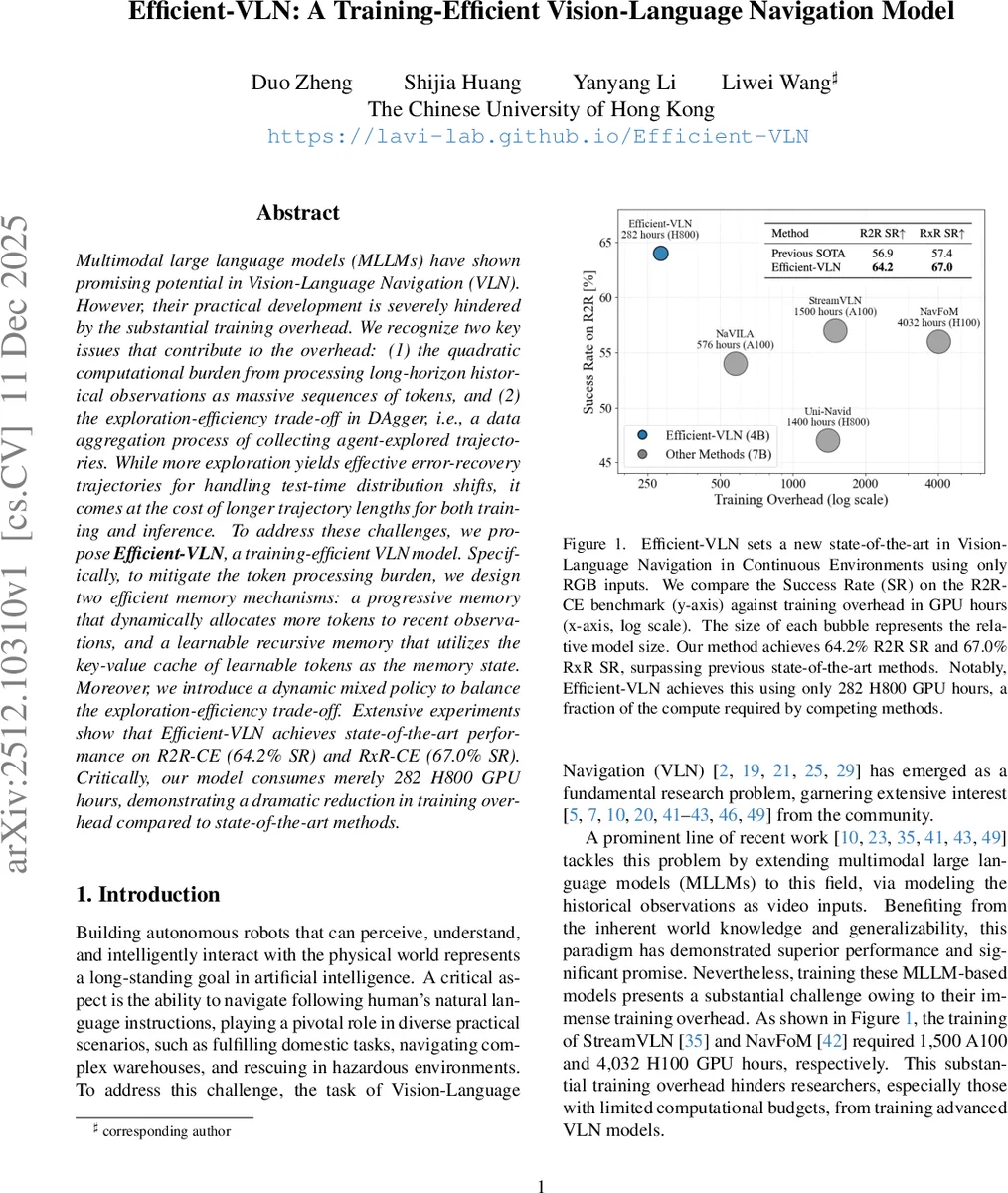

Multimodal large language models (MLLMs) have shown promising potential in Vision-Language Navigation (VLN). However, their practical development is severely hindered by the substantial training overhead. We recognize two key issues that contribute to the overhead: (1) the quadratic computational burden from processing long-horizon historical observations as massive sequences of tokens, and (2) the exploration-efficiency trade-off in DAgger, i.e., a data aggregation process of collecting agent-explored trajectories. While more exploration yields effective error-recovery trajectories for handling test-time distribution shifts, it comes at the cost of longer trajectory lengths for both training and inference. To address these challenges, we propose Efficient-VLN, a training-efficient VLN model. Specifically, to mitigate the token processing burden, we design two efficient memory mechanisms: a progressive memory that dynamically allocates more tokens to recent observations, and a learnable recursive memory that utilizes the key-value cache of learnable tokens as the memory state. Moreover, we introduce a dynamic mixed policy to balance the exploration-efficiency trade-off. Extensive experiments show that Efficient-VLN achieves state-of-the-art performance on R2R-CE (64.2% SR) and RxR-CE (67.0% SR). Critically, our model consumes merely 282 H800 GPU hours, demonstrating a dramatic reduction in training overhead compared to state-of-the-art methods.

💡 Research Summary

The paper “Efficient-VLN: A Training-Efficient Vision-Language Navigation Model” addresses a critical bottleneck in the development of Multimodal Large Language Model (MLLM)-based agents for Vision-Language Navigation (VLN). While MLLMs show great promise in this embodied AI task, their practical adoption is severely hindered by prohibitive training costs. The authors identify two primary sources of this overhead: (1) the quadratic computational burden from processing long sequences of historical visual observations as tokens, and (2) the exploration-efficiency trade-off inherent in the DAgger data aggregation process, where more exploration for error-recovery leads to longer, costlier trajectories.

To tackle these challenges, the authors propose the Efficient-VLN framework, which introduces innovations across memory representation, spatial reasoning, and training strategy.

First, to reduce the token processing load, the paper designs two novel, efficient memory mechanisms. The Progressive Memory Representation mimics human forgetting by dynamically allocating token budgets based on temporal recency. It applies mild spatial compression to recent visual frames and progressively stronger compression to older ones, drastically reducing the total token count for historical context. The Learnable Recursive Memory Representation offers a different approach by utilizing the Key-Value (KV) cache of a set of learnable “sentinel tokens” as a fixed-size memory state. This state is recursively updated across time steps, propagating context without linearly increasing token count, and using the KV cache helps mitigate gradient propagation issues in deep models.

Second, to enhance the model’s spatial understanding without requiring depth sensors, the paper proposes a Geometry-Enhanced Visual Representation. It extracts latent 3D geometry tokens from raw RGB video using a 3D geometry encoder (StreamVGGT) and fuses them with standard 2D visual features, enriching the agent’s perception of the environment.

Third, to optimize the data collection process, the authors introduce a Dynamic Mixed Policy for DAgger. Instead of using a fixed ratio between the learner and oracle policies, this policy starts with a high proportion of learner actions to induce and capture error-recovery scenarios, then gradually shifts towards the oracle policy to ensure task completion. This balances the need for informative error data with the desire for shorter, more efficient training trajectories.

The model is built upon the Qwen2.5-VL backbone and trained in a two-stage process: first on a mixed dataset (R2R-CE and RxR-CE) to learn basic navigation, then refined using DAgger with the proposed dynamic policy.

Extensive experiments on the R2R-CE and RxR-CE benchmarks demonstrate state-of-the-art performance, achieving 64.2% Success Rate (SR) on R2R-CE and 67.0% SR on RxR-CE. The most striking result is the dramatic reduction in training cost: Efficient-VLN requires only 282 H800 GPU hours, a fraction of the compute needed by previous top methods like StreamVLN (1,500 A100 hours) or NavFoM (4,032 H100 hours). Ablation studies reveal that the progressive memory excels in long-horizon tasks (RxR-CE), while the recursive memory is effective for shorter trajectories but struggles with very long-term dependencies. The dynamic mixed policy is shown to reduce exploration overhead by 56% while improving success rates.

In conclusion, Efficient-VLN establishes a new paradigm for developing high-performance VLN models that are not only effective but also dramatically more efficient to train, significantly lowering the barrier to entry for research in this field.

Comments & Academic Discussion

Loading comments...

Leave a Comment