Latent Chain-of-Thought World Modeling for End-to-End Driving

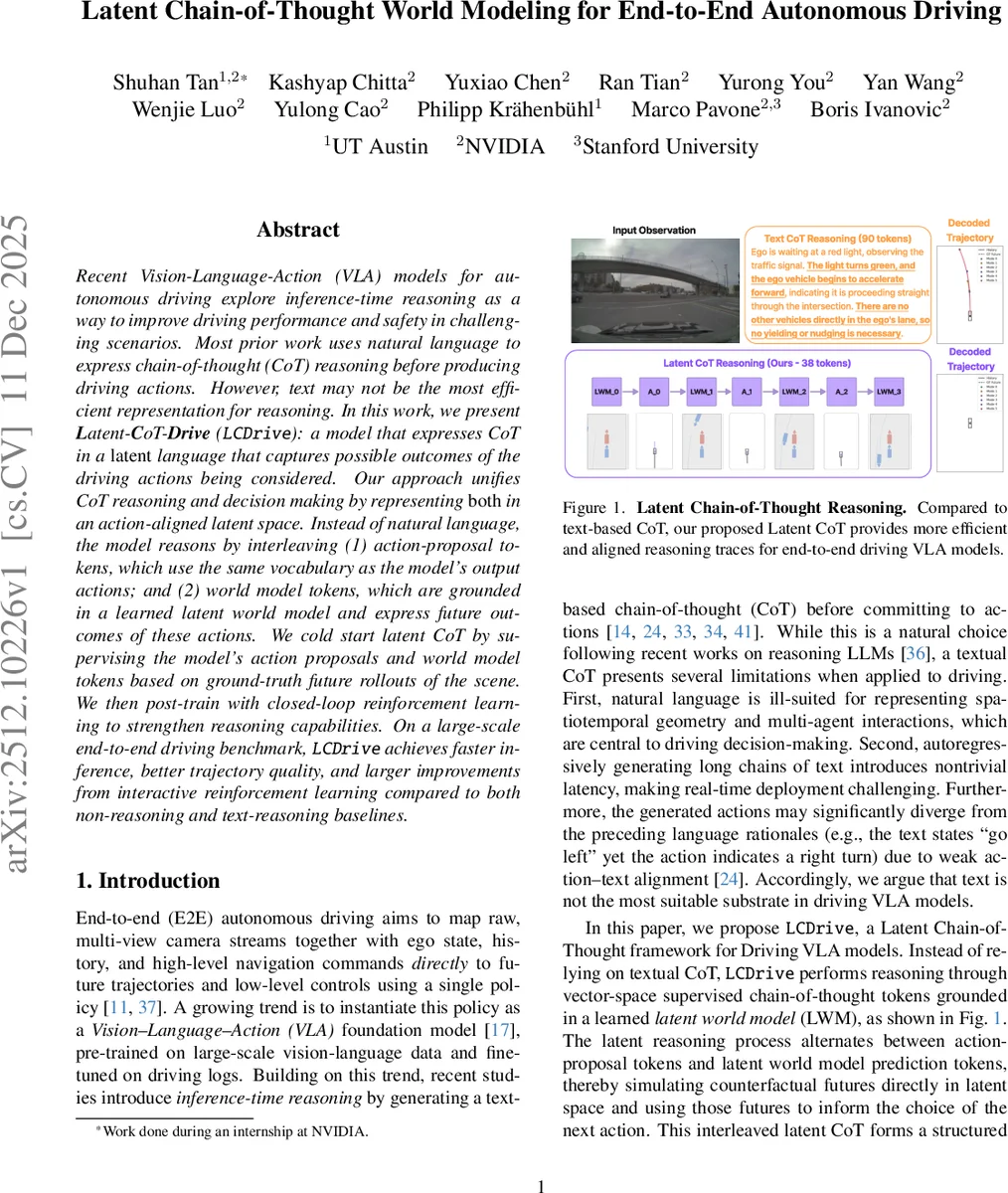

Recent Vision-Language-Action (VLA) models for autonomous driving explore inference-time reasoning as a way to improve driving performance and safety in challenging scenarios. Most prior work uses natural language to express chain-of-thought (CoT) reasoning before producing driving actions. However, text may not be the most efficient representation for reasoning. In this work, we present Latent-CoT-Drive (LCDrive): a model that expresses CoT in a latent language that captures possible outcomes of the driving actions being considered. Our approach unifies CoT reasoning and decision making by representing both in an action-aligned latent space. Instead of natural language, the model reasons by interleaving (1) action-proposal tokens, which use the same vocabulary as the model’s output actions; and (2) world model tokens, which are grounded in a learned latent world model and express future outcomes of these actions. We cold start latent CoT by supervising the model’s action proposals and world model tokens based on ground-truth future rollouts of the scene. We then post-train with closed-loop reinforcement learning to strengthen reasoning capabilities. On a large-scale end-to-end driving benchmark, LCDrive achieves faster inference, better trajectory quality, and larger improvements from interactive reinforcement learning compared to both non-reasoning and text-reasoning baselines.

💡 Research Summary

Recent Vision‑Language‑Action (VLA) models for autonomous driving have begun to incorporate inference‑time reasoning, typically by generating a textual chain‑of‑thought (CoT) before committing to control actions. While this leverages the reasoning abilities of large language models, text is a poor substrate for driving because it cannot efficiently encode spatio‑temporal geometry, multi‑agent interactions, and because long text sequences increase latency and may become misaligned with the final actions.

The paper introduces LCDrive (Latent‑CoT‑Drive), a framework that replaces textual CoT with a latent‑language reasoning process tightly coupled to the action space. The model interleaves two kinds of tokens, both drawn from the same vocabulary used for the final trajectory output:

-

Action‑proposal tokens (A) – these are blocks of 10 fine‑grained action tokens (one per 0.1 s) that together form a 1‑second proposal. The vocabulary is identical to that used for the 64‑step trajectory (Δx, Δy, Δψ) quantized into a 1024‑codebook.

-

Latent world‑model tokens (LWM) – a compact ego‑centric latent representation of the surrounding scene. Each LWM token summarizes a 1‑second window at 10 Hz, encoding the ego vehicle and the K nearest agents as a fixed‑size set of vectors. A lightweight transformer or MLP predicts the next LWM conditioned on the current proposal.

A reasoning trace for branch i is defined as

R⁽ⁱ⁾ = {A⁽ⁱ⁾₀, LWM⁽ⁱ⁾₁, A⁽ⁱ⁾₁, LWM⁽ⁱ⁾₂, …, A⁽ⁱ⁾_{K‑1}, LWM⁽ⁱ⁾_K}.

The process starts from an initial latent state LWM₀ derived from sensor inputs (or online perception). The model can generate B parallel branches (default B = 2) sequentially, each branch conditioning on the previously generated branches to encourage diverse counter‑factual futures within a bounded token budget.

Training proceeds in three stages:

Stage 0 – Non‑reasoning pre‑training: A standard VLA is trained in a supervised fashion to predict trajectory tokens from raw images and ego state. Two copies are kept: one serves as the initialization for LCDrive, the other is frozen and used as a “teacher” to generate action proposals.

Stage 1 – Latent CoT cold‑start: Using the frozen teacher, B different trajectories are sampled for each training example, sliced into 1‑second action blocks ˜A⁽ⁱ⁾ₜ. For each block, the ground‑truth future rollout is re‑centered into the ego frame and encoded into a target latent world state ˜LWM⁽ⁱ⁾_{t+1}. The interleaved sequence (˜A, ˜LWM) forms the supervision for the latent CoT tokens. The loss combines cross‑entropy on action tokens, cross‑entropy on the final trajectory, and an L2 loss on the predicted LWM embeddings.

Stage 2 – Closed‑loop reinforcement learning: With the token budget (K, B) fixed, the model autonomously generates action‑proposal blocks and corresponding LWM predictions, forming the full reasoning context R_EASON = {LWM₀, R⁽¹⁾, …, R⁽ᴮ⁾}. The final trajectory is then produced conditioned on this context. A trajectory‑level reward (collision avoidance, lane‑keeping, comfort) is computed, and a PPO‑style policy gradient updates both the action‑proposal and LWM prediction heads. This stage encourages the model to produce reasoning that genuinely improves downstream control, rather than merely imitating the teacher.

Experiments are conducted on the large‑scale PhysicalAI‑AV dataset (≈ 1727 h of urban driving with dense multi‑agent interactions). LCDrive is compared against (a) a baseline non‑reasoning VLA, and (b) a textual CoT model (AR1). Results show:

- Inference latency: Latent CoT requires 3–4× fewer tokens than text, reducing latency to < 45 ms, suitable for real‑time deployment.

- Trajectory quality: Average Displacement Error (ADE) and Final Displacement Error (FDE) improve by 12–15 % relative to baselines; collision rate drops by > 20 %.

- Reinforcement learning efficiency: Under identical interactive RL budgets, LCDrive achieves roughly double the reward gain of the textual CoT, indicating that latent counter‑factual rollouts provide more useful learning signals.

Qualitative rollouts illustrate that LCDrive’s latent reasoning yields coherent multi‑step plans (e.g., “prepare to turn left → create gap with lead vehicle → execute turn”) encoded directly in the action‑world token sequence, whereas textual CoT often contains extraneous language or mismatched action descriptions.

The paper’s contributions are threefold: (1) a novel latent‑language CoT representation aligned with the action space, (2) a training pipeline that combines supervised cold‑start with RL‑fine‑tuning to activate latent reasoning, and (3) empirical evidence that this approach delivers faster inference, higher‑fidelity trajectories, and stronger RL improvements on a demanding autonomous‑driving benchmark.

Limitations include the fixed‑size ego‑centric LWM (currently only K nearest agents) and the linear latent encoding that may not capture complex vehicle dynamics such as tire slip or aggressive maneuvers. Future work is suggested on dynamic agent set handling, physics‑aware latent models, multimodal sensor fusion (LiDAR, radar), and large‑scale online RL for safety‑critical validation.

In summary, LCDrive demonstrates that reasoning need not be expressed in natural language; a compact, action‑aligned latent chain‑of‑thought can provide the necessary counter‑factual foresight while meeting the stringent latency and reliability requirements of real‑world autonomous driving.

Comments & Academic Discussion

Loading comments...

Leave a Comment