Active Optics for Hyperspectral Imaging of Reflective Agricultural Leaf Sensors

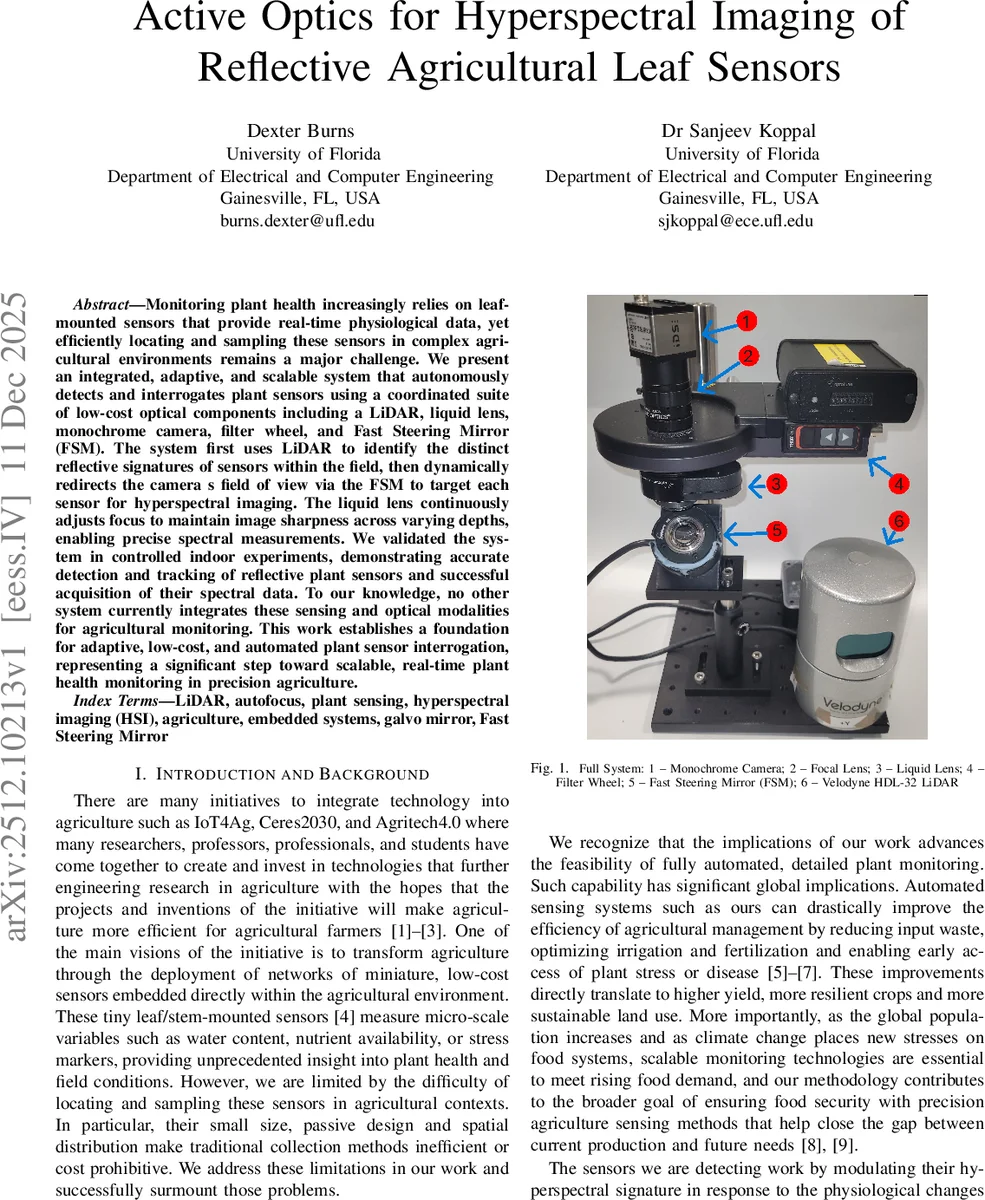

Monitoring plant health increasingly relies on leaf-mounted sensors that provide real-time physiological data, yet efficiently locating and sampling these sensors in complex agricultural environments remains a major challenge. We present an integrated, adaptive, and scalable system that autonomously detects and interrogates plant sensors using a coordinated suite of low-cost optical components including a LiDAR, liquid lens, monochrome camera, filter wheel, and Fast Steering Mirror (FSM). The system first uses LiDAR to identify the distinct reflective signatures of sensors within the field, then dynamically redirects the camera's field of view via the FSM to target each sensor for hyperspectral imaging. The liquid lens continuously adjusts focus to maintain image sharpness across varying depths, enabling precise spectral measurements. We validated the system in controlled indoor experiments, demonstrating accurate detection and tracking of reflective plant sensors and successful acquisition of their spectral data. To our knowledge, no other system currently integrates these sensing and optical modalities for agricultural monitoring. This work establishes a foundation for adaptive, low-cost, and automated plant sensor interrogation, representing a significant step toward scalable, real-time plant health monitoring in precision agriculture.

💡 Research Summary

This paper presents a novel, integrated optical system designed to autonomously detect and interrogate passive, reflective leaf-mounted sensors for precision agriculture. The core challenge addressed is the inefficient and costly process of manually locating and sampling numerous small, distributed sensors in a field. The proposed system offers an adaptive and scalable alternative by combining a suite of low-cost, coordinated optical components.

The system operates through a sequential pipeline. First, a Velodyne HDL-32 LiDAR scans the environment, generating a 3D point cloud. The system filters this point cloud for points with a specific intensity (reflectivity) value corresponding to the retro-reflective markers attached to the plant sensors. To isolate the sensor from other reflective surfaces and noise, the DBSCAN clustering algorithm is employed, providing the precise 3D spatial coordinates of each sensor cluster.
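The detection step described above can be illustrated with a minimal sketch. It is not the authors' implementation: the intensity threshold, the DBSCAN parameters (`eps`, `min_pts`), the function names, and the synthetic point cloud are all assumptions chosen for illustration, and the DBSCAN here is a small pure-Python version rather than a library routine.

```python
import math

def filter_by_intensity(points, min_intensity):
    """Keep the (x, y, z) of returns whose intensity meets the threshold."""
    return [(x, y, z) for (x, y, z, i) in points if i >= min_intensity]

def dbscan(points, eps, min_pts):
    """Minimal DBSCAN. Returns one label per point; -1 marks noise."""
    labels = [None] * len(points)  # None means "not visited yet"

    def neighbors(idx):
        # Brute-force radius query (includes the point itself).
        return [j for j, q in enumerate(points)
                if math.dist(points[idx], q) <= eps]

    cluster = -1
    for i in range(len(points)):
        if labels[i] is not None:
            continue
        seeds = neighbors(i)
        if len(seeds) < min_pts:
            labels[i] = -1  # provisional noise; may become a border point
            continue
        cluster += 1
        labels[i] = cluster
        queue = [j for j in seeds if j != i]
        while queue:
            j = queue.pop()
            if labels[j] == -1:
                labels[j] = cluster  # noise reachable from a core point
            if labels[j] is not None:
                continue
            labels[j] = cluster
            reach = neighbors(j)
            if len(reach) >= min_pts:  # j is a core point: keep expanding
                queue.extend(reach)
    return labels

def cluster_centroids(points, labels):
    """Average the points of each cluster to get one 3D position per sensor."""
    groups = {}
    for p, lab in zip(points, labels):
        if lab != -1:
            groups.setdefault(lab, []).append(p)
    return {lab: tuple(sum(axis) / len(pts) for axis in zip(*pts))
            for lab, pts in groups.items()}

# Synthetic returns (x, y, z in metres, intensity): two bright marker
# patches, one stray bright return, and two dim background returns.
cloud = [
    (1.00, 0.00, 0.50, 250), (1.02, 0.01, 0.50, 248),
    (0.98, -0.01, 0.52, 252), (1.00, 0.02, 0.48, 251),
    (1.50, 0.40, 0.30, 249), (1.52, 0.41, 0.31, 250),
    (1.49, 0.39, 0.29, 247),
    (1.80, -0.60, 0.10, 245),
    (1.20, 0.20, 0.40, 40), (0.90, -0.30, 0.20, 35),
]

bright = filter_by_intensity(cloud, min_intensity=200)  # assumed threshold
labels = dbscan(bright, eps=0.05, min_pts=3)            # assumed parameters
centroids = cluster_centroids(bright, labels)
print(labels)
print(centroids)
```

In this toy data the stray bright return has no neighbors within `eps`, so it is labeled as noise and never becomes a sensor candidate, which is the role the summary attributes to the clustering step.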

Once a sensor is located, its distance and position data are fed to two adaptive optics components via a ROS2-based control framework. A Fast Steering Mirror (FSM) from Optotune dynamically adjusts its pan and tilt angles to redirect the field of view of a monochrome camera (IDS Imaging U3-3270CP-M-GL) directly towards the sensor’s coordinates. Simultaneously, an Optotune EL-16-40-TC liquid lens continuously adjusts its focal power based on the sensor’s distance, ensuring the target remains in sharp focus despite depth variations—a critical feature for moving leaves in outdoor conditions.
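A rough sketch of how a sensor position might be turned into mirror and lens commands follows. It rests on several assumptions that the summary does not confirm: the target coordinates are already expressed in the mirror's own frame (x forward, y left, z up), the mirror's mechanical angle is taken as half the optical deflection (a mirror rotated by θ deflects a beam by 2θ, treated here as a per-axis approximation), and the lens is modeled as a thin lens that needs an extra 1/d diopters to focus at distance d. The function names are hypothetical, and a real Optotune device would additionally need its own calibration.

```python
import math

def line_of_sight(target):
    """Pan and tilt (degrees) and range (metres) of a target in the mirror frame."""
    x, y, z = target
    distance = math.sqrt(x * x + y * y + z * z)
    pan = math.degrees(math.atan2(y, x))
    tilt = math.degrees(math.atan2(z, math.hypot(x, y)))
    return pan, tilt, distance

def mirror_angles(pan_deg, tilt_deg):
    """Mechanical mirror angles, assuming optical deflection = 2 x mechanical tilt."""
    return pan_deg / 2.0, tilt_deg / 2.0

def focus_offset_diopters(distance_m):
    """Extra optical power to focus at distance_m (thin-lens assumption)."""
    if distance_m <= 0:
        raise ValueError("distance must be positive")
    return 1.0 / distance_m

# Example: a sensor 1 m ahead and 1 m to the left, at mirror height.
pan, tilt, dist = line_of_sight((1.0, 1.0, 0.0))
mech_pan, mech_tilt = mirror_angles(pan, tilt)
power = focus_offset_diopters(dist)
print(f"optical pan/tilt: {pan:.2f}, {tilt:.2f} deg")
print(f"mirror pan/tilt:  {mech_pan:.2f}, {mech_tilt:.2f} deg")
print(f"range {dist:.3f} m, focus offset {power:.3f} dpt")
```

In practice the LiDAR and the mirror sit at different positions, so an extrinsic transform between the two frames would be applied before this calculation.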

With the camera’s view aligned and focused, the system proceeds to hyperspectral interrogation. A Thorlabs FW102C filter wheel, positioned in front of the camera, cycles through six optical bandpass filters centered at 630, 640, 650, 660, 670, and 680 nm, each with a 10 nm FWHM. These wavelengths were selected because they cover the spectral region in which the target plant sensors respond most strongly. By capturing one image per filter band, the system acquires a discrete six-band spectral profile of the sensor. The intensity values across these bands encode the sensor’s state, which in turn reflects the physiological condition of the plant (e.g., water content, stress levels).
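The band-by-band acquisition can be sketched as follows, assuming one grayscale frame per filter and a known region of interest (ROI) around the sensor. The frames here are synthetic, the ROI and function names are hypothetical, and normalizing to the brightest band is an illustrative choice rather than the paper's stated processing.

```python
BANDS_NM = [630, 640, 650, 660, 670, 680]  # filter centre wavelengths from the text

def roi_mean(image, roi):
    """Mean pixel value inside roi = (top, left, bottom, right), end-exclusive."""
    top, left, bottom, right = roi
    pixels = [v for row in image[top:bottom] for v in row[left:right]]
    return sum(pixels) / len(pixels)

def spectral_profile(images_by_band, roi):
    """Per-band mean ROI intensity, scaled so the brightest band equals 1.0."""
    raw = {band: roi_mean(img, roi) for band, img in images_by_band.items()}
    peak = max(raw.values())
    if peak == 0:
        raise ValueError("ROI is dark in every band")
    return {band: value / peak for band, value in raw.items()}

def synthetic_frame(sensor_value, background=10, size=4):
    """4x4 test frame: uniform background with a 2x2 'sensor' patch in the centre."""
    frame = [[background] * size for _ in range(size)]
    for r in (1, 2):
        for c in (1, 2):
            frame[r][c] = sensor_value
    return frame

# Made-up sensor brightness per band, only to exercise the functions.
fake_response = {630: 40, 640: 60, 650: 80, 660: 100, 670: 70, 680: 50}
frames = {band: synthetic_frame(fake_response[band]) for band in BANDS_NM}

profile = spectral_profile(frames, roi=(1, 1, 3, 3))
peak_band = max(profile, key=profile.get)
print(profile)
print("brightest band:", peak_band, "nm")
```

Restricting the average to the ROI keeps background pixels out of the profile, which matters because the sensor occupies only a small part of each frame.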

The methodology was validated in controlled indoor experiments. Results demonstrated successful point cloud segmentation and sensor tracking within a range of 0.8 to 2 meters from the LiDAR, accurate redirection of the camera’s FOV using the FSM, effective maintenance of focus via the liquid lens, and clear spectral isolation using the filter wheel, as visualized by the enhanced brightness of red and adjacent colors.

The paper concludes by acknowledging current limitations, primarily the restricted detection range imposed by the Avalanche Photodiode (APD) technology in the chosen LiDAR and potential interference from other reflective materials in complex environments like vertical farms. It suggests future improvements, including the adoption of more sensitive SPAD-based LiDARs and refinement of the clustering algorithms. This work establishes a foundational proof-of-concept for an active optical sensing pipeline, representing a significant step towards fully automated, scalable, and real-time monitoring of plant health through distributed sensor networks.
